I tried it once; the problem turned out to be that ONNX had no support for Adaptive Average Pooling, even though that layer is so important.
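
For anyone hitting the same wall, here is a minimal sketch of why a fixed-size pooling layer can sometimes stand in for the adaptive one. It is plain Python on a 1-D list, only to show the window arithmetic; the function names are made up for illustration, and it assumes the input size is fixed and divisible by the output size. It is not necessarily what the poster tried.

```python
def adaptive_avg_pool_1d(xs, out_size):
    """Average-pool xs down to out_size values using adaptive windows.

    Window i spans floor(i*n/out) .. ceil((i+1)*n/out), the usual
    adaptive-pooling rule.
    """
    n = len(xs)
    pooled = []
    for i in range(out_size):
        start = (i * n) // out_size
        end = -(-((i + 1) * n) // out_size)  # integer ceiling division
        window = xs[start:end]
        pooled.append(sum(window) / len(window))
    return pooled


def fixed_avg_pool_1d(xs, kernel, stride):
    """Ordinary average pooling with a fixed kernel and stride, no padding."""
    return [sum(xs[s:s + kernel]) / kernel
            for s in range(0, len(xs) - kernel + 1, stride)]


def fixed_pool_params(in_size, out_size):
    """Kernel and stride that reproduce adaptive pooling (divisible case only)."""
    if in_size % out_size != 0:
        raise ValueError("in_size must be divisible by out_size")
    k = in_size // out_size
    return k, k


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
kernel, stride = fixed_pool_params(len(xs), 4)
print(adaptive_avg_pool_1d(xs, 4))
print(fixed_avg_pool_1d(xs, kernel, stride))
```

When the sizes do not divide evenly, the adaptive windows overlap and have varying lengths, so this substitution no longer applies.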

Hello Devon, I did the same, but I also used the bounding boxes during training to crop the images. Doing so gave a better accuracy of 93%. Have a look at https://github.com/iyersathya/course-v3
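
A rough sketch of the cropping idea, for readers who have not seen it. The `(left, top, width, height)` box format and the function name are assumptions for illustration, and the image is a plain list of pixel rows so the example runs without any imaging library; the linked repository may do this differently.

```python
def crop_to_bbox(image, bbox):
    """Crop a row-major image (list of rows) to a bounding box.

    bbox is (left, top, width, height) in pixels; this format is an
    assumption, so check what your dataset actually provides.
    """
    left, top, width, height = bbox
    rows, cols = len(image), len(image[0])
    # Clamp the box to the image so a sloppy annotation cannot index out of range.
    x0, y0 = max(0, left), max(0, top)
    x1, y1 = min(cols, left + width), min(rows, top + height)
    if x0 >= x1 or y0 >= y1:
        return image  # box lies outside the image: fall back to the full image
    return [row[x0:x1] for row in image[y0:y1]]


# 4x5 toy image whose pixel value encodes its (row, column) position.
image = [[r * 10 + c for c in range(5)] for r in range(4)]
print(crop_to_bbox(image, (1, 1, 2, 2)))
```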

Working on it

Hi, I tried building a “Land Use Classifier from Satellite Images”. It achieved an error rate of 0.030702. Below are some top losses.

https://gist.github.com/ksasi/6278dbf8f474c5535e870865c0058a48 is the link for the notebook.

I really like this! More so than any materials I’ve previously come across, this set of loss function animations does the best job of helping me understand and visualize the effects of learning rate size.

I created this Medium article about how to put your model into production. It is hosted on AWS. It’s just a basic tutorial, but I tried to make it as simple as possible so that it is easier to understand.

I’ve created an image classifier for the Caltech-256 data set. Here is the link to the Medium post.

I am getting about 86% accuracy. Is this reasonable to expect?

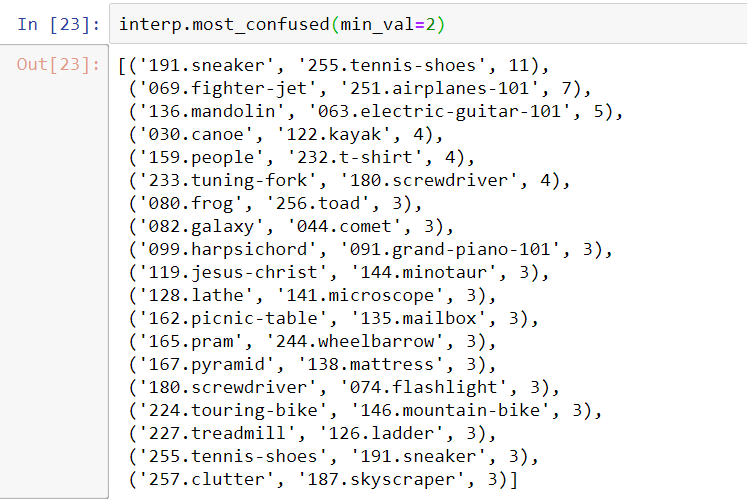

Most confused objects:

I wanted to share what we’ve been working on at team 26. We wanted to explore high-performance use cases, so we created a web app to do real-time facial expression recognition.

To maximize performance, on the client side we track the face with JavaScript and crop it out so that we only need to send the face across the network. This works well: an average request is only 300 bytes and completes a full round trip in under 300 ms.

Please check out this demo video from Chrome on a Mac.

Also, go ahead and give it a try. It requires WebRTC, but that is available on all modern browsers. Note that if you’re on iOS you need to use the Safari browser and not an embedded webview, since WebRTC does not work in webviews.

Full write up, source code, and model weights coming soon!

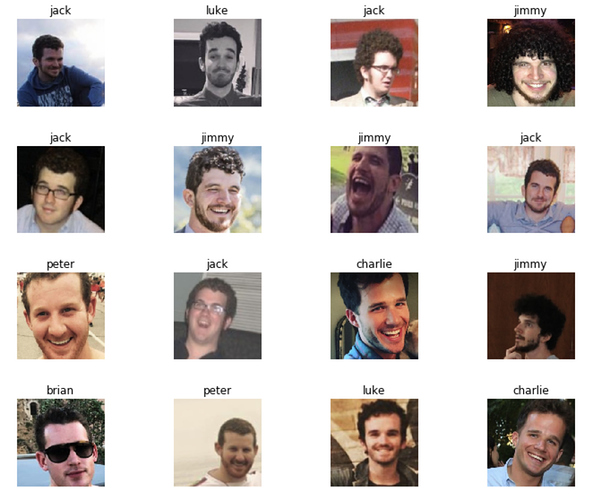

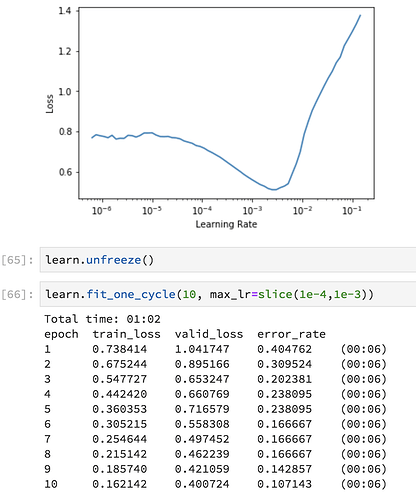

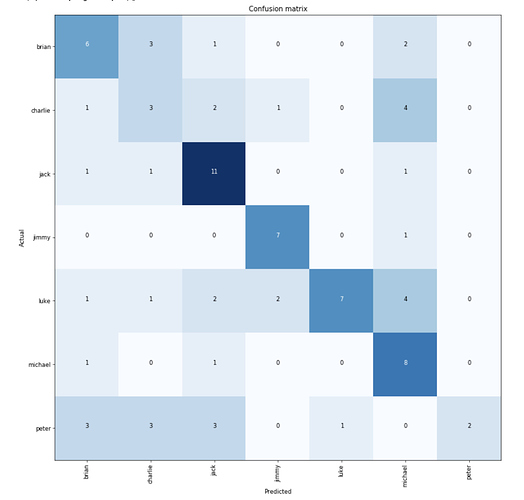

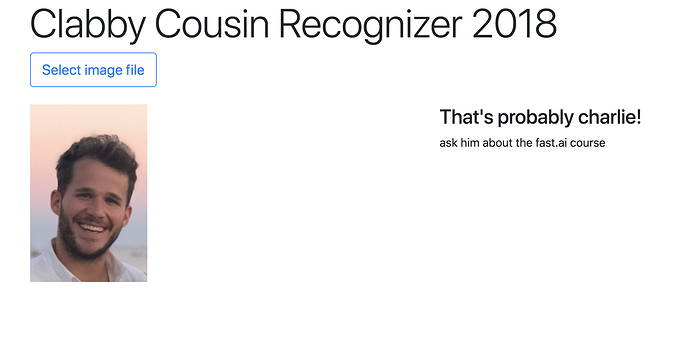

I’ve got a lot of cousins (36 first cousins), so I’ve been working on a Clabby cousin facial recognizer app in preparation for my fiancee’s visit to my aunt’s house for Thanksgiving.

I’ve labeled 7 of our male cousins, and was able to get it close to 90% accurate:

I’m serving the trained model on a FloydHub serving job using a simple Flask app, and you can test it out here:

Given my extensive unique domain knowledge, I also added little hints about each cousin when it makes a prediction. For example, if you take a photo of me, the app might tell you “Ask him about the fast.ai course” or “Don’t bring up his receding hairline”:

Working on a blog post, too, which I’ll share soon!
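
In case it helps anyone building something similar, the hint idea described above could be sketched roughly like this. The labels `cousin_a` and `cousin_b`, the `HINTS` table, and the function name are placeholders made up for illustration, not the app's actual code; `probs` stands in for whatever per-class probabilities your model returns.

```python
import random

# Hypothetical hint table: one list of hints per class label.
HINTS = {
    "cousin_a": [
        "Ask him about the fast.ai course",
        "Don't bring up his receding hairline",
    ],
    "cousin_b": [],  # no hints written for this cousin yet
}


def predict_with_hint(probs, hints=HINTS, rng=random):
    """Return (label, probability, hint) for the most likely class.

    probs maps each label to a probability. The hint is picked at random
    from that label's list, or None if no hints are available.
    """
    label = max(probs, key=probs.get)
    options = hints.get(label)
    hint = rng.choice(options) if options else None
    return label, probs[label], hint


print(predict_with_hint({"cousin_a": 0.9, "cousin_b": 0.1}))
```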

Thank you to everyone who tried my hummingbird classifier, and especially those who gave me feedback. Thanks to that, I have now been able to get it to recognise 15 of the 17 species of hummingbirds documented as seen in Trinidad and Tobago, distinguishing between male and female in some cases, while maintaining the 75% accuracy based on experimental results. Hopefully now I can get some time to make it look a little prettier so the results of its predictions aren’t so plain Jane. https://hummingbirds.azurewebsites.net

Yes. We have tested two approaches in our project:

- models offered by torchvision (pure PyTorch)

- models used for Computer Vision in fastai (custom model from torchvision)

I think that as long as the PyTorch 1.0 preview tag is still intact, the whole process is going to be very bumpy. I won’t stop anyone from trying, though. Whether it is worth the time or not, I’ll let you decide.

Are you using fastai with OpenCV?

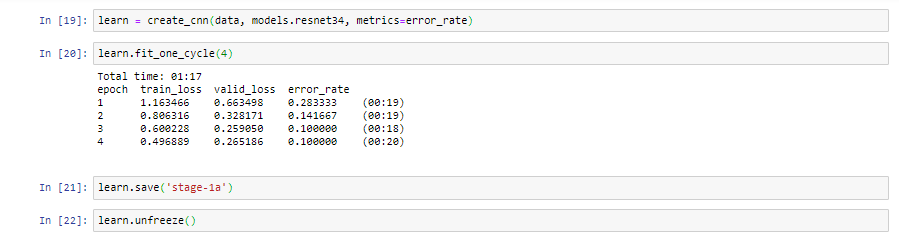

So … I tried to address a socially meaningful issue: being able to accurately tell turtles apart from tortoises. Something that has kept me awake many a night.

I acquired some 200 images of each from Google Images. That was a bit of a challenge, mostly from being JavaScript impaired. Moving the images to the Jupyter notebook was a little hit and miss as well. Eventually I had to hunt down the folders and place them in the correct directory. I probably did not specify the path correctly.

But, the results were pretty darn stellar (I think??)

Total time: 00:33

| epoch | train_loss | valid_loss | error_rate | time |
|---|---|---|---|---|
| 1 | 0.873743 | 0.381626 | 0.142857 | 00:12 |
| 2 | 0.585818 | 0.311341 | 0.077922 | 00:07 |
| 3 | 0.455686 | 0.207306 | 0.077922 | 00:06 |
| 4 | 0.374820 | 0.175838 | 0.051948 | 00:07 |
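
To put those error rates in perspective, here is a small sketch that turns them into accuracy and an estimated count of misclassified images. The validation set size is not stated in the post; the helper below (a made-up name) only searches for the smallest size whose fractions match the reported rates, so treat the result as an inference rather than a fact about the run.

```python
def smallest_validation_size(error_rates, max_size=10_000, decimals=6):
    """Smallest n such that every error rate equals k/n to `decimals` places."""
    tolerance = 0.5 * 10 ** -decimals
    for n in range(1, max_size + 1):
        if all(abs(round(r * n) / n - r) < tolerance for r in error_rates):
            return n
    return None


# Error rates per epoch, copied from the training output above.
error_rates = [0.142857, 0.077922, 0.077922, 0.051948]

n = smallest_validation_size(error_rates)
for epoch, rate in enumerate(error_rates, start=1):
    wrong = round(rate * n)
    print(f"epoch {epoch}: accuracy {1 - rate:.1%}, about {wrong} of {n} images wrong")
```

Under that assumption the final epoch works out to roughly 94.8% accuracy, with only a handful of validation images misclassified, so a change of one image moves the error rate by more than a percentage point.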

Then I moved the code to production, and voila!!

That is indeed a turtle (hmmm … wonder if the image comes through)

Anyway, the next step is the online applet. Which is proving to be a challenge.

But, this has gone well so far …

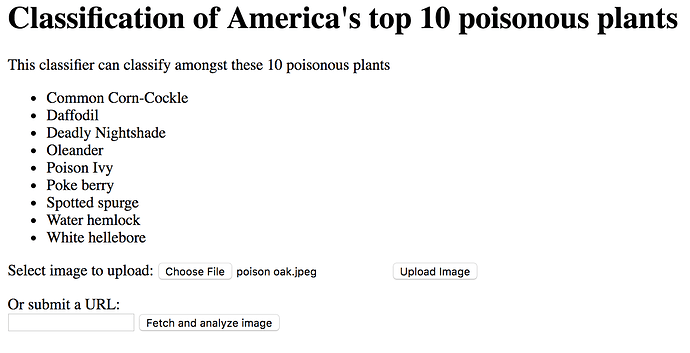

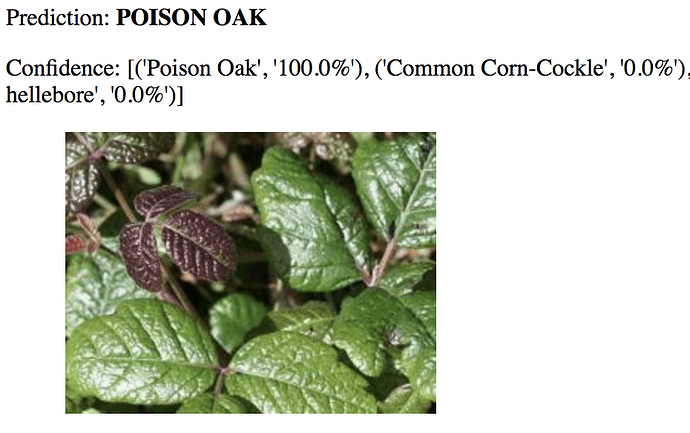

This week I used ResNet34 and some basic functions to automate the download of images by passing a list of keywords and the number of images requested. Using this approach, I scraped a website to get the names of the top ten poisonous plants in the US and passed them to my downloader, which downloaded 100 images for each. My final accuracy was ~83%. I used my fellow Trinidadian @nissan's Docker and Azure tutorial to refresh on Docker, @nikhil_no_1's wonderful Heroku tutorials to host my web app on Heroku, and of course @simonw. Thank you @nissan for helping me out and answering all of my questions!
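
For anyone who wants to try the same keyword-driven approach, a downloader along these lines might look like the sketch below. This is not the code from the repository: `search` is a hypothetical callable you would supply (it takes a keyword and a count and returns image URLs), and `download_classes` and `fetch_url` are names made up for this example.

```python
from pathlib import Path
import urllib.request


def fetch_url(url, dest):
    """Download one URL to dest using plain urllib (no retries)."""
    urllib.request.urlretrieve(url, str(dest))


def download_classes(keywords, n_images, search, root, fetch=fetch_url):
    """Download up to n_images per keyword into one folder per class.

    search(keyword, n) is a hypothetical callable returning image URLs;
    plug in whatever image search you have access to.
    Returns a dict mapping each keyword to the list of saved paths.
    """
    saved = {}
    for keyword in keywords:
        folder = Path(root) / keyword.lower().replace(" ", "_")
        folder.mkdir(parents=True, exist_ok=True)
        paths = []
        for i, url in enumerate(list(search(keyword, n_images))[:n_images]):
            dest = folder / f"{i:04d}.jpg"
            try:
                fetch(url, dest)
            except OSError:
                continue  # skip broken links instead of aborting the whole run
            paths.append(dest)
        saved[keyword] = paths
    return saved
```

Keeping one folder per keyword means the folder names can double as class labels when the images are loaded for training.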

Link to app: http://poisonousplantsus.herokuapp.com/

Github: https://github.com/sparalic/Poisonous-Plants-Image-Classifier/tree/master

Not-so-pretty UI:

Example prediction:

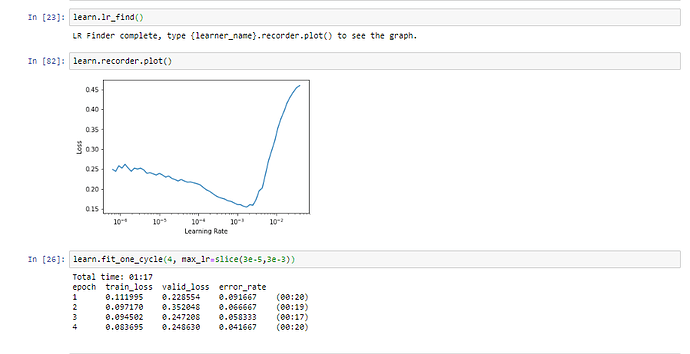

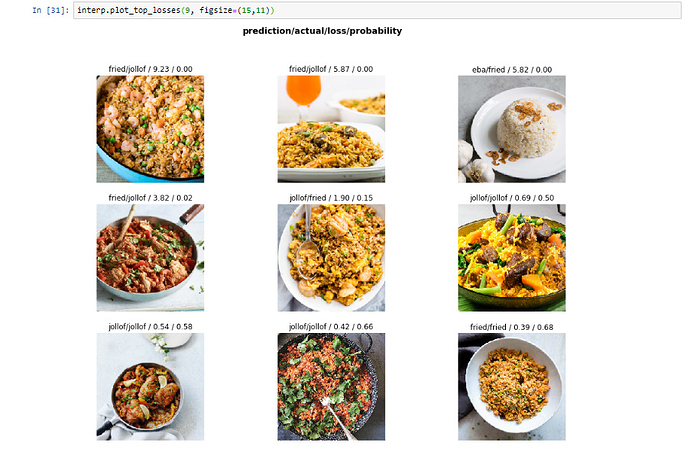

I created an image classifier for local Nigerian cuisine (Jollof Rice, Fried Rice and Eba) using the methodology from Jeremy’s bear-type classifier.

My images were sourced from Google Images, and the results below are before any image cleanup.

Using a resnet34, I got 90% accuracy without optimizing the hyperparameters.

After optimizing the hyperparameters, the accuracy improved to 96%.

Looking at images with the top losses

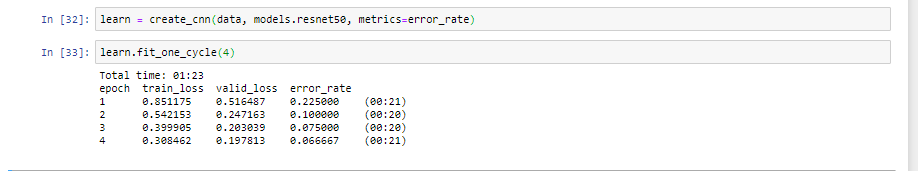

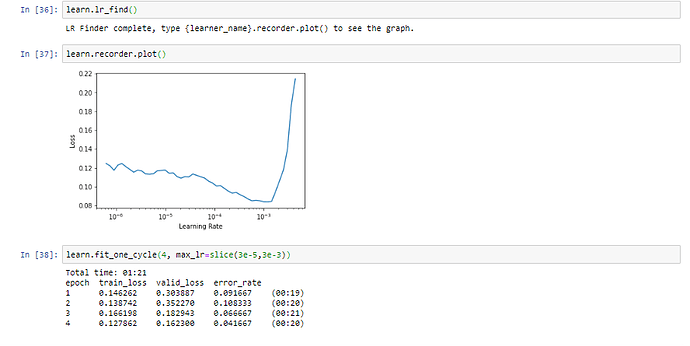

I also tried resnet50 and got 93% accuracy without optimizing the hyperparameters.

After optimizing the hyperparameters, there wasn’t any improvement in accuracy with resnet50.

I made an album art genre classifier. It takes in an album cover and predicts the genre. I generated the dataset by downloading images from Spotify’s Web API.

The model currently supports 2 output classes. I’m working on adding more genres, and improving accuracy. Thanks to @simonw for the deployment approach. Source here.

Running here. Give it a spin.

Fantastic! Looking forward to it.

Oh those all look quite yummy! Which one is best?

Thanks. I will share too.

I love Jollof Rice because it is spicy, though I think you would prefer Fried Rice.