Session conversion prediction based on clickstream data

Problem statement: Predict the probability of conversion in a user’s last session (site visit), given the user’s clickstream data.

Business use cases: Large online businesses (e-commerce, digital media, edtech) that sell a product have a notion of conversion (purchase). Product and marketing teams want to know how likely a user is to make a purchase, based on the user’s recent activity and past behavior. This helps them target users in promotional or retargeting email and ad campaigns, and discover the key signals that contribute to conversions.

Model definition: p(conversion on last session | events up to last X days, user)

Traditional approach:

- Create RFM (Recency, Frequency, Monetary value) features from the clickstream event time series. An excellent read on this topic: https://www.kdd.org/kdd2016/papers/files/adf0160-liuA.pdf

- Create a structured dataset with a target label (whether the user converted on the last visit) and predictors (features)

- Train a GBM, logistic regression, or SVM on these features
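As a rough illustration of the first step above, RFM features can be derived from raw events with a single pass over the data. This is only a sketch: the `(user_id, timestamp, event_name, amount)` tuple layout, the function name `rfm_features`, and the sample events are assumptions made for the example, not the schema used in this project.

```python
from collections import defaultdict
from datetime import datetime


def rfm_features(events, now):
    """Compute recency (days), frequency (event count) and monetary value per user.

    `events` is an iterable of (user_id, timestamp, event_name, amount) tuples.
    This layout is hypothetical and only serves the example.
    """
    last_seen = {}
    frequency = defaultdict(int)
    monetary = defaultdict(float)
    for user_id, ts, event_name, amount in events:
        frequency[user_id] += 1
        if user_id not in last_seen or ts > last_seen[user_id]:
            last_seen[user_id] = ts
        if event_name == "purchase":
            monetary[user_id] += amount
    return {
        user_id: {
            "recency_days": (now - last_seen[user_id]).total_seconds() / 86400,
            "frequency": frequency[user_id],
            "monetary": monetary[user_id],
        }
        for user_id in last_seen
    }


# Made-up sample events for two users.
events = [
    ("u1", datetime(2019, 1, 1, 10, 0), "product_view", 0.0),
    ("u1", datetime(2019, 1, 3, 12, 0), "purchase", 40.0),
    ("u2", datetime(2019, 1, 2, 9, 0), "pageload", 0.0),
]
features = rfm_features(events, now=datetime(2019, 1, 4, 12, 0))
```

Each entry of `features` is one row of the structured dataset; the target label would be joined on `user_id` before training.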

Challenges with the traditional approach:

- Clickstream event data is messy; each event contains several dimensions (time, metadata, taxonomy)

- It is extremely hard and time-consuming to manually create features from this large feature space

- Feature engineering requires domain understanding

- Capturing hidden patterns in browsing behavior is hard

- GBM works reasonably well when good features are available, but not otherwise

Approach with CNN:

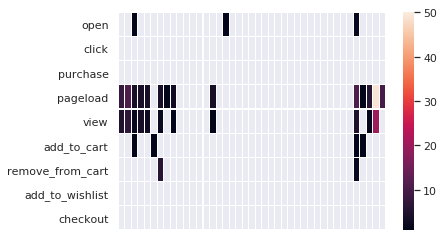

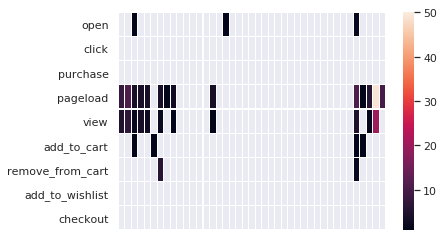

- Represent the clickstream as a heatmap matrix

- The heatmap has time intervals on the x-axis and events on the y-axis; each cell value is the number of occurrences of that event in that time interval

- Normalize the heatmap intensity, i.e., count values go from 1 to 50. Mask all cells with no activity
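The three steps above can be sketched in plain Python. The input layout (pairs of event name and seconds before the last session), the helper names `build_heatmap` and `clip_and_mask`, and the choice to read "1 to 50" as clipping counts at 50 are all assumptions for illustration; the actual images may have been produced differently.

```python
import bisect


def build_heatmap(events, event_names, bin_edges):
    """Count events per (event type, time bin).

    `events` is a list of (event_name, seconds_before_last_session) pairs and
    `bin_edges` is an increasing list of offsets in seconds (both hypothetical).
    Row i is event_names[i]; column j covers [bin_edges[j], bin_edges[j + 1]).
    """
    row_of = {name: i for i, name in enumerate(event_names)}
    n_bins = len(bin_edges) - 1
    counts = [[0] * n_bins for _ in event_names]
    for name, offset in events:
        if name not in row_of:
            continue  # ignore event types that are not tracked
        j = bisect.bisect_right(bin_edges, offset) - 1
        if 0 <= j < n_bins:  # ignore events outside the lookback window
            counts[row_of[name]][j] += 1
    return counts


def clip_and_mask(counts, cap=50):
    """Clip counts at `cap` and replace empty cells with None (masked)."""
    return [[min(c, cap) if c > 0 else None for c in row] for row in counts]


event_names = ["product_view", "add_to_cart"]
bin_edges = [0, 300, 600, 900]  # three 5-minute bins
events = [
    ("product_view", 10),
    ("product_view", 20),
    ("add_to_cart", 400),
    ("product_view", 899),
    ("checkout", 50),      # not in event_names, skipped
    ("add_to_cart", 900),  # on the outer edge, outside the window
]
counts = build_heatmap(events, event_names, bin_edges)
heatmap = clip_and_mask(counts)
```

The masked matrix can then be rendered with any plotting library that supports a fixed color range and a mask for empty cells.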

Benefits

- Can use simple aggregation such as sum as the intensity value

- No special treatment for an event. Could easily normalize intensity for each event based on event count distribution across users.

- (Hyperparameter) Use variable time intervals - smaller for recent history, larger for old history

- Automated feature engineering - learns hidden browsing patterns

- Transfer learning: use this approach to learn embeddings of user clickstreams, then use them as features in other models or for user segmentation (clustering)

- Less overfitting compared to GBM
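The per-event normalization mentioned in the list above could look roughly like this: take a high quantile of the non-zero cell counts for each event across all users, then divide by it and cap at 1. The function names, the crude nearest-rank quantile, and the 0.95 default are assumptions for this sketch rather than what was used for the experiments here.

```python
def per_event_scale(heatmaps, quantile=0.95):
    """Return one scale per event row: a high quantile of non-zero counts across users.

    `heatmaps` is a list of count matrices (one per user) with identical shape.
    Uses a simple nearest-rank style quantile, which is enough for a sketch.
    """
    n_rows = len(heatmaps[0])
    scales = []
    for i in range(n_rows):
        values = sorted(c for hm in heatmaps for c in hm[i] if c > 0)
        if not values:
            scales.append(1.0)  # event never observed; any scale works
            continue
        k = min(len(values) - 1, int(quantile * len(values)))
        scales.append(float(values[k]))
    return scales


def scale_heatmap(heatmap, scales):
    """Divide each row by its event scale and cap the intensity at 1.0."""
    return [[min(c / s, 1.0) for c in row] for row, s in zip(heatmap, scales)]


# Made-up counts: row 0 is a frequent event (pageload), row 1 a rare one (add_to_cart).
user_a = [[10, 20, 0], [1, 0, 0]]
user_b = [[40, 0, 30], [0, 2, 1]]
scales = per_event_scale([user_a, user_b])
scaled_a = scale_heatmap(user_a, scales)
```

With a per-event scale, a rare event such as add_to_cart is not visually drowned out by high-volume events such as pageload.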

Limitations:

- Each cell holds a single value, so using several different aggregates as cell values is not straightforward

- Not practical to use this approach alone for prediction. User attributes, which are numerical/categorical, often need to be included in the model as well

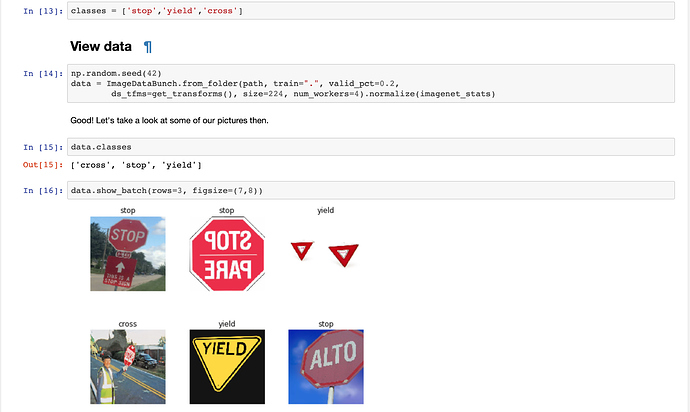

Exercise

- Training row: a user’s activity over the 7 days leading up to their last session

- Target label: purchase or no purchase in the last session

- Time intervals: 5 minutes up to 1 hour, 1 hour up to 1 day, 1 day up to X days

- Dataset: tried this for an e-commerce client; however, the approach is vertical-agnostic

- Dataset size: 15k images, 4% conversion rate. The next step is to test on a bigger dataset

- Events: [pageload, product_view, add_to_cart, wishlist, checkout, purchase, email_click, email_open]
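The variable time intervals listed above translate into a set of bin edges measured backwards from the last session. The following is a minimal sketch, assuming X = 7 days to match the training-row definition; the function name `variable_bin_edges` is made up for the example.

```python
def variable_bin_edges(lookback_days=7):
    """Bin edges in seconds before the last session.

    5-minute bins for the first hour, hourly bins up to one day,
    then daily bins up to `lookback_days`.
    """
    minute, hour, day = 60, 3600, 86400
    edges = list(range(0, hour, 5 * minute))                 # 0, 300, ..., 3300
    edges += list(range(hour, day, hour))                    # 3600, ..., 82800
    edges += list(range(day, lookback_days * day + 1, day))  # 86400, ..., 604800
    return edges


edges = variable_bin_edges(7)
n_bins = len(edges) - 1  # 12 five-minute + 23 hourly + 6 daily bins
```

For a 7-day lookback this gives 41 columns per image, with the finest resolution reserved for the most recent activity.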

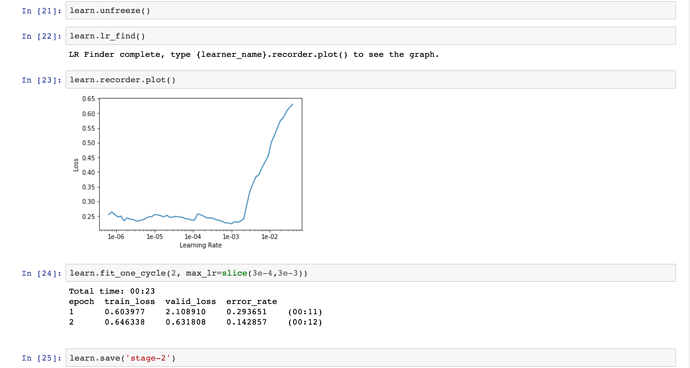

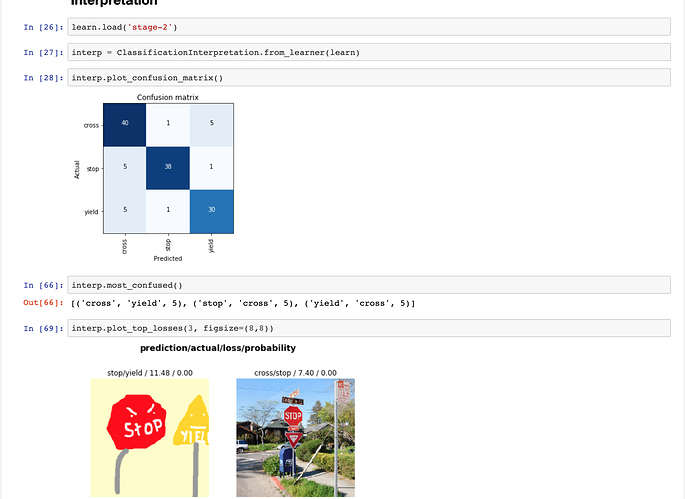

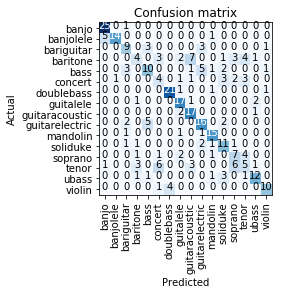

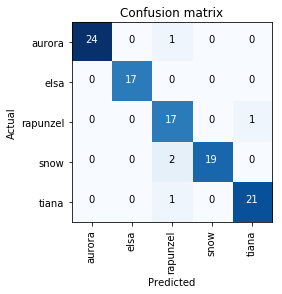

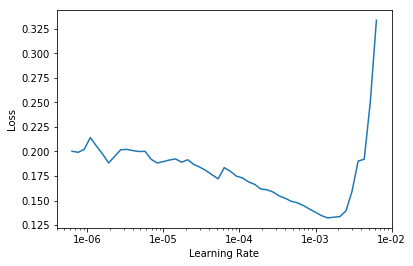

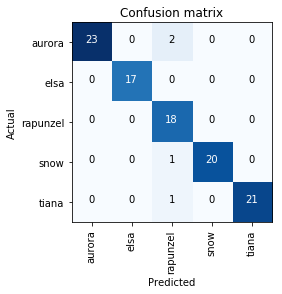

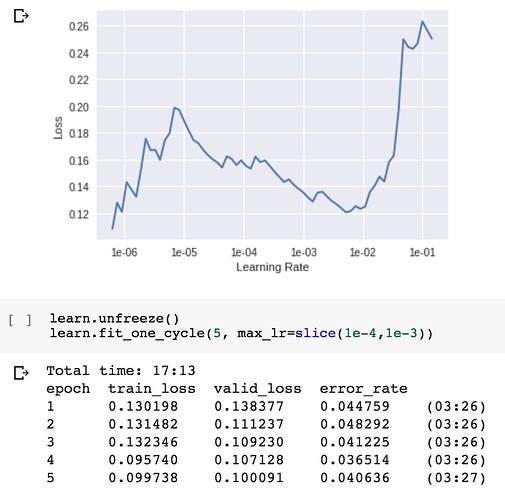

Performance:

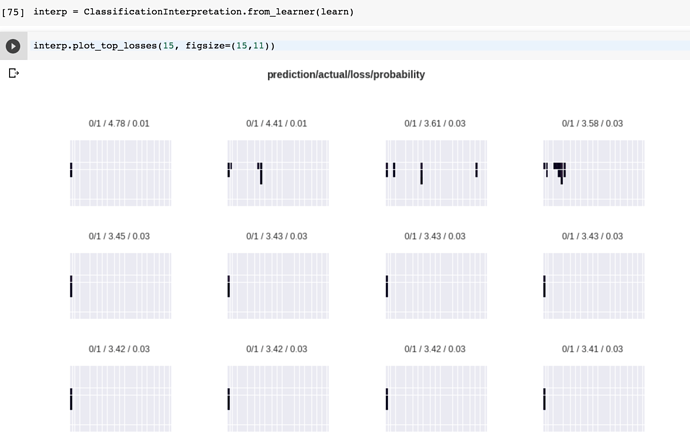

Update 2: Tried cropping and padding the image with the crop centered at (0, 0.5) and using a 244x244 image size. This ensures that the critical part of the image is not cropped. The accuracy is still the same. At this point, it looks like there is no issue with the learner, and the dataset is the more likely culprit. Most top-loss samples are new users (with no prior history) who converted with little activity. A better dataset and some baseline models to compare performance against would be the next step.

TODO: Benchmark against XGBoost/GBM.

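```python
# fastai v1 API; path_img, fnames, pat and bs are defined elsewhere
from fastai.vision import *

crop_pad_tfms = [crop_pad(size=244, padding_mode='reflection', row_pct=0.5, col_pct=0.0)]
tfms = (crop_pad_tfms, crop_pad_tfms)  # same transforms for training and validation
data = ImageDataBunch.from_name_re(path_img, fnames, pat, bs=bs, ds_tfms=tfms, size=244)
```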

Update 1: The model isn’t performing well on the positive (True) labels. The 96% accuracy is mainly attributable to true negatives (TNs). I’m not resizing the images to size=224, as recommended for ResNet, because this cuts off the image edges, and the edges contain the most meaningful information (recent activity). Could this be contributing to the poor performance? I don’t fully understand the implications of the recommended size setting, but I could recreate the images so that meaningful information is not lost when resizing to 224. This would verify the assertion. ds_tfms aren’t applied.
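To see why 96% accuracy says little here, consider a model that always predicts "no purchase" on a dataset of 15k samples with a 4% conversion rate. The sketch below computes its metrics; the function name `classification_metrics` is made up, and the confusion-matrix counts are those of this hypothetical all-negative baseline, not the measured results of the CNN.

```python
def classification_metrics(tp, fp, tn, fn):
    """Accuracy, precision and recall from confusion-matrix counts."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


n_samples = 15000
n_positive = n_samples * 4 // 100  # 4% conversion rate -> 600 converters

# Hypothetical baseline: predict "no purchase" for every sample.
baseline = classification_metrics(
    tp=0, fp=0, tn=n_samples - n_positive, fn=n_positive
)
```

The baseline reaches 0.96 accuracy with zero recall, so precision and recall on the positive class are more informative metrics for this dataset than accuracy.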

TODO: Extend the lookback window (currently 7 days) to capture more past browsing behavior