Pretty cool.

How did you get the data?

Did you do it manually?

88% might be really good, though. Oftentimes, or maybe most of the time, the external cues for the model variations amount to little more than trunk badges or chrome trim vs body-color trim. I bet the accuracy would improve materially if your classes were just a bit broader, like Mercedes C-class, 4th gen, etc. Of course, updating the labels would be a fair bit of manual work, but you could use the new image relabeler that just came out to replace / supplement the FileDeleter.
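A rough sketch of what collapsing the labels could look like. The label strings and the "make model year" naming scheme below are made up for illustration, since I don't know what the actual class names are:

```python
import re

# Hypothetical fine-grained labels; the real class names are not given in this thread.
fine_labels = [
    "Mercedes C300 2016",
    "Mercedes C350 2016",
    "Mercedes C63 AMG 2016",
    "BMW 328i 2014",
    "BMW 335i 2014",
]

def broaden(label: str) -> str:
    """Collapse an assumed 'make model year' label to a broader 'make series' label."""
    make, model, *_ = label.split()
    # Keep only the leading letters of the model code, or its first digit.
    series = re.match(r"[A-Za-z]+|\d", model).group(0)
    return f"{make} {series}"

# Map every fine label to its broader class, then list the distinct broad classes.
broad_of = {label: broaden(label) for label in fine_labels}
broad_classes = sorted(set(broad_of.values()))
print(broad_classes)  # ['BMW 3', 'Mercedes C']
```

Five trim-level classes become two series-level classes here. A real dataset would probably need a hand-written lookup table rather than a regex.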

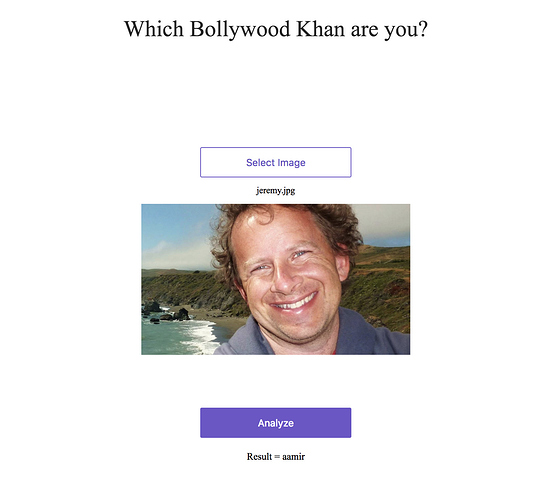

I tried to create a model to identify different people. I thought it would be a challenge to train it on actors who look different from movie to movie because of make-up and transformations. So I trained it to identify three actors who rule Bollywood: Shahrukh Khan, Salman Khan and Aamir Khan. I was able to achieve 95% accuracy using resnet50.

The gist of my notebook can be found here. A few learnings:

- More data + Improved data quality helped improve accuracy. Thanks to @cwerner for fastclass.

- FastAI defaults are amazing. The same code works most of the time.

- Overfitting is when the error rate starts to get worse. Knowing this let me train my model for longer.
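To make the overfitting point concrete, here is a minimal sketch of spotting where the validation error rate stops improving. The error rates are made-up numbers for illustration, not results from my notebook, and `best_epoch` is just a helper name I picked:

```python
def best_epoch(error_rates):
    """Return the 1-based epoch with the lowest validation error rate."""
    best_index = min(range(len(error_rates)), key=error_rates.__getitem__)
    return best_index + 1

# Illustrative values only: the error improves for three epochs, then gets worse.
errors = [0.12, 0.08, 0.05, 0.06, 0.07]
print(best_epoch(errors))  # 3
```

As long as the best epoch is the last one, there may still be room to keep training. Once later epochs are consistently worse, the model is probably starting to overfit.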

Hopefully, I’ll be able to improve it further with future learnings.

The model will always predict something, so I thought it would be fun to find out which Bollywood Khan you look like. You can check that out with this app here.

In this picture Jeremy looks like Aamir Khan if you were wondering:

I’ll admit I’ve spent most of the week googling and searching the forum for answers to errors I’ve gotten, dealing with Jupyter notebooks not opening in my browser, trying to set up a new Paperspace machine, etc. There have been some quite discouraging moments but also a lot of learning moments - and I think that’s valuable even if my models aren’t the coolest!

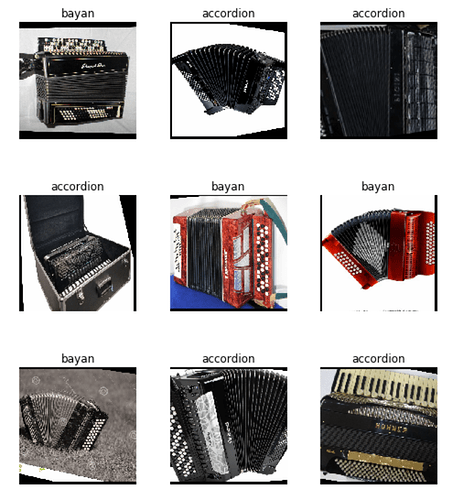

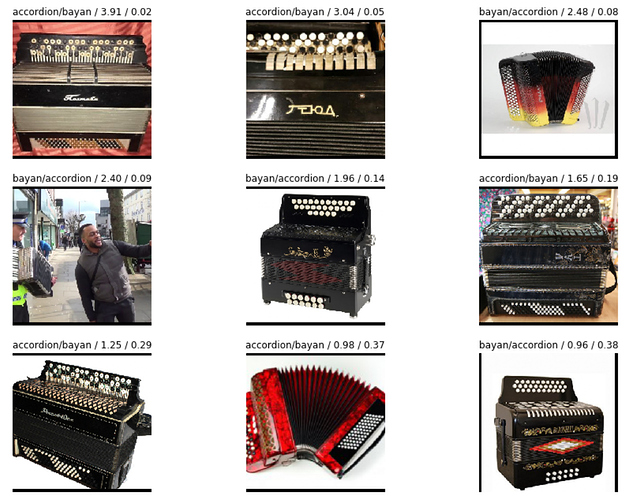

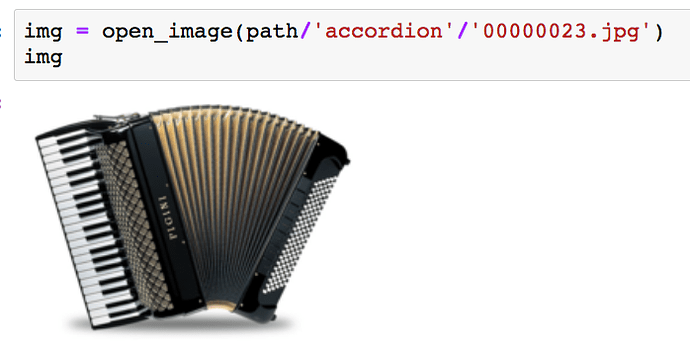

I decided to use the Google image scraper method to build a piano accordion / bayan classifier. I originally wanted to include the concertina and the bandoneon, but getting a clean dataset with these was fairly difficult.

What makes them different?

All of these are reed wind instruments that are pulled open and pushed closed to pass air through.

- Piano Accordion - Keys on one side, buttons on the other. Large.

- Bayan - buttons on both sides. Usually the same size as a piano accordion. A type of button accordion.

- Concertina - small, square or hexagonal shaped. Buttons on both sides.

- Bandoneon - small - square shaped.

As you can see, the accordion and bayan can look very similar. The biggest trouble is that bayan is also the name of a drum. Searching for bayan accordions can return a lot of images of piano accordions or comparisons between the two.

People playing the instruments can sometimes block one of the sides, and both sides need to be visible to tell the styles apart.

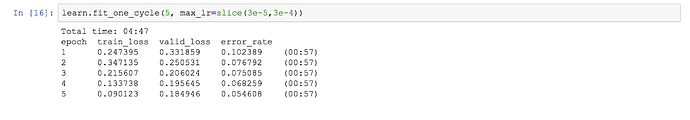

I played with different models, learning rates and epochs quite a bit, and am fairly happy with the error rate I’ve achieved. I was getting 50% and 40% when I started out. When over-fitting the model I got 36%.

Although I feel I’ve had a slow start this week, I still wanted to share what I’ve been able to achieve - I’m still proud!

(I also play the accordion.)

Please don’t at(@) jeremy.

It’s mentioned in many places.

If you want him to read your post, this is the worst thing you can do.

I’ll remove it. Thanks for pointing it out.

+1 wrt the effort needed to set up the work environment and create the datasets. We can rest easy, though, knowing that we’ve started developing a full machine learning / data science skill set. That is, we can tell potential employers that we don’t need carefully curated datasets or $12k custom desktops to make valuable contributions.

At least, that’s what I keep telling myself.

Hear hear! That’s what I keep telling myself while I apply for jobs…

Hello!

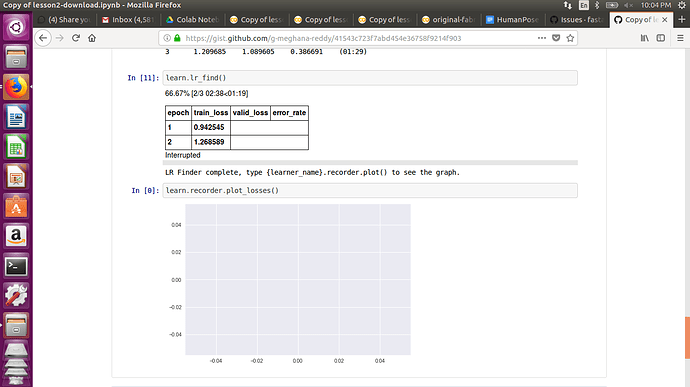

I am facing an issue when using lr_find() in Colab. It gets interrupted while running on the third epoch, as shown in the image. Because of this I am unable to plot the losses as well.

Link to the Colab notebook: https://gist.github.com/g-meghana-reddy/41543c723f7abd454e36758f9214f903

(Dataset is collected from Google images)

@Meghana_G After lr_find() you need to use learn.recorder.plot() instead of learn.recorder.plot_losses(). Your learn.lr_find() run can be interrupted if the loss is constantly increasing: there is no point in trying even higher learning rates if the loss is already getting worse. Once it is done, you can use learn.recorder.plot() to see how it performed.

Check https://docs.fast.ai/basic_train.html#Recorder.plot and https://docs.fast.ai/basic_train.html#Recorder.plot_losses for the difference between plot and plot_losses
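To illustrate why the run stops early, here is a plain-Python sketch of the idea (not fastai's actual code). It walks through losses recorded at increasing learning rates and stops once the loss blows up relative to the best value seen. The factor of 4 is my recollection of what fastai v1 uses, so treat it as an assumption. The real implementation also works on a smoothed loss, and the loss values below are simulated:

```python
def lr_range_test(losses_by_step, stop_factor=4.0):
    """Keep losses until one exceeds stop_factor times the best loss seen so far."""
    best = float("inf")
    kept = []
    for loss in losses_by_step:
        best = min(best, loss)
        kept.append(loss)
        if loss > stop_factor * best:
            # The loss has diverged; higher learning rates would only be worse.
            break
    return kept

# Simulated losses: they fall while the learning rate is reasonable, then diverge.
simulated = [2.5, 2.3, 2.0, 1.6, 1.4, 1.9, 3.5, 7.0, 20.0, 90.0]
kept = lr_range_test(simulated)
print(len(kept), "of", len(simulated), "steps were run")  # 8 of 10 steps were run
```

So an interrupted progress bar here is expected behaviour rather than an error, and the steps that did run are enough to plot.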

But I am facing the same issue (getting interrupted) with lr_find() in the unmodified lesson 1 notebook too; previously this was not the case.

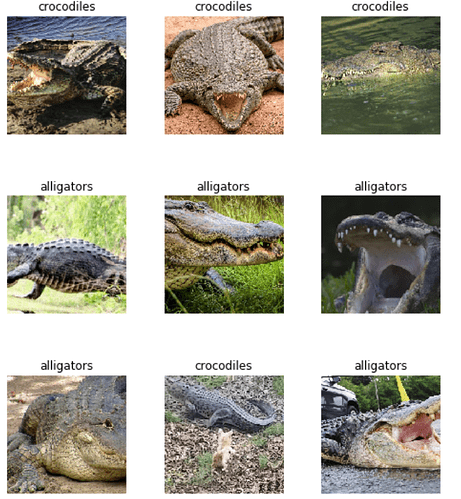

Made a simple image classifier that can distinguish between alligators and crocodiles.

After cleaning the data a bit and playing around with the learning rate, the accuracy turned out to be 85%, which I think is good since they’re very similar classes. The code is here: https://gist.github.com/mlsmall/5f84c685267f5733a0e4882b03dfb34c

You can test it yourself here: https://zeit-wibwuxpygu.now.sh/

If you’re using the Zeit guide to build your web app, be careful about how you name your classes. You may have to switch the class names around.
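Here is a small sketch of why the order matters. It assumes the model's outputs follow the alphabetically sorted class names, which as far as I know is what fastai does with folder labels, and that the web app maps the highest-scoring index back to a name through a hard-coded list. The probabilities are made up:

```python
def predict(probs, classes):
    """Return the class name at the index of the highest probability."""
    top_index = max(range(len(probs)), key=probs.__getitem__)
    return classes[top_index]

# Assumed training order: class names sorted alphabetically.
trained_classes = sorted(["crocodile", "alligator"])  # ['alligator', 'crocodile']

# Made-up model output for a picture of an alligator.
probs = [0.9, 0.1]

print(predict(probs, trained_classes))             # alligator
print(predict(probs, ["crocodile", "alligator"]))  # crocodile (list order is wrong)
```

If the list in the app is not in the same order the model was trained with, every prediction comes out swapped.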

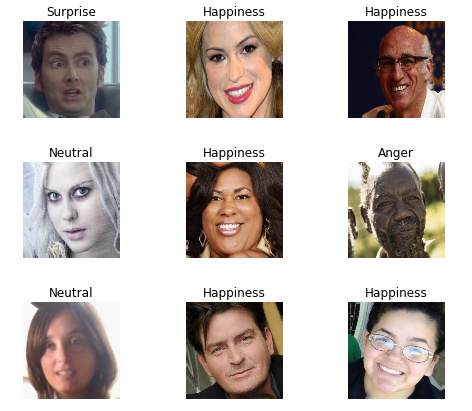

Hi, we have published all of the source including the model training notebook, model weights, inference server, and javascript face tracking.

For more info check it out:

@gokool describes how he trained the model on the large AffectNet dataset. @lauren describes how she made a high performance fastai inference server, and I describe how we increased performance by cropping the faces client-side.

The demo is still live here:

https://fastai.mollyai.com/

Whiskey or Wine?

I made a simple whiskey or wine image classifier.

Then using some of the code provided by @nikhil_no_1 and his write-up, I made a web app and fed it some images.

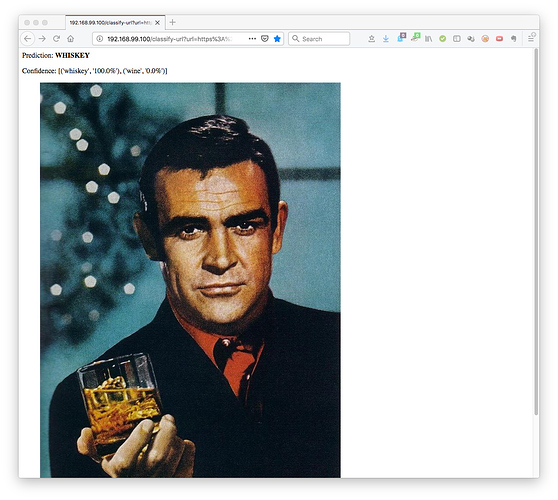

The classifier is quite “confident” around James Bond:

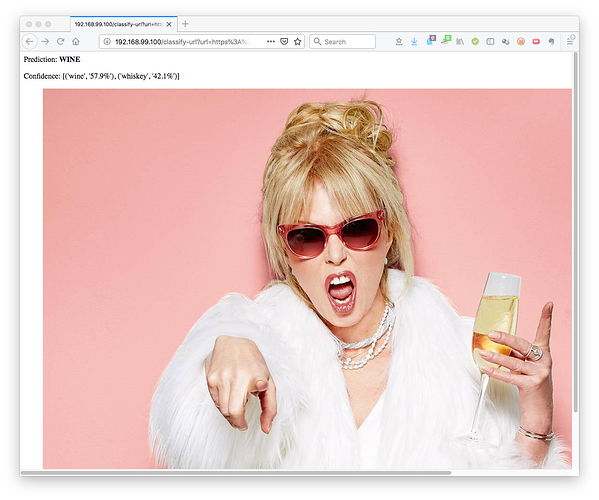

Less confident about Patsy from Absolutely Fabulous (though in fairness her glass might have both):

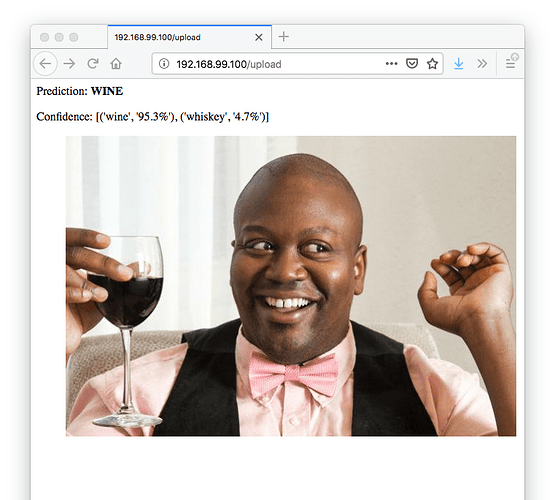

It does well with Tituss Burgess:

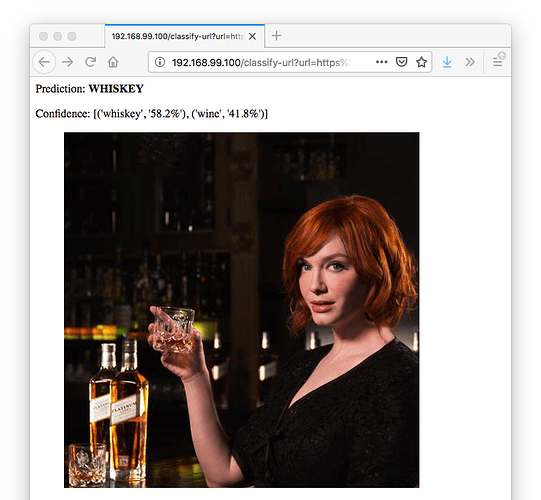

The classifier is a lot less confident around Christina Hendricks:

As I feed the app more images (and let my friends play with it), it seems like what I would really want to do with images from “the wild” is to first focus in on the area of the picture that has a glass, then crop to that part of the image, and classify the cropped part. Do folks have any advice or examples for how to tackle a step-by-step process like that (or a similar one)?

This is my work for lesson 2, and it’s my first article! I’d much appreciate any comments.

Thx

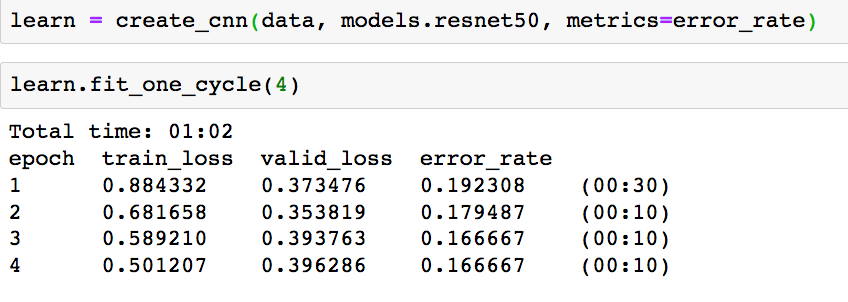

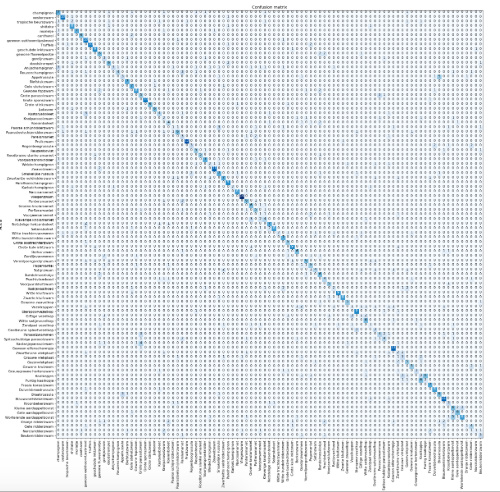

Accuracy is about the same for resnet34 and resnet50 (no unfreezing yet):

Total time: 04:43

| epoch | train_loss | valid_loss | error_rate | time |
|---|---|---|---|---|
| 1 | 3.811586 | 2.541733 | 0.616438 | 01:13 |
| 2 | 2.713833 | 2.102657 | 0.515279 | 01:11 |
| 3 | 2.036132 | 1.925441 | 0.484721 | 01:10 |
| 4 | 1.560148 | 1.894532 | 0.474183 | 01:08 |

Which is quite amazing for 93 mushroom classes and a noisy dataset; looking at the Wikipedia pages of the 15, a lot are actually not distinguishable by picture alone.

Update: I also discovered that a lot of rare subtypes get confused because of dataset noise from googling the images automatically. E.g. when looking for the ‘hairy X’ it also finds images for the ‘shaggy X’ and the ‘yellow X’.
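For a sense of scale, here is the arithmetic behind that claim, using the final error_rate of 0.474183 from the last epoch. The chance baseline assumes the 93 classes are roughly balanced, which I have not verified:

```python
n_classes = 93
final_error_rate = 0.474183  # epoch 4 from the run above

accuracy = 1 - final_error_rate
chance = 1 / n_classes  # random guessing, assuming balanced classes

print(f"accuracy {accuracy:.1%} vs chance {chance:.1%}")
# accuracy 52.6% vs chance 1.1%
```

So the model is right about every other time, where random guessing would be right about once in 93 tries.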

Would be great to include this info in the app (‘probably it’s X, but it’s often confused with [the poisonous] Y’). Or to check what the probability is that someone using the app gets ill because of a wrong prediction …
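A minimal sketch of how such a message could be built. Everything here is hypothetical: the class names, the probabilities, and the `confused_with` and `poisonous` lookups, which in practice could come from the confusion matrix and a hand-made list:

```python
def describe_prediction(probs, confused_with, poisonous):
    """Name the top class and flag any look-alikes worth double-checking."""
    top = max(probs, key=probs.get)
    message = f"Probably {top} ({probs[top]:.0%})"
    lookalikes = confused_with.get(top, [])
    if lookalikes:
        notes = [f"{name} (poisonous)" if name in poisonous else name
                 for name in lookalikes]
        message += ", but often confused with " + ", ".join(notes)
    return message

# Placeholder data for illustration only.
probs = {"X": 0.55, "Y": 0.30, "Z": 0.15}
confused_with = {"X": ["Y"]}
poisonous = {"Y"}

print(describe_prediction(probs, confused_with, poisonous))
# Probably X (55%), but often confused with Y (poisonous)
```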

I made a classifier that differentiates between three Indian dishes: Khakra, Papad and Parantha.

This will identify whether the food is papad, khakra or parantha. These are common Indian dishes that are round, similar in colour but differ in thickness and taste.

- Khakra: Crispy, salty and sour stuff.

- Papad: Crispy, not as salty as khakra, great with salad stuff.

- Parantha: Soft, filled with any vegetable, buttery stuff.

The accuracy is 87.5%, which I think is alright given that it misclassified 4 images and was trained on only 91 images. I am having some issues deploying the web app; I will add it here when it’s done. The notebook is here. PS: It’s written specifically for Colab.

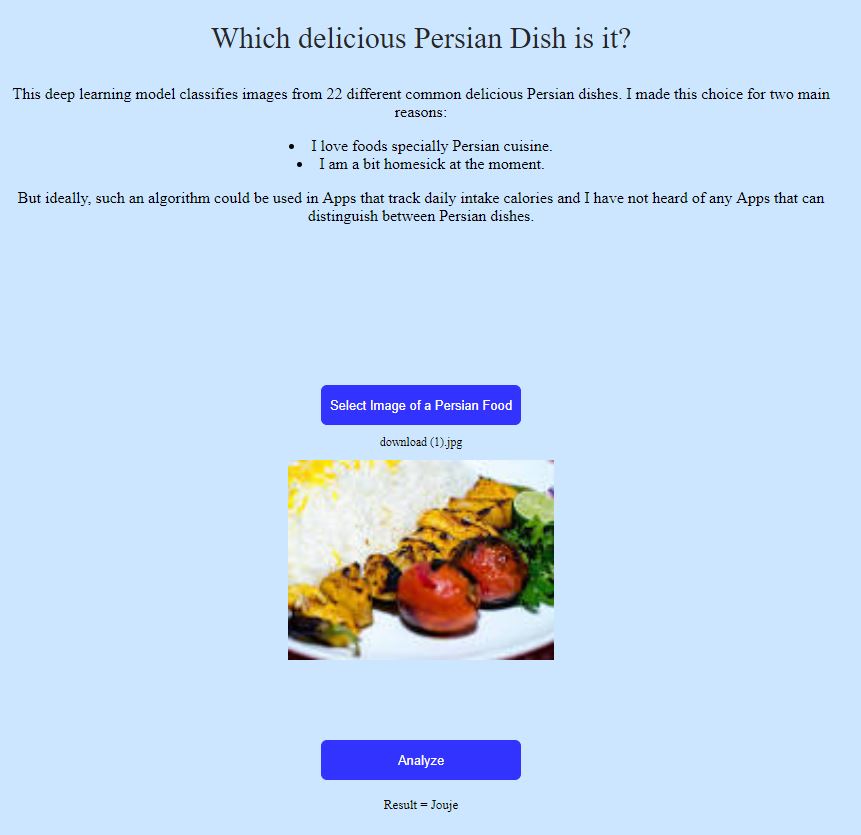

Hi all, I made a classifier for 22 different Persian dishes, using around 80 images from Google for each class. I deployed it using this guide. Thanks to @jeremy, @simonw and @navjots, it was straightforward for someone like me without any background.

Here is the App: https://persiandish.now.sh/

Like @visingh, I attempted to classify architectural styles. In contrast to that effort, though, I used images retrieved from a web search using the Bing API. After obtaining the images and cleaning up the file names, the code pretty well mirrors the notebook template provided from the lesson. Accuracy ended up in the high-80%s, if I remember correctly, without much tuning. As an educational exercise, I’m pleased with it; as a real model, it would be wholly inadequate. Check out the gist here if you want.