Share under Google Colab?

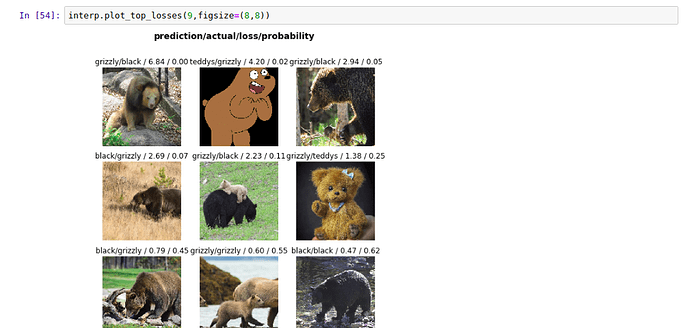

The ImageDeleter is not showing files with the highest loss.

I plotted the top losses for the bear dataset, and some of them are obviously wrong images. I would like to delete those, but the deleter widget is not showing them at all.

Hi Tabish,

Did you run DatasetFormatter first, as detailed in these docs? https://docs.fast.ai/widgets.html#DatasetFormatter

Hopefully that helps.

Hello Everyone,

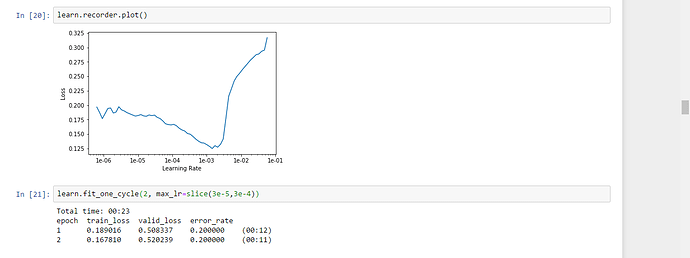

When I use lr_find(), I get this kind of plot, and my error rate is 20%. Am I doing something wrong? I have never seen a plot like this.

This LR plot seems fine. Your range should be something like 1e-6 to 1e-4.

Try running more epochs.

Your valid loss is improving.
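As a rough illustration of what such a range means once it is passed to training as something like max_lr=slice(1e-6, 1e-4) (that call is an assumption, not shown in this thread): as far as I know, fastai v1 spreads a slice across the model's layer groups on a log scale, so the earliest layers get the smallest rate. The helper below is a hypothetical plain-Python sketch of that spacing, modelled on but not copied from fastai's even_mults, assuming three layer groups.

```python
from typing import List


def even_mults(start: float, stop: float, n: int) -> List[float]:
    """Return n learning rates spaced evenly on a log scale from start to stop.

    Hypothetical re-implementation for illustration; with a single group
    there is nothing to spread, so only `stop` is returned.
    """
    if n == 1:
        return [stop]
    step = (stop / start) ** (1 / (n - 1))  # constant ratio between neighbours
    return [start * step ** i for i in range(n)]


# Assumed: three layer groups, earliest layers first.
lrs = even_mults(1e-6, 1e-4, 3)
print(lrs)  # roughly [1e-06, 1e-05, 0.0001]
```

The log spacing means each group's rate is a fixed multiple (here 10x) of the previous one, which matches the idea of barely nudging pretrained early layers while letting the head move faster.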

You are right @balnazzar. python -c 'import torch; print(torch.cuda.device_count())' returns 0. I'm not sure why, and I have given up on Paperspace. I have gotten everything working just fine on my home system with a GTX 1070 GPU, and it doesn’t cost me any hourly fee apart from electricity. If I give a cloud VM another try, I will probably use Salamander at this point. Thank you for your help!

Hello,

Can anyone please explain the difference between fit and fit_one_cycle? I have looked at the fastai documentation, and my understanding is that fit_one_cycle internally uses a callback that varies the learning rate between a max and a min value based on the loss…

Thanks

Amit
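To make the schedule part of this question concrete, here is a sketch of a one-cycle policy using PyTorch's built-in torch.optim.lr_scheduler.OneCycleLR as a stand-in. It is not fastai's own scheduler, and its defaults (start at max_lr/25, peak after 30% of the steps, cosine annealing) are PyTorch's and may differ from fastai's. In this sketch the learning rate depends only on the step count, never on the loss; plain fit, by contrast, keeps the learning rate you pass in constant.

```python
import torch
from torch.optim.lr_scheduler import OneCycleLR

model = torch.nn.Linear(2, 1)
opt = torch.optim.SGD(model.parameters(), lr=0.1, momentum=0.9)

total_steps = 100
# Warm up from max_lr / 25 to max_lr over the first 30% of steps,
# then anneal far below the starting value for the rest.
sched = OneCycleLR(opt, max_lr=0.1, total_steps=total_steps)

lrs = []
for step in range(total_steps):
    # A real loop would compute a loss and call loss.backward() here;
    # the scheduler itself never looks at the loss.
    opt.step()
    lrs.append(opt.param_groups[0]["lr"])
    sched.step()

print(f"start={lrs[0]:.4g} peak={max(lrs):.4g} end={lrs[-1]:.4g}")
```

Plotting lrs would show the characteristic rise-then-fall shape, which is the "cycle" in fit_one_cycle.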

In lecture 2, Jeremy used a notebook to collect Google Images of teddy bears. There is an amazing GitHub repo (https://github.com/hardikvasa/google-images-download) that automates collecting images for given keywords. You can pip install it and run it with a single command! I’ve used it and it works great!

Has anyone developed a web app with Starlette? I am trying to take a model and expose it through a web service/…

Thanks

Amit

Hello all. Further update to FileDeleter!

We’ve merged both the FileRelabeler and FileDeleter into the same widget: ImageCleaner. Everything else is the same. Updated docs coming soon.

```python
ds, idxs = DatasetFormatter().from_toplosses(learn, ds_type=DatasetType.Train)
ImageCleaner(ds, idxs)
```

create_cnn() always tries to use gpu:0, even though I try to set it to another GPU device with

```python
torch.cuda.set_device(2)
```

I have another model running on gpu:0, so it throws this error:

RuntimeError: CUDA out of memory. Tried to allocate 2.25 MiB (GPU 0; 7.92 GiB total capacity; 29.46 MiB already allocated; 7.00 MiB free; 804.50 KiB cached)

Any help, please?

Set defaults.device to the device you want.

How do I set that?

defaults.device=2 doesn’t work.
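One possible reason a bare 2 fails: as far as I know, fastai v1's defaults.device holds a torch.device object rather than an integer index, so something like defaults.device = torch.device('cuda', 2) may work where defaults.device=2 does not (an unverified assumption). The underlying plain-PyTorch idea is to build an explicit device and move both the model and its inputs to it. The helper pick_device below is hypothetical, a sketch that falls back to the CPU when the requested GPU is not present.

```python
import torch


def pick_device(index: int) -> torch.device:
    """Return cuda:<index> if that GPU exists, otherwise fall back to the CPU.

    Hypothetical helper for illustration, not part of fastai.
    """
    if torch.cuda.is_available() and index < torch.cuda.device_count():
        return torch.device("cuda", index)
    return torch.device("cpu")


device = pick_device(2)  # the third GPU, if the machine has one
model = torch.nn.Linear(4, 1).to(device)  # weights live on that device
x = torch.randn(8, 4, device=device)  # inputs must be on the same device
out = model(x)
print(device, out.shape)
```

Because nothing here relies on the "current" device, a second model already occupying gpu:0 should not be touched, as long as every tensor is created on or moved to the chosen device.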

For the lesson 2 SGD notebook, I wanted to play around with functions other than linear ones. So I started simple and made my data quadratic, with a bit more noise:

```python
y = (x@a)**2 + torch.rand(n)*2
a = tensor(1.,1)
y_hat = (x@a)**2
mse(y_hat, y)
```

OK, now my y_hat is also quadratic. Then I changed my update method:

```python
def update():
    y_hat = (x@a)**2
    loss = mse(y, y_hat)
    if t % 10 == 0: print(loss)
    loss.backward()
    with torch.no_grad():
        a.sub_(lr * a.grad)
        a.grad.zero_()
```

When I try to run my gradient descent (mse):

```python
lr = 1e-1
for t in range(100): update()
```

I get the following error:

Trying to backward through the graph a second time, but the buffers have already been freed. Specify retain_graph=True when calling backward the first time.

Any ideas or thoughts?
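A likely cause, assuming a was already an nn.Parameter from an earlier cell when y = (x@a)**2 + torch.rand(n)*2 ran (those cells aren't shown, so this is a guess): y then carries its own piece of the autograd graph. The first loss.backward() frees that piece, and the second call tries to backpropagate through it again, which produces exactly this error. Building the targets under torch.no_grad() (or calling .detach() on y) turns y into a plain constant. The sketch below assumes a lesson-2-style setup: x with one uniform feature column plus a column of ones, true coefficients [1, 1], and a starting guess of [-1, 1].

```python
import torch
from torch import nn

torch.manual_seed(0)
n = 100
x = torch.ones(n, 2)
x[:, 0].uniform_(-1.0, 1)  # feature column; column 1 stays 1 as a bias term


def mse(y_hat, y):
    return ((y_hat - y) ** 2).mean()


# The parameter we will learn. If y were computed from it WITH gradient
# tracking, y would hold a graph that backward() frees after the first call.
a = nn.Parameter(torch.tensor([1.0, 1.0]))
with torch.no_grad():  # y becomes a constant target, outside the graph
    y = (x @ a) ** 2 + torch.rand(n) * 2

with torch.no_grad():
    a.copy_(torch.tensor([-1.0, 1.0]))  # reset the guess in place

lr = 1e-1


def update(t):
    y_hat = (x @ a) ** 2
    loss = mse(y_hat, y)
    if t % 10 == 0:
        print(loss.item())
    loss.backward()  # only builds/frees the graph created in this call
    with torch.no_grad():
        a.sub_(lr * a.grad)
        a.grad.zero_()
    return loss.item()


losses = [update(t) for t in range(100)]
```

Adding retain_graph=True would also silence the error, but it keeps the stale graph for y alive on every step, so detaching the targets is usually the cleaner fix.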

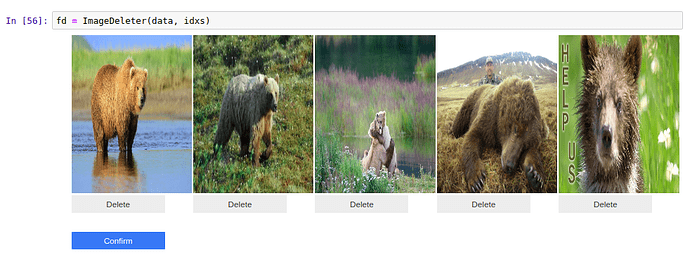

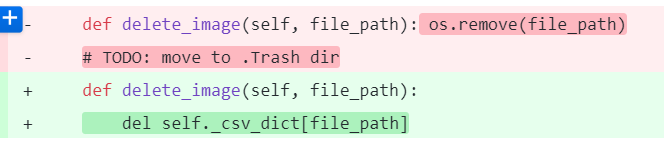

Hello @zachcaceres! I just noticed that running ImageCleaner(ds, idxs) no longer deletes the selected images, and I found this in the changes on GitHub ():

So it doesn’t remove the images anymore. How can this be fixed?

hey @jm0077!

yeah, we opted for a non-destructive action in the widget. Even though people want the file removed from their particular model/project, that doesn’t mean that they want to destroy the file itself. It was too easy to wreck your data.

The widget now uses its own CSV to manage the inclusion and labels of files.

@lesscomfortable could you share the relevant example here?

Please help me figure out how to delete the files with the top losses, or how to find their filenames so I can delete them manually.

So basically you run the ImageCleaner as before, but your changes are saved to a CSV. To use your changed dataset, you just need to build a new ImageDataBunch.from_csv using your cleaned.csv. We included this line, commented out, in the lesson2 notebook; you can find it at the beginning of the “View Data” section.
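For the "find the filenames" part: if cleaned.csv lists the files you kept, the ones you removed in the widget are the images on disk that the CSV no longer mentions. The helper below is a hypothetical sketch, not part of fastai. It assumes cleaned.csv sits in the dataset folder and has a header row with a name column holding paths relative to that folder; check your own file, since the exact layout may differ between versions. It only lists files, leaving any deletion to you, in keeping with the widget's non-destructive design.

```python
import csv
from pathlib import Path
from typing import Iterable, List


def dropped_files(data_dir, csv_name: str = "cleaned.csv",
                  exts: Iterable[str] = (".jpg", ".jpeg", ".png")) -> List[Path]:
    """List image files under data_dir that are NOT referenced in the cleaned CSV.

    Assumption: the CSV has a 'name' column with paths relative to data_dir,
    e.g. 'black/00000001.jpg'.
    """
    root = Path(data_dir)
    exts = {e.lower() for e in exts}
    with open(root / csv_name, newline="") as f:
        kept = {Path(row["name"]).as_posix() for row in csv.DictReader(f)}
    on_disk = [p for p in root.rglob("*") if p.is_file() and p.suffix.lower() in exts]
    # Anything on disk that the CSV no longer mentions was removed in the widget.
    return sorted(p for p in on_disk if p.relative_to(root).as_posix() not in kept)
```

Usage would be something like for p in dropped_files(path): print(p), where path is the dataset folder you built the DataBunch from; you can then review the list before deleting or moving anything by hand.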

@lesscomfortable In the notebook, you run ImageCleaner on the validation dataset. If you use the CSV to generate a new DataBunch, wouldn’t you only get the cleaned validation data, rather than the training data plus the cleaned validation data?

I just want to make sure I understand.

True. I am working on solving that; see Create a Learner with no Validation Set. In the meantime, you can create an ImageDataBunch with as small a validation set as possible (0 returns an error) and do the same again when loading from the CSV. If you figure out how to create an ImageDataBunch with the whole dataset in train_ds and no valid_ds, and can create a Learner with it, please let me know!