Neither I. I had to truncate my long post to limited number of words. Maybe in discourse settings something can be found.

Lesson 1 你的宠物

如何启动你的第一个GPU

0:00-0:47

ytcropper Lesson 0 How to get GPU running

- Lesson 0 How to get GPU running gpu

如何启动你的第一个GPU

你需要做些什么准备

0:30-3:16

What else do you need to get started

你需要做些什么准备?

- Jupyter notebook基础

- Python 基础,学习资源

- 做实验的心态准备

如何最大化利用课程视频与notebook

3:13-4:29

How to make the most out of these lesson videos and notebooks

如何最大化利用课程视频与notebook?

- 从头看到尾,不要纠结概念细节

- 一边看,一边跑代码

- 试试实验代码

你可以期望的学习成就高度与关键学习资源

4:29-5:28

what you expect to be with fastai course

- 成为世界级水准深度学习实践者,构建和训练具备达到甚至超越学术界state of art水平的模型

为什么我们要跟着Jeremy Howard学习

5:26-6:47

why should we learn from Jeremy Howard

- 从事 ML 实践25年+

- 起始于麦肯锡首位数据分析专员,然后进入大咨询领域

- 创建并运行了多个初创企业

- 成为Kaggle主席

- 成为Kaggle排行第一级的参赛者

- 创建了Enlitic, 史上第一个深度学习医疗公司

- San Francisco大学教职人员

- 与Rachel Thomas共同创建了fastai

- 是务实非学术风格,聚焦于用深度学习做有用的事情

fast.ai让人人都成为深度学习高手的策略方针

6:40-7:26

How to make DL accessible to everyone to do useful things

- 创建fastai library

- 能用最简洁,最快速,最可靠的构建深度学习模型

- 创建fast.ai系列课程

- 帮助更多人免费便捷地学习

- 将学术论文成果落地 fastai library

- 将学术界最高水平的技术融入到fastai library中,让所有人便捷使用

- 创建维护学习社区

- 让深度学习实践者能够找到并帮助彼此

需要投入多少精力,会有怎样的收获

7:25-8:51

How much to invest and What I get out

- 我们需要投入都少精力学习?

- 看完课程视频至少需要14小时,完成代码实验整个课程至少需要70-80小时

- 当然具体情况,因人而异

- 有人全职学习

- 也有人只看视频,不做作业,只需要获取课程概要

- 如果跟玩整个课程,你将能够做到:

- 在世界级水平实践深度学习,甚至训练出打败学术state of art水平的模型

- 对任意图片数据集做分类

- 对文本做情感分类

- 预测连锁超市销售额

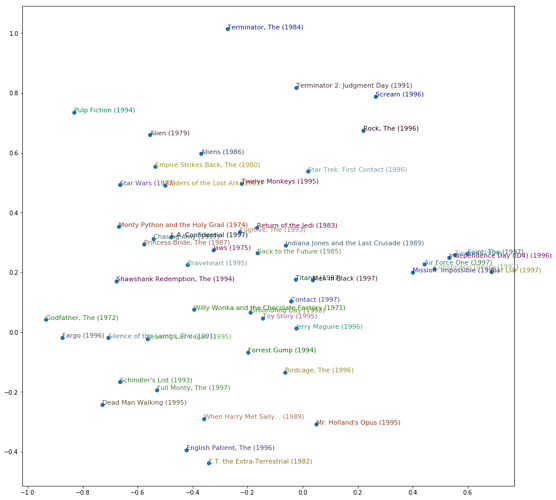

- 创建类似Netflix的电影推荐系统

学习深度学习的必要基础是什么,以及常见的误解与成见

8:51-10:23

Prerequisites and False assumptions and claims on DL

- 仅凭1年python和高中数学,你是无法学会深度学习的!

- fast.ai让其不攻自破

- 深度学习是黑箱,无法理解

- 很多模型是可解释可理解的

- 比如可以用可视化工具理解CNN参数的功能

- 需要巨量数据才能做深度学习

- 迁移学习不需要大量数据

- 可分享的训练好的模型

- 做前沿研究需要博士学位为前提

- fastai 或则 keras libs 和MOOCs就足够了

- Jeremy只有哲学学位

- 好应用仅限于视觉识别领域

- 优秀应用同样存在于speech, tabular data, time series领域

- 需要大量GPU为前提

- 一个云端GPU即可,甚至是免费的

- 当然对于大型项目,的确需要多个GPU

- 深度学习不是真正的AI

- 我们不是在造人造大脑

- 我们只是在用深度学习做造福世界的事情

完成第一课后你将能做些什么

10:22-11:04

What will you be able to do at the end of lesson 1

- 创建你的图片数据集的分类器

- 你甚至可以仅用30张图实现曲棍球和棒球的接近完美的分类器训练

fast.ai的教学哲学是什么

11:04-12:31

What is fastai learning philosophy

- 从代码开始,然后在尝试理解理论

- 不需要在博士最后一年才开始真正写代码做项目

- 创造多个模型,研究模型内部结构,掌握良好的深度学习实践者的直觉

- 用Jupyter notebooks做大量代码实验

如何像专业人士一样使用jupyter notebook

12:30-13:17 *

How to use Jupyter notebook as a pro

- 从最基础的快捷键开始,每天学习3-5个快捷键,每天用,很快就上手了

什么是Jupyter magics

13:17-14:00

What are Jupyter magics

什么是fastai库以及如何使用

14:00-17:56

What are fastai lib and how to use it

-

fast.ai课程和机构名称 -

fastai库的名称 - 依赖的library

-

pytorchis easier and more powerful than tensorflow

-

- fastai 支持四大领域应用

- CV, NLP, Tabular, Collaborative Filtering

- 两种

*的使用都支持-

from fastai import *(个人评论:但实际作用几乎为零) -

from fastai.vision import *引入所需工具

-

个人评论:代码探索发现 from fastai import *并无真用途

学术和Kaggle数据,CatsDogs 与 Pets数据的异同

17:56-21:08

Academic vs Kaggle Datasets, CatsDogs vs Pets dataset

两大数据集来源

- 学术和Kaggle

学术数据集特点?

- 学术人员耗费大量时间精力收集处理

- 用来解决富有挑战的问题

- 对比不同方法或模型的表现效果,从而凸显新方法的突破性表现

- 不断攀登和发表学术最优水平

为什么这些数据集有帮助?

- 提供强大的比较基准

- 排行榜与学界最优表现

- 从而得知你的模型的好坏程度

记住要注明对数据集和论文的引用

- 同时学习了解数据集创建的背景和方法

你的宠物数据集问题的难度

- 猫狗大战相比较是非常简单的问题

- 二元分类,全部猜狗也有50%准确率

- 而且猫狗之间差异大,特征比较简单

- 刚开始做猫狗大战竞赛时,80%已经是行业顶级水平

- 如今我们的模型几乎做到预测无误

- 宠物数据集要求识别37不同种的猫狗

- 猜一种只会有1/37正确率

- 因为不同种猫和不同种狗之间差异小,特征难度更高

- 我们要做的是细微特征分类fine-grain classification

如何下载数据集

21:07-23:56

How to download dataset with fastai

AWS 在云端为fast.ai所需数据集提供免费告诉下载

我们去Kernel中看untar_data的用法

如何进入图片文件夹以及查看里面的文件

23:56-25:49

How to access image folders and check filenames inside

我们去Kernel中看Path,ls,get_image_files的用法

如何从文件名中获取标注

25:40-27:53

How to get the labels of dataset

如何理解用regular expression 提取label,见笔记

运行代码理解其用法,见kernel

为什么以及如何选择图片大小规格

27:48-29:15

Why and how to pick the image size for DataBunch

- Why do we need to set the image size?

- every image has its size

- GPU needs images with same shape, size to run fast

- what shape we usually create?

- in part 1, square shape, most used

- in part 2, learn rectangle shape, with nuanced difference

- What size value work most of the time generally?

size = 224

- What special about fastai? *

- teach us the best and most used techniques to improve performance

- make the all the decisions for us if necessary, such as

size=224now

什么是DataBunch

29:15-29:56

What is a DataBunch

What does DataBunch contain

- training Dataset

- images and labels

- texts and labels

- tabular data and labels

- etc

- validation Dataset

- testing Dataset (optional)

如何normalize DataBunch

29:56-30:19

What does normalize do to DataBunch

to make data about the same size with same mean and std

如果图片大小不是224会怎样

30:19 - 31:50

What to do if size is not 224

* get_transforms function will make the size so

- data looks zoomed

- center-cropping

- resizing

- padding

- these techniques will be used in data augmentation

normalize 图片意味着什么

31:50-33:01

What does it mean to normalize images

* all pixel start 0 to 255

* but some channels are very bright and other not, vary a lot

* if all channels don’t have mean 0 and std 1

* models may be hard to train well

为什么图片尺寸是224而不是256

33:01- 33:34

Why 224 not 256 as power of 2

- because final layer of model is 7x7

- so 224 is better than 256

- more in later

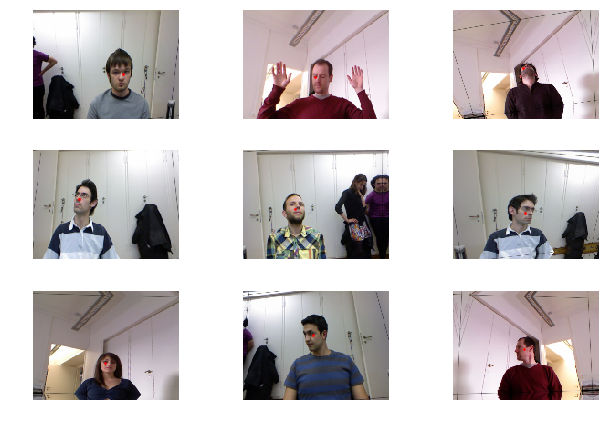

如何查看图片和标注

33:34-35:06

How to check the real images and labels

* to be a really good practitioner is to look at your data

* how to look at your images

* data.show_batch(rows=3, figsize=(7,6))

* how to look at your labels

* print(data.classes

- what is data.c of DataBunch

* number of classes for classification problem

* not for regression and other problems

如何构建一个CNN模型

35:06-37:25

How to build a CNN learner/model

what is a Learner?

- things can learn to fit the data/model

what is ConvLearner?

- to create convolution NN

- ConvLearner is replaced by create_cnn *

what is needed to make such a model?

- required

- DataBunch

- Model: resnet34 or resnet50

- metrics = is from kwargs *

How to pick between resnet34 and resnet50?

- always start with smaller one

- then see whether bigger is better

What is metrics

- things to print out during training

- e.g., error_rate

为什么要用训练好的模型的框架与参数

37:25-40:03

Why use a pretrained model (framework and parameters) for your CNN? in other words, What is transfer learning?

* the model resnet34 will be automatically downloaded if not already so

* what exactly is downloaded

* pretrained model with weights trained with ImageNet dataset

* why a pretrained model is useful?

* such model can recognize 1000 categories

* not the 37 cats and dogs,

* but know quit a lot about cats and dogs

* what is transfer learning?

* take a model which already can do something very well (1000 objects)

* make it do your thing well (37 cats dogs species)

* also need thousands times less data to train your model

什么是过拟合?为什么我们的模型很难过拟合?

40:03-41:40

what is overfitting? why wouldn’t the model cheating?

How do we know the model is not cheating?

- not learn the patterns to tell cricket from baseball

- but only member those specific objects in the images

How to avoid cheating

- use validation set which your model doesn’t see when training

what use validation set for?

- use validation set to plot metrics to check how good model is fairly

Where is validation set?

- automatically and directly baked into the DataBunch

- to enforce the best practice, so it is impossible to not use it

如何用最优的技术来训练模型?

41:40-44:33

How to train the model with the best technique

we can use function fit, but always better to use fit_one_cycle

What is the big deal of fit_one_cycle?

- a paper released in 2018

- more accurate and faster than any previous approach

- ::fastai incorporates the best current techniques *::

keyboard shortcut for functions

* tab to use autocomplete for possible functions

* shift + tab to display all args for the function

how to pick the best number of epochs for training?

- learn how to tune epochs (4) in later lessons

- I don’t remember it had been discussed in later 6 lessons *

- not too many, otherwise easy overfit

如何了解模型的好坏?

44:28 - 46:42

How to find out how good is your model

- How to find out the state of art result of 2012 paper?

- Section of Experiment, check on accuracy

- Oxford, nearly 60% accuracy

- What is our result

- 96% accuracy

如何放大课程效果?

46:47-48:41

How to get the most out of this course

What is the most occurred mistake or regret?

- spend too much on the concept and theory

- spend too little time on notebooks and codes

What your most important skill is about

- understanding what goes in

- and what comes out

fastai的业界口碑以及与kera的对比

48:41-52:53

The popularity of fastai library

* Why we say fastai library becomes very popular and important

* major cloud support fastai

* many researchers start to use fastai

* what is the best way of understand fastai software well?

* docs.fast.ai

* How fastai compare with keras

* codes are much shorter

* keras has 31 lines which you need to make a lot of decisions

* fastai has 5 lines which make the decisions for you

* accuracy is much higher

* training time is much less

* cutting edge researches use fastai to build models

* “the ImageNet moment” for NLP done with fastai

* github: “towards Natural Language Semantic code search”

* Where on the forum people talking about papers?

* Deep Learning section

学员能够用fast.ai所学完成的项目

52:45-65:51

What students achieved with fastai and this course

Sarah Hooker

- first course student, economics (no background in coding)

- delta analytics to detect chainsaw to prevent rainforest

- google brain researcher and publish papers

- go to Africa to setup the first DL research center

- dig deep into the course and Deep learning book

Christine Mcleavey Payne

- 2018 year student

- openAI

- Clara: a neural net music generator

- background: math and …. too much to mention

- pick one project and do it really well and make it fantastic

Alexandre Cadrin

- can tell MIT X-ray chest model is overfitting

- bring deep learning into your industry and expertise

Melissa Fabros

- English literature degree, became Kiva engineer

- help Kiva (micro-lending) to recognize faces to reduce gender and racial bias

Karthik and envision

- after the course started a startup named envision

- help blind people use phone to see ahead of you

Jeremy helped a small student team

- to beat google team in ImageNet competition

Helena Saren? @glagoli…?

- combine her own artistic skills with image generator

- style transfer

a student as Splunk engineer to detect fraud

Francisco and Language Model Zoo at the forum

- use NLP to do different languages with different students

Don’t feel intimidated and ask for help and contribute

为什么选择ResNet 而非Inception作为模型框架和已训练的参数

66:00 - 67:57

Why use Resnet rather than Inception

* DAWNBench on ImageNet classification

* “Resnet is good enough” for top 5 places

* edge computing

* but the most flexible way is let your model on cloud talk with your mobile app

* inception is memory intensive and not resilient

如何保存训练好的模型

67:57-68:43

How to save a trained model

What is inside the trained model?

- updated weights

why do we need to save model?

- keep working and updating the previous weights

how to save a model?

- learn.save("stage-1")

where will be the model be located?

- in the same fold where data is

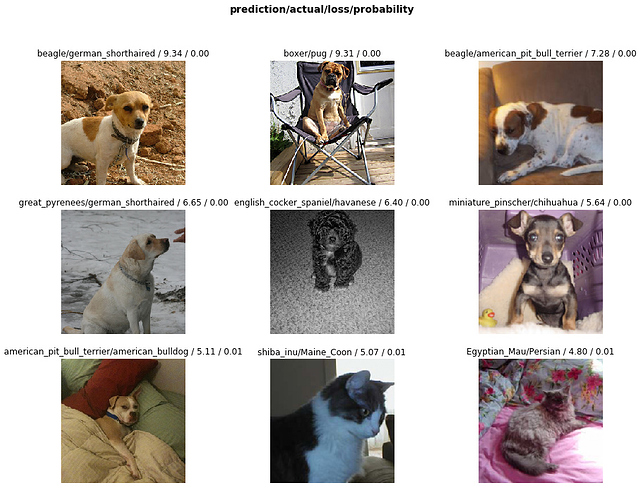

如何画出损失值最高的数据/图?

68:43-73:22

how to plot top losses examples/images

How to create model interpreter?

- interp = ClassificationInterpretation.from_learner(learn)

Why plot the high loss?

- to find out our high prob predictions are wrong

- they are the defect of our model

How to plot top losses using the interpreter?

- interp.plot_top_losses(9, figsize=(15,11))

How to read the output of the plotting and numbers?

- doc(interp.plot_top_losses) -> doc & source

What does those numbers on the plotting mean?

- prediction, actual, loss, prob of actual (not prediction)

Why fastai source code is very easy to read?

- intension when writing it

- don’t be afraid to read the source

Why it is useful to see top loss images

- figure out where is the weak spot

- error analysis * (Ng)

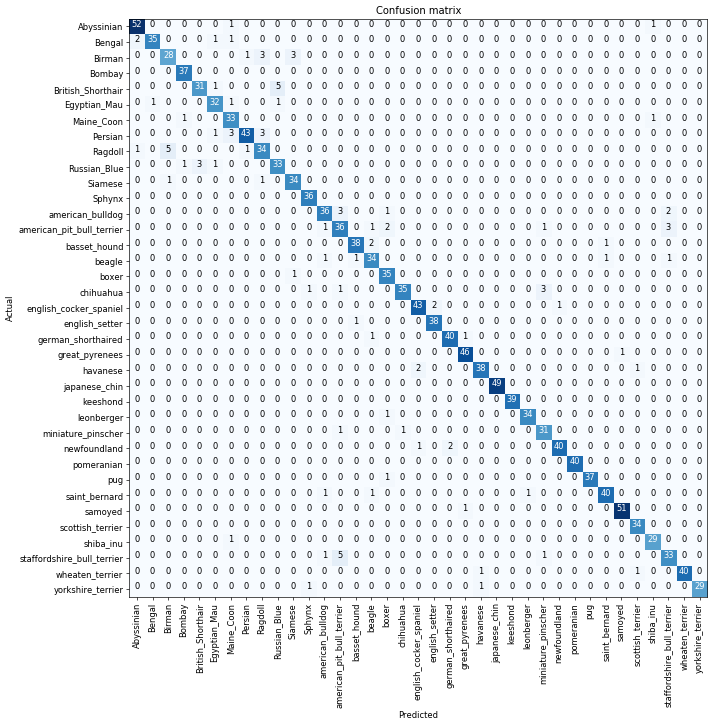

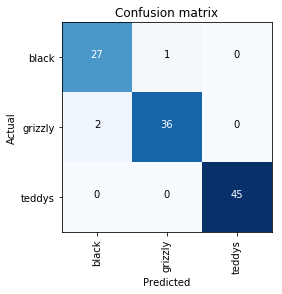

如何找出模型混淆度最高的图片?

73:20 -74:39

How to find out the most confused images of our model

* why we need the confusion matrix to interpret the model?

* when not to use interp.plot_confusion_matrix(figsize=(12,12), dpi=60)?

* when to use interp.most_confused(min_val=2)?

如何用微调改进模型?

74:37 - 76:26

How to improve our model

What is the default way of training?

- add a few layers at the end

- only train or update weights for the last few layers

What is the benefit of the default way?

- less likely to overfit

- much faster

How to train the whole model?

- unfreeze the model learn.unfreeze()

- train the entire model learn.fit_one_cycle(1)

why it is easy to ruin the model by training the whole model?

- learning rate is more likely to set too large for earlier layers *

- to understand it please see the next question

CNN模型在学习些什么?以及为什么直接用全模型学习效果不佳?

76:24 - 82:32

what is CNN actually learning and why previous full model training didn’t work

what is the plot of layer 1?

- coefficients? weights? filters

- finding some basic shapes

what are the plots of layer 2?

- 16 filters

- each filter is good at finding one type of pattern

What are the plots of layer3?

- 12 filters

- each is more complex patterns

What are inside plots of layer4 and layer 5?

- filters to find out even more complex patterns using previous layer patterns

Which layer’s filter pattern can be improved?

- less likely for layer 1

- maybe not layer 4-5

- probably much later layers should be changed to some extent

Why the previous full model train won’t work?

- the same learning rate is applied to earlier and later layers

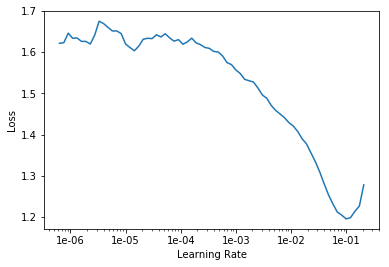

训练全模型的正确方式是什么

82:32- 86:55

How to train the whole model in the right way

How we go back to the unbroken model by full training?

- load the backup model

- learn.load('stage-1');

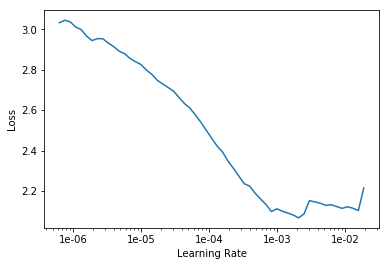

How to find the best learning rate?

- to find the fastest learning rate value

- learn.lr_find()

How to plot the result of learning rate finding?

- learn.recorder.plot()

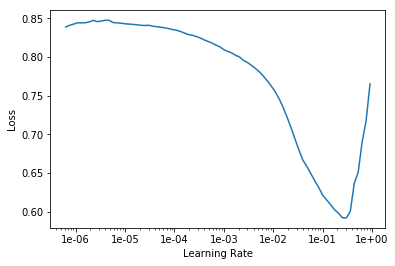

how to read the learning rate plot?

- learn.unfreeze()

- learn.fit_one_cycle(2, max_lr=slice(1e-6,1e-4))

- how to find the lowest/fastes learning rate?

- find the lr value before loss get worse

- how to find the highest/slowest learning rate?

- 10x smaller than original learning rate

- how to give learning rate value to middle layers?

- distribute values equally to other middle layers

Why you can’t win Kaggle easily?

- many fastai alumni compete on Kaggle

- this is the first thing they will try out

如何用更大的模型来改进效果

86:55-91:00

How to improve model with more layers

to use ResNet50 instead of ResNet34

- data = ImageDataBunch.from_name_re(path_img, fnames, pat, ds_tfms=get_transforms(), size=299, bs=bs//2).normalize(imagenet_stats)

- learn = create_cnn(data, models.resnet50, metrics=error_rate)

what to do when GPU memory is tight?

- due to model is too large and take too much GPU memory

- less 8 GPU memory can’t run ResNet50

How to fix it?

- shrink the batch_size when creating the DataBunch

How good is 4% error rate for Pets dataset?

- compare to CatsDogs 3% error rate

- 4% for 37 similar looking species is extraordinary

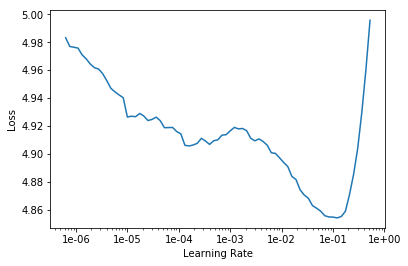

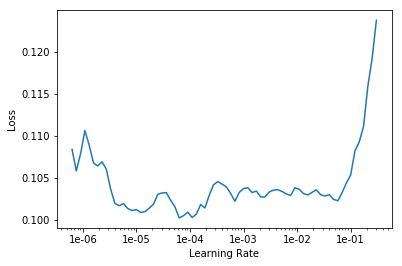

Why ResNet50 still use the same lr range from ResNet34? *

- the lr plot looks different from that of ResNet34

- but why we still use the following code

- learn.fit_one_cycle(3, max_lr=slice(1e-6,1e-4))

- problem asked on formum

How to use most confused images to demonstrate model is already quite good?

- check out the most confused images online

- see whether human can’t tell the difference neither

- if so, then model is doing good enough

- it teaches you to become a domain expert

生成DataBunch的不同方式有哪些

91:35-95:10

Different ways to put your data into DataBunch

How to use MNIST sample dataset?

* path = untar_data(URLs.MNIST_SAMPLE); path

How to create DataBunch while labels on folder names?

- data = ImageDataBunch.from_folder(path, ds_tfms=tfms, size=26)

How to check the images and labels?

How to read from CSV?

- df = pd.read_csv(path/'labels.csv')

How to create DataBunch while labels in CSV file?

- data = ImageDataBunch.from_csv(path, ds_tfms=tfms, size=28)

How to create DataBunch while labels in dataframe?

- data = ImageDataBunch.from_df(path, df, ds_tfms=tfms, size=24)

How to create DataBunch while labels in filename?

- data = ImageDataBunch.from_name_re(path, fn_paths, pat=pat, ds_tfms=tfms, size=24)

How to create DataBunch while labels in filename using function?

- data = ImageDataBunch.from_name_func(path, fn_paths, ds_tfms=tfms, size=24, label_func = lambda x: '3' if '/3/' in str(x) else '7')

How to create DataBunch while labels in a list?

- labels = [('3' if '/3/' in str(x) else '7') for x in fn_paths]

- data = ImageDataBunch.from_lists(path, fn_paths, labels=labels, ds_tfms=tfms, size=24)

如何最大化利用fastai文档

95:10-97:28

How to make the most out of documents

- To experiment the doc notebook

- How do I do better?

关于fastai与多GPU和3D数据的问题

97:28-98:09

QA on fastai with multi-GPU, 3D data

一些有趣的项目

98:09-end

An interesting and inspiring project

how to transform mouse moment into images

then train it with CNN

第二课 创造你的数据集

如何使用论坛与参与贡献

how to use forum and contribute to fastai

start - 4:26

how to use forum and contribute to fastai

resources

* How to contribute to fastai - Part 1 (2019)

* Doc Maintenance | fastai

where is the most important information at forum?

- official updates and resources

- start from here

how not be intimidated by the overwhelming forum

- click summary button

如何重返工作

How to return to work?

- with [kaggle](https://course.fast.ai/update_kaggle.html)

- click into kernels

- with your local workplace

- `git pull`

- `condo update conda` outside conda environment

- `conda install -c fastai fastai`

学员第一周的成果

What students have done after the first week?

4:26-12:56

What students have done after the first week

- use NN to clear whatapp downloaded images

- use NN to beat the state of art on recognize background noise

- new state of art performance on a language DHCD recognition

- turn point mutation of tumor into images and beat the sate of art

- automatically playing science communication games with transfer learning and fastai

- James Delinger: do useful things without reading math equations (greek)

- Daniel R. Armstrong: want to contribute to the library, step by step, you will get there

- project to classify zucchinis (39 images) and cucumbers (47 images)

- use PCA to create a hairless classifier for dogs and cats

- classifier for new and old special buses

- models classify 110 cities from satellite images

- models to classify complete and incomplete construction sites

课程结构和教学哲学

What is the course structure and teaching philosophy

12:56 - 16:20

What is the course structure and teaching philosophy

* recursive learning in curriculum

* Perkins’s theory (chinese version)

* code first

* whole game with videos

* concepts not details

* keep moving forward

如何创造属于你的图片分类数据集

How to create your own dataset for classifier

16:20-23:47

How to create your own dataset for classifier

inspired by PyImageSearch, great resources

project to classify teddy bear, grizzly bear and black bear

search “teddy bear” in google image

- ctrl+shift+j or cmd+opt+j

paste the codes and save image urls into a file in your directory

how to create three set of folders experimentally

- create variables for a folder and url.txt

- create the folder path

- download the images into the folder

- do it three times for three kinds of bears

How to verify images that are problematic with `verify_images’?

如何从单一图片文件夹中创造DataBunch

How to create DataBunch from a single fold of images?

23:47-25:42

How to create DataBunch from a single fold of images

- how to set the training set from the single folder

- how to split into a validation set from the single folder

- why set random seed before creating DataBunch?

如何检验图片,标注,数据集的大小

How to check images, labels, and sizes of train and validation set

25:42-26:49

How to check images, labels, and sizes of train and validation set

* How to display images from a batch

* How to check labels and classes

* How to count the size of train_ds and valid_ds?

如何训练和保存模型

How to train and save the model

26:49-27:41

How to train and save the model

- how to create a CNN model with ResNet34 and plot error-rate

- how to train the model for 4 epochs

- how to save the trained model

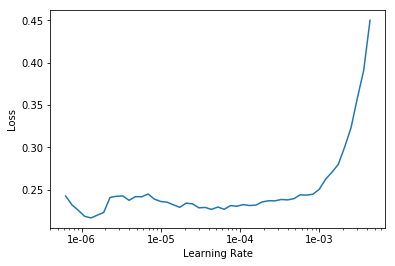

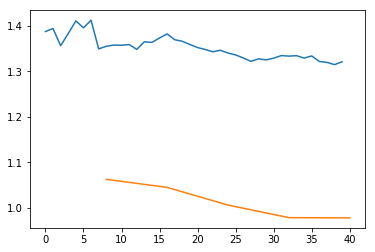

如何寻找最优学习率

如何从图中读取最优学习率区间

27:36-29:39

视频节点

- 怎样的下坡才是真正有意义的最优学习率区间?

- “bumpy"起伏不平的不好,”平滑坡陡”更好?

- 主要靠实验来积累感官经验,构造良好直觉

- 怎么选择的 (3e-5, 3e-4)?

- 确定好了3e-5后,通常选择1e-4或3e-4

- 依旧是依靠实验和经验累积

如何解读模型

How to interpret the model

29:39-29:57

How to interpret the model

how to read most confused matrix?

噪音数据和模型输出

Noisy data and model output

29:57-31:31

Noisy data and model output

What does noisy data mean?

- such as mislabelled data

What problem noisy data could cause model to have?

- unlikely, some data are predicted correctly with high confidence

- these data are likely to be mislabelled

Solution approach

- joint domain expert and machine automation

如何用widget清理数据中的噪音

How to clean up noisy data with widget?

31:31-35:32

How to clean up noisy data with widget

How to work with widget to clean mislabelled data manually?

如何为Nb创造一个widget

How to build a ipywidget for your notebook

35:12-37:37

How to build a ipywidget for your notebook

how to read the source code of the widget?

how to build a tool for notebook experimenter?

Exciting to create tools for fellow practitioners

encouraged to dig into the ipywidget docs

not a production web app

什么是偏差噪音

What is biased noise?

37:35-38:32

What is biased noise?

* most time after remove mislabelled data, model improved only a little

* it is normal as model can handle some level of noise itself

* what is toxic is biased noise, not randomly noisy data

如何将模型植入APP

How to put model into production web app?

38:32-45:50

How to put model into production web app

* why to run production on CPU not GPU?

* the time difference between CPU web app vs GPU server is 0.2 vs 0.01s

* how to prepare your model for production use?

* it is very easy and free to use with some instruction on course wiki

* try to make all your classifier into web apps

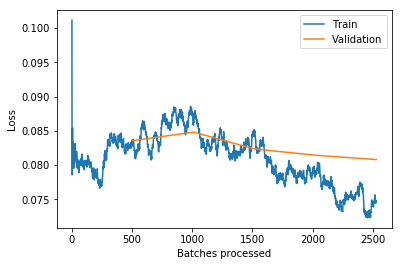

99%的时间里我们只需调控学习率和训练次数

99% of time what we need to finetune is lr and epochs for CV

46:05-53:09

99% of time what we need to finetune is lr and epochs for CV

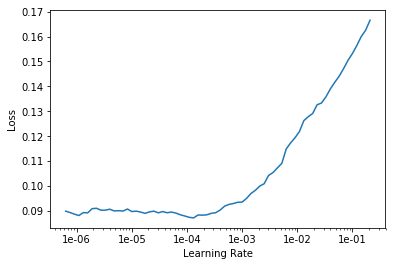

experiment what happen when lr is very high

- no way to undo it, has to recreate model

experiment what happen when lr is too low

- loss down very slow

validation loss is lower than training loss

- lr is too low

- too few epochs

too many epochs

- overfitting - to learn specific images of teddy bears

- signal - loss goes down but goes up again

- but it is difficult to make our model to overfit

图片和图片识别背后的数学

what is the math behind an image and its classification?

53:09-62:15

what is the math behind an image and its classification

what is the math behind an image and its classification?

what is behind learn.predict source

what does np.argmax do

what is error_rate source code?

what is behind accuracy function?

which dataset does metric apply to?

doc is not just nice printing of ?, because it may has examples

why use the 3 of 3e-5 often?

线性函数,数组乘法与神经网络的关系

what is linear function, and how matrix multiplication fit in?

62:15- 68:23

what is linear function, and how matrix multiplication fit in

* KhanAcademy for basics and advanced math

* to replace b with a_2*x_2

* there are lots of examples (x1, y1), (x2, y2), …

* Rachel’s best linear algebra course

* vectorization, dot product, matrix product to avoid loop and speed up

* matrix multiplication in visualization

关于数据大小,不对称数据,模型结构,参数的问题

QA on data size, unbalanced data, model framework and weights

68:32-74:14

QA on data size, unbalanced data, model framework and weights

How do we know we don’t have enough data

* lr is good, can’t be a little higher or lower

* if epochs goes a little bigger then make validation loss worse

* then we may need to get more data

* most time you need less data than you think

How do you deal with unbalanced data?

- do nothing, it always works

What is ResNet34 as function?

- function framework without number or weights

- pretrained model with weights

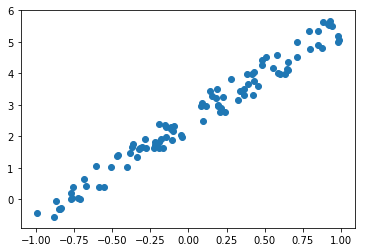

如何手动构建一个简单的神经网络

How to create the simplest NN (tensor, rank)?

74:14-101:10

How to create the simplest NN (tensor, rank)

what is the simplest architecture?

what is SGD?

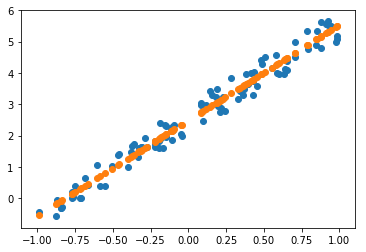

how to generate some data for a simple linear function?

how to use matrix product @ to create the linear architecture?

what is a tensor?

- array

what is a rank?

- rank 1 tensor is a vector

how to create the X features?

how to create the coefficients or the weights?

how to plot the x and y (ignoring x_2 as it is just 1)?

what about matplotlib?

how to create MSE function?

how to do scatter plot?

how to do Gradient Descent?

how to calculate derivative with Pytorch?

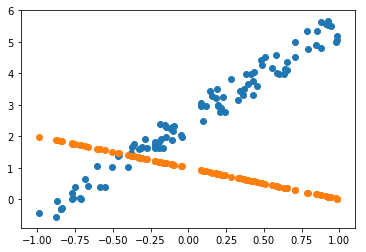

为什么需要学习率

why do we need learning rate at all?

101:10-105:47

why do we need learning rate at all

- derivative tells us direction and how much

- but it may not best reduce the loss

- we need learning rate to help get loss down appropriately

为什么小批量让训练更高效

why mini-batches makes training more efficient?

108:09-109:49

why mini-batches makes training more efficient

新学到的词汇

What are the new vocab learnt?

109:49-111:43

What are the new vocal learnt?

Learning rate

epoch: too many epochs, easily overfit

mini batch: more efficient than full batch training

SGD : GD with mini-batch

Model/Architecture: y = x@a, Resnet34, matrix product

parameters: weights

loss function

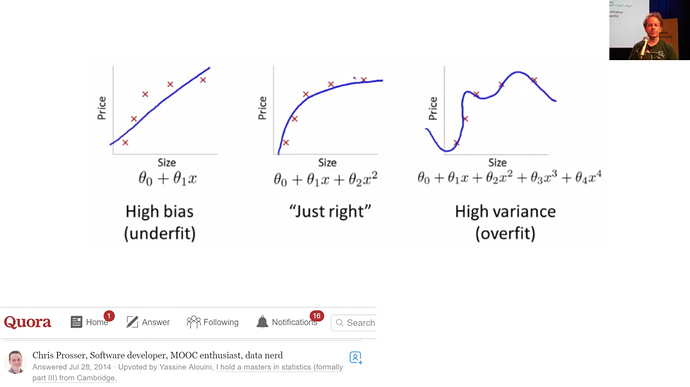

什么是过拟合,正则化与验证集

what is overfitting and regularization and validation set

114:43-end

what is overfitting and regularization and validation set

- what is training dataset on the graph?

- which model/graph is underfitting the training set?

- doing bad, having worse loss

- which model/graph is overfitting the training set?

- doing good, having low loss

- both are different from the right model

- both have bad loss on new/validation dataset

- false assumption

- more parameters -> overfitting

- less parameters -> underfitting

- truth

- overfitting and underfitting -> nothing to do with parameter number

- boss and org

- training set can tell underfitting from overfitting and ok models

- validation set can differ overfitting model from OK model

- use validation set from being sold snake oil

- further study

- Rachel’s blog post

- Rachel’s courses

第一课 你的宠物

本Nb的目的

the purpose of this Nb

In this lesson we will build our first image classifier from scratch, and see if we can achieve world-class results. Let’s dive in!

三行Jupyter notebook魔法代码

three lines of magics

Every notebook starts with the following three lines; they ensure that any edits to libraries you make are reloaded here automatically, and also that any charts or images displayed are shown in this notebook.

%reload_ext autoreload

%autoreload 2

%matplotlib inline

fastai如何使用import

how fastai designs import

We import all the necessary packages. We are going to work with the fastai V1 library which sits on top of Pytorch 1.0. The fastai library provides many useful functions that enable us to quickly and easily build neural networks and train our models.

如何快速调用我们所需的一切

import everything we need

from fastai.vision import *

from fastai.metrics import error_rate

如何解决内存不够问题?

how to handle out of memory problem?

If you’re using a computer with an unusually small GPU, you may get an out of memory error when running this notebook. If this happens, click Kernel->Restart, uncomment the 2nd line below to use a smaller batch size (you’ll learn all about what this means during the course), and try again.

设置小批量大小

set batch_size

bs = 64

# bs = 16 # uncomment this line if you run out of memory even after clicking Kernel->Restart

Looking at the data

关于Pets数据集

What Pets dataset is about?

We are going to use the Oxford-IIIT Pet Dataset by O. M. Parkhi et al., 2012 which features 12 cat breeds and 25 dogs breeds. Our model will need to learn to differentiate between these 37 distinct categories. According to their paper, the best accuracy they could get in 2012 was 59.21%, using a complex model that was specific to pet detection, with separate “Image”, “Head”, and “Body” models for the pet photos. Let’s see how accurate we can be using deep learning!

We are going to use the untar_data function to which we must pass a URL as an argument and which will download and extract the data.

如何查看函数文档帮助

How to get docs

help(untar_data)

Help on function untar_data in module fastai.datasets:

untar_data(url: str, fname: Union[pathlib.Path, str] = None, dest: Union[pathlib.Path, str] = None, data=True, force_download=False) -> pathlib.Path

Download `url` to `fname` if it doesn't exist, and un-tgz to folder `dest`.

fastai如何下载数据集

how fastai get dataset

path = untar_data(URLs.PETS); path

PosixPath('/home/ubuntu/.fastai/data/oxford-iiit-pet')

如何快捷查看文件夹内容

how to see inside a folder

path.ls()

[PosixPath('/home/ubuntu/.fastai/data/oxford-iiit-pet/images'),

PosixPath('/home/ubuntu/.fastai/data/oxford-iiit-pet/annotations')]

如何快速查看子文件夹

how to build path to sub-folders

path_anno = path/'annotations'

path_img = path/'images'

怎样才算查看数据

what does it mean to look at the data

The first thing we do when we approach a problem is to take a look at the data. We always need to understand very well what the problem is and what the data looks like before we can figure out how to solve it. Taking a look at the data means understanding how the data directories are structured, what the labels are and what some sample images look like.

处理数据集的难点是获取标注

getting labels is the key of handling dataset

The main difference between the handling of image classification datasets is the way labels are stored. In this particular dataset, labels are stored in the filenames themselves. We will need to extract them to be able to classify the images into the correct categories. Fortunately, the fastai library has a handy function made exactly for this, ImageDataBunch.from_name_re gets the labels from the filenames using a regular expression.

如何将文件夹中文件转化为文件地址列表

turn files inside a folder into a list of path objects

fnames = get_image_files(path_img)

fnames[:5]

[PosixPath('/home/ubuntu/.fastai/data/oxford-iiit-pet/images/saint_bernard_188.jpg'),

PosixPath('/home/ubuntu/.fastai/data/oxford-iiit-pet/images/staffordshire_bull_terrier_114.jpg'),

PosixPath('/home/ubuntu/.fastai/data/oxford-iiit-pet/images/Persian_144.jpg'),

PosixPath('/home/ubuntu/.fastai/data/oxford-iiit-pet/images/Maine_Coon_268.jpg'),

PosixPath('/home/ubuntu/.fastai/data/oxford-iiit-pet/images/newfoundland_95.jpg')]

如何确保验证集一致性?

how to make sure the same validation set?

np.random.seed(2)

pat = r'/([^/]+)_\d+.jpg$'

如何从regular expression 创建ImageDataBunch

how to create an ImageDataBunch from re

data = ImageDataBunch.from_name_re(path_img,

fnames,

pat,

ds_tfms=get_transforms(),

size=224,

bs=bs

).normalize(imagenet_stats)

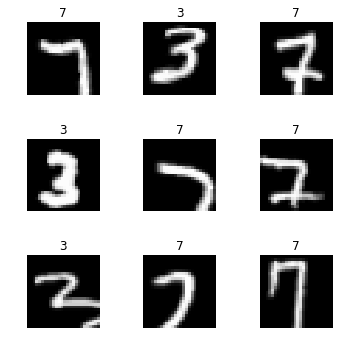

打印图片和标注

print out images with labels

data.show_batch(rows=3, figsize=(7,6))

打印类别和c

print out all classes and c

print(data.classes)

len(data.classes),data.c

['Abyssinian', 'Bengal', 'Birman', 'Bombay', 'British_Shorthair', 'Egyptian_Mau', 'Maine_Coon', 'Persian', 'Ragdoll', 'Russian_Blue', 'Siamese', 'Sphynx', 'american_bulldog', 'american_pit_bull_terrier', 'basset_hound', 'beagle', 'boxer', 'chihuahua', 'english_cocker_spaniel', 'english_setter', 'german_shorthaired', 'great_pyrenees', 'havanese', 'japanese_chin', 'keeshond', 'leonberger', 'miniature_pinscher', 'newfoundland', 'pomeranian', 'pug', 'saint_bernard', 'samoyed', 'scottish_terrier', 'shiba_inu', 'staffordshire_bull_terrier', 'wheaten_terrier', 'yorkshire_terrier']

(37, 37)

Training: resnet34

迁移学习大概模样?

what is transfer learning like?

Now we will start training our model. We will use a convolutional neural network backbone and a fully connected head with a single hidden layer as a classifier. Don’t know what these things mean? Not to worry, we will dive deeper in the coming lessons. For the moment you need to know that we are building a model which will take images as input and will output the predicted probability for each of the categories (in this case, it will have 37 outputs).

We will train for 4 epochs (4 cycles through all our data).

如何做CNN迁移学习

how to create a CNN model as transfer learning

learn = create_cnn(data, models.resnet34, metrics=error_rate)

如何查看模型结构

how to see the structure of model

learn.model

Sequential(

(0): Sequential(

(0): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3), bias=False)

(1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU(inplace)

(3): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)

(4): Sequential(

(0): BasicBlock(

(conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(1): BasicBlock(

(conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(2): BasicBlock(

(conv1): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(64, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(5): Sequential(

(0): BasicBlock(

(conv1): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(64, 128, kernel_size=(1, 1), stride=(2, 2), bias=False)

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(2): BasicBlock(

(conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(3): BasicBlock(

(conv1): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(6): Sequential(

(0): BasicBlock(

(conv1): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(128, 256, kernel_size=(1, 1), stride=(2, 2), bias=False)

(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(2): BasicBlock(

(conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(3): BasicBlock(

(conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(4): BasicBlock(

(conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(5): BasicBlock(

(conv1): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(7): Sequential(

(0): BasicBlock(

(conv1): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(downsample): Sequential(

(0): Conv2d(256, 512, kernel_size=(1, 1), stride=(2, 2), bias=False)

(1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(1): BasicBlock(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(2): BasicBlock(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(relu): ReLU(inplace)

(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

)

(1): Sequential(

(0): AdaptiveConcatPool2d(

(ap): AdaptiveAvgPool2d(output_size=1)

(mp): AdaptiveMaxPool2d(output_size=1)

)

(1): Flatten()

(2): BatchNorm1d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(3): Dropout(p=0.25)

(4): Linear(in_features=1024, out_features=512, bias=True)

(5): ReLU(inplace)

(6): BatchNorm1d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(7): Dropout(p=0.5)

(8): Linear(in_features=512, out_features=37, bias=True)

)

)

如何用最优默认值训练模型

how to fit the model with the best default setting

learn.fit_one_cycle(4)

Total time: 01:46

| epoch | train_loss | valid_loss | error_rate |

|---|---|---|---|

| 1 | 1.409939 | 0.357608 | 0.102165 |

| 2 | 0.539408 | 0.242496 | 0.073072 |

| 3 | 0.340212 | 0.221338 | 0.066306 |

| 4 | 0.261859 | 0.216619 | 0.071042 |

如何保存模型

how to save a model

learn.save('stage-1')

Results

如何判断模型是否工作正常?

how do we know our model is working correctly or reasonably or not?

Let’s see what results we have got.

We will first see which were the categories that the model most confused with one another. We will try to see if what the model predicted was reasonable or not. In this case the mistakes look reasonable (none of the mistakes seems obviously naive). This is an indicator that our classifier is working correctly.

confusion matrix能告诉我们什么?

what can confusion matrix tell us?

Furthermore, when we plot the confusion matrix, we can see that the distribution is heavily skewed: the model makes the same mistakes over and over again but it rarely confuses other categories. This suggests that it just finds it difficult to distinguish some specific categories between each other; this is normal behaviour.

如何获取最大损失值对应照片的序号和损失值

how to access the idx and losses of the images with the top losses

interp = ClassificationInterpretation.from_learner(learn)

losses,idxs = interp.top_losses()

len(data.valid_ds)==len(losses)==len(idxs)

True

如何查看漂亮的docs

how to print out docs nicely

doc(interp.plot_top_losses)

如何画confusion matrix

how to plot confusion matrix

interp.plot_confusion_matrix(figsize=(12,12), dpi=60)

如何打印出最易出错的类别和它们的计数

how to print out the most confused categories and count errors

interp.most_confused(min_val=2)

[('British_Shorthair', 'Russian_Blue', 5),

('Ragdoll', 'Birman', 5),

('staffordshire_bull_terrier', 'american_pit_bull_terrier', 5),

('Birman', 'Ragdoll', 3),

('Birman', 'Siamese', 3),

('Persian', 'Maine_Coon', 3),

('Persian', 'Ragdoll', 3),

('Russian_Blue', 'British_Shorthair', 3),

('american_bulldog', 'american_pit_bull_terrier', 3),

('american_pit_bull_terrier', 'staffordshire_bull_terrier', 3),

('chihuahua', 'miniature_pinscher', 3)]

Unfreezing, fine-tuning, and learning rates

什么时候解冻模型?

when to unfreeze the model?

Since our model is working as we expect it to, we will unfreeze our model and train some more.

如何解冻模型?

how to unfreeze the model?

learn.unfreeze()

如何训练一次

how to fit for one epoch

learn.fit_one_cycle(1)

Total time: 00:26

| epoch | train_loss | valid_loss | error_rate |

|---|---|---|---|

| 1 | 0.558166 | 0.314579 | 0.101489 |

如何保存模型

how to save the model

learn.load('stage-1');

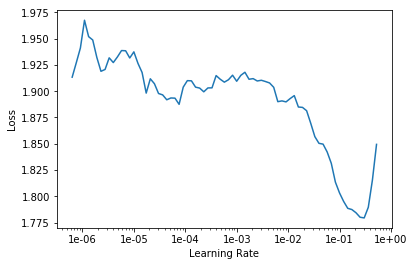

如何在一个范围里探索学习率对损失值影响?

how to explore lr within a range for lower loss?

learn.lr_find()

LR Finder is complete, type {learner_name}.recorder.plot() to see the graph.

如何对学习率损失值作图以及解读最佳区间?

how to plot the loss-lr graph and read the best range?

learn.recorder.plot()

如何解冻模型并用学习率区间训练数次

how to unfreeze model and fit with a specific range of lr with epochs

learn.unfreeze()

learn.fit_one_cycle(2, max_lr=slice(1e-6,1e-4))

Total time: 00:53

| epoch | train_loss | valid_loss | error_rate |

|---|---|---|---|

| 1 | 0.242544 | 0.208489 | 0.067659 |

| 2 | 0.206940 | 0.204482 | 0.062246 |

That’s a pretty accurate model!

Training: resnet50

what is the difference between resnet34 and resnet50

what is the difference between resnet34 and resnet50

Now we will train in the same way as before but with one caveat: instead of using resnet34 as our backbone we will use resnet50 (resnet34 is a 34 layer residual network while resnet50 has 50 layers. It will be explained later in the course and you can learn the details in the resnet paper).

why use larger model and image to train with smaller batch size?

why use larger model and image to train with smaller batch size?

Basically, resnet50 usually performs better because it is a deeper network with more parameters. Let’s see if we can achieve a higher performance here. To help it along, let’s us use larger images too, since that way the network can see more detail. We reduce the batch size a bit since otherwise this larger network will require more GPU memory.

how to create an ImageDatabunch with re and setting image size and batch size?

how to create an ImageDatabunch with re and setting image size and batch size?

data = ImageDataBunch.from_name_re(path_img,

fnames,

pat,

ds_tfms=get_transforms(),

size=299,

bs=bs//2).normalize(imagenet_stats)

how to create an CNN model with this data?

how to create an CNN model with this data?

learn = create_cnn(data, models.resnet50, metrics=error_rate)

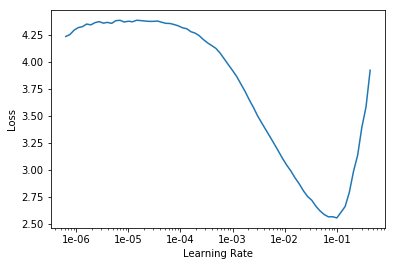

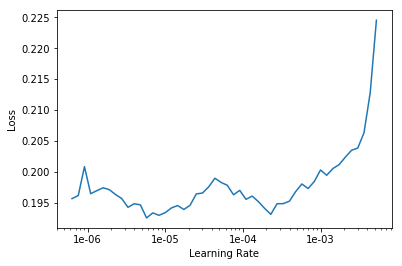

find and plot the loss-lr relation

find and plot the loss-lr relation

learn.lr_find()

learn.recorder.plot()

LR Finder complete, type {learner_name}.recorder.plot() to see the graph.

how to fit the model 8 epochs

how to fit the model 8 epochs

learn.fit_one_cycle(8)

Total time: 06:59

epoch train_loss valid_loss error_rate

1 0.548006 0.268912 0.076455 (00:57)

2 0.365533 0.193667 0.064953 (00:51)

3 0.336032 0.211020 0.073072 (00:51)

4 0.263173 0.212025 0.060893 (00:51)

5 0.217016 0.183195 0.063599 (00:51)

6 0.161002 0.167274 0.048038 (00:51)

7 0.086668 0.143490 0.044655 (00:51)

8 0.082288 0.154927 0.046008 (00:51)

how to save the model with a different name

how to save the model with a different name

learn.save('stage-1-50')

It’s astonishing that it’s possible to recognize pet breeds so accurately! Let’s see if full fine-tuning helps:

how to unfreeze and fit with a specific range for 3 epochs

how to unfreeze and fit with a specific range for 3 epochs

learn.unfreeze()

learn.fit_one_cycle(3, max_lr=slice(1e-6,1e-4))

Total time: 03:27

epoch train_loss valid_loss error_rate

1 0.097319 0.155017 0.048038 (01:10)

2 0.074885 0.144853 0.044655 (01:08)

3 0.063509 0.144917 0.043978 (01:08)

how to go back to previous model

how to go back to previous model

learn.load('stage-1-50');

how to get classification model interpretor

how to get classification model interpretor

interp = ClassificationInterpretation.from_learner(learn)

how to print out the most confused categories with minimum count

how to print out the most confused categories with minimum count

interp.most_confused(min_val=2)

[('american_pit_bull_terrier', 'staffordshire_bull_terrier', 6),

('Bengal', 'Egyptian_Mau', 5),

('Bengal', 'Abyssinian', 4),

('boxer', 'american_bulldog', 4),

('Ragdoll', 'Birman', 4),

('Egyptian_Mau', 'Bengal', 3)]

Other data formats

how to get MNIST_SAMPLE dataset

how to get MNIST_SAMPLE dataset

path = untar_data(URLs.MNIST_SAMPLE); path

PosixPath('/home/ubuntu/course-v3/nbs/dl1/data/mnist_sample')

how to set flip false for transformation

how to set flip false for transformation

tfms = get_transforms(do_flip=False)

how to create ImageDataBunch from folders

how to create ImageDataBunch from folders

data = ImageDataBunch.from_folder(path, ds_tfms=tfms, size=26)

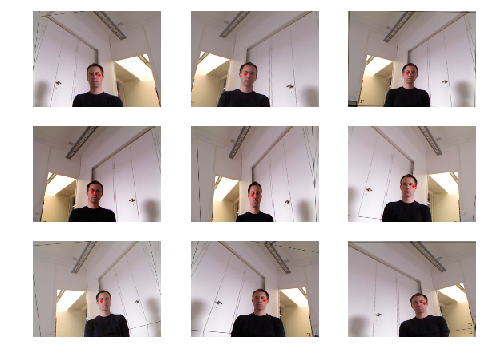

how to print out image examples from a batch

how to print out image examples from a batch

data.show_batch(rows=3, figsize=(5,5))

how to create a cnn with resnet18 and accuracy as metrics

how to create a cnn with resnet18 and accuracy as metrics

learn = create_cnn(data, models.resnet18, metrics=accuracy)

how to fit 2 epocs

how to fit 2 epocs

learn.fit(2)

Total time: 00:23

epoch train_loss valid_loss accuracy

1 0.116117 0.029745 0.991168 (00:12)

2 0.056860 0.015974 0.994603 (00:10)

how to read a csv with pd

how to read a csv with pd

df = pd.read_csv(path/'labels.csv')

how to read first 5 lines

how to read first 5 lines

df.head()

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| name | label | |

|---|---|---|

| 0 | train/3/7463.png | 0 |

| 1 | train/3/21102.png | 0 |

| 2 | train/3/31559.png | 0 |

| 3 | train/3/46882.png | 0 |

| 4 | train/3/26209.png | 0 |

how to create ImageDataBunch with csv

how to create ImageDataBunch with csv

data = ImageDataBunch.from_csv(path, ds_tfms=tfms, size=28)

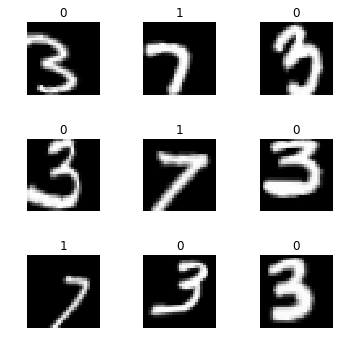

how to print out images from a batch and classes

how to print out images from a batch and classes

data.show_batch(rows=3, figsize=(5,5))

data.classes

[0, 1]

how to create ImageDataBunch from df

how to create ImageDataBunch from df

data = ImageDataBunch.from_df(path, df, ds_tfms=tfms, size=24)

data.classes

[0, 1]

how to create file path object into a list from df

how to create file path object into a list from df

fn_paths = [path/name for name in df['name']]; fn_paths[:2]

[PosixPath('/home/ubuntu/course-v3/nbs/dl1/data/mnist_sample/train/3/7463.png'),

PosixPath('/home/ubuntu/course-v3/nbs/dl1/data/mnist_sample/train/3/21102.png')]

how to create ImageDataBunch from re

how to create ImageDataBunch from re

pat = r"/(\d)/\d+\.png$"

data = ImageDataBunch.from_name_re(path, fn_paths, pat=pat, ds_tfms=tfms, size=24)

data.classes

['3', '7']

how to create ImageDataBunch from name function with file path list

how to create ImageDataBunch from name function with file path list

data = ImageDataBunch.from_name_func(path, fn_paths, ds_tfms=tfms, size=24,

label_func = lambda x: '3' if '/3/' in str(x) else '7')

data.classes

['3', '7']

how to create a list of labels from list of file path

how to create a list of labels from list of file path

labels = [('3' if '/3/' in str(x) else '7') for x in fn_paths]

labels[:5]

['3', '3', '3', '3', '3']

how to create an ImageDataBunch from list

how to create an ImageDataBunch from list

data = ImageDataBunch.from_lists(path, fn_paths, labels=labels, ds_tfms=tfms, size=24)

data.classes

['3', '7']

如何创建属于你自己的图片数据集

Creating your own dataset from Google Images

Nb的目的

Nb的目的

by: Francisco Ingham and Jeremy Howard. Inspired by Adrian Rosebrock

In this tutorial we will see how to easily create an image dataset through Google Images. Note: You will have to repeat these steps for any new category you want to Google (e.g once for dogs and once for cats).

仅需的library

仅需的library

from fastai.vision import *

Get a list of URLs

如何精确搜索

如何精确搜索

Search and scroll

Go to Google Images and search for the images you are interested in. The more specific you are in your Google Search, the better the results and the less manual pruning you will have to do.

Scroll down until you’ve seen all the images you want to download, or until you see a button that says ‘Show more results’. All the images you scrolled past are now available to download. To get more, click on the button, and continue scrolling. The maximum number of images Google Images shows is 700.

It is a good idea to put things you want to exclude into the search query, for instance if you are searching for the Eurasian wolf, “canis lupus lupus”, it might be a good idea to exclude other variants:

"canis lupus lupus" -dog -arctos -familiaris -baileyi -occidentalis

You can also limit your results to show only photos by clicking on Tools and selecting Photos from the Type dropdown.

如何下载图片的链接

如何下载图片的链接

Download into file

Now you must run some Javascript code in your browser which will save the URLs of all the images you want for you dataset.

Press CtrlShiftJ in Windows/Linux and CmdOptJ in Mac, and a small window the javascript ‘Console’ will appear. That is where you will paste the JavaScript commands.

You will need to get the urls of each of the images. You can do this by running the following commands:

urls = Array.from(document.querySelectorAll('.rg_di .rg_meta')).map(el=>JSON.parse(el.textContent).ou);

window.open('data:text/csv;charset=utf-8,' + escape(urls.join('\n')));

创建文件夹并上传链接文本到云端

创建文件夹并上传链接文本到云端

Create directory and upload urls file into your server

Choose an appropriate name for your labeled images. You can run these steps multiple times to create different labels.

一个类别,一个文件夹,一个链接文本

folder = 'black'

file = 'urls_black.txt'

folder = 'teddys'

file = 'urls_teddys.txt'

folder = 'grizzly'

file = 'urls_grizzly.txt'

You will need to run this cell once per each category.

下面这个Cell,每个类别运行一次

创建子文件夹

创建子文件夹

path = Path('data/bears')

dest = path/folder

dest.mkdir(parents=True, exist_ok=True)

查看文件夹内部

查看文件夹内部

path.ls()

[PosixPath('data/bears/urls_teddy.txt'),

PosixPath('data/bears/black'),

PosixPath('data/bears/urls_grizzly.txt'),

PosixPath('data/bears/urls_black.txt')]

Finally, upload your urls file. You just need to press ‘Upload’ in your working directory and select your file, then click ‘Upload’ for each of the displayed files.

通过云端的Nb’upload’来上传

Download images

如何下载图片并设置下载数量上限

如何下载图片并设置下载数量上限

Now you will need to download your images from their respective urls.

fast.ai has a function that allows you to do just that. You just have to specify the urls filename as well as the destination folder and this function will download and save all images that can be opened. If they have some problem in being opened, they will not be saved.

Let’s download our images! Notice you can choose a maximum number of images to be downloaded. In this case we will not download all the urls.

You will need to run this line once for every category.

classes = ['teddys','grizzly','black']

download_images(path/file, dest, max_pics=200)

下载出问题的处理方法

下载出问题的处理方法

# If you have problems download, try with `max_workers=0` to see exceptions:

download_images(path/file, dest, max_pics=20, max_workers=0)

如何删除无法打开的图片

如何删除无法打开的图片

Then we can remove any images that can’t be opened:

for c in classes:

print(c)

verify_images(path/c, delete=True, max_size=500)

View data

从一个文件夹生成DataBunch

从一个文件夹生成DataBunch

np.random.seed(42)

data = ImageDataBunch.from_folder(path, train=".", valid_pct=0.2,

ds_tfms=get_transforms(), size=224, num_workers=4).normalize(imagenet_stats)

用CSV文件协助生成DataBunch

用CSV文件协助生成DataBunch

# If you already cleaned your data, run this cell instead of the one before

# np.random.seed(42)

# data = ImageDataBunch.from_csv(".", folder=".", valid_pct=0.2, csv_labels='cleaned.csv',

# ds_tfms=get_transforms(), size=224, num_workers=4).normalize(imagenet_stats)

Good! Let’s take a look at some of our pictures then.

查看类别

查看类别

data.classes

['black', 'grizzly', 'teddys']

查看类别,训练集和验证集的数量

查看类别,训练集和验证集的数量

data.classes, data.c, len(data.train_ds), len(data.valid_ds)

(['black', 'grizzly', 'teddys'], 3, 448, 111)

Train model

创建基于Resnet34的CNN模型

创建基于Resnet34的CNN模型

learn = create_cnn(data, models.resnet34, metrics=error_rate)

用默认参数训练4次

用默认参数训练4次

learn.fit_one_cycle(4)

保存模型

保存模型

learn.save('stage-1')

解冻模型

解冻模型

learn.unfreeze()

当前寻找最优学习率

当前寻找最优学习率

learn.lr_find()

对学习率和损失值作图

对学习率和损失值作图

learn.recorder.plot()

用学习率区间训练2次

用学习率区间训练2次

learn.fit_one_cycle(2, max_lr=slice(3e-5,3e-4))

保存模型

保存模型

learn.save('stage-2')

Interpretation 解读

加载模型

加载模型

learn.load('stage-2');

生成分类器解读器

生成分类器解读器

interp = ClassificationInterpretation.from_learner(learn)

对confusion matrix 作图

对confusion matrix 作图

interp.plot_confusion_matrix()

Cleaning Up

调用widget

调用widget

Some of our top losses aren’t due to bad performance by our model. There are images in our data set that shouldn’t be.

有些高损失值是因为错误标注造成的。

Using the ImageCleaner widget from fastai.widgets we can prune our top losses, removing photos that don’t belong.

ImageCleaner可以帮助找出和清除这些图片

from fastai.widgets import *

如何获取高损失值图片的图片数据和序号

如何获取高损失值图片的图片数据和序号

First we need to get the file paths from our top_losses. We can do this with .from_toplosses. We then feed the top losses indexes and corresponding dataset to ImageCleaner.

Notice that the widget will not delete images directly from disk but it will create a new csv file cleaned.csv from where you can create a new ImageDataBunch with the corrected labels to continue training your model.

ds, idxs = DatasetFormatter().from_toplosses(learn, ds_type=DatasetType.Valid)

用`ImageCleaner`生成这些图片以便清除

用ImageCleaner生成这些图片以便清除

ImageCleaner(ds, idxs, path)

'No images to show :)'

Flag photos for deletion by clicking ‘Delete’. Then click ‘Next Batch’ to delete flagged photos and keep the rest in that row. ImageCleaner will show you a new row of images until there are no more to show. In this case, the widget will show you images until there are none left from top_losses.ImageCleaner(ds, idxs)

找出相似图片的图片数据和序号

找出相似图片的图片数据和序号

You can also find duplicates in your dataset and delete them! To do this, you need to run .from_similars to get the potential duplicates’ ids and then run ImageCleaner with duplicates=True. The API works in a similar way as with misclassified images: just choose the ones you want to delete and click ‘Next Batch’ until there are no more images left.

ds, idxs = DatasetFormatter().from_similars(learn, ds_type=DatasetType.Valid)

清除相似图片

清除相似图片

ImageCleaner(ds, idxs, path, duplicates=True)

'No images to show :)'

记住用新生成的CSV来生成DataBunch(不含清除的图片)

记住用新生成的CSV来生成DataBunch(不含清除的图片)

Remember to recreate your ImageDataBunch from your cleaned.csv to include the changes you made in your data!

Putting your model in production 创建网页 APP

为量产生成模型包

为量产生成模型包

First thing first, let’s export the content of our Learner object for production:

learn.export()

This will create a file named ‘export.pkl’ in the directory where we were working that contains everything we need to deploy our model (the model, the weights but also some metadata like the classes or the transforms/normalization used).

使用CPU来运行模型

使用CPU来运行模型

You probably want to use CPU for inference, except at massive scale (and you almost certainly don’t need to train in real-time). If you don’t have a GPU that happens automatically. You can test your model on CPU like so:

defaults.device = torch.device('cpu')

打开一张图片

打开一张图片

img = open_image(path/'black'/'00000021.jpg')

img

如何将export.pkl生成模型

如何将export.pkl生成模型

We create our Learner in production enviromnent like this, jsut make sure that path contains the file ‘export.pkl’ from before.

learn = load_learner(path)

如何用模型来预测(生成预测类别,类别序号,预测值)

如何用模型来预测(生成预测类别,类别序号,预测值)

pred_class,pred_idx,outputs = learn.predict(img)

pred_class

Category black

Starlette核心代码

Starlette核心代码

So you might create a route something like this (thanks to Simon Willison for the structure of this code):

@app.route("/classify-url", methods=["GET"])

async def classify_url(request):

bytes = await get_bytes(request.query_params["url"])

img = open_image(BytesIO(bytes))

_,_,losses = learner.predict(img)

return JSONResponse({

"predictions": sorted(

zip(cat_learner.data.classes, map(float, losses)),

key=lambda p: p[1],

reverse=True

)

})

(This example is for the Starlette web app toolkit.)

Things that can go wrong

多数时候我们仅需要调试epochs和学习率

多数时候我们仅需要调试epochs和学习率

- Most of the time things will train fine with the defaults

- There’s not much you really need to tune (despite what you’ve heard!)

- Most likely are

- Learning rate

- Number of epochs

学习率过高会怎样

学习率过高会怎样

Learning rate (LR) too high

learn = create_cnn(data, models.resnet34, metrics=error_rate)

learn.fit_one_cycle(1, max_lr=0.5)

Total time: 00:13

epoch train_loss valid_loss error_rate

1 12.220007 1144188288.000000 0.765957 (00:13)

学习率过低会怎样

学习率过低会怎样

Learning rate (LR) too low

learn = create_cnn(data, models.resnet34, metrics=error_rate)

Previously we had this result:

Total time: 00:57

epoch train_loss valid_loss error_rate

1 1.030236 0.179226 0.028369 (00:14)

2 0.561508 0.055464 0.014184 (00:13)

3 0.396103 0.053801 0.014184 (00:13)

4 0.316883 0.050197 0.021277 (00:15)

learn.fit_one_cycle(5, max_lr=1e-5)

Total time: 01:07

epoch train_loss valid_loss error_rate

1 1.349151 1.062807 0.609929 (00:13)

2 1.373262 1.045115 0.546099 (00:13)

3 1.346169 1.006288 0.468085 (00:13)

4 1.334486 0.978713 0.453901 (00:13)

5 1.320978 0.978108 0.446809 (00:13)

learn.recorder.plot_losses()

As well as taking a really long time, it’s getting too many looks at each image, so may overfit.

训练太少会怎样

训练太少会怎样

Too few epochs

learn = create_cnn(data, models.resnet34, metrics=error_rate, pretrained=False)

learn.fit_one_cycle(1)

Total time: 00:14

epoch train_loss valid_loss error_rate

1 0.602823 0.119616 0.049645 (00:14)

训练太多会怎样

训练太多会怎样

Too many epochs

np.random.seed(42)

data = ImageDataBunch.from_folder(path, train=".", valid_pct=0.9, bs=32,

ds_tfms=get_transforms(do_flip=False, max_rotate=0, max_zoom=1, max_lighting=0, max_warp=0

),size=224, num_workers=4).normalize(imagenet_stats)

learn = create_cnn(data, models.resnet50, metrics=error_rate, ps=0, wd=0)

learn.unfreeze()

learn.fit_one_cycle(40, slice(1e-6,1e-4))

Total time: 06:39

epoch train_loss valid_loss error_rate

1 1.513021 1.041628 0.507326 (00:13)

2 1.290093 0.994758 0.443223 (00:09)

3 1.185764 0.936145 0.410256 (00:09)

4 1.117229 0.838402 0.322344 (00:09)

5 1.022635 0.734872 0.252747 (00:09)

6 0.951374 0.627288 0.192308 (00:10)

7 0.916111 0.558621 0.184982 (00:09)

8 0.839068 0.503755 0.177656 (00:09)

9 0.749610 0.433475 0.144689 (00:09)

10 0.678583 0.367560 0.124542 (00:09)

11 0.615280 0.327029 0.100733 (00:10)

12 0.558776 0.298989 0.095238 (00:09)

13 0.518109 0.266998 0.084249 (00:09)

14 0.476290 0.257858 0.084249 (00:09)

15 0.436865 0.227299 0.067766 (00:09)

16 0.457189 0.236593 0.078755 (00:10)

17 0.420905 0.240185 0.080586 (00:10)

18 0.395686 0.255465 0.082418 (00:09)

19 0.373232 0.263469 0.080586 (00:09)

20 0.348988 0.258300 0.080586 (00:10)

21 0.324616 0.261346 0.080586 (00:09)

22 0.311310 0.236431 0.071429 (00:09)

23 0.328342 0.245841 0.069597 (00:10)

24 0.306411 0.235111 0.064103 (00:10)

25 0.289134 0.227465 0.069597 (00:09)

26 0.284814 0.226022 0.064103 (00:09)

27 0.268398 0.222791 0.067766 (00:09)

28 0.255431 0.227751 0.073260 (00:10)

29 0.240742 0.235949 0.071429 (00:09)

30 0.227140 0.225221 0.075092 (00:09)

31 0.213877 0.214789 0.069597 (00:09)

32 0.201631 0.209382 0.062271 (00:10)

33 0.189988 0.210684 0.065934 (00:09)

34 0.181293 0.214666 0.073260 (00:09)

35 0.184095 0.222575 0.073260 (00:09)

36 0.194615 0.229198 0.076923 (00:10)

37 0.186165 0.218206 0.075092 (00:09)

38 0.176623 0.207198 0.062271 (00:10)

39 0.166854 0.207256 0.065934 (00:10)

40 0.162692 0.206044 0.062271 (00:09)

Lesson 3 multi-label and segmentation

介绍吴恩达和fastai机器学习课程

Intro Andrew Ng and Fastai ML courses

Intro Andrew Ng and Fastai ML courses

- what special about fastai ML course?

- why should take both Ng and fastai ML courses?

用Zeit实现Web App

Deploy your model on Zeit

Deploy your model on Zeit

- just a page instruction

- free and easy

学员第二周项目展示

Student Projects deployed online

3:30-9:20

Student Projects deployed online 170-418

- what car is that by Edward Ross

- build the app help understand the model better

- no need to use mobile NN api

- Healthy or Not!

- [image:104C2ABE-D1CF-440B-A38B-6BE5569D516B-86291-000381CF60EFD424/26B26097-13E0-4526-95CD-64EBD0D52680.png]

- Trinidad and Tobago Hummingbird classifier

- [image:ADB4E47E-BFF5-4065-9471-E6AAE95CF2B8-86291-000381DF9FE0D786/2A47E6C9-ABAB-4FD9-B329-02DDE21DBD83.png]

- Check your mushroom

- [image:A2CAB546-E926-4BEF-B0FE-A97CA8C59C8E-86291-000381EE4799B078/0866584B-7A59-49B8-9718-CA9CC3D1881E.png]

- cousin classifier

- [image:0A3510E0-A979-43EE-A560-AFD9E5D0CD98-86291-000381F869D4F914/CFBB57C7-1ED6-4D19-9D63-2DD2A480C3BD.png]

- emotion detector and classifier

- [image:CDC2A15D-C402-4533-A51C-A572060A2AD6-86291-000382017E9B9B81/9AF78E81-7344-49B1-894A-F81033F7BFD6.png]

- sign language detector

- [image:29705796-EDB2-4EA3-B67F-34E1A272AD0B-86291-0003820E0154E0B5/3106750F-8A8D-4414-9910-780AB4DE4411.png]

- your city detector

- [image:ACB64105-9676-4D10-A7ED-A3CA094E4462-86291-0003821CB4217822/D18076AA-FA7F-4F10-A674-CA84BA810373.png]

- time series classification

- [image:877696BC-C47D-4AB7-AE0D-05205B9BCA85-86291-00038228FFA7EFC0/4738255D-E810-4830-84C7-D34109F22E69.png]

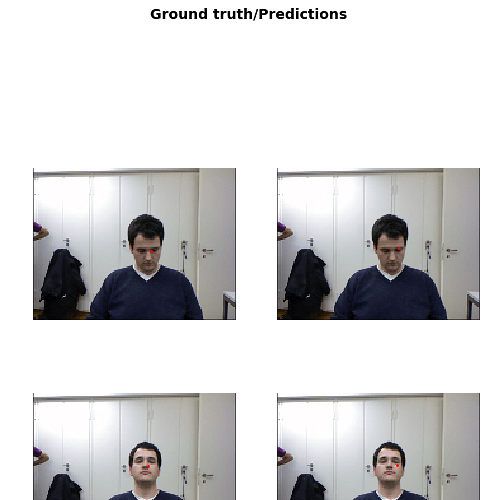

- facial emotion classification

- [image:1647B963-2CAC-406D-8EA5-B8C8E41431D8-86291-00038355D164F364/F9A95BBA-6683-4E15-8757-A798FC5258A0.png]

- tumor sequencing

- [image:D0B6EDB8-9781-4C0E-BE11-AE5E9CF49EDA-86291-0003835CAE96793E/6E587C7F-FD7C-43E8-B06F-EDE585B8007C.png]

- [Face Expression Recognition with fastai v1 – Pierre Guillou – Medium](https://medium.com/@pierre_guillou/face-expression-recognition-with-fastai-v1-dc4cf6b141a3)

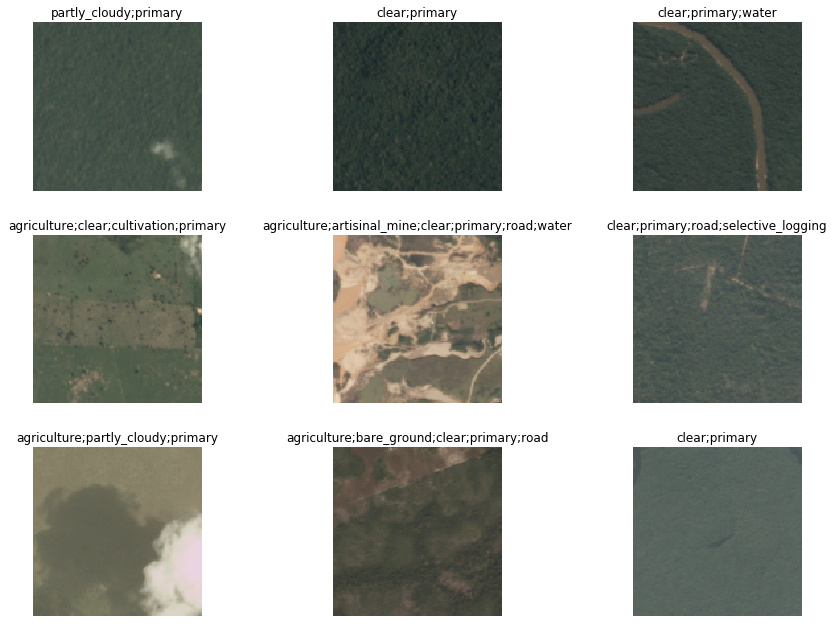

介绍卫星图片集

Introduction to Satellite Imaging dataset

Introduction to Satellite Imaging dataset 9:20-11:02 418-500

9:20-11:02

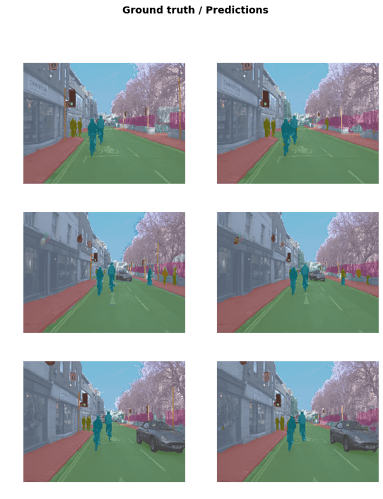

- check out the image examples and labels

- what is multi-label classification?

如何下载kaggle 数据集

how to download dataset from Kaggle

11:00-14:56

How to download dataset from Kaggle

- What is Kaggle and why it is good?

- How to download dataset from Kaggle?

- How to comment, reverse comment?

- use the notebook to guide the process of downloading

- how to download with 7zip format?

- how to unzip 7zip file?

介绍data block api

Introduction to data block API

14:56-18:28

Introduction to data block API

- Note the dataset is images with multiple labels

- how to read csv with pandas?

- what data object we use for modeling?

- previously what was the trickiest step of deep learning?

- how to create more flexible ways to create your DataBunch, instead of factory method?

- What is data block api and how does it work in general?

介绍 Dataset, DataLoader, DataBunch

Introduction to Dataset, DataLoader, DataBunch

18:30-24:00

Introduction to Dataset, DataLoader, DataBunch

- What is Dataset class?

- what does __getitem__ and __len__ do?

- How to use DataLoader to handle mini-batch?

- How to validate model with DataBunch?

- How to link all the above together through data block api?

用api Nb学习data block 用法

Explore data block api notebook

Explore data block api notebook

24:00-30:20

- use data block api notebook to play with the functions and tiny version of dataset

- a lot of skills to dig into (a lot of questions can be further created)

如何做transformation

How to do transforms

30:11-33:50

How to do transforms

- when and how to pick on flip_vert?

- how to experiment to find out the best values for other parameters?

- what does max_warp do?

- when and how to use it for different dataset?

- building a model procedure is the same as usual

如何设计你的metrics

How to create your own version of metrics

How to create your own version of metrics

30:11-41:08

* what does we use metrics for?

* how to create the accuracy required by Kaggle?

* how does accuracy in fastai source code?

* what does data.c mean?

* why a threshold is needed for satellite dataset accuracy?

* how to create a special version of an accuracy function with specific arg values using partial?

问答:纠错数据,api风格,视频截取

QA on corrected data, data api style, video frames

QA on corrected data, data api style, video frames

42-48:56

* should we record the error from app?

* how to do finetuning with the corrected dataset?

* how we set the learning rate with the corrected dataset?

* should data block api be in certain order?

* where does the idea of data block come from?

* how to dig into the details of data block source code?

* what software to pull frames? (web api, opencv)

如何读取学习率作图

How to pick learning rate carefully?

48:37-50:27

How to pick learning rate carefully

* How to read the lr for fine-tuning?

* How to read the lr for full training?

* What is discriminative learning rate?

如何改进卫星图模型

How to further improve Satellite Imaging model performance

How to further improve CamVid model performance

50:27-56:32

why use smaller images than Kaggle provided for training?

why then larger images to train the model again can avoid overfitting and improve model?

How do we make use the larger images and train model?

- how to change the data with large images?

- how to put the new data into the learner previously trained?

- how to freeze most of the layers of the model and only train the last few layers?

- how to find the best learning rate?

- how to train the model 5 times?

- evetually we move up to top 10%

- How to further improve the performance?

- how to unfreeze to train all the layers?

- how to pick the learning rate to train properly?

- to get into top 20

How to actually do Kaggle competition?

介绍camvid数据集

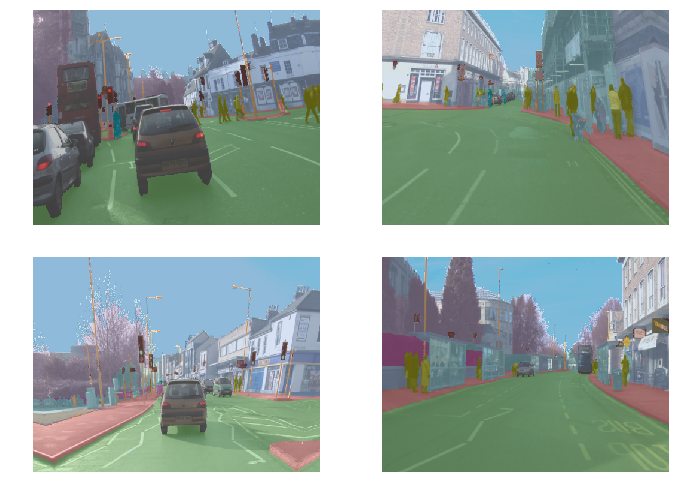

Introducing Camvid dataset

56:24-60:25

Introducing Camvid dataset

* what kind of problem is segmentation?

* What kind of dataset needed for segmentation?

* What industries have such segmentation problems?

* How to cite the datasets to get them credits?

问答:如何解读合适的学习率

QA How to find a specific lr number or range

60:23-63:06

QA How to find a specific lr number or range

- still a bit more artisanal than expected

- require certain experiment

- bottom point value not good

- try numbers x10 smaller and a few more around

- maybe someone will create an auto learning rate finder

如何做图片区域隔离

How to do segmentation modeling

63:06-69:50

How to do segmentation modeling

- How to get data?

- How to take a look at the data?

- how to extract labels for the data?

- How to open image and segmentation image?

- how to create DataBunch and how to set validation dataset?

- how to pick and use classes names?

- how to do transformation for Camvid dataset?

- how to choose batch size?

- how convenient to do show batch for Camvid dataset?

- How to create a learner

- how to find the learning rate

- how to start training, unfreeze and train more

问答:无监督学习和不同图片尺寸训练

QA unsupervised learning and different sized dataset training

69:55-72:32

QA unsupervised learning and different sized dataset training

* can we do unsupervised learning do segmentation?

* cons of unsupervised learning for segmentation

* should we make smaller size dataset to do training?

* great idea and great trick to improve you model

问答:像素隔离所需的准确度算法

QA what kind of accuracy do we use for pixel segmentation?

72:35-75:03

what kind of accuracy do we use for pixel segmentation

why we use acc_camvid rather than accuracy?

what are void pixels?

what are the basic skills you need to create such metrics?

问答:当训练损失值高于验证损失值时怎么办

QA what to do when training loss higher than validation loss?

75:03-76:21