I have a few questions from the class –

net = nn.Sequential( nn.Linear(28*28, 10), nn.LogSoftmax() )

In last non-linear layer, why did we use logsoftmax, not softmax? Weren’t we exponentiating outputs from 2nd last layer so as to make them all +ve ? Why back to log after doing [exp]/[sum of exp].

n.Parameter(torch.randn(*dims)/dims[0])

What is the reason of dividing by dims[0]. I tried and it doesn’t work if we don’t divide by dims[0]. By it doesn’t work, I mean fit() gives loss = nans and very bad accuracy.

Thanks

I just posted the video.

The loss functions in pytorch generally assume you have LogSoftmax, for computational efficiency reasons: Does NLLLoss handle Log-Softmax and Softmax in the same way? - PyTorch Forums

This is He initialization (Initialization Of Deep Networks Case of Rectifiers | DeepGrid) . Although I may have forgotten a sqrt there…

Without careful initialization you’ll get gradient explosion. We discuss this in the DL course.

Helpful links. Thanks

Blogpost on Broadcasting in Pytorch

hi there,

I have a question pertaining to optimizer.zero_grad() . I have gone couple of times over the section where it is explained why do we have to call this function.

I still don’t understand it .

From pytorch forum , i understand unless for the special cases where one wants to simulate bigger batches by accumulating the gradients , one has to invoke optimizer.zero_grad() to clear the grandients for the next batch.

Would like to understand Jeremy explanation though.

The title changed “with” for “in” and the link got broken.

New one.

https://jidindinesh.github.io/2019-02-08-Broadcasting-In-PyTorch

On the next video (lesson 10) around minute 25-26 he explains again why we need to clear the weights and bias.

Hope it helps.

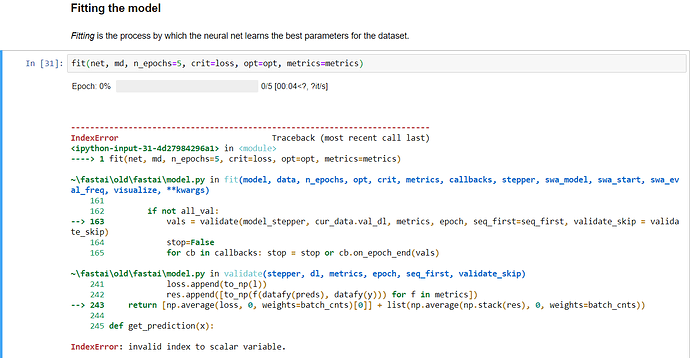

facing the same issue as @hamzautd7