Hi everyone, after completing lecture one, I decided to build a fire image classifier.

It was a nice experience to find that, with little effort, I could train and build a fire image classifier. Notebook: Is it a fire image or not? | Kaggle

Hi all, noob here.

After completing Lecture 1, I tried to build an animal classifier. Here it is - Animal Detector | Kaggle

Hi everyone, after completing the first lesson of Practical Deep Learning for Coders Part 1, I managed to build a model that classifies whether a given image shows a sine curve or a cosine curve. To my surprise, the model does a good job of identifying the type of curve, with around 98% accuracy!

Link to the Kaggle notebook: Graph Classifier | Kaggle

This is the set of images I used.

Here is the result.

@UnNerd_2000

If you’re worried about the second image, where it says that the probability of being a sine curve is 1%, don’t be because it is a simple error in your code.

What FastAI does is that it returns a list of probabilities corresponding to every label that you classify between. So according to your code, the model is returning the probability of being a cosine curve. It will be fixed if you change probs[0]:4f to probs[1]:4f.
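To make the indexing less error-prone, one option is to look the probability up by label name instead of hard-coding a position. This is only a minimal sketch in plain Python: the `vocab` and `probs` values below are made-up stand-ins for what the learner returns (in fastai the label order is available as `learn.dls.vocab`), and `prob_for_label` is a hypothetical helper, not a fastai function.

```python
def prob_for_label(vocab, probs, label):
    """Return the probability that belongs to `label`.

    `vocab` is the ordered list of class names and `probs` holds one
    probability per class, in the same order.
    """
    index = list(vocab).index(label)  # position of the label in the vocab
    return float(probs[index])


# Made-up example values (assumption): two classes, listed alphabetically.
vocab = ["cosine", "sine"]
probs = [0.01, 0.99]

print(f"Probability it's a sine curve: {prob_for_label(vocab, probs, 'sine'):.4f}")
```

With this approach the printed number always matches the label named in the message, whatever order the classes end up in.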

Great model, btw!

Thanks @utkrsh

I didn’t understand why we are using an iterable object for storing the accuracy but I never gave it a thought. Thank you for the clarification.

Here’s an idea if you want to try it: classify all six trig curves and later, with what you learn in lesson 2, deploy the model as an app.

Hi @theaeonwanderer, well, I am thinking the same thing. I have tried to classify some other curves as well, but in some cases the model didn’t do a great job. Anyway, I will be tweaking things a little bit for a better result.

Yeah, sure! But keep in mind that if it doesn’t work, that is OK! You will learn how to tweak them later. Move on to the next lessons.

One key point I heard Jeremy make is that our models work well on datasets that are similar to the ones used to pre-train the original model. In our case, we are fine-tuning resnet34, right? This resnet34 was pre-trained on the ImageNet dataset, which is massively diverse but contains mostly images of real-life objects: bikes, mountains, oranges, puppies, cars, etc. So when we fine-tune it on our dataset, it works really well if our dataset is similar to what was used in pre-training.

I am not sure whether resnet34 can classify all the curves accurately. It might, though! There’s only one way to find out: try it. If it doesn’t work, move on and come back later, that’s it!

Hey everyone,

I’m sharing my Kaggle notebook here.

The idea is to do facial emotion recognition, classifying multiple emotions such as anger, surprise, sadness, happiness, etc.

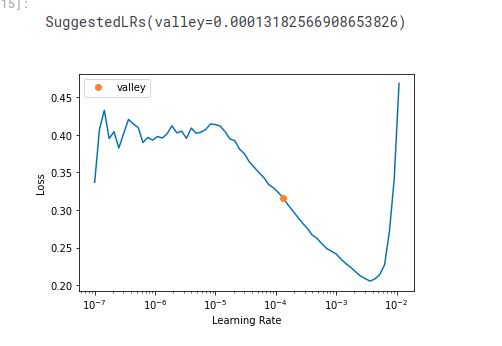

However, the model does not converge well; it seems to me that it is either overfitting or not learning at all. I added a confusion matrix and also tried to find the best learning rate, but that does not seem to work either. Even unfreezing the original resnet18 model weights didn’t help much.

I’d appreciate your feedback.

I attempted to build a quite similar classifier to yours that could identify whether a baby is crying or smiling. I had the same issue as you; my error rate was around 0.50. My metric improved only after I used more data. Specifically, I downloaded 400 images for each category. I chose this number because I saw that someone else who also built a humor classifier used the same number of images (you can find it here). After that, my error rate was around 0.20 with 5 epochs.

Maybe it can also help to clean your data. For example, in my dataset, there were images of a toy called ‘Cry Babies’ that I needed to remove, since I wanted my model to recognize facial expressions of real babies.

I think that our models could achieve better results if we used a face detector to crop the region of interest. Once we have only the image of the face, we can apply our face emotion classifier to predict its mood. I have not tried this yet, though.

Hi all,

I wanted to share my first model. It will predict what type of person you are based on your Subaru. Posted here: Subaru - a Hugging Face Space by tjeagle

Hi team,

I just finished working through lesson 1 and am excited to show my results. Having spent my whole life in (or working for) the Navy, I decided to train the model to classify what type of ship is in an image, i.e. warship, submarine, cargo, tanker, cruise, or tug boat.

https://www.kaggle.com/code/guybrushthreepwood69/what-type-of-ship

Hey @karen, it seems you’re correct. I think the issue is related to the data itself, as you mentioned. I’m going to update it ASAP to see how this affects the loss. Thanks for your help! BTW, your notebook looks interesting.

Just started the book and I’m excited to learn, but I’m really disappointed by how much of the code in the chapter 1 Jupyter notebook doesn’t execute as-is in Colab or Paperspace. That is a pet peeve of mine (when book examples don’t actually work), and it doesn’t bode well for the rest of the course. Overall it seems to be well written, though, so hopefully the example code gets better. If not, I’m sure I will learn enough to do it on my own in spite of the bad examples. Worst case, I bought a $40 paperweight!

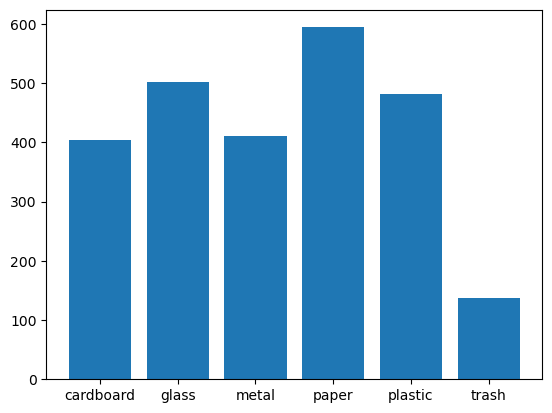

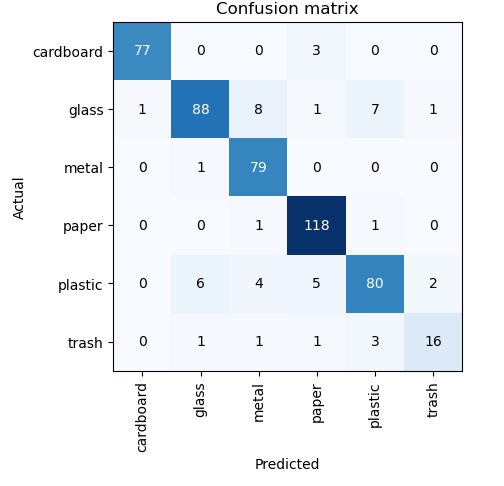

Hello! Below is my work on a multi-class garbage classification problem.

I used a dataset from Kaggle Datasets.

The goal of the exercise was to classify trash, based on the image, into the following classes:

First, I had to create the dataset from the Kaggle path:

The image count for each class:

So, as we can see, the only imbalanced class is trash.

path = os.path.join('/kaggle/input/garbage-classification/Garbage classification/Garbage classification')

labels = ['cardboard', 'glass', 'metal', 'paper', 'plastic', 'trash']
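The per-class counts can be computed along these lines. This is a minimal sketch that assumes one subfolder per label under the dataset root (which is how the `path` and `labels` above appear to be laid out); `count_images_per_class` is a hypothetical helper name, not part of any library.

```python
from collections import Counter
from pathlib import Path


def count_images_per_class(root, labels):
    """Count the files found in each `root/<label>` subfolder.

    Assumes every class has its own folder; a missing folder counts as 0.
    """
    counts = Counter()
    for label in labels:
        folder = Path(root) / label
        if folder.is_dir():
            counts[label] = sum(1 for f in folder.iterdir() if f.is_file())
        else:
            counts[label] = 0
    return counts
```

Calling `count_images_per_class(path, labels)` returns a `Counter`, so a class with far fewer images than the others is easy to spot before training.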

Some examples of the images:

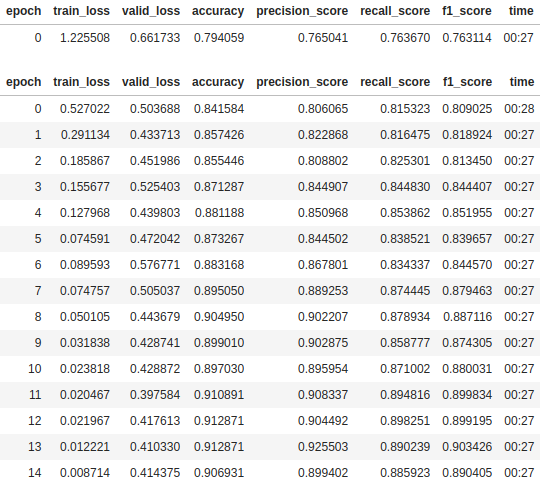

I used transfer learning with the resnet50 model to classify the garbage images.

Hyperparameters were:

I split the dataset into training and validation sets at an 8:2 ratio.
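For anyone curious what an 8:2 random split amounts to, here is a rough sketch in plain Python. It is not the code from the notebook: `random_split` is a hypothetical helper, and the fixed `seed` is my own assumption to make the split reproducible. In fastai, `RandomSplitter(valid_pct=0.2)` plays a similar role.

```python
import random


def random_split(items, valid_pct=0.2, seed=42):
    """Shuffle item indices and hold out `valid_pct` of them for validation."""
    rng = random.Random(seed)          # local RNG so the split is reproducible
    idxs = list(range(len(items)))
    rng.shuffle(idxs)
    cut = int(valid_pct * len(items))  # number of validation items
    valid = [items[i] for i in idxs[:cut]]
    train = [items[i] for i in idxs[cut:]]
    return train, valid


# Hypothetical file names, just to show the shapes of the result.
files = [f"img_{i}.jpg" for i in range(10)]
train, valid = random_split(files)
print(len(train), len(valid))
```

Every item lands in exactly one of the two lists, so the validation images are never seen during training.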

Two loss functions were used:

FocalLossFlat performs really well when the dataset is imbalanced, while CrossEntropyLossFlat is the industry standard for multi-class classification problems.

Training ResNet50, No. epochs: 20, Learning Rate: 0.003, Loss: Cross Entropy Loss
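For intuition on why focal loss can help with an imbalanced class, here is a small sketch of the per-example formulas in plain Python (not the fastai implementation). Focal loss multiplies the cross-entropy term by `(1 - p) ** gamma`, where `p` is the predicted probability of the true class, so examples the model already gets right contribute much less. The value `gamma=2.0` is an assumption here; it is a commonly used setting.

```python
import math


def cross_entropy(p_true):
    """Cross-entropy for one example, given the probability of the true class."""
    return -math.log(p_true)


def focal_loss(p_true, gamma=2.0):
    """Focal loss for one example: cross-entropy scaled by (1 - p_true) ** gamma."""
    return (1.0 - p_true) ** gamma * cross_entropy(p_true)


# Compare an easy example (confident, correct) with a hard one.
for p in (0.9, 0.3):
    print(f"p={p}: CE={cross_entropy(p):.4f}, focal={focal_loss(p):.4f}")
```

The easy example is down-weighted far more than the hard one, which shifts the training signal toward the examples the model struggles with, often the rare class.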

Confusion Matrix:

You can find my notebook here: Notebook URL

Hi all,

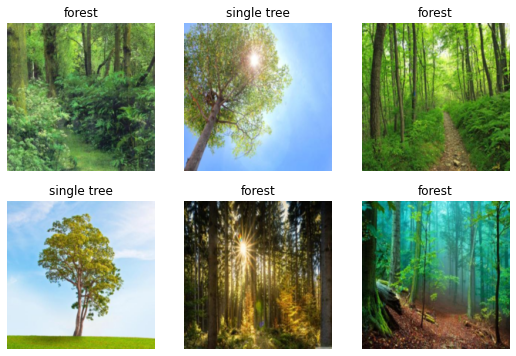

Just finished lesson one and thought of the idiom “cannot see the forest for the trees”. If you haven’t heard it before, it basically means a person cannot see the big picture because the focus is too much on the details.

I used the ‘Is it a bird?’ notebook as a reference to start. I had to change the keyword from ‘tree’ to ‘single tree’, as too many forest-like photos came up for that search. Overall, it is an accurate model and can successfully ‘see the forest from the trees’.

link to github: GitHub - dave-hay/fastai

Hi everyone,

I added a notebook for chest X-ray image classification based on lesson 1:

Hi friends, I’m very new to the field of deep learning, but the passion I’ve got for it is really huge and on fire.

I’m really grateful to Sir @jeremy for this SOTA show of love to all of us (me specifically), for making such a volume of profound yet simply communicated teaching available for free and very accessible. You and the entire team at fastai are such amazing individuals.

After completing the first session of the course, I applied what I learnt to create a bunch of computer vision classification models all available here → computer vision classification models with fastai

I’m currently building out more computer vision classification models based on the teachings from the first session. While experimenting and tweaking some stuff, I observed that when training the model with the number of epochs set to 8, the error_rate remained constant from the third epoch onwards. I believe this is the reason Sir @jeremy set the number of epochs to 3.

I so much enjoy this and all… I’m so grateful Sir @jeremy, God bless you and your team.

Deep Thanks!

Currently, I’m reading chapter one of the book on GitHub while building the other computer vision models I wrote down.

Thanks!

Hello All,

After completing chapters 1 and 2, I wanted to make something that would categorize images of plastic into recycling codes. Sometimes I can’t read (or find!) the little code symbol on the container. I thought a model could help me sort my recycling, but it didn’t quite work as expected.

Here’s the Kaggle notebook I made to categorize plastics by code.

I exported the model to Hugging Face and made a simple JavaScript app to use on my phone for figuring out the plastic code.

As I used the app, I found that I had really made something that categorizes different container shapes and doesn’t do a good job of figuring out the plastic type!

This was a good way to learn the importance of training data. It would be nice to give in-app feedback about how the model is (or isn’t) working and use the feedback to improve the model.

I’m excited to see how the rest of the course goes.

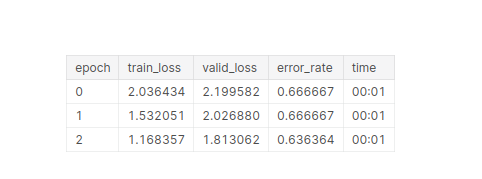

I had a question: shouldn’t the error rate decrease after each epoch?

What am I missing here?