Good work! I have two questions:

- Is there a reason you are using stereo and not mono files?

- Can we even use transforms on spectrograms, since they distort the time/frequency relationship that is a core feature of a spectrogram?

Thanks! I’m just using the files as provided by the data source; I honestly hadn’t thought about stereo vs. mono. It would be interesting to see whether converting the stereo samples to mono makes a difference. My hunch is that the difference would reveal more about the recording equipment than about the actual sound.
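For anyone who wants to try the stereo vs. mono comparison, here is a minimal sketch of one common way to downmix: averaging the left and right samples. It assumes the audio is already decoded into `(left, right)` float pairs; the function name `stereo_to_mono` and the sample values are made up for illustration, and this is not what the notebook does.

```python
def stereo_to_mono(frames):
    """Average the left and right sample of each stereo frame."""
    return [(left + right) / 2 for left, right in frames]


# Three illustrative frames: identical channels, opposite channels, slightly different channels.
stereo = [(0.5, 0.5), (1.0, -1.0), (0.2, 0.4)]
mono = stereo_to_mono(stereo)
```

Note that the second frame averages to zero: content that is out of phase between the channels cancels when downmixed, which is one way mono conversion can lose information.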

As for transforms - I’ve done some experimentation based on what @MicPie and @MadeUpMasters suggested, and it seems that using limited transforms is better than all or none. See this response: Share your work here ✅

Cheers! Per Share your work here ✅ it seems that `ds_tfms=get_transforms(do_flip=False, max_rotate=0.), resize_method=ResizeMethod.SQUISH` produced the best results for me. Better than just using `None`.

Thanks for this input! I tried out your suggestions, here’s the updated notebook. It looks like using the pre-trained resnet weights definitely does improve the predictions, pretty substantially! And it also seems that using limited transforms is better than none. I haven’t yet done a side by side comparison of normalising with itself vs. against imagenet; I don’t quite understand yet what that step is actually doing or how it’s used, so I have no intuition of why it would or wouldn’t work.

Let me know if you think I did something wrong there! I’ve just taken the most naive approach, and I know nothing about audio processing, so I’m probably making some terrible assumptions. Thanks for the help!

Can you reproduce the same results on consecutive model runs? I am struggling because I get different results every time I run the model. I was told to use a random seed, but I wonder whether fixing it actually affects how the model generalizes, since we want it to generalize well to new data.

Not exactly! I probably shouldn’t be making so many assumptions without running the model several times and taking averages.

I don’t know much about that yet & would also love to know more… I know the results can change depending on which data is in your validation set, i.e. if you’re trying to isolate the effects of certain parameters, your validation set should stay the same between runs so you’re testing yourself on the same data. But I also wonder whether that would produce a “good” model if you’re basically overfitting to a specific validation set in that case.
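To make the "same validation set between runs" idea concrete, here is a small sketch of a seeded train/validation split. The helper `split_train_valid`, the file names, and the seed value are all hypothetical; it only shows the general pattern of using a private seeded random generator, not how fastai does it internally.

```python
import random


def split_train_valid(items, valid_pct=0.2, seed=42):
    """Return (train, valid) using a private, seeded RNG so the split repeats."""
    rng = random.Random(seed)   # local RNG: does not touch the global random state
    shuffled = sorted(items)    # sort first so the input order does not matter
    rng.shuffle(shuffled)
    n_valid = int(len(shuffled) * valid_pct)
    return shuffled[n_valid:], shuffled[:n_valid]


# Hypothetical file names standing in for the spectrogram images.
files = [f"clip_{i:03d}.png" for i in range(100)]
train_a, valid_a = split_train_valid(files)
train_b, valid_b = split_train_valid(list(reversed(files)))
```

Both calls hold out exactly the same 20 files, so a change in accuracy between runs cannot come from a different validation set. Fixing this seed only controls which data is held out; weight initialisation and batch order are separate sources of run-to-run variation.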

I also know the outputs can vary depending on the initial weights and learning steps, but I don’t have an intuition of how much variance is typical, i.e. whether it’s normal for the same model trained on the same data to vary 1% or 10% between runs.

Hey, I checked out your notebook and you’re completely right. I’m pretty new to audio and it looks like I must have generalized my findings way too broadly, sorry about that. I am doing speech data on spectrograms that look very different from yours, and I’ve consistently found improvements when turning transfer learning off, but your post has encouraged me to go back and play around some more.

About transformations I haven’t experimented enough to really say what I said. I should have done more experimentation on the few types and ranges of transformations that might make sense for spectrograms, so I’ll be messing with that more in the future as well.

Are you using melspectrograms or raw spectrograms? Is your y-axis on a log scale? Those things are essential in speech, but I’m unsure how they affect general sound/noise. Let me know if you know what they are and how to implement them; if not, I can point you in the right direction. Cheers.

[ 25/03/2019 - EDIT with link to part 2 ]

Hi. I just published my medium post + jupyter notebook about the MURA competition (see my post here).

I used the standard fastai approach to image classification (cf. lessons 1 and 2).

Feedback welcome.

Part 2 of our journey in Deep Learning for medical images with the fastai framework on the MURA dataset. We got a better kappa score but we need radiologists to go even further. Thanks to jeremy for his encouragement to persevere in our research

No need to apologise at all, thanks for the suggestions, it’s always good to get input and try. I don’t have a lot of experience in this field but I have enough to know that tweaking things can make a big difference in unexpected ways, so it seems pretty much anything is worth a try

I’ve just finessed the notebook and re-run it. With the limited transforms & resize method, not normalising to the resnet weights, and using transfer learning from resnet50, the model is up to ~86% accuracy. A big jump from 79% which I already thought was pretty good.

Thanks to all for the suggestions into what to tweak!

Edit to add, I’m just watching the week 3 video and Jeremy addresses this question directly in the lecture, around the 1:46 mark. He explains that if you’re using pretrained imagenet, you should always use the imagenet stats to normalise, as otherwise you’ll be normalising out the interesting features of your images which imagenet has learned. I see how this makes sense in the example of real-world things in imagenet, but I’m not sure whether this makes sense for ‘synthetic’ images like spectrograms or other image encodings of non-image data. I can imagine what he would suggest though: try it out. I’ll add some extra to the notebook & compare normalising with imagenet stats vs. not, and report back…

Edit to the edit: I added another training phase to that notebook, normalising the data by imagenet’s stats. The result was still pretty good (0.8458 accuracy) but not as good as the self-normed version (0.8646). So it looks like, without really knowing whether this is definitive, it is in fact better to normalise to the dataset itself in the instance of using transfer learning from resnet (trained on real-world images) when trying to classify synthetic images.
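For readers who, like me earlier in this thread, are unsure what the normalisation step actually does: it subtracts a per-channel mean and divides by a per-channel standard deviation. The sketch below contrasts the two choices on toy data. The ImageNet values are the widely published per-channel statistics for pixels scaled to [0, 1]; the helper names and the tiny "images" are invented for illustration and are not taken from the notebook.

```python
from statistics import mean, pstdev

# ImageNet per-channel (R, G, B) statistics for pixel values scaled to [0, 1].
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


def channel_stats(images):
    """Per-channel mean and std over a list of images.

    Each image is a list of pixels; each pixel is an (r, g, b) tuple in [0, 1].
    """
    channels = list(zip(*(pixel for image in images for pixel in image)))
    return tuple(mean(c) for c in channels), tuple(pstdev(c) for c in channels)


def normalize(image, means, stds):
    """Subtract the channel mean and divide by the channel std for every pixel."""
    return [tuple((v - m) / s for v, m, s in zip(pixel, means, stds)) for pixel in image]


# Toy data: two dark 2-pixel images, nothing like natural photos.
images = [[(0.0, 0.1, 0.2), (0.2, 0.3, 0.0)], [(0.1, 0.2, 0.1), (0.1, 0.2, 0.1)]]
own_mean, own_std = channel_stats(images)
self_normed = [normalize(img, own_mean, own_std) for img in images]
imagenet_normed = [normalize(img, IMAGENET_MEAN, IMAGENET_STD) for img in images]
```

With the dataset's own statistics each channel ends up centred on zero. With ImageNet statistics these dark toy images all land well below zero, which may be part of why the two choices behave differently on spectrograms.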

Edit 3: I didn’t actually answer your questions! For the spectrograms I’m just using whatever SoX spits out, I don’t know if that’s a melspectrogram or a ‘raw’ one. The y axis seems to be on a linear scale. I’ve seen some things on here that you & others have posted about creating spectrograms and I think I would like to reengineer this “system” to do it all in python without the SoX step. This notebook about speech and this one about composers look good for that. Anyway. I think I’ll jump over to the audio-specific thread!

Just finished Kaggle’s Microsoft Malware Prediction and posted it on linkedin. This was a very humbling competition for me and I am happy for all the lessons I learned.

Or medium if you prefer

https://medium.com/p/22e0fe8c80c8/edit

I took Rachel and Jeremy’s advice and started blogging. Here is my first guide on how to best make use of fastai’s built in parallel function to speed up your code. I hope this helps someone. Feedback, especially critical, is appreciated!

Hi,

This is Sarvesh Dubey. My team and I have been working on a project to classify the severity of Alzheimer’s in brain scans. We first built a custom dataset labelled by Alzheimer’s severity. Initially we used PyTorch and applied transfer learning, which gave a maximum accuracy of 75.6%. We then started looking at fastai and built a much more accurate model (90%) using DenseNet161. As far as we know (we are not certain), a classifier with this accuracy has not been built before. Since it’s a custom dataset, we are thinking of writing a research paper about it.

We are now thinking of taking this model to production.

@at98 I am not an expert either. Actually, a PyTorch scheduler is nothing more than a kind of callback with some specific methods. Therefore, I don’t think it should be any different from the fastai implementation. (I believe that the latter is even more robust and flexible.)

Also, I think that having > 3 channels in the input is a problem of architecture, and not the library itself. Like, most of the networks are pre-trained on the ImageNet where you have 3 channels only. Therefore, to support a higher number of channels, one should replace the input layer in the created model with something that accepts a required number of channels.

Of course, I would be glad if you would like to share any generic snippets or code you use for your projects. I guess that could be a good idea to gather interesting scripts and solutions from DL practitioners.

Hello everyone,

I was having a very difficult time organizing my butterfly images in Desktop. So, I trained a Fastai butterfly image classifier which reached a high accuracy of 95.2% with Resnet50. After training, I classified the images in the unclassified folder and used a few python file operations to move the correctly classified images to their respective class directories. This small task has saved me immense time in organizing my butterfly images.
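The "few python file operations" step could look roughly like the sketch below. It is not taken from the article: the `organize` helper, the folder names, and the class names are hypothetical, and the predictions are hard-coded where a trained classifier's output would normally go.

```python
import shutil
import tempfile
from pathlib import Path


def organize(predictions, dest_root):
    """Move each file into dest_root/<predicted class>/, creating folders as needed."""
    moved = []
    for src, label in predictions.items():
        target_dir = Path(dest_root) / label
        target_dir.mkdir(parents=True, exist_ok=True)
        moved.append(Path(shutil.move(str(src), str(target_dir / Path(src).name))))
    return moved


# Demo in a throwaway directory with empty placeholder files.
root = Path(tempfile.mkdtemp())
unclassified = root / "unclassified"
unclassified.mkdir()
for name in ("a.jpg", "b.jpg"):
    (unclassified / name).touch()

# Hypothetical predictions; in practice these would come from the trained classifier.
predictions = {unclassified / "a.jpg": "monarch", unclassified / "b.jpg": "swallowtail"}
moved = organize(predictions, root / "sorted")
```

In a real run it is probably worth moving only predictions above some confidence threshold and reviewing the rest by hand.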

I am very happy to share my workflow via Medium article as given below.

Classifying and organizing butterfly images in the desktop using FastAI and Python

Thank you @jeremy and @rachel for making my photo organizing work pretty easy with your fastai library and lucid lectures.

Cool to see someone do the equivalent of “whip up a shell script” but “whip up a deep neural net plus a shell script” to solve a problem like this, nice!

Hi, I tried playing around with the lesson 1 notebook, as Jeremy suggested in the course, and created my own.

I struggled a bit, and I would like some feedback on what I did right and what I did wrong. Thank you!

Github repo link

Couldn’t resist following up one more time, as I made one other minor tweak to the sound effect classifier model - changing the weight decay to 0.1 - and got the accuracy up to 0.906015! So, there you go - iterate, iterate, iterate…

I was working on the pet adoption kaggle problem and got some surprising results running a classifier on the image. Wrote a blog post here: eager for any feedback:

Hi everyone!

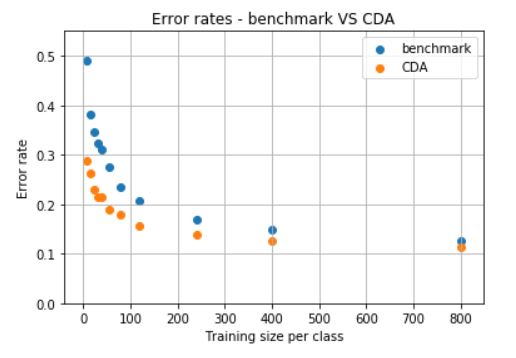

I continued working on something I shared above, a combinatorial data augmentation (CDA) technique. Now adapted to the multi-class case, and tested it again on the Fashion MNIST dataset (10 classes). I used its 60,000 image dataset for testing (instead of training), and its 10,000 images dataset for training, since the goal of the technique is to be used precisely when the number of images is low.

As an example, we may want to build a classifier when we have 8 images per class for training, and 2 images per class for validation (a total of 100 images since there are 10 classes). The benchmark is to do transfer learning with resnet34, based on the 80 training images, and save the best model based on error rate on the 20 validation images.

The CDA technique would instead grab the 80 training images, such as:

and generate more than 100,000 collages such as:

These collages are generated by picking 2 random classes, and then 9 random images within the selected 2 classes, and placing the images in a 3x3 array. The label of the collage is the class that appears the most among the 9 images that make up the collage.
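The collage recipe described above can be sketched as follows. This is an illustration of the sampling and labelling logic only, using image identifiers instead of pixels; the function name `make_collage` and the identifiers are hypothetical, and sampling with repetition is an assumption (the linked notebooks are the reference for the actual method).

```python
import random
from collections import Counter


def make_collage(images_by_class, rng):
    """Build one 3x3 collage (as a grid of image ids) and its label.

    images_by_class maps a class name to a list of image identifiers.
    """
    class_a, class_b = rng.sample(sorted(images_by_class), 2)  # 2 distinct classes
    pool = [(img, cls) for cls in (class_a, class_b) for img in images_by_class[cls]]
    picks = [rng.choice(pool) for _ in range(9)]               # 9 draws, repeats allowed
    grid = [[img for img, _ in picks[r * 3:(r + 1) * 3]] for r in range(3)]
    # 9 is odd and only 2 classes are involved, so the majority is never tied.
    label = Counter(cls for _, cls in picks).most_common(1)[0][0]
    return grid, label


rng = random.Random(0)
# 10 classes with 8 images each, matching the example in the post.
images_by_class = {f"class_{c}": [f"class_{c}_img_{i}" for i in range(8)] for c in range(10)}
grid, label = make_collage(images_by_class, rng)
```

Calling `make_collage` in a loop yields as many labelled collages as needed; turning each grid of ids into an actual image is left out here.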

The total number of possible collages is $45 \cdot 16^9 > 10^{12}$, so one can really have a large number of collages if desired, even with only 8 training images per class.
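As a quick check of that count: 45 is the number of ways to pick 2 of the 10 classes, and each of the 9 cells can hold any of the 16 images from the two chosen classes. The variable names are just for illustration, and the count treats differently ordered grids and repeated images as distinct collages.

```python
from math import comb

n_classes, imgs_per_class, cells = 10, 8, 9
class_pairs = comb(n_classes, 2)           # 45 ways to pick the 2 classes
fillings = (2 * imgs_per_class) ** cells   # 16 candidate images for each of 9 cells
total = class_pairs * fillings             # 3092376453120, about 3.09e12
```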

With the generated collages one can do transfer learning on resnet34 (a few epochs, tuning more than just the last layer, until the error rate on a validation set with 20% of the collages is low, e.g. 1%). This is followed by a second round of transfer learning, now with the original 80 training images (just as in the benchmark, but starting from the network trained on the collages rather than from resnet34).

The error rate with CDA on the 60K images used for testing is significantly lower than the benchmark. The following shows these errors for different numbers of images per class in the training set (starting at 8 images per class, as exemplified above).

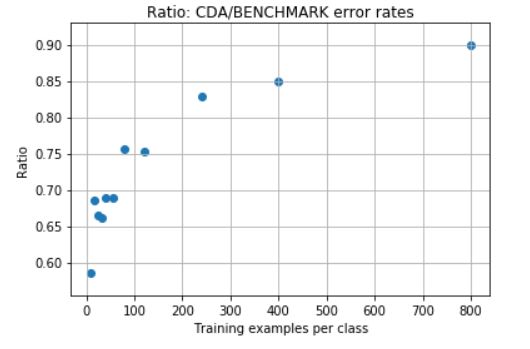

And their ratios are:

We can also see in the confusion matrices (benchmark vs CDA), how the performance improves with CDA.

Benchmark:

CDA:

Notebooks and readme: https://github.com/martin-merener/deep_learning/tree/master/combinatorial_data_augmentation

Hello

After having explored different applications of Deep Learning through Fastai, I wanted to make sure I understood the basics of Deep Learning by building a simple neural network from scratch which could recognize handwritten digits. Though not completely from scratch, as there is still so much I could learn about programming. Therefore, I have used basic Python and some PyTorch.

As someone without a math/CS background, this was also the opportunity for me to get more acquainted with math functions and formulas as I tried to explain in my own words, concepts like Softmax and Cross Entropy functions.
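For readers following along, here is a minimal plain-Python sketch of the two functions mentioned, softmax and cross entropy for a single example. It is not taken from the post's notebook; the function names and the example scores are chosen only for illustration.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtracting the max keeps exp() from overflowing
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target):
    """Negative log of the probability assigned to the correct class index."""
    return -math.log(softmax(logits)[target])


logits = [2.0, 1.0, 0.1]
probs = softmax(logits)
loss_right = cross_entropy(logits, 0)  # target is the highest-scoring class
loss_wrong = cross_entropy(logits, 2)  # target is the lowest-scoring class
```

The loss is small when the model puts high probability on the correct class and grows as that probability shrinks, which is why `loss_right` comes out lower than `loss_wrong`.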

Feedback is appreciated, especially to correct any “math non-sense”

Thanks, you guys have a nice weekend !