Hi everyone!

I’m currently working on a project to segment & classify the condition of buildings seen on hi-res aerial (drone) imagery taken over Zanzibar island, Tanzania. As a step in the workflow, I trained a classifier based on lesson1 notebook to distinguish between 4 types/conditions of buildings on a variety of images (different sizes, ratios, blurriness):

“Complete”, “Incomplete”, “Foundation”, and “Empty” (no building in image)

Using resnet50 pretrained backbone, this achieved 93% accuracy on the 4 classes. Performance is probably even better than the stated number because looking at predictions with highest losses, they’re either mislabeled or so small/ambiguous in appearance that I’m not able to tell what class they should be in either:

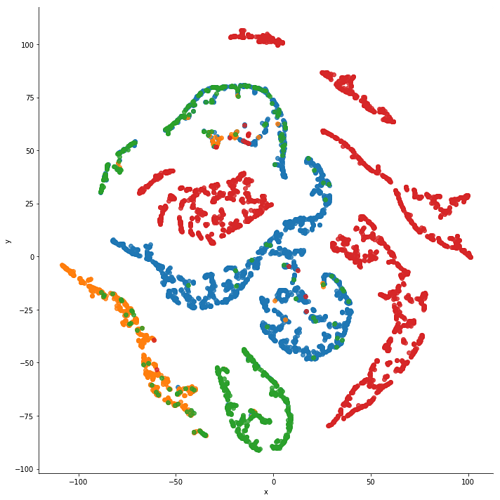

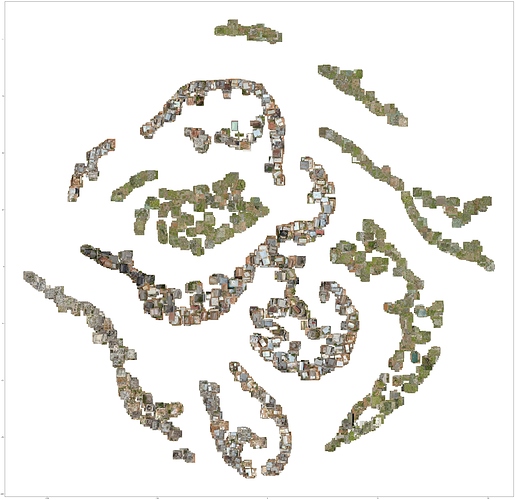

I also used the excellent t-SNE notebook/code from @KarlH (thanks! his original post is in this thread here) to visualize how the model is grouping representations. Very helpful diagnostics to understand what is very clearly separated (“Empty” images) and what characteristics make classification more erroneous (visual features like partially roofless rooms of buildings that confuse between “Incomplete” and “Complete”).

Look forward to exploring more how to use these techniques to diagnose model errors and improve training with less data (i.e. selectively train in later cycles on harder data that’s more similar to what the model is struggling on):

Here is my notebook: https://nbviewer.jupyter.org/gist/daveluo/8e9d60e597303b42dc36f926a3ece466

In it, I show training on resnet34 and resnet50, loading & predicting on a new external set of test images, packaging up the test predictions in pandas to csv file, and t-SNE visualization.

I load my train and validation data (data.train_ds & data.valid_ds) differently than what’s shown in the lesson by peeling the onion a few layers and using ImageClassificationDataset() instead of ImageDataBunch.from_name_re(). I did this to directly define which image and corresponding label files go into validation vs training. Because I’m working with geospatial image tiles that come from larger grids that are adjacent or sometimes overlapping, there’s the risk of data leakage if I’m not careful about keeping data from different grids cleanly and consistently separated. Defining exactly what files go into train/val also lets me do some hacky stuff to balance my classes: training on a half of the majority class for a cycle and then redefining the dataset with the other half of that class for another cycle of training. I’m sure there is a more elegant way to do this…still looking into it.

I mentioned upfront that this is a segmentation + classification task. The segmentation part I started working on first using the older v0.7 of fastai library so there’s some major duct-taping of workflows and data processing going on. I’m looking forward to updating the segmentation work to Fastai v1 and sharing it with everyone!

Here’s a preview of what the end product (segment + polygonize + classify) currently looks like:

(green = “Completed”, yellow = “Incomplete”, red = “Foundation”)

Dave