Hello everyone

I recently wrote a medium article on building an Image Similarity search model using Fastai, Pytorch Hooks and Spotify’s Annoy.

Results of this project was simply outstanding and I was blown away by how easily we can implement this.

Here is one base image for which we need to find similar images:

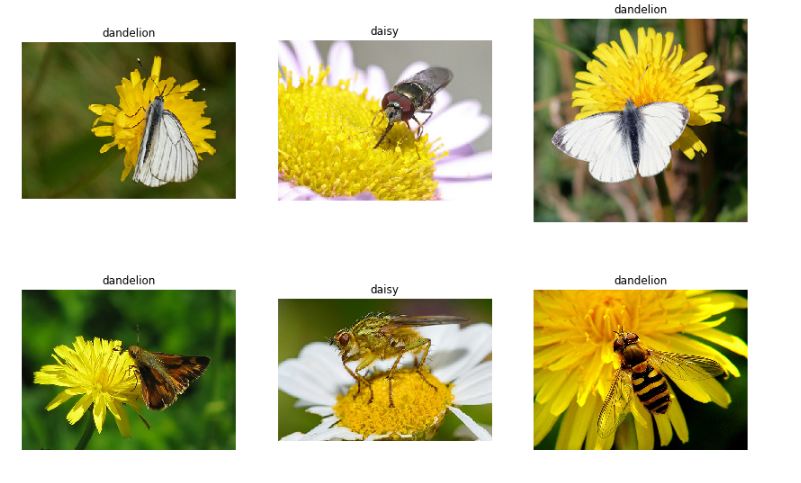

and the model returned following images which it thought are similar to the base image:

Please read through the article wherein I posted the link for Kaggle Kernel as well.

BR

Abhik