Hey!

Using Paperspace, I put together a repository that I think might be a fun way to practice what we learned in the walk-thru today.

It provides you with starter code to submit to the Paddy Doctor community competition that is currently underway.

If you are interested, I suggest you use your git-fu and fork the repo to store your work! This way, you can practice using git AND potentially contribute to my repo, if that is something you would like.

Git is covered, but now for Kaggle. Here are the steps to install and configure the Kaggle CLI on Paperspace (and do everything all the way to submitting to the competition):

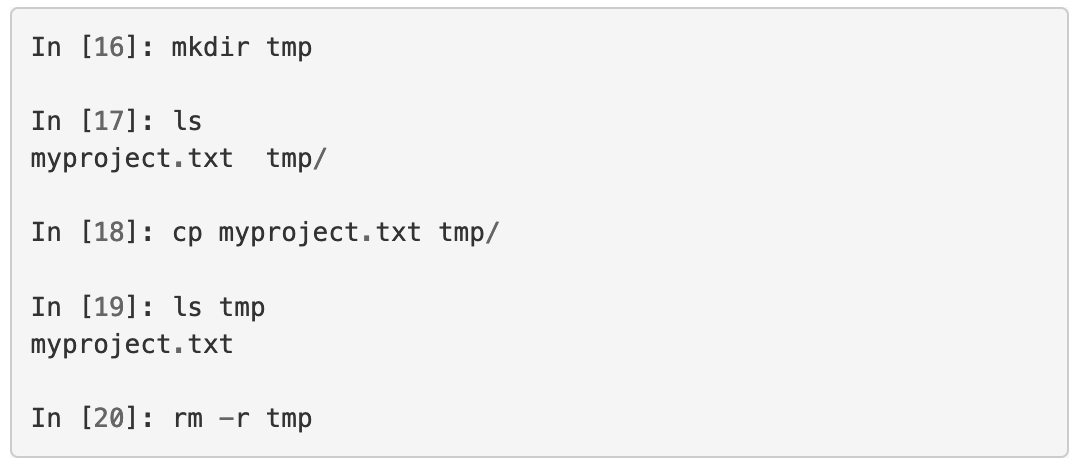

- `pip install --user kaggle`

- I have no clue why I get this warning on Paperspace, but with our `PATH` modification skills we can fix this! (Anyhow, we need to "fix it" by modifying `pre-run.sh`, and not `bash.local`, so that Jupyter Notebook can see it as well!)

  error: `WARNING: The script slugify is installed in '/root/.local/bin' which is not on PATH.`

  fix: run from the console, or edit `pre-run.sh` manually

  `echo 'export PATH="$HOME/.local/bin:$PATH"' >> /storage/pre-run.sh`

- Now this next part is tricky. You need to go onto Kaggle, generate an API token under your account, and copy it to `~/.kaggle/kaggle.json` on Paperspace. You might need to create the `kaggle.json` file; essentially, it is just a text file. If you have issues with this, please post in the thread below.

- Finally, fork my repo from here.

- Run the notebook and submit to Kaggle!
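If copying the token file by hand is awkward, the token step above can also be done from Python. This is only a minimal sketch: `write_kaggle_token` is a hypothetical helper (not part of the Kaggle CLI), the username and key are placeholders, and the demo writes to a throwaway directory rather than the real `~/.kaggle`. It assumes the token file is a small JSON object with `username` and `key` fields, which is what the downloaded `kaggle.json` contains.

```python
import json
import os
import tempfile
from pathlib import Path


def write_kaggle_token(username: str, key: str, config_dir: Path) -> Path:
    """Hypothetical helper: write a kaggle.json file into config_dir and return its path."""
    config_dir.mkdir(parents=True, exist_ok=True)
    token_path = config_dir / "kaggle.json"
    token_path.write_text(json.dumps({"username": username, "key": key}))
    # Owner read/write only; the kaggle CLI warns when the token is readable by other users.
    os.chmod(token_path, 0o600)
    return token_path


# Demo in a throwaway directory with placeholder values (not real credentials).
# For real use, config_dir would be Path.home() / ".kaggle".
demo_dir = Path(tempfile.mkdtemp()) / ".kaggle"
demo_path = write_kaggle_token("your-username", "your-api-key", demo_dir)
print(demo_path.name, oct(demo_path.stat().st_mode & 0o777))
```

For the real thing, swap in the values from the token you generated on Kaggle and point `config_dir` at `Path.home() / ".kaggle"`.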

Working on the competition can be a fun way to learn and to get started with Kaggle. It is a community competition, so there are no prizes or points, but the excitement of climbing up the leaderboard and trying out new things can certainly be there!

There are a lot of things one could try on this dataset:

- To what accuracy can you train the current model? (resnet34)

- Can you find a different architecture that will work better?

- How do you pick a good learning rate? Will the learning rate differ if you use SGD vs Adam?

- What augmentations can you use to improve results?

- Can you experiment with different image sizes? How does that affect results?

- Can you combine predictions from two archs?

- Can you train with a decaying lr? Say, for each subsequent epoch, take 0.9 of the previous lr.

- Can you reduce the learning rate if you don’t see improvements for x number of epochs?

- Can you stop training if the model starts to overfit? (early stopping)

- How do you hook up the model to Weights & Biases?

- How do you train with a parameter sweep (to find good hyperparams) using W&B?

- Can you leverage fastai functionality (low level functions) to create a PyTorch dataset and feed it to the fastai learner?

- Can you use lower-level fastai functionality to arrive at fastai `DataLoaders` (how do you construct dataloaders without using `ImageDataLoaders` or `ImageBlock`)?
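To make three of the questions above concrete (decaying the lr by 0.9 each epoch, reducing the lr when there is no improvement for x epochs, and early stopping), here is a framework-free sketch of the bookkeeping involved. Everything in it is an assumption for illustration: `decayed_lrs` and `PlateauTracker` are hypothetical names, the validation losses are made up, and the patience values are arbitrary. fastai has its own callbacks for the last two ideas (`ReduceLROnPlateau` and `EarlyStoppingCallback` in `fastai.callback.tracker`, as far as I know), so this is only meant to show the logic, not how fastai implements it.

```python
from typing import List, Optional


def decayed_lrs(base_lr: float, n_epochs: int, factor: float = 0.9) -> List[float]:
    """Learning rate per epoch, each one `factor` times the previous."""
    return [base_lr * factor ** epoch for epoch in range(n_epochs)]


class PlateauTracker:
    """Counts consecutive epochs without improvement in a loss (lower is better)."""

    def __init__(self, patience: int, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, loss: float) -> bool:
        """Record one epoch; return True once `patience` bad epochs have piled up."""
        if loss < self.best - self.min_delta:
            self.best = loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Made-up validation losses, purely to exercise the logic (not real results).
valid_losses = [1.00, 0.80, 0.70, 0.71, 0.72, 0.69, 0.70, 0.71, 0.72, 0.73]

lr = 1e-3
n_reductions = 0
stopped_at: Optional[int] = None
reduce_tracker = PlateauTracker(patience=2)  # cut the lr after 2 bad epochs
stop_tracker = PlateauTracker(patience=4)    # give up after 4 bad epochs

for epoch, loss in enumerate(valid_losses):
    if stop_tracker.step(loss):
        stopped_at = epoch
        break
    if reduce_tracker.step(loss):
        lr *= 0.1
        n_reductions += 1
        reduce_tracker.bad_epochs = 0  # start counting again at the new lr

print("per-epoch decay schedule:", decayed_lrs(1e-3, 3))
print("lr reductions:", n_reductions, "stopped at epoch:", stopped_at)
```

Keeping two separate trackers lets the lr drop a couple of times before training is abandoned, which is one reasonable way to combine the two ideas.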

The list of questions could go on, and on, and on.

In the notebook, I tried to leave clues about how I went about getting answers to some of my questions when I worked on the NB.

Maybe, if there is interest, we could work together towards answering the questions from my list above? And Jeremy could comment on some things we could do better?

I am not so much interested in what the answers are as in the method of arriving at them. For instance, I genuinely didn't know where to look for the mapping from idxs to classes (this simply slipped my mind). I guess the answer probably is that I should re-read the dev nbs for fastai? I jumped around in the code but didn't manage to steer myself to where this is defined.
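For anyone stuck on the same idxs-to-classes question, here is a small sketch of what such a mapping usually looks like. It is plain Python, not fastai code: `build_vocab` and `decode` are hypothetical helpers, the labels are invented, and it assumes the vocab is simply the sorted unique labels, so that a class index is a position in that list. In fastai, the equivalent list is, as far as I know, available as `dls.vocab`.

```python
from typing import List, Sequence


def build_vocab(labels: Sequence[str]) -> List[str]:
    """Sorted unique labels; a label's position in the list is its class index."""
    return sorted(set(labels))


def decode(pred_idxs: Sequence[int], vocab: Sequence[str]) -> List[str]:
    """Turn predicted class indices back into label strings."""
    return [vocab[i] for i in pred_idxs]


# Invented labels for illustration only (not the competition's real classes).
train_labels = ["healthy", "disease_b", "disease_a", "healthy", "disease_a"]
vocab = build_vocab(train_labels)

print(vocab)                        # ['disease_a', 'disease_b', 'healthy']
print(decode([2, 0, 0, 1], vocab))  # ['healthy', 'disease_a', 'disease_a', 'disease_b']
```

The same decoding step is what turns a model's argmax predictions into the label strings a submission file needs.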

I am not sure, but maybe some of the questions are not really suited to part 1 of the course.

Anyhow, maybe someone will find this useful!