A digital signature would go a long way toward helping to sift the real from the fake, but if only content with a verified digital signature were taken seriously, I wonder how that might affect legitimate uses of anonymous posting, like whistleblowing. No easy answers, I suppose.

Good question! Also, what would happen to Google's search mechanism as these AI-generated texts start to fill significant parts of the internet? It is like an infection. Will we get to the point where the whole internet is bugged?

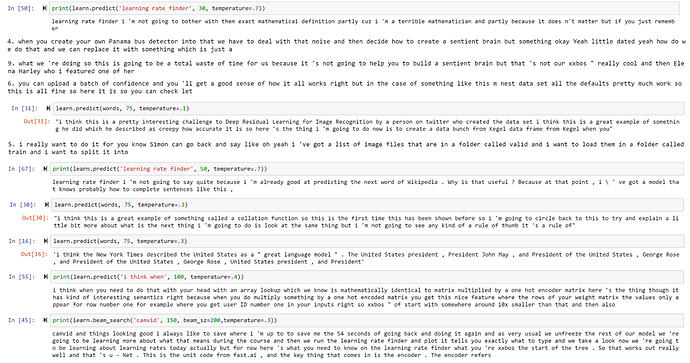

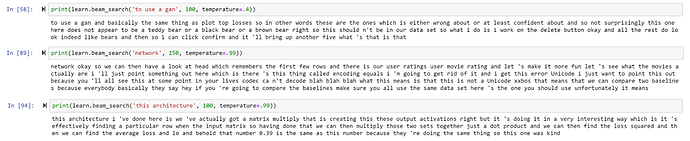

kind of hard to see, but it seems fitting to show some examples from a language model of Jeremy i did. super cool cuz i can have Jeremy talk about whatever i want, but also scary because i had no idea what i was doing

Thank you for asking the question, Jeremy, and thank you for the answer, Rachel.

Do you know where I can get a BOT like you?

I can pay!

mrfabuluous1

Does the relative online permanence of such “bad content” (for lack of a more nuanced term) impact how you think about culpability? E.g. is a transient “mistake” made by a software agent better or worse in any way?

With the task and mission of Inbox-Zero-OCD

That is, indeed, the metric I must optimize.

What do you think about the Milgram Experiment?

This was my job, my assignment to follow unethical behavior

How about an ethical issue bounty program, just like the bug bounty program some companies have?

“I was only following orders” wasn’t a great defense at Nuremberg.

How about blatantly unethical, militarized software like Stuxnet?

Can you talk about how making this tech ubiquitous and accessible is more the solution than the problem? There is an argument that limiting access is necessary.

There are a bunch of solutions, including bug bounties, if companies want to be proactive with ethics in AI. The challenge really is companies putting money where their PR is!

Ultimately, AI or any other technology is developed or implemented by companies for financial advantage, i.e. more profit…

Maybe the best way to incentivize ethical behavior is to tie financial or reputational risk to unethical behavior, in some ways similar to how companies are now investing in cybersecurity because they don’t want to become the next Equifax…

Can grassroots campaigns help push companies toward more ethical behavior in their use of AI?

Ha ha. Or governments for control. Not necessarily only profit motives. “Power” plays a huge factor too.

Pretty sad thing I observed from a brilliant female co-worker:

When she was prepping for a public speaking gig for our customer conference, she was practicing making her voice deeper to “hack” the voice gender bias you spoke of.

How do you balance the need for transparency and model explainability, which build trust, against the risk that adversaries will use the explanations to attack or misuse the model?

How can one resolve ethical trade-offs related to AI?

How to Make Tech Interviews a Little Less Awful by Rachel

(Will add to wiki after the lecture)