Has anyone applied an “activation maps” kind of concept to tabular data? That is, a way to find out which variable in the table was responsible for activating a class. That could be super interesting for interpretability, which a lot of businesses look for.

Not sure if you want something more advanced. But if you want something very basic, try https://playground.tensorflow.org/

Yes exactly.

No, our ULMFiT algorithm easily beat all 1D-CNN classifiers. The paper you link to is not current.

Be careful not to confuse output activation functions with between-layer functions. The thing I drew was for output - where we certainly don’t want N(0,1).

As we discussed, for between-layer activation functions we generally use ReLU. And we let batchnorm handle the distribution for us.

Yes, normally I add a little to the max and min for this reason.

Yes, you can add a preprocessor to any ItemList. We’ve only just added this functionality, so if you’re interested in trying it, please open a topic with some details and sample code on #fastai-users and at-mention @sgugger and me.

Thanks for your response @jeremy. However, the talk of “target values” means that they were writing precisely about output activation layers, not between-layer functions.

Let me rephrase my question: Looks like they’re saying to scale the output activation function so it extends beyond the target output values (0 to 1, or 0 to 5). Is that something you recommend as well?

Yes I mentioned that here:

Is it possible to learn word or sentence embeddings using a language model trained on wiki?

I was thinking about the language model and how it is able to predict the next word. The idea that struck me was: would it be possible to get a score for a sentence out of the model, for use in sentence comparison?

ideally

sentence[w1…wn] ->language model-> wn+1

and

sentence[w1…wn] ->language model-> classifier+sigmoid ->0,1

could it be something like

sentence[w1…wn] ->language model-> +??? -> sentence representation[1212,1521515,0212,451]

I know this is an advanced topic, and I found a discussion going on in the advanced forum on the same here, but I just wanted to ask: is it worth pursuing?
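One way to picture the "+???" step is pooling: take the hidden state the language model produces for each word and combine them into one fixed-size vector. The sketch below uses concat pooling (last state, element-wise max, element-wise mean) followed by cosine similarity. It is only an illustration: the hidden states are made-up numbers rather than output from a real model, and `concat_pool` and `cosine_similarity` are hypothetical helper names.

```python
import math

def concat_pool(hidden_states):
    """Combine per-token hidden states into one fixed-size sentence vector.

    hidden_states: list of equal-length lists, one per token (w1..wn).
    Returns the last state, the element-wise max and the element-wise mean,
    concatenated into a single list.
    """
    if not hidden_states:
        raise ValueError("need at least one token")
    last = list(hidden_states[-1])
    columns = list(zip(*hidden_states))
    max_pool = [max(col) for col in columns]
    mean_pool = [sum(col) / len(col) for col in columns]
    return last + max_pool + mean_pool

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors of the same length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up hidden states for two short sentences (2 dimensions per token).
sent_a = [[0.1, 0.9], [0.4, 0.2], [0.8, 0.5]]
sent_b = [[0.2, 0.8], [0.7, 0.6]]

vec_a = concat_pool(sent_a)
vec_b = concat_pool(sent_b)
score = cosine_similarity(vec_a, vec_b)
```

Because the pooled vector has the same length whatever the sentence length, sentences of different sizes can be compared directly. Whether the resulting scores are meaningful depends on the model, so this would need checking on real data.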

Is the label_delim parameter in TextDataBunch functional? I get an error when trying:

data_clas = TextDataBunch.from_df(path, train_df=df_trn, valid_df=df_val,
                                  vocab=data_lm.vocab,
                                  text_cols='Narrative',
                                  label_cols='Contributing Factors / Situations',
                                  label_delim='|',
                                  bs=bs)

Error: iterator should return strings, not float (did you open the file in text mode?)

I also get an error when the delimiter is two characters, e.g. '; '
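Both symptoms match how Python's standard `csv.reader` behaves: it rejects non-string items (a missing label read by pandas is a float NaN) and it only accepts a one-character delimiter. Whether the library parses labels through `csv.reader` is an assumption here, not something confirmed in this thread. The sketch below reproduces the two failures with the standard library alone and shows a hypothetical helper, `split_labels`, that pre-splits the labels. The label values are invented for illustration.

```python
import csv
import math

def split_labels(values, delim):
    """Split each label string on `delim`; missing values become an empty list."""
    result = []
    for value in values:
        missing = value is None or (isinstance(value, float) and math.isnan(value))
        if missing:
            result.append([])
        else:
            parts = [part.strip() for part in str(value).split(delim)]
            result.append([part for part in parts if part])
    return result

# A float item (such as the NaN used for an empty cell) makes csv.reader fail.
try:
    list(csv.reader(["Weather|Fatigue", float("nan")], delimiter="|"))
    float_error = None
except csv.Error as err:
    float_error = str(err)

# csv.reader refuses a delimiter longer than one character.
try:
    csv.reader(["Weather; Fatigue"], delimiter="; ")
    delim_error = None
except TypeError as err:
    delim_error = str(err)

# str.split handles multi-character delimiters and the NaN is mapped to no labels.
labels = split_labels(["Weather; Fatigue", float("nan"), "Terrain"], "; ")
```

If this is indeed the cause, checking the label column for empty cells before building the data bunch would be the first thing to try.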

I’m wondering about this myself. I’m setting batch size values as I load my data_lm, which was previously created with default values, but the GPU load seems to remain the same no matter what.

Yes, normally I add a little to the max and min for this reason.

Do you refer to the + self.min_score after passing the result through a sigmoid, or to something else done afterwards? Also, I can understand that adding something helps with values close to 5 (as the sigmoid will likely reduce them), but why is that the case for values close to 0? In my understanding, we should subtract something, shouldn’t we?
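To make the question concrete, here is a minimal sketch of a sigmoid scaled to a range, reading "add a little to the max and min" as widening the range on both sides (so the minimum is lowered and the maximum is raised). That reading, the margin of 0.5, and the function names `scaled_sigmoid` and `raw_needed` are assumptions for illustration, not the library's code.

```python
import math

def scaled_sigmoid(x, lo, hi):
    """Squash a raw activation x into the open interval (lo, hi)."""
    return lo + (hi - lo) / (1.0 + math.exp(-x))

def raw_needed(target, lo, hi):
    """Raw activation required for scaled_sigmoid to output `target`."""
    p = (target - lo) / (hi - lo)
    return math.log(p / (1.0 - p))

# Targets run from 0 to 5. With the exact range, an output of 4.99
# already needs a fairly large raw activation, and 5.0 is unreachable.
tight = raw_needed(4.99, 0.0, 5.0)

# Widening the range by a hypothetical margin of 0.5 on each side
# makes both ends reachable with moderate activations.
wide = raw_needed(4.99, -0.5, 5.5)
top = raw_needed(5.0, -0.5, 5.5)
bottom = raw_needed(0.0, -0.5, 5.5)
```

With the widened range, the activations needed for 0 and for 5 are equal in size and opposite in sign, which is why a margin on the low side only helps if it is subtracted from the minimum.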

Jeremy mentioned there is a Chinese language model (su, zoo?). Where could I find more information about it?

Many thanks~

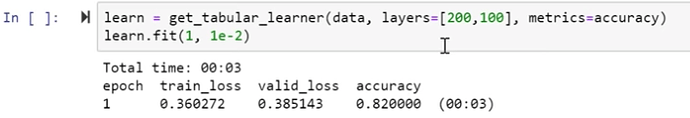

Quick question: why use learn.fit() instead of learn.fit_one_cycle() with tabular data? (cf. the notebook shown by Jeremy during the live lesson)

Search Model Zoo

While discussing the use of

learn.predict('I liked this movie because ', 100, temperature=1.1, min_p=0.001)

in the lesson3-imdb notebook, @jeremy mentioned that “this is not designed to be a good text generation system. There’s lots of tricks that you can use to generate much higher quality text none of which we’re using here.”

What are those tricks? Where could I find more information about them?
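For context on the call above, here is a rough sketch of what sampling with `temperature` and `min_p` could look like. It assumes that `min_p` zeroes out tokens whose probability falls below the threshold and that `temperature` raises the remaining probabilities to the power `1 / temperature` before sampling. Both are assumptions about the semantics, not a copy of the library's code, and `sample_next` is a hypothetical name.

```python
import random

def sample_next(probs, temperature=1.0, min_p=None, rng=random):
    """Pick one token index from a list of next-token probabilities."""
    weights = list(probs)
    # Drop unlikely tokens, unless that would drop every token.
    if min_p is not None and any(p >= min_p for p in weights):
        weights = [p if p >= min_p else 0.0 for p in weights]
    # A temperature above 1 flattens the distribution, below 1 sharpens it.
    if temperature != 1.0:
        weights = [w ** (1.0 / temperature) for w in weights]
    # random.choices normalises the weights internally.
    return rng.choices(range(len(weights)), weights=weights, k=1)[0]

# Toy distribution over three tokens; the last one falls below min_p.
rng = random.Random(0)
draws = [
    sample_next([0.6, 0.3999, 0.0001], temperature=1.1, min_p=0.001, rng=rng)
    for _ in range(200)
]
```

In this toy run the third token can never be drawn, because its probability is below the threshold. Sampling one word at a time like this is simple, but it never reconsiders earlier choices.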

BTW, I created an RNN-based Polish poetry generator and utilized Jeremy’s ideas of adding special tokens for uppercase and capitalized words. Adding a token for line breaks was also a good idea. Many thanks, Jeremy!

As the Polish language is morphologically rich, due to cases, gender forms and a large vocabulary, I’m working with sub-word units: syllables.

I am also interested in how we can implement feature importance calculation for tabular data in fully connected networks.
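One model-agnostic option is permutation importance: shuffle one column at a time and measure how much the error grows. It is a swapped-in technique, not something proposed in this thread, and it treats the network as a black box. In the sketch below `toy_model` stands in for a trained network, and the data is random, so every name and number is hypothetical.

```python
import random

def permutation_importance(predict, rows, targets, n_features, seed=0):
    """Return, per column, the increase in mean squared error after shuffling it."""
    rng = random.Random(seed)

    def mse(data):
        return sum((predict(r) - t) ** 2 for r, t in zip(data, targets)) / len(data)

    baseline = mse(rows)
    importances = []
    for col in range(n_features):
        # Shuffle one column, leaving all other columns untouched.
        shuffled = [r[col] for r in rows]
        rng.shuffle(shuffled)
        permuted = [r[:col] + [v] + r[col + 1:] for r, v in zip(rows, shuffled)]
        importances.append(mse(permuted) - baseline)
    return importances

def toy_model(row):
    """Stand-in for a trained network: strong on column 0, weak on 1, ignores 2."""
    return 3.0 * row[0] + 0.5 * row[1]

data_rng = random.Random(42)
rows = [[data_rng.random() for _ in range(3)] for _ in range(200)]
targets = [toy_model(r) for r in rows]

scores = permutation_importance(toy_model, rows, targets, n_features=3)
```

A column the model ignores gets a score of zero, and the ranking of the other scores reflects how much the predictions depend on each column. With a real model, `predict` would wrap the network's forward pass on a validation set.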

Main one would be ‘beam search’.
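A minimal sketch of beam search, assuming the model can be wrapped as a function that returns next-token probabilities for a sequence. Instead of committing to one word at each step, it keeps the few best partial sequences. The bigram table and the names `beam_search` and `toy_model` are made up for illustration.

```python
import math

def beam_search(next_probs, start, steps, beam_width=2):
    """Keep the `beam_width` highest-scoring sequences at every step.

    next_probs(seq) returns a dict mapping each candidate next token
    to its probability given the sequence so far.
    """
    beams = [(0.0, [start])]  # (log-probability, tokens)
    for _ in range(steps):
        candidates = []
        for score, seq in beams:
            for token, p in next_probs(seq).items():
                if p > 0:
                    candidates.append((score + math.log(p), seq + [token]))
        # Sort by log-probability and keep only the best few.
        candidates.sort(key=lambda c: c[0], reverse=True)
        beams = candidates[:beam_width]
    return beams

# Toy bigram table standing in for a language model.
TABLE = {
    "I": {"liked": 0.6, "loved": 0.4},
    "liked": {"it": 0.5, "this": 0.5},
    "loved": {"this": 0.9, "it": 0.1},
}

def toy_model(seq):
    return TABLE[seq[-1]]

best_score, best_seq = beam_search(toy_model, "I", steps=2, beam_width=2)[0]
greedy_score, greedy_seq = beam_search(toy_model, "I", steps=2, beam_width=1)[0]
```

With a beam width of 1 this reduces to greedy decoding, which picks "liked" first and ends with probability 0.30. With a width of 2, the search keeps "loved" alive and finds "I loved this" at 0.36.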