Thank you @bencoman for your quick answer. Sure, it is the first notebook from the book. I checked the dependencies, and they look right.

The public notebook link: https://nbviewer.org/github/fastai/fastbook/blob/master/01_intro.ipynb

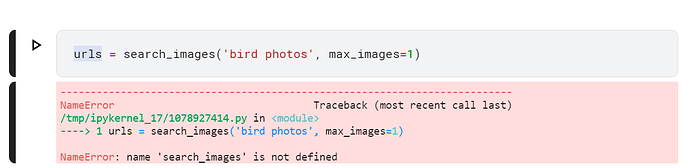

The full exception:

```
UnsupportedOperation                      Traceback (most recent call last)
~\AppData\Local\Temp\ipykernel_19780\1814909663.py in <module>
      2
      3 path = untar_data(URLs.IMDB)
----> 4 dls = TextDataLoaders.from_folder(path)
      5 dls.show(max_n=3)
      6 learn = text_classifier_learner(dls, AWD_LSTM, drop_mult=0.5, metrics=accuracy)

~\anaconda3\envs\torchCudaEnv\lib\site-packages\fastai\text\data.py in from_folder(cls, path, train, valid, valid_pct, seed, vocab, text_vocab, is_lm, tok_tfm, seq_len, splitter, backwards, **kwargs)
    253 if splitter is None:
    254     splitter = GrandparentSplitter(train_name=train, valid_name=valid) if valid_pct is None else RandomSplitter(valid_pct, seed=seed)
--> 255 blocks = [TextBlock.from_folder(path, text_vocab, is_lm, seq_len, backwards, tok=tok_tfm)]
    256 if not is_lm: blocks.append(CategoryBlock(vocab=vocab))
    257 get_items = partial(get_text_files, folders=[train,valid]) if valid_pct is None else get_text_files

~\anaconda3\envs\torchCudaEnv\lib\site-packages\fastai\text\data.py in from_folder(cls, path, vocab, is_lm, seq_len, backwards, min_freq, max_vocab, **kwargs)
    240 def from_folder(cls, path, vocab=None, is_lm=False, seq_len=72, backwards=False, min_freq=3, max_vocab=60000, **kwargs):
    241     "Build a TextBlock from a path"
--> 242     return cls(Tokenizer.from_folder(path, **kwargs), vocab=vocab, is_lm=is_lm, seq_len=seq_len,
    243                backwards=backwards, min_freq=min_freq, max_vocab=max_vocab)
    244

~\anaconda3\envs\torchCudaEnv\lib\site-packages\fastai\text\core.py in from_folder(cls, path, tok, rules, **kwargs)
    278 def from_folder(cls, path, tok=None, rules=None, **kwargs):
    279     path = Path(path)
--> 280     if tok is None: tok = WordTokenizer()
    281     output_dir = tokenize_folder(path, tok=tok, rules=rules, **kwargs)
    282     res = cls(tok, counter=load_pickle(output_dir/fn_counter_pkl),

~\anaconda3\envs\torchCudaEnv\lib\site-packages\fastai\text\core.py in __init__(self, lang, special_toks, buf_sz)
    114 "Spacy tokenizer for lang"
    115 def __init__(self, lang='en', special_toks=None, buf_sz=5000):
--> 116     import spacy
    117     from spacy.symbols import ORTH
    118     self.special_toks = ifnone(special_toks, defaults.text_spec_tok)

~\anaconda3\envs\torchCudaEnv\lib\site-packages\spacy\__init__.py in <module>
      9
     10 # These are imported as part of the API
---> 11 from thinc.api import prefer_gpu, require_gpu, require_cpu  # noqa: F401
     12 from thinc.api import Config
     13

~\anaconda3\envs\torchCudaEnv\lib\site-packages\thinc\__init__.py in <module>
      3
      4 from .about import __version__
----> 5 from .config import registry

~\anaconda3\envs\torchCudaEnv\lib\site-packages\thinc\config.py in <module>
     11 from pydantic.main import ModelMetaclass
     12 from pydantic.fields import ModelField
---> 13 from wasabi import table
     14 import srsly
     15 import catalogue

~\anaconda3\envs\torchCudaEnv\lib\site-packages\wasabi\__init__.py in <module>
     10 from .about import __version__  # noqa
     11
---> 12 msg = Printer()

~\anaconda3\envs\torchCudaEnv\lib\site-packages\wasabi\printer.py in __init__(self, pretty, no_print, colors, icons, line_max, animation, animation_ascii, hide_animation, ignore_warnings, env_prefix, timestamp)
     54 self.pretty = pretty and not env_no_pretty
     55 self.no_print = no_print
---> 56 self.show_color = supports_ansi() and not env_log_friendly
     57 self.hide_animation = hide_animation or env_log_friendly
     58 self.ignore_warnings = ignore_warnings

~\anaconda3\envs\torchCudaEnv\lib\site-packages\wasabi\util.py in supports_ansi()
    262     if "ANSICON" in os.environ:
    263         return True
--> 264     return _windows_console_supports_ansi()
    265
    266 return True

~\anaconda3\envs\torchCudaEnv\lib\site-packages\wasabi\util.py in _windows_console_supports_ansi()
    234     raise ctypes.WinError()
    235
--> 236 console = msvcrt.get_osfhandle(sys.stdout.fileno())
    237 try:
    238     # Try to enable ANSI output support

~\anaconda3\envs\torchCudaEnv\lib\site-packages\ipykernel\iostream.py in fileno(self)
    309         return self._original_stdstream_copy
    310     else:
--> 311         raise io.UnsupportedOperation("fileno")
    312
    313 def _watch_pipe_fd(self):

UnsupportedOperation: fileno
```
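The last frames of the traceback show where it breaks: while spaCy is being imported, wasabi checks whether the Windows console supports ANSI colors and calls `sys.stdout.fileno()`, and inside the Jupyter kernel `sys.stdout` is ipykernel's stream object, which raises `io.UnsupportedOperation` instead of returning a file descriptor. A minimal sketch to check that part in isolation, assuming it is run in a cell of the same kernel (`stream_has_fileno` is a hypothetical helper, not part of wasabi, spaCy or ipykernel):

```python
import io
import sys


def stream_has_fileno(stream) -> bool:
    """Return True if `stream` exposes an OS-level file descriptor.

    Hypothetical helper: it calls fileno() the same way wasabi's
    _windows_console_supports_ansi() does in the traceback, but catches
    the failure instead of letting it propagate.
    """
    try:
        stream.fileno()
    except (io.UnsupportedOperation, AttributeError, ValueError):
        # UnsupportedOperation: stream has no descriptor (e.g. ipykernel's
        # OutStream, io.StringIO); AttributeError: no fileno() at all;
        # ValueError: the stream has been closed.
        return False
    return True


if __name__ == "__main__":
    # In a notebook cell __name__ is "__main__", so this also runs there.
    print("sys.stdout type:", type(sys.stdout).__name__)
    print("has fileno:", stream_has_fileno(sys.stdout))
```

If this prints `False` inside the notebook but `True` when the same file is run with plain `python` from a terminal, that would point at the kernel's stdout rather than at the fastai code itself.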

Thanks a lot in advance.