When thinking of models, reverse how you would normally describe them. The body is the bottom and the head is the top. Sorta like a tree and its roots, or even a person.

I think of it as a pyramid. The base is the largest part (your input image), and the head is the top.
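To make the body/head picture a bit more concrete, here is a rough sketch in plain Python. The layer names mirror the top-level children of a torchvision-style ResNet, written out by hand so it runs without PyTorch. The cut index of `-2` is an assumption for illustration, not something stated in this thread.

```python
# Top-level child names of a torchvision-style ResNet, in forward order.
# (Listed by hand here so the sketch runs without PyTorch installed.)
layers = ["conv1", "bn1", "relu", "maxpool",
          "layer1", "layer2", "layer3", "layer4",
          "avgpool", "fc"]

cut = -2  # assumed cut point: everything before the final pooling + linear layers

body = layers[:cut]  # bottom of the pyramid: sees the input image first
head = layers[cut:]  # top of the pyramid: produces the final predictions

print("body:", body)
print("head:", head)
```

For transfer learning, the body is the part you would typically keep pretrained, and the head is the part you would swap out for your own task.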

Can you share tips for navigating the fastai code?

Do you have a local checkout of fastai and open notebooks from there? Did you load the generated python code into an IDE? Do you rely on doc() function and links to the source on github?

I tried the last option, but I quickly end up with a mess of tabs open to GitHub, dev.fast.ai and fastcore.fast.ai.

Also, I’d like to take advantage of the fact that everything is a notebook, but I couldn’t find a good way of navigating through the library other than grepping for the symbol I want, or matching the doc page with the notebook filename. And again there’s an explosion of open tabs, this time to Jupyter notebooks.

Some discussion over here: https://forums.fast.ai/t/source-code-mid-level-api/65755/181 (VSCode, vim … options)
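One more option that avoids the tab explosion, as a minimal sketch: Python's standard `inspect` module can tell you which generated `.py` file and line a symbol lives in, so you can jump there in whatever editor you use. The `where_is` helper is a made-up name for illustration, and `json.dumps` is only a stand-in so the snippet runs without fastai installed.

```python
import inspect
import json  # stand-in library; swap in the fastai symbol you care about

def where_is(obj):
    """Return (source file, first line number) for a function or class."""
    path = inspect.getsourcefile(obj)
    _, lineno = inspect.getsourcelines(obj)
    return path, lineno

path, lineno = where_is(json.dumps)
print(f"{path}:{lineno}")
```

This only works for objects defined in Python source (not C extensions), which should cover the generated library code being discussed here.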

Hi,

I don’t seem to have the questions listed in the Questionnaire section. Can someone please share the link with the correct notebook? ---- I will pick it up from the github Fastbook page.

Check the GitHub repo when in doubt (it has the questions)

Yup. Will pick it up from there. Thanks

If I listened to the stream correctly, @jeremy mentioned the course follows the book.

Does it mean fastbook covers part1-v4 only or does it cover both part1-v4 + part2-v4 (upcoming)?

Also, what do you think of this strategy? Read chapter 2 before lesson2, read chapter 3 before lesson 3, and so on and so forth…

Thanks!

So it seems people are recommending consuming the generated Python code rather than the notebooks/docs. I’ll go back to that then.

Just asked him, here is his answer:

Part 1 and Part 2 will both be from the book, and we’ll try to cover all of it, time permitting. Part 2 will probably include other parts as well, as there are more chapters we’re still wanting to write (online chapters), and we’ll possibly look into object detection, audio, etc.

Thanks Zachary

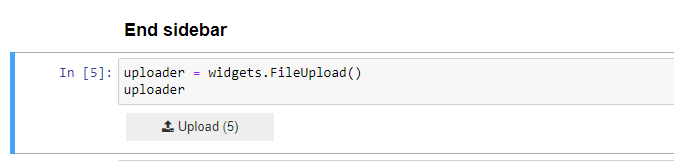

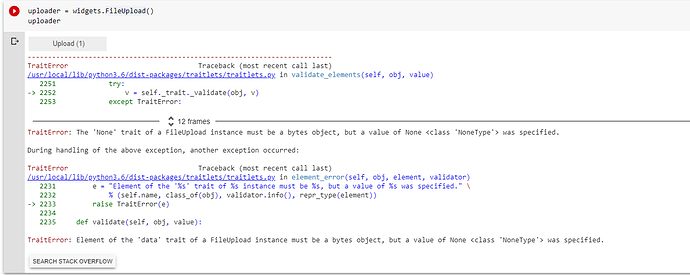

As stated before, widgets will not work in Colab out of the box and require some intensive conversion to bring them over.

I guess I shall manually drop images into Google Colab and then refer to them wherever required.

If you want to explore the widgets too, Paperspace is recommended (there’s a free tier now).

Sure. I will look into Paperspace. BTW, if I manually drop the image in and rerun the cells to test whether my image is of a cat or not, I see the error below. Any thoughts?

In Colab you can use:

from google.colab import files

uploaded = files.upload()
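`files.upload()` hands back a dict mapping each filename to its raw bytes. As a minimal sketch of what to do next, the helper below (a hypothetical name, `save_uploads`) writes those bytes to disk so you get a normal file path to work with. A fake dict stands in for the real upload so the snippet also runs outside Colab.

```python
import tempfile
from pathlib import Path

def save_uploads(uploaded, dest="uploads"):
    """Write each {filename: bytes} entry to dest and return the saved paths."""
    dest = Path(dest)
    dest.mkdir(parents=True, exist_ok=True)
    saved = []
    for name, data in uploaded.items():
        target = dest / Path(name).name  # drop any directory part of the name
        target.write_bytes(data)
        saved.append(target)
    return saved

# In Colab you would get `uploaded` from:
#   from google.colab import files
#   uploaded = files.upload()
# Here a fake dict stands in so the sketch runs anywhere.
uploaded = {"cat.jpg": b"fake image bytes"}
paths = save_uploads(uploaded, dest=tempfile.mkdtemp())
print(paths)
```

The returned paths can then be passed to whatever image-loading call the notebook uses in place of the upload widget.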

Jeremy also suggested Paperspace (it is great) for the next lesson.

I would recommend using the fastai library for the images.

Follow the documentation:

http://dev.fast.ai/data.transforms#get_image_files

http://dev.fast.ai/data.external

IMHO it is well done with examples, and we could contribute
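If it helps to see roughly what a helper like `get_image_files` gives you, here is a standard-library approximation: it walks a folder recursively and keeps files whose extension looks like an image. This is a sketch under my own assumptions, not fastai's actual implementation, and `list_image_files` is a made-up name.

```python
import mimetypes
import tempfile
from pathlib import Path

# Extensions the stdlib's MIME table classifies as images (e.g. .jpg, .png).
IMAGE_EXTS = {ext for ext, mime in mimetypes.types_map.items()
              if mime.startswith("image/")}

def list_image_files(root):
    """Recursively collect files under root whose extension looks like an image."""
    root = Path(root)
    return sorted(p for p in root.rglob("*")
                  if p.is_file() and p.suffix.lower() in IMAGE_EXTS)

# Build a tiny throwaway folder to try it on.
demo = Path(tempfile.mkdtemp())
(demo / "sub").mkdir()
for name in ["a.jpg", "sub/b.png", "notes.txt"]:
    (demo / name).write_bytes(b"")

found = list_image_files(demo)
print([p.name for p in found])
```

In practice you would use the library function from the docs linked above; the point here is only that the result is a plain list of paths you can index, filter, or open.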

@muellerzr, I have set up an account on Paperspace. The widget does not throw an error, but it does not upload the picture that I select after clicking upload. Any thoughts?