Thanks Zachary

As stated before, widgets will not work in Colab out of the box and require some intensive conversion to bring them over.

I guess I will manually drop images into Google Colab and then refer to them wherever required.

If you want to explore the widgets too, I’d recommend using Paperspace (there’s a free tier now).

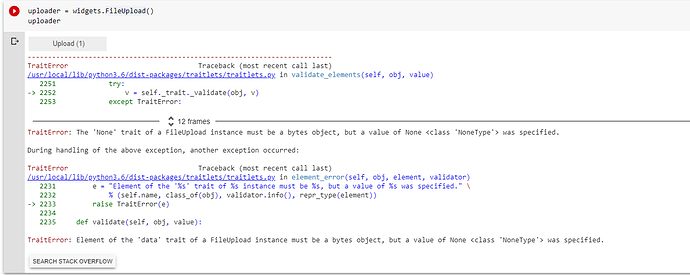

Sure, I will look into Paperspace. BTW, if I manually drop the image and rerun the cells to test whether my image is of a cat or not, I see the error below. Any thoughts?

In Colab you can use:

from google.colab import files

uploaded = files.upload()
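As a rough sketch of what to do next: in Colab, `files.upload()` returns a dict that maps each file name to its raw bytes, so nothing lands in an images folder unless you write it there yourself. The helper name `save_uploads` and the `images` destination are my own choices for illustration, not part of Colab or fastai.

```python
from pathlib import Path

def save_uploads(uploaded, dest="images"):
    """Write each uploaded file (name -> bytes) into `dest` and return the paths.

    `save_uploads` and the default folder name are hypothetical, for illustration.
    """
    dest = Path(dest)
    dest.mkdir(parents=True, exist_ok=True)
    paths = []
    for name, data in uploaded.items():
        # Keep only the file name, dropping any directory part.
        path = dest / Path(name).name
        path.write_bytes(data)
        paths.append(path)
    return paths

# In a Colab cell (not runnable outside Colab):
# from google.colab import files
# uploaded = files.upload()
# print(save_uploads(uploaded))
```

After that, the saved paths can be referenced from the rest of the notebook like any other file on disk.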

Jeremy also suggested Paperspace (it is great) for the next lesson.

I would recommend using the fastai library for the images.

Follow the documentation:

http://dev.fast.ai/data.transforms#get_image_files

http://dev.fast.ai/data.external

IMHO it is well written, with examples, and we could contribute to it.

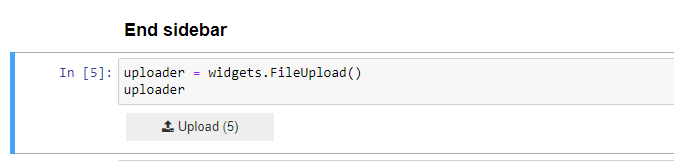

@muellerzr, I have set up an account on Paperspace. The widget does not throw an error, but it does not upload the picture that I select by clicking upload. Any thoughts?

Judging from the picture, it seems to be working fine.

Add this to check:

img = PILImage.create(uploader.data[0])

img

It uses Pillow.

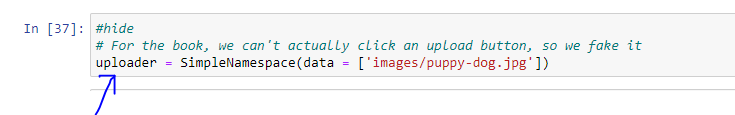

My bad. I was overwriting the contents of the uploader object by executing the line below. We should use either the uploader object or the line below, not both. Also, I assumed the uploader would save the selected image in the Images folder, which is not the case.
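For anyone hitting the same thing, here is a minimal sketch of the pitfall. The actual line is only visible in the screenshot, so the stand-in objects and the path `images/example_cat.jpg` are assumptions for illustration; `SimpleNamespace` just mimics an object with a `.data` list.

```python
from types import SimpleNamespace

# Stand-in for the upload widget: after a real upload, `.data` would hold
# the raw bytes of each selected file (assumed here for illustration).
uploader = SimpleNamespace(data=[b"bytes of the image I selected"])

# Keep a second name on the widget so the overwrite is visible afterwards.
widget = uploader

# A later cell rebinds the same name to a hard-coded path (hypothetical),
# so `uploader` no longer refers to the widget at all.
uploader = SimpleNamespace(data=["images/example_cat.jpg"])

print(uploader.data[0])    # images/example_cat.jpg
print(uploader is widget)  # False
```

Running only one of the two assignments keeps `uploader.data[0]` pointing at what you expect.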

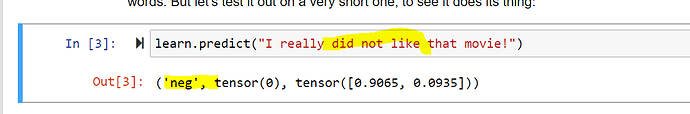

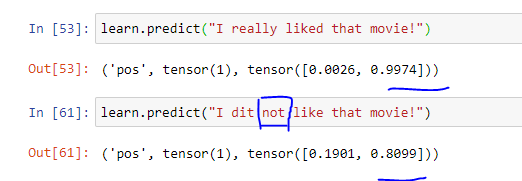

I understand NLP has not been discussed much yet, but I was curious why the architecture from lecture 1 thinks the review below is 80% positive. Thoughts?

I tried that and got a negative prediction. Do you think it’s because you have a spelling error (i.e., “dit” instead of “did”)?

Did you check without the word “really”? Because the initial one here did not have “really”. If you do end up trying this out, could you see what happens if you also make the error ‘dit’?

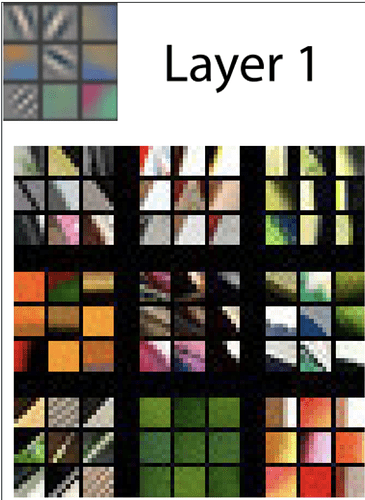

I’m trying to better understand the visualizations in the “What our image recognizer learned” section of ch1. My questions are about each of the 9 small squares to the left of the text “Layer 1”:

- The text says it’s a subset of the features. I thought that “features” in this case would refer to each pixel of the input. Is this a picture of a subset of weights or features?

- If weights, which subset of weights is it? Is it the same concept as a “filter” for a CNN?

Thanks!

Hey Konstantin,

If you are referring to this sentence: “Note that for each layer only a subset of the features are shown; in practice there are thousands across all of the layers.”

I think this refers to the image below it, where 3x3 grids of grey squares show examples of different ‘features’ (straight lines, circles, curved lines, etc.). The sentence is explaining that this is just a small sample and that there would be thousands of such examples for every layer. As it says in the paragraph above, these are visual reconstructions of the weights of each layer, with each 3x3 grid being an example of a ‘feature’ identified in that layer.
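On the “filter” part of the question, a toy sketch may help, under the assumption that the layer is convolutional: a filter is a small grid of weights, and the ‘feature’ is the pattern those weights respond to. The image, the filter values and the helper `apply_filter` below are made up for illustration and are not taken from the book.

```python
def apply_filter(image, kernel):
    """Slide `kernel` over `image` (no padding) and sum the elementwise products.

    This is the cross-correlation that deep learning libraries call convolution.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

# 4x6 toy image: dark left half (0), bright right half (1).
image = [[0, 0, 0, 1, 1, 1] for _ in range(4)]

# 3x3 grid of weights that responds to dark-to-bright vertical edges.
vertical_edge = [[-1, 0, 1],
                 [-1, 0, 1],
                 [-1, 0, 1]]

feature_map = apply_filter(image, vertical_edge)
print(feature_map)  # [[0, 3, 3, 0], [0, 3, 3, 0]]
```

The output is large only where the window straddles the edge, which is the sense in which these weights “detect” a vertical-edge feature. In a trained network the weights are learned rather than written by hand.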

I hope that makes some more sense?

We’ll be covering that in the next lesson.

I’ve added detailed lesson notes, which can be accessed via the Drive link. When the course is released, I will push the notes to an nbdev repo.

What is the best way to view the notes, @kushaj?

@sylvaint There are different Markdown readers, and any one of them would render these perfectly.

I am trying to start reading the fastai2 code. Someone posted Jeremy’s instructions from a previous class: https://www.youtube.com/watch?v=Z0ssNAbe81M&feature=youtu.be&t=3809

I cloned the fastai2 repo and activated the conda env. Nevertheless, Ctrl-T doesn’t work for me: I can’t search for symbols across files. I am using ms-python.python 2020.3.69010, VS Code 1.43.1, and Ubuntu 18.04.

Any suggestions?