I would like to invite you to an open collaboration on studying animal vocalizations. Why is this important?

If we can show that animals have a language, if we can start to get a glimpse into how they communicate and what they say, that could be a pivotal moment for how humanity approaches nature and how we treat animals.

But arriving at this goal will not happen overnight. The road there leads through investigating ways of working with animal vocalizations, and we are only getting started.

My proposition is this - if you would like to help in this endeavor, if you are looking for an interesting project to apply your learnings from the course, please consider joining me in open_collaboration_on_audio_classification.

The above will link to a starter notebook where I walk you through the first dataset we will work on. It is a compilation of 7285 macaque coo calls from 8 individuals. Many believe that being able to identify the speaker is a necessary determinant of a language. Can you train models that will identify which call originated from which individual?

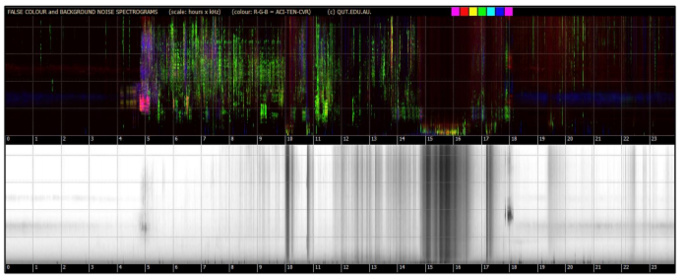
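If you want to set up your own experiments on the "which individual made this call" task, one thing worth getting right early is the train/validation split. Below is a minimal sketch, not the code from the starter notebook, of a split that keeps every individual represented in both sets. The file names, the `(path, individual)` pair format, and the 20% validation fraction are all assumptions made for illustration.

```python
import random
from collections import defaultdict

def stratified_split(items, valid_frac=0.2, seed=42):
    """Split (path, individual) pairs so each individual keeps roughly
    the same share of calls in the training and validation sets."""
    by_individual = defaultdict(list)
    for path, individual in items:
        by_individual[individual].append((path, individual))

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, valid = [], []
    for individual in sorted(by_individual):
        calls = by_individual[individual]
        rng.shuffle(calls)
        # Hold out at least one call per individual for validation.
        n_valid = max(1, round(len(calls) * valid_frac))
        valid.extend(calls[:n_valid])
        train.extend(calls[n_valid:])
    return train, valid

# Hypothetical stand-in for the real file list: 8 individuals, 10 calls each.
items = [
    (f"call_{ind}_{i}.wav", f"individual_{ind}")
    for ind in range(8)
    for i in range(10)
]
train, valid = stratified_split(items)
print(len(train), len(valid))  # 64 16
```

Splitting per individual rather than over the whole list means a rare individual cannot end up entirely in one set, which would make the validation numbers hard to interpret.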

In the notebook, I walk you through all the steps necessary to load the data and train a simple CNN model. With just 16 seconds of fine-tuning a pretrained CNN, we get to an error rate of 6.1%! Can you improve on this result? Can you share with others interesting ways of working with the data or some insights into the dataset?
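If you would like to compare your results against that number, here is a small sketch of how an error rate like this can be computed from a model's predictions, along with a per-individual breakdown of the mistakes. The labels and predictions below are made up for illustration, and the helper names are my own rather than anything from the notebook. For context, if the 8 individuals were equally represented (an assumption I have not verified for this dataset), random guessing would give an error rate of 1 - 1/8 = 87.5%.

```python
from collections import Counter

def error_rate(predictions, targets):
    """Fraction of calls assigned to the wrong individual."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    if not targets:
        raise ValueError("cannot compute an error rate on an empty set")
    wrong = sum(p != t for p, t in zip(predictions, targets))
    return wrong / len(targets)

def errors_by_individual(predictions, targets):
    """Count misclassified calls, keyed by the true individual."""
    return Counter(t for p, t in zip(predictions, targets) if p != t)

# Made-up validation labels and model predictions (illustration only).
targets     = ["A", "A", "B", "B", "C", "C", "D", "D"]
predictions = ["A", "B", "B", "B", "C", "C", "D", "A"]

rate = error_rate(predictions, targets)
per_individual = errors_by_individual(predictions, targets)
print(f"error rate: {rate:.1%}")  # error rate: 25.0%
print(dict(per_individual))       # {'A': 1, 'D': 1}
```

The per-individual counts are often more informative than the single number, since they show whether the errors are concentrated on a few individuals.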

We are only getting started on this work, and there is so much that can be done. There is immense value in publicly available code that researchers and students in the field can refer to. Also, I have never attempted an open collaboration like this, so I would really appreciate it if you would join forces with me.

The dataset itself is interesting: it could potentially be the MNIST of audio research. You can train on it to great success using any modern GPU. If it proves too easy, I’ll look for the audio equivalents of Imagenette and ImageWoof. For now, I feel there is still a lot one could explore here.

It would be an honor if you joined me on this journey so that we can learn together and maybe help bring a positive change to the world.

I realize decoding animal communication might seem like a very challenging goal. But there are a lot of reasons to be optimistic about our chances. If you would like to learn more about how we plan to tackle this, please check out The Earth Species website. I also invite you to listen to an NPR Invisibilia episode, Two Heartbeats a Minute, that aired a couple of days ago.