This post includes:

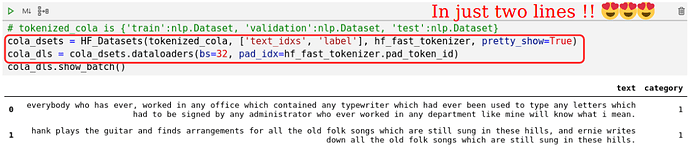

- Integration of fastai and hf/nlp: create fastai `Dataloaders` from `nlp.Datasets`

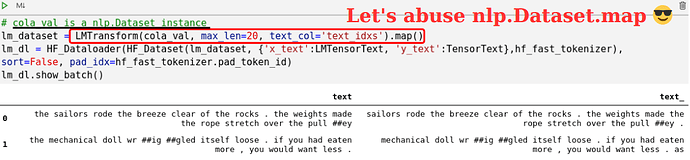

- A method for creating an aggregated `nlp.Dataset` for LM, MLM, … from any `nlp.Dataset`.

`LMTransform` is just a class with three methods:

    class LMTransform(AggregateTransform):
        def __init__(self, hf_dset, max_len, text_col, x_text_col='x_text', y_text_col='y_text', **kwargs):
            self.text_col, self.x_text_col, self.y_text_col = text_col, x_text_col, y_text_col
            # +1 because x and y are the same window offset by one token
            self._max_len = max_len + 1
            self.residual_len, self.new_text = self._max_len, []
            super().__init__(hf_dset, inp_cols=[text_col], out_cols=[x_text_col, y_text_col], init_attrs=['residual_len', 'new_text'], **kwargs)

        def accumulate(self, text): # *inp_cols
            "text: a list of indices"
            usable_len = len(text)
            cursor = 0
            while usable_len != 0:
                # take as much as still fits into the current window
                use_len = min(usable_len, self.residual_len)
                self.new_text += text[cursor:cursor+use_len]
                self.residual_len -= use_len
                usable_len -= use_len
                cursor += use_len
                if self.residual_len == 0:
                    self.commit_example(self.create_example())

        def create_example(self):
            # After all data has been read, the accumulated new_text might hold fewer than two tokens.
            if len(self.new_text) >= 2:
                example = {self.x_text_col:self.new_text[:-1], self.y_text_col:self.new_text[1:]}
            else:
                example = None # mark "don't commit this"
            # reset accumulators
            self.new_text = []
            self.residual_len = self._max_len
            return example
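
To make the accumulation logic easier to follow in isolation, here is a minimal standalone sketch of the same idea in plain Python, without `AggregateTransform` or hf/nlp. The function name `pack_lm_examples` and the toy token lists are hypothetical, made up for illustration only. The final flush of the leftover buffer is my assumption about what happens once all data has been read; in the real class that part would be handled outside the three methods shown above.

```python
from typing import Iterable, Iterator, List, Tuple

def pack_lm_examples(
    token_lists: Iterable[List[int]], max_len: int
) -> Iterator[Tuple[List[int], List[int]]]:
    """Concatenate token lists and cut them into windows of max_len + 1 tokens.

    Each window yields (x, y), where y is x shifted left by one token.
    """
    window = max_len + 1  # one extra token so x and y both have max_len tokens
    buffer: List[int] = []
    for tokens in token_lists:
        cursor = 0
        while cursor < len(tokens):
            # take as much as still fits into the current window
            take = min(len(tokens) - cursor, window - len(buffer))
            buffer.extend(tokens[cursor:cursor + take])
            cursor += take
            if len(buffer) == window:
                yield buffer[:-1], buffer[1:]
                buffer = []
    # Assumed end-of-data behaviour: keep the leftover only if it can form
    # at least one (input, target) pair, i.e. it holds two or more tokens.
    if len(buffer) >= 2:
        yield buffer[:-1], buffer[1:]

# Toy "tokenized documents" (hypothetical data)
docs = [[1, 2, 3], [4, 5, 6, 7], [8, 9, 10]]
examples = list(pack_lm_examples(docs, max_len=3))
for x, y in examples:
    print(x, y)
```

Note that document boundaries are ignored here: a window can start in one text and end in the next, which is exactly what makes every example (except possibly the last) the same length.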

See `hf_nlp_aggregation_and_with_fastai.ipynb` for the full code. It also shows how to turn a dataset into a dataloader for ELECTRA.

“Pretrain MLM and finetune on GLUE with fastai”

This post is actually the 7th post of the series, and it deprecates the 2nd and 3rd:

- (deprecated) TextDataLoader - faster/as fast as, but also with sliding window, cache, and progress bar

- (deprecated) Novel Huggingface/nlp integration: train on and show_batch hf/nlp datasets

Things on the way

- Reproduce ELECTRA (pretraining from scratch)

- Try to improve GLUE finetuning with ranger, fp16, one_cycle, and recent papers.

- Ensemble

- wnli tricks

I’ll post all updates to this series on my Twitter (Richard Wang) so you won’t miss them.

Totally up to you though, I also see the value in keeping separate topics in separate discussions…