Here are the questions:

- If the dataset for your project is so big and complicated that working with it takes a significant amount of time, what should you do?

Perhaps create the simplest possible dataset that allows for quick and easy prototyping. For example, Jeremy created a “human numbers” dataset.

- Why do we concatenate the documents in our dataset before creating a language model?

To create one continuous stream of input/target words, which can then be split into batches of significant size.
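A minimal sketch of the idea, using a made-up three-document corpus (the `docs` list and `seq_len` value are assumptions for illustration): once the documents form one stream, each target is simply the input window shifted by one token.

```python
# Hypothetical toy corpus; each document is already tokenized.
docs = [["one", "."], ["two", "."], ["three", "."]]

# Concatenate all documents into a single continuous token stream.
stream = [tok for doc in docs for tok in doc]

seq_len = 3  # illustrative sequence length
# Each input is seq_len tokens; its target is the same window shifted by one.
pairs = [(stream[i:i + seq_len], stream[i + 1:i + seq_len + 1])
         for i in range(0, len(stream) - seq_len, seq_len)]
```

Without concatenation, each short document would have to be padded or truncated separately before it could be cut into fixed-size windows.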

- To use a standard fully connected network to predict the fourth word given the previous three words, what two tweaks do we need to make?

- Use the same weight matrix for the three layers.

- Use the first word’s embeddings as the activations passed to the linear layer, add the second word’s embeddings to that layer’s output activations, and continue in the same way for the remaining words.

- How can we share a weight matrix across multiple layers in PyTorch?

Define one layer in the PyTorch model class, and use it multiple times in the `forward` method.

- Write a module which predicts the third word given the previous two words of a sentence, without peeking.

Same code as in the chapter, which takes three input words and predicts the fourth (for the two-word case, omit the final `x[:,2]` step):

    class LMModel1(Module):
        def __init__(self, vocab_sz, n_hidden):
            self.i_h = nn.Embedding(vocab_sz, n_hidden)  # input to hidden
            self.h_h = nn.Linear(n_hidden, n_hidden)     # hidden to hidden (shared)
            self.h_o = nn.Linear(n_hidden,vocab_sz)      # hidden to output

        def forward(self, x):
            h = F.relu(self.h_h(self.i_h(x[:,0])))
            h = h + self.i_h(x[:,1])
            h = F.relu(self.h_h(h))
            h = h + self.i_h(x[:,2])
            h = F.relu(self.h_h(h))
            return self.h_o(h)
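A possible two-word variant, sketched with plain `torch.nn.Module` rather than fastai's `Module`. The class name `LMModelTwoWords` and the sizes used below are assumptions for illustration, not code from the chapter. "Without peeking" is handled by only ever indexing the two input positions.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class LMModelTwoWords(nn.Module):  # hypothetical name, not from the chapter
    def __init__(self, vocab_sz, n_hidden):
        super().__init__()
        self.i_h = nn.Embedding(vocab_sz, n_hidden)  # input to hidden
        self.h_h = nn.Linear(n_hidden, n_hidden)     # hidden to hidden (shared)
        self.h_o = nn.Linear(n_hidden, vocab_sz)     # hidden to output

    def forward(self, x):
        # x holds only the two input words, so the target cannot leak in.
        h = F.relu(self.h_h(self.i_h(x[:, 0])))
        h = h + self.i_h(x[:, 1])
        h = F.relu(self.h_h(h))
        return self.h_o(h)

model = LMModelTwoWords(vocab_sz=30, n_hidden=64)
x = torch.randint(0, 30, (8, 2))   # batch of 8 two-word contexts
logits = model(x)                  # one score per vocabulary entry
```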

- What is a recurrent neural network?

A refactoring of a multi-layer neural network as a loop.
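A minimal sketch of that refactoring, modelled on `LMModel1` but written with plain `torch.nn.Module`. The class name `LoopLM` and the sizes are assumptions for illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class LoopLM(nn.Module):  # hypothetical name
    def __init__(self, vocab_sz, n_hidden):
        super().__init__()
        self.i_h = nn.Embedding(vocab_sz, n_hidden)
        self.h_h = nn.Linear(n_hidden, n_hidden)
        self.h_o = nn.Linear(n_hidden, vocab_sz)

    def forward(self, x):
        h = 0  # broadcasts against the first embedding
        for i in range(x.shape[1]):   # one iteration per input word
            h = h + self.i_h(x[:, i])
            h = F.relu(self.h_h(h))
        return self.h_o(h)

model = LoopLM(vocab_sz=30, n_hidden=64)
x = torch.randint(0, 30, (8, 3))
out = model(x)
```

Because the loop runs over `x.shape[1]`, the same module works for any number of input words, which the hand-unrolled version cannot do.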

- What is hidden state?

The activations updated after each RNN step.

- What is the equivalent of hidden state in `LMModel1`?

It is also defined as `h` in `LMModel1`.

- To maintain the state in an RNN why is it important to pass the text to the model in order?

Because the hidden state is carried over from one batch to the next, independent of sequence length. This is only useful if each batch continues the text where the previous one left off, so the text must be passed in order.

- What is an unrolled representation of an RNN?

A representation without loops, depicted as a standard multilayer network

- Why can maintaining the hidden state in an RNN lead to memory and performance problems? How do we fix this problem?

Since the hidden state is maintained through every single call of the model, backpropagation has to compute gradients through all the past calls of the model as well. This can lead to high memory usage and slow training. Therefore, after every call, the `detach` method is called to drop the gradient history of previous calls while keeping the values of the hidden state.
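A small sketch of the pattern, using PyTorch's built-in `nn.RNN` as a stand-in for the chapter's hand-written model. The tensor sizes, the random data and the placeholder loss are assumptions for illustration.

```python
import torch
import torch.nn as nn

rnn = nn.RNN(input_size=4, hidden_size=8, batch_first=True)
h = torch.zeros(1, 2, 8)             # (num_layers, batch, hidden)

for step in range(3):
    x = torch.randn(2, 5, 4)         # (batch, seq_len, features), dummy data
    out, h = rnn(x, h)
    loss = out.pow(2).mean()         # placeholder loss for illustration
    loss.backward()                  # gradients flow only through this batch
    h = h.detach()                   # keep the values, drop the history
```

If the `detach` line is removed, the second `backward` call tries to go back through the first batch's graph, which PyTorch has already freed.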

- What is BPTT?

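Backpropagation through time: treating the unrolled RNN as one deep network and calculating gradients on it in the usual way. In practice the truncated variant is used, which calculates backpropagation only for the given batch and therefore only for the defined sequence length of the batch.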

- Write code to print out the first few batches of the validation set, including converting the token IDs back into English strings, as we showed for batches of IMDb data in Chapter 10.

The following function takes the `DataLoaders` object and the `vocab` we created in Chapter 12 and prints the first `n_tokens` tokens of the first `n_batches` batches.

    def show_valid_batches(dls, vocab, n_batches=3, n_tokens=100):
        batches = list(dls.valid)[:n_batches]
        x_y_dict = {0: 'x', 1: 'y'}
        for i in range(n_batches):
            for j in range(2):
                print(f"{x_y_dict[j]}{i}:")
                count = 0
                for k in batches[i][j]:
                    for l in k:
                        print(vocab[l], end=' ')
                        count +=1
                    print('')
                    if count > n_tokens:
                        count = 0
                        break
                print('...')
                print('\n')

Input:

    show_valid_batches(dls, vocab, n_batches=2, n_tokens=20)

Output:

    x0: thousand eighty three . eight thousand eighty four . eight thousand eighty five . eight thousand one hundred eighteen . eight thousand one hundred nineteen . eight thousand one hundred twenty . ...
    y0: eighty three . eight thousand eighty four . eight thousand eighty five . eight thousand eighty hundred eighteen . eight thousand one hundred nineteen . eight thousand one hundred twenty . eight ...
    x1: eighty six . eight thousand eighty seven . eight thousand eighty eight . eight thousand eighty eight thousand one hundred twenty one . eight thousand one hundred twenty two . eight thousand ...
    y1: six . eight thousand eighty seven . eight thousand eighty eight . eight thousand eighty nine thousand one hundred twenty one . eight thousand one hundred twenty two . eight thousand one ...

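- What does the `ModelResetter` callback do? Why do we need it?

It resets the hidden state of the model before every epoch and before every validation run. This ensures the model starts from a clean state before reading each continuous stretch of text, instead of carrying over state from text that does not precede it.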

- What are the downsides of predicting just one output word for each three input words?

There are words in between that are not being predicted and that is extra information for training the model that is not being used. To solve this, we apply the output layer to every hidden state produced to predict three output words for the three input words (offset by one).

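- Why do we need a custom loss function for `LMModel4`?

The model now returns predictions of shape bs x sl x vocab_sz and the targets have shape bs x sl, but `CrossEntropyLoss` expects flattened tensors, so both have to be flattened first.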
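One way such a loss function might look, as a sketch: the sizes and random tensors below are assumptions for illustration, and the vocabulary size is read from the last dimension of the predictions.

```python
import torch
import torch.nn.functional as F

def loss_func(inp, targ):
    # inp: (bs, sl, vocab_sz) -> (bs*sl, vocab_sz); targ: (bs, sl) -> (bs*sl,)
    return F.cross_entropy(inp.view(-1, inp.shape[-1]), targ.view(-1))

bs, sl, vocab_sz = 4, 16, 30                   # illustrative sizes
preds = torch.randn(bs, sl, vocab_sz)          # dummy model output
targs = torch.randint(0, vocab_sz, (bs, sl))   # dummy targets
loss = loss_func(preds, targs)
```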

- Why is the training of `LMModel4` unstable?

Because this network is effectively very deep, and this can lead to very small or very large gradients that don’t train well.

- In the unrolled representation, we can see that a recurrent neural network actually has many layers. So why do we need to stack RNNs to get better results?

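Because every layer of the unrolled network uses the same single weight matrix, which limits what it can learn. Stacking RNNs gives each layer its own weight matrix, so multiple layers can improve this.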

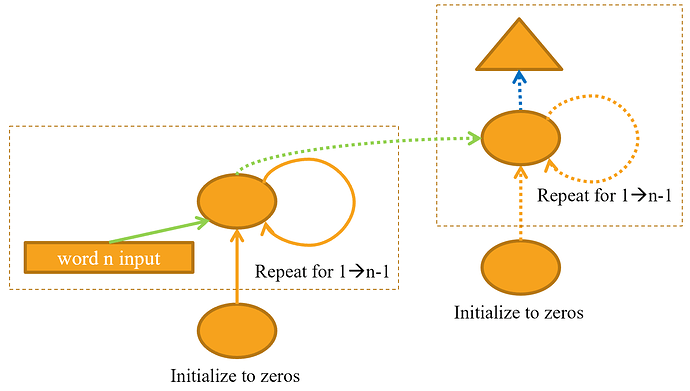

- Draw a representation of a stacked (multilayer) RNN.

- Why should we get better results in an RNN if we call `detach` less often? Why might this not happen in practice with a simple RNN?

- Why can a deep network result in very large or very small activations? Why does this matter?

Numbers that are just slightly higher or lower than one can lead to the explosion or disappearance of numbers after repeated multiplications. In deep networks, we have repeated matrix multiplications, so this is a big problem.
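A scalar stand-in for the repeated matrix multiplications makes the effect easy to see. The factors 1.1 and 0.9 and the depth of 100 are arbitrary choices for illustration.

```python
def repeat_multiply(factor, n_layers=100):
    """Multiply 1.0 by the same factor once per layer."""
    x = 1.0
    for _ in range(n_layers):
        x *= factor
    return x

grown = repeat_multiply(1.1)    # slightly above one: grows past 10,000
shrunk = repeat_multiply(0.9)   # slightly below one: shrinks below 0.0001
```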

- In a computer’s floating point representation of numbers, which numbers are the most precise?

Small numbers (close to zero, though not so tiny that they underflow). Floating-point values become less accurate the further they are from zero.
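The spacing between neighbouring floats can be checked directly. This sketch assumes Python 3.9 or later, where `math.ulp` is available; the three sample values are arbitrary.

```python
import math

# math.ulp(x) is the gap between x and the next representable float.
gap_small = math.ulp(0.001)   # tiny gap near zero
gap_one = math.ulp(1.0)       # 2**-52 for 64-bit floats
gap_large = math.ulp(1e6)     # much larger gap far from zero
```

The gap grows with the magnitude of the number, which is why very large activations lose precision.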

- Why do vanishing gradients prevent training?

Gradients that are zero can’t contribute to training because they don’t change any weights

- Why does it help to have two hidden states in the LSTM architecture? What is the purpose of each one?

a. One state remembers what happened earlier in the sentence

b. The other predicts the next token

- What are these two states called in an LSTM?

a. Cell state (long short-term memory)

b. Hidden state (predict next token)

- What is tanh, and how is it related to sigmoid?

It’s just a sigmoid function rescaled to the range of -1 to 1
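The rescaling can be written as tanh(x) = 2 * sigmoid(2x) - 1. A quick numerical check, with arbitrary sample points:

```python
import math

def sigmoid(x):
    return 1 / (1 + math.exp(-x))

def tanh_from_sigmoid(x):
    # Stretch sigmoid's (0, 1) output to (-1, 1); the input is scaled by 2.
    return 2 * sigmoid(2 * x) - 1

xs = [-3.0, -0.5, 0.0, 0.5, 3.0]
max_diff = max(abs(tanh_from_sigmoid(x) - math.tanh(x)) for x in xs)
```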

- What is the purpose of this code in `LSTMCell`?: `h = torch.stack([h, input], dim=1)`

This should actually be `torch.cat([h, input], dim=1)`. It joins the hidden state and the new input.
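The difference between the two calls shows up in the resulting shapes. The tensor sizes here are made up for illustration.

```python
import torch

h = torch.zeros(2, 3)      # (batch, n_hidden)
inp = torch.ones(2, 5)     # (batch, n_input)

# cat joins along an existing dimension: features end up side by side.
joined = torch.cat([h, inp], dim=1)      # shape (2, 8)

# stack inserts a new dimension, so all inputs must have the same shape;
# stacking h with inp would fail because (2, 3) and (2, 5) differ.
stacked = torch.stack([h, h], dim=1)     # shape (2, 2, 3)
```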

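- What does `chunk` do in PyTorch?

Splits a tensor into a given number of equally sized pieces (the last piece may be smaller if the size does not divide evenly).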
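A short illustration with made-up tensors:

```python
import torch

t = torch.arange(12).view(2, 6)
# Split dimension 1 into 3 chunks of 2 columns each.
a, b, c = t.chunk(3, dim=1)

# When the size is not divisible, the last chunk is smaller.
parts = torch.arange(10).chunk(4)
sizes = [len(p) for p in parts]
```

In the refactored `LSTMCell`, this is what lets one large linear layer compute all the gates at once before the result is split back into separate tensors.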

- Study the refactored version of `LSTMCell` carefully to ensure you understand how and why it does the same thing as the non-refactored version.

- Why can we use a higher learning rate for `LMModel6`?

Because LSTM provides a partial solution to exploding/vanishing gradients (?)

- What are the three regularisation techniques used in an AWD-LSTM model?

- Dropout

- Activation regularization

- Temporal activation regularization

- What is dropout?

Randomly setting some activations to zero during training.

- Why do we scale the weights with dropout? Is this applied during training, inference, or both?

a. The scale of a sum of activations depends on whether all activations are present or some are dropped with probability p. To correct the scale, the remaining activations are divided by (1-p).

b. In the implementation in the book, it is applied during training.

c. In principle the correction could be applied at either stage: dividing by (1-p) during training, or multiplying by (1-p) at inference.
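The effect of the rescaling can be checked numerically. This sketch uses `bernoulli_` to build the mask; the seed, `p` and the tensor of ones are arbitrary choices for illustration.

```python
import torch

torch.manual_seed(0)
p = 0.5                        # probability of dropping an activation
x = torch.ones(100_000)

# bernoulli_ fills the tensor in place with 1s (keep) and 0s (drop).
mask = x.new_empty(x.shape).bernoulli_(1 - p)
dropped = x * mask             # mean falls to roughly 1 - p
rescaled = dropped / (1 - p)   # mean is back to roughly 1
```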

- What is the purpose of this line from `Dropout`?: `if not self.training: return x`

When not in training mode, don’t apply dropout

- Experiment with `bernoulli_` to understand how it works.

- How do you set your model in training mode in PyTorch? In evaluation mode?

`Module.train()`, `Module.eval()`
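A small sketch of both calls on a made-up model containing a dropout layer:

```python
import torch
import torch.nn as nn

model = nn.Sequential(nn.Linear(4, 4), nn.Dropout(p=0.5))

model.train()                 # sets .training = True on all submodules
in_training = model.training

model.eval()                  # sets .training = False on all submodules
x = torch.randn(3, 4)
# With dropout inactive, two forward passes give identical outputs.
same = torch.equal(model(x), model(x))
```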

- Write the equation for activation regularization (in maths or code, as you prefer). How is it different to weight decay?

`loss += alpha * activations.pow(2).mean()`

It differs from weight decay in that it penalizes large activations rather than large weights.
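A simplified comparison of the two penalties. Here `activations` and `weights` are independent stand-in tensors, and the values of `alpha` and the weight decay factor are arbitrary, so this only shows which tensor each penalty acts on. In a real model the activations depend on the weights, so activation regularization still influences them indirectly.

```python
import torch

alpha = 2.0   # illustrative strength
activations = torch.randn(4, 16, 64, requires_grad=True)  # (bs, sl, n_hidden)
weights = torch.randn(64, 64, requires_grad=True)

ar_penalty = alpha * activations.pow(2).mean()  # penalizes large activations
wd_penalty = 0.1 * weights.pow(2).sum()         # weight decay penalizes large weights

ar_penalty.backward()   # only the activations receive a gradient here
```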

- Write the equation for temporal activation regularization (in maths or code, as you prefer). Why wouldn’t we use this for computer vision problems?

TAR focuses on making the activations of consecutive tokens similar:

    loss += beta * (activations[:,1:] - activations[:,:-1]).pow(2).mean()

The penalty compares activations at neighbouring positions in a sequence, which makes sense for text, where consecutive tokens are closely related. In computer vision there is no such sequence of tokens, and we want the model to learn to detect different features in an image. To achieve that, the activations might in fact have to differ quite a lot. Thus, using TAR to force neighbouring activations in a computer vision model to be similar might actually be quite detrimental.
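To see what the TAR term measures, it can be evaluated on two hand-made activation tensors. The helper name `tar`, the value of `beta` and the tensors are assumptions for illustration.

```python
import torch

def tar(activations, beta):
    # Difference between each time step and the previous one, along dim 1.
    return beta * (activations[:, 1:] - activations[:, :-1]).pow(2).mean()

beta = 1.0
# Identical activations at every time step: nothing to penalize.
smooth = torch.ones(2, 5, 3)                      # (bs, sl, n_hidden)
# Activations that flip between 0 and 1 at every step.
jumpy = torch.tensor([0., 1., 0., 1., 0.]).view(1, 5, 1).expand(2, 5, 3)

tar_smooth = tar(smooth, beta)
tar_jumpy = tar(jumpy, beta)
```

The penalty is zero when activations stay constant over time and grows with every jump between neighbouring steps.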

- What is “weight tying” in a language model?

The weights of the input-to-hidden layer are the same as the weights of the hidden-to-output layer. This basically means we assume that the mapping from English words to activations