Actually, the transforms probably shouldn’t be removed at the end of training, since that’s why you have an error: your model is still in half precision but the input you feed it isn’t. @jeremy let me know if you feel differently, but we should still be using half precision for inference, no?
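If you do end up feeding a half-precision model by hand, a minimal sketch is to cast each float input (e.g. an image batch) to the model’s dtype and device before calling it. `match_model` is a hypothetical helper, not a fastai API. It assumes the model’s first parameter has the dtype you want; mixed-precision setups often keep some layers (such as batchnorm) in fp32, so check which parameter you read. Don’t apply it to integer inputs such as token ids.

```python
import torch
from torch import nn


def match_model(x: torch.Tensor, model: nn.Module) -> torch.Tensor:
    """Cast a float input `x` to the dtype and device of `model`'s first parameter.

    Assumption: the first parameter reflects the precision the model runs in
    (e.g. float16 after converting it to half for mixed-precision training).
    """
    p = next(model.parameters())
    return x.to(device=p.device, dtype=p.dtype)
```

For a model converted with `.half()` and moved to the GPU, `model(match_model(x, model))` then receives a CUDA half tensor instead of a float one.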


Hi all, and @jeremy, @sgugger, @stas: `TextLMDataBunch` uses a lot of memory. I think this is a big issue, because training on 400 million words for 4 epochs should generalize better than 16 epochs on 100 million words.

I believe that what we see is mostly the memory overhead of multiprocessing, especially in `tokenizer.process_all`.

I have a machine with 32 GB of RAM. The largest CSV file I can pass to `TextLMDataBunch.from_csv` is 1.3 GB, with about 170 million words in the training set. At 1.4 GB, with 180 million words in the training set, I get a “broken process” error because there is not enough memory to pickle the results back to the calling process in `tokenizer.process_all`.

The setup:

- Memory usage is at 2.2 GB just before calling `TextLMDataBunch.from_csv` (OS + Jupyter + browser).
- `tokenizer.process_all` creates 12 processes with the default `chunksize=10000`.
- The process breaks around 80% of the way through tokenization with the 1.4 GB wiki (the 1.3 GB wiki passes).

What could be done:

Disclaimer: I do not know the use cases in language processing well enough to pinpoint the weaknesses in the following, but having looked through the code, I believe that most of the processing could be row-based. If the function `_jointext` were moved to the tokenization step, the input file could be split into n = n_cpus parts, one per process. Each process could read its part row by row from a file and write its results to another file to return them to the calling process. This way the multiprocessing memory overhead would be almost zero, because we only pass a filename to each process. Despite the extra serialization, I think it would be faster, since the process pool also serializes (pickles) the data in order to pass it to the parallel processes and back.
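A minimal sketch of this file-based scheme, under stated assumptions: one text per line (a CSV whose quoted fields contain newlines would need the `csv` module instead of plain line iteration), and a placeholder lowercase/whitespace tokenizer standing in for fastai’s `Tokenizer`. All function names are hypothetical. The input is streamed into contiguous shard files, and each worker receives only a shard filename and returns only an output filename, so nothing large is pickled.

```python
import os
from multiprocessing import Pool
from typing import List


def tokenize_line(line: str) -> List[str]:
    "Placeholder tokenizer (assumption): lowercase + whitespace split, not fastai's Tokenizer."
    return line.lower().split()


def split_file(path: str, n_parts: int, out_dir: str) -> List[str]:
    "Stream `path` into `n_parts` contiguous shard files without loading it into memory."
    with open(path, encoding="utf-8") as f:
        n_lines = sum(1 for _ in f)
    per_part = -(-n_lines // n_parts) or 1  # ceiling division, at least 1
    shard_paths = [os.path.join(out_dir, f"shard_{i}.txt") for i in range(n_parts)]
    shards = [open(p, "w", encoding="utf-8") for p in shard_paths]
    try:
        with open(path, encoding="utf-8") as f:
            for i, line in enumerate(f):
                shards[i // per_part].write(line)
    finally:
        for s in shards:
            s.close()
    return shard_paths


def tokenize_shard(in_path: str) -> str:
    "Worker: tokenize one shard row by row; only file names cross the process boundary."
    out_path = in_path + ".tok"
    with open(in_path, encoding="utf-8") as fin, open(out_path, "w", encoding="utf-8") as fout:
        for line in fin:
            fout.write(" ".join(tokenize_line(line)) + "\n")
    return out_path


def tokenize_file_parallel(path: str, n_workers: int, out_dir: str) -> List[str]:
    "Split the file, tokenize the shards in parallel, and return the output file names in order."
    shard_paths = split_file(path, n_workers, out_dir)
    with Pool(n_workers) as pool:
        return pool.map(tokenize_shard, shard_paths)
```

On platforms that start workers with `spawn`, call `tokenize_file_parallel` under an `if __name__ == "__main__":` guard. Because the shards are contiguous, concatenating the returned files in order reproduces the original row order.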

What do you think?

I’ve had more adventures with fp16 at inference time, this time with norm/denorm:

```python
learn.predict(data)
```

```
---------------------------------------------------------------------------
RuntimeError Traceback (most recent call last)
<ipython-input-42-f62f0b041cab> in <module>()
     13 data = data.type(torch.HalfTensor)
     14 data = data.cuda()
---> 15 cat, t1, t2 = learn.predict(data)

~/anaconda3/lib/python3.7/site-packages/fastai/basic_train.py in predict(self, item, **kwargs)
    249 "Return prect class, label and probabilities for `item`."
    250 self.callbacks.append(RecordOnCPU())
--> 251 batch = self.data.one_item(item)
    252 res = self.pred_batch(batch=batch)
    253 pred = res[0]

~/anaconda3/lib/python3.7/site-packages/fastai/basic_data.py in one_item(self, item, detach, denorm)
    147 ds = self.single_ds
    148 with ds.set_item(item):
--> 149 return self.one_batch(ds_type=DatasetType.Single, detach=detach, denorm=denorm)
    150
    151 def show_batch(self, rows:int=5, ds_type:DatasetType=DatasetType.Train, **kwargs)->None:

~/anaconda3/lib/python3.7/site-packages/fastai/basic_data.py in one_batch(self, ds_type, detach, denorm)
    134 w = self.num_workers
    135 self.num_workers = 0
--> 136 try: x,y = next(iter(dl))
    137 finally: self.num_workers = w
    138 if detach: x,y = to_detach(x),to_detach(y)

~/anaconda3/lib/python3.7/site-packages/fastai/basic_data.py in __iter__(self)
     70 for b in self.dl:
     71 #y = b[1][0] if is_listy(b[1]) else b[1] # XXX: Why is this line here?
---> 72 yield self.proc_batch(b)
     73
     74 @classmethod

~/anaconda3/lib/python3.7/site-packages/fastai/basic_data.py in proc_batch(self, b)
     63 "Proces batch `b` of `TensorImage`."
     64 b = to_device(b, self.device)
---> 65 for f in listify(self.tfms): b = f(b)
     66 return b
     67

~/anaconda3/lib/python3.7/site-packages/fastai/vision/data.py in _normalize_batch(b, mean, std, do_x, do_y)
     74 x,y = b
     75 mean,std = mean.to(x.device),std.to(x.device)
---> 76 if do_x: x = normalize(x,mean,std)
     77 if do_y and len(y.shape) == 4: y = normalize(y,mean,std)
     78 return x,y

~/anaconda3/lib/python3.7/site-packages/fastai/vision/data.py in normalize(x, mean, std)
     64 def normalize(x:TensorImage, mean:FloatTensor,std:FloatTensor)->TensorImage:
     65 "Normalize `x` with `mean` and `std`."
---> 66 return (x-mean[...,None,None]) / std[...,None,None]
     67
     68 def denormalize(x:TensorImage, mean:FloatTensor,std:FloatTensor, do_x:bool=True)->TensorImage:

RuntimeError: expected type torch.cuda.FloatTensor but got torch.cuda.HalfTensor
```

So I converted the mean and std to the type of the input data:

```python
def normalize(x:TensorImage, mean:FloatTensor,std:FloatTensor)->TensorImage:
    "Normalize `x` with `mean` and `std`."
    mean,std = mean.type(x.type()),std.type(x.type())
    return (x-mean[...,None,None]) / std[...,None,None]

def denormalize(x:TensorImage, mean:FloatTensor,std:FloatTensor, do_x:bool=True)->TensorImage:
    "Denormalize `x` with `mean` and `std`."
    mean,std = mean.type(x.type()),std.type(x.type())
    return x.cpu()*std[...,None,None] + mean[...,None,None] if do_x else x.cpu()
```

And got this:

```
~/anaconda3/lib/python3.7/site-packages/fastai/vision/data.py in denormalize(x, mean, std, do_x)
     70 "Denormalize `x` with `mean` and `std`."
     71 mean,std = mean.type(x.type()),std.type(x.type())
---> 72 return x.cpu()*std[...,None,None] + mean[...,None,None] if do_x else x.cpu()
     73
     74 def _normalize_batch(b:Tuple[Tensor,Tensor], mean:FloatTensor, std:FloatTensor, do_x:bool=True, do_y:bool=False)->Tuple[Tensor,Tensor]:

RuntimeError: "mul" not implemented for 'torch.HalfTensor'
```

After that, I changed `denormalize` to convert `x` to the type of `mean`:

```python
def denormalize(x:TensorImage, mean:FloatTensor,std:FloatTensor, do_x:bool=True)->TensorImage:
    "Denormalize `x` with `mean` and `std`."
    x = x.type(mean.type())
    return x.cpu()*std[...,None,None] + mean[...,None,None] if do_x else x.cpu()
```

It worked, but this looks hacky and I’d like to fix it properly. Any ideas how?
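One possibly cleaner direction, sketched under the assumption that these two functions just need to agree with whatever tensor comes in: `Tensor.to(other)` returns a tensor with the same dtype and device as `other`, so a single call replaces both the `.to(x.device)` in `_normalize_batch` and the `.type(x.type())` casts. For `denormalize`, whose output is only used for display, converting `x` to float32 before moving it to the CPU avoids the missing half-precision `mul` on the CPU seen in the traceback. This is a sketch, not the fix that ended up in fastai; note that it returns float32 in both branches.

```python
import torch
from torch import Tensor


def normalize(x: Tensor, mean: Tensor, std: Tensor) -> Tensor:
    "Normalize `x` (shape [..., C, H, W]) with per-channel `mean` and `std`."
    # Tensor.to(other) matches both the dtype and the device of x (e.g. cuda + float16)
    mean, std = mean.to(x), std.to(x)
    return (x - mean[..., None, None]) / std[..., None, None]


def denormalize(x: Tensor, mean: Tensor, std: Tensor, do_x: bool = True) -> Tensor:
    "Undo `normalize` for display; always returns a float32 tensor on the CPU."
    # Convert to float32 before leaving the GPU, so no fp16 math runs on the CPU
    x = x.float().cpu()
    if not do_x:
        return x
    mean, std = mean.to(x), std.to(x)
    return x * std[..., None, None] + mean[..., None, None]
```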

I found a way to speed up our test suite using parallel testing:

```
pip install pytest-xdist
```

```
$ time pytest
real 0m51.069s

$ time pytest -n 6
real 0m26.940s
```

Half the time, not bad!

We just need to fix temp file creation to use a unique string (the pid?); otherwise some tests occasionally collide in a race condition over the same temp file path.

And if you have a powerful machine, you might be able to crank it up even more. I run out of my 8 GB of CUDA memory when using more than 8 workers.
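For the temp-file collisions, one minimal sketch is to let the standard library pick the directory name: `tempfile.mkdtemp()` atomically creates a new, uniquely named directory, so concurrent pytest-xdist workers cannot race over it, while the pid in the prefix only helps trace which worker created it. `unique_tmp_path` is a hypothetical helper; inside pytest itself, the built-in `tmp_path` fixture already gives every test its own directory. The sketch does not clean up after itself.

```python
import os
import tempfile
from pathlib import Path


def unique_tmp_path(name: str) -> Path:
    """Return a path for `name` inside a freshly created, uniquely named directory.

    mkdtemp() creates the directory atomically with a random suffix, so two
    workers calling this at the same time never get the same directory.
    """
    tmp_dir = tempfile.mkdtemp(prefix=f"fastai-test-{os.getpid()}-")
    return Path(tmp_dir) / name
```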

For those of you who wanted to understand the Pillow-SIMD and libjpeg-turbo story with all its complexities, head to https://docs.fast.ai/performance.html#faster-image-processing, where it’s unraveled for you, including installation details and their nuances.

There is now an automated script that does the performance analysis for you.

It’s discussed in this new thread:

Also note a new dependency: `nvidia-ml-py3`, so make sure you either install it (pip/conda) or run `pip install -e .[dev]` after `git pull`.

With `nvidia-ml-py3` you can query the NVIDIA GPU status directly from fastai code, without awkwardly parsing the output of `nvidia-smi`.

`nvidia-ml-py3` was already on PyPI, and we added a conda package to the fastai channel.

And it’s super fast!
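A minimal sketch of reading GPU memory through the `pynvml` module that the nvidia-ml-py3 package provides (`nvmlInit`, `nvmlDeviceGetHandleByIndex`, `nvmlDeviceGetMemoryInfo`, `nvmlShutdown`). The helper name and its fallback to `None` when the package or an NVIDIA driver is missing are assumptions for illustration, not fastai code.

```python
from typing import Optional, Tuple


def gpu_memory_mb(device_index: int = 0) -> Optional[Tuple[float, float]]:
    """Return (used_mb, total_mb) for one GPU, or None if NVML isn't usable here."""
    try:
        import pynvml  # installed by the nvidia-ml-py3 package
    except ImportError:
        return None  # package not installed
    try:
        pynvml.nvmlInit()
    except pynvml.NVMLError:
        return None  # no NVIDIA driver/GPU available
    try:
        handle = pynvml.nvmlDeviceGetHandleByIndex(device_index)
        info = pynvml.nvmlDeviceGetMemoryInfo(handle)  # total/free/used, in bytes
        return info.used / 2**20, info.total / 2**20
    finally:
        pynvml.nvmlShutdown()
```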

Hi guys,

I am new to the course and am having issues with GPU management or capacity (I don’t know which).

I outlined the issue here: https://github.com/fastai/fastai/issues/1330

Is it a management problem? I sure hope so.

I have been working on a simple language model generator in fast.ai v1.

Everything works quite smoothly until I call `learner.predict()`, which generates different text each time I call it. Digging into the code, I see that `torch.multinomial()` is called to sample from the output probabilities. I also (think I) understand the `temperature` and `min_p` parameters, which let you control how much variation you want in the selection (for example, a very high temperature flattens the distribution toward a uniform, random selection of words, while a temperature close to 0 concentrates it on the most likely words).

What I don’t see is a configuration that takes the single best word on each pass through the loop. This could lead to “loops” where predictions flip-flop, but I think that would be good to know about your network. It would also generate the same words in the same order given the same state.

Am I missing something important here about how `text.learner.predict()` works? Or is there a set of parameters that meets these criteria?
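To make the difference between sampling and the deterministic “single best word” behaviour concrete, here is a small pure-Python sketch over one step’s output probabilities. Both helpers are hypothetical and do not mirror fastai’s `predict` signature. The temperature rule raises each probability to the power 1/T and renormalizes, which is equivalent to dividing the logits by T before the softmax.

```python
import random
from typing import List, Optional


def apply_temperature(probs: List[float], temperature: float) -> List[float]:
    "Sharpen (T < 1) or flatten (T > 1) a probability vector via p ** (1/T), renormalized."
    if temperature <= 0:
        raise ValueError("temperature must be > 0; use greedy decoding for the T -> 0 limit")
    m = max(probs)
    # Dividing by the max first keeps the largest term at 1, avoiding underflow for small T
    scaled = [(p / m) ** (1.0 / temperature) for p in probs]
    total = sum(scaled)
    return [s / total for s in scaled]


def next_word_index(probs: List[float], temperature: float = 1.0,
                    greedy: bool = False, rng: Optional[random.Random] = None) -> int:
    "Greedy: always the most likely index. Otherwise: one weighted random draw, like torch.multinomial."
    if greedy:
        return max(range(len(probs)), key=probs.__getitem__)
    rng = rng or random.Random()
    weights = apply_temperature(probs, temperature)
    return rng.choices(range(len(probs)), weights=weights, k=1)[0]
```

Using `next_word_index(probs, greedy=True)` in the generation loop instead of the multinomial draw gives the same words in the same order for the same state; a temperature of exactly 0 is rejected rather than divided by.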

Where would be a good place to discuss test coverage, e.g. as a subtopic in Dev Projects Index?

I made a simple test covering `basic_data.show_batch` in `test_text_data.py`. My initial motivation was to try to reproduce this bug: https://forums.fast.ai/t/getting-cuda-oom-on-lesson3-imdb-notebook-with-a-bs-8/30080/14. But I could already make a PR with the test scripts, even though there are no asserts: it’s simply coverage for this method, as a regression test that it doesn’t throw exceptions.

After running `make coverage`, I can see other areas where test scripts could be useful. I am aware of the general guidelines (https://docs.fast.ai/dev/test.html), but maybe it would be good to have one place to coordinate, set priorities, and have guidelines on when asserts matter (or not)? It might help prevent rejected PRs.

Coverage for the sake of coverage is mostly meaningless unless it verifies the workings, but tests for functionality that hasn’t been tested yet are very welcome.

In general you can just submit such test contributions directly via GitHub, but if you’d like to make a study and perhaps compile a list of functionality that’s still untested, then yes, creating a thread dedicated to testing under https://forums.fast.ai/c/fastai-users/dev-projects and linking to it from Dev Projects would be a very useful contribution, @Benudek. There is one for Documentation Improvements, so this would be one for Testing Improvements or something like that.
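To illustrate the difference between coverage-only and behaviour-verifying tests, here is a hypothetical helper with two pytest-style tests (plain functions, so they also run without pytest). The smoke test only proves that no exception is raised; the second one would actually catch a regression in the output.

```python
from typing import List, Sequence


def split_into_chunks(items: Sequence, n: int) -> List[list]:
    "Hypothetical helper: split `items` into at most `n` contiguous chunks of ceil(len/n) items."
    size = -(-len(items) // n) or 1  # ceiling division; at least 1 to avoid a zero step
    return [list(items[i:i + size]) for i in range(0, len(items), size)]


def test_split_into_chunks_smoke():
    # Coverage only: passes as long as no exception is raised
    split_into_chunks(list(range(10)), 3)


def test_split_into_chunks_behaviour():
    # Verifies the workings: nothing lost or reordered, and the chunk limit is respected
    items = list(range(10))
    chunks = split_into_chunks(items, 3)
    assert [x for c in chunks for x in c] == items
    assert len(chunks) <= 3
    assert split_into_chunks([], 3) == []
```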

I drafted a testing task here: Test Asserts & Coverage, and linked it from Dev Projects Index.

Feel free to adjust the task and process.


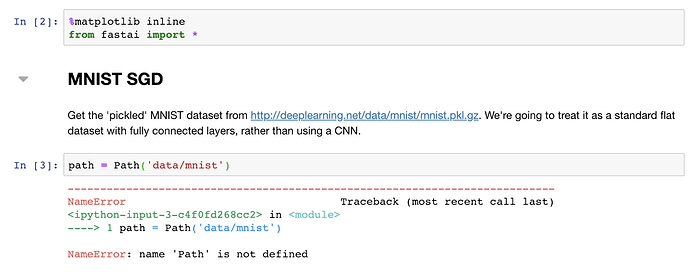

I was going through lesson5-sgd-mnist.ipynb and noticed that pathlib’s Path does not get imported:

I did not think much of it and ran:

```python
from fastai.vision import *
```

That got me past the error. However, a little later in the same notebook, I get this error:

```python
data = DataBunch.create(train_ds, valid_ds, bs=bs)
```

```
---------------------------------------------------------------------------
AttributeError Traceback (most recent call last)
<ipython-input-12-16801c71829f> in <module>
      1
----> 2 data = DataBunch.create(train_ds, valid_ds, bs=bs)

~/git/fastai/fastai/basic_data.py in create(cls, train_ds, valid_ds, test_ds, path, bs, num_workers, tfms, device, collate_fn, no_check)
    112 collate_fn:Callable=data_collate, no_check:bool=False)->'DataBunch':
    113 "Create a `DataBunch` from `train_ds`, `valid_ds` and maybe `test_ds` with a batch size of `bs`."
--> 114 datasets = cls._init_ds(train_ds, valid_ds, test_ds)
    115 val_bs = bs
    116 dls = [DataLoader(d, b, shuffle=s, drop_last=(s and b>1), num_workers=num_workers) for d,b,s in

~/git/fastai/fastai/basic_data.py in _init_ds(train_ds, valid_ds, test_ds)
    102 @staticmethod
    103 def _init_ds(train_ds:Dataset, valid_ds:Dataset, test_ds:Optional[Dataset]=None):
--> 104 fix_ds = valid_ds.new(train_ds.x, train_ds.y) # train_ds, but without training tfms
    105 datasets = [train_ds,valid_ds,fix_ds]
    106 if test_ds is not None: datasets.append(test_ds)

AttributeError: 'TensorDataset' object has no attribute 'new'
```

I am on commit ce85b4718865f25d0243042c0e4d051929ca3b52 and not seeing anything obvious.

Does this ring a bell to anybody?

We now have a Pillow conda package built against a custom build of libjpeg-turbo (and libtiff with libjpeg-turbo) in the fastai test channel.

I made Python 3.6 and 3.7 Linux builds.

To install:

```
conda uninstall -y pillow libjpeg-turbo
conda install -c fastai/label/test pillow
```

This will make your JPEG decompression much faster (see Performance Improvement Through Faster Software Components).

Note that this is a pillow-5.4.0.dev0 build, i.e. don’t use it in production without testing.

I also uploaded pillow-simd-5.3.0.post0, built with libjpeg-turbo and AVX2 (py36 only):

```
conda uninstall -y pillow libjpeg-turbo
conda install -c fastai/label/test pillow-simd
```

Note that it will get overwritten by pillow whenever you update or install any other package that depends on pillow.

Many apologies, @hiromi: I recently made a change in master that removes the need for `from fastai import *` any time you use an application (e.g. `from fastai.vision import *`). However, if you aren’t using an application, you need `from fastai.basics import *`. I’ve fixed all the notebooks now (I hope!).

The second bug you came across is because I added a new `fix_dl` attribute that provides the training dataset without shuffling or augmentation. However, it only works with fastai `ItemList`s, not with generic `Dataset`s, so I’ve now fixed master to skip creating it for generic datasets.
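A minimal sketch of the “skip it for generic datasets” idea, using duck typing: only build the fixed training set when the validation dataset has a `.new(x, y)` method, as fastai ItemLists do and `TensorDataset` does not. `init_datasets` is a hypothetical name and this is not the actual commit in master; returning `None` for missing slots keeps the positions of the other datasets stable.

```python
from typing import Any, Optional, Tuple


def init_datasets(train_ds: Any, valid_ds: Any,
                  test_ds: Optional[Any] = None) -> Tuple[Any, Any, Optional[Any], Optional[Any]]:
    """Return (train, valid, fix, test); `fix` is None for datasets without `.new`.

    `fix` is the training data rebuilt through the validation dataset, i.e. without
    training transforms. It requires `valid_ds.new(x, y)` and `train_ds.x`/`.y`.
    """
    fix_ds = valid_ds.new(train_ds.x, train_ds.y) if hasattr(valid_ds, "new") else None
    return train_ds, valid_ds, fix_ds, test_ds
```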

When I run `git pull`, it tries to switch to “https://github.com/fastai/fastai/tree/devforfu-fastai-make-pr-branch-to-python”. Is this the new dev branch?

Thank you so much for the detailed explanation!

I was hoping I could spot what had changed and fix it somehow, but I’m still a bit slow to get my bearings. Maybe next time!

Breaking change: in the whole text application, the batch is now the first dimension (and sequence length the second). It won’t affect you if you’re using the fastai models/DataBunch, but if you were customizing things, they may need to be tweaked.
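If custom code still produces sequence-first tensors of shape (seq_len, bs), a minimal sketch of adapting it is a transpose of the first two dimensions. `to_batch_first` is a hypothetical helper. If the customization is a recurrent layer, PyTorch’s `nn.LSTM` and `nn.GRU` also accept `batch_first=True`, which makes them expect input of shape (bs, seq_len, features).

```python
import torch


def to_batch_first(x: torch.Tensor) -> torch.Tensor:
    """Convert a sequence-first batch (seq_len, bs, ...) to batch-first (bs, seq_len, ...).

    transpose() only returns a view; .contiguous() copies it into a fresh layout,
    which later .view() calls on the result require.
    """
    return x.transpose(0, 1).contiguous()
```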

@piotr.czapla Tagging you as a warning, even though I know you’re not using master.

Thanks for the heads up, @Kaspar. I may have done something wrong while trying to add a change to a PR; I’ll be extra careful next time. I deleted that branch since it has been merged, so you probably won’t have a problem now.