Thanks, will do that.

And yes, I have the stack trace, because I saved it to look into later, but you already gave me some hints just from the error.

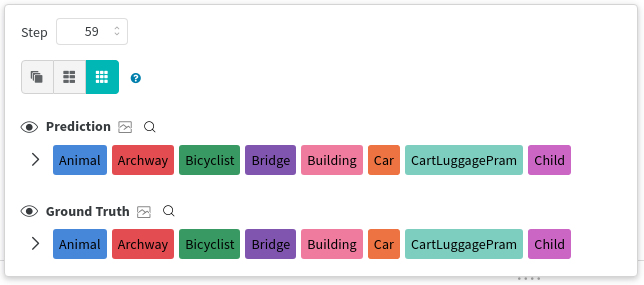

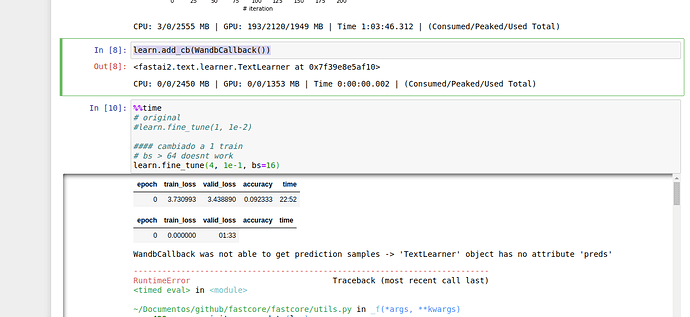

| epoch | train_loss | valid_loss | accuracy | time |
|---|---|---|---|---|
| 0 | 3.589976 | 3.483551 | 0.098774 | 18:48 |

epoch train_loss valid_loss accuracy time

0 0.000000 01:37

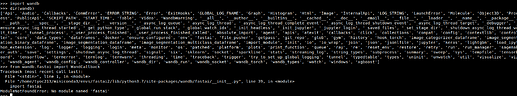

WARNING:wandb.util:requests_with_retry encountered retryable exception: ('Connection aborted.', OSError("(104, 'ECONNRESET')")). args: ('https://api.wandb.ai/files/tyoc213/trec-covid/t3htw2er/file_stream',), kwargs: {'json': {'complete': False, 'failed': False}}

WandbCallback was not able to get prediction samples -> 'TextLearner' object has no attribute 'preds'

---------------------------------------------------------------------------

RuntimeError Traceback (most recent call last)

<timed eval> in <module>

~/Documentos/github/fastcore/fastcore/utils.py in _f(*args, **kwargs)

429 init_args.update(log)

430 setattr(inst, 'init_args', init_args)

--> 431 return inst if to_return else f(*args, **kwargs)

432 return _f

433

~/Documentos/github/fastai2/fastai2/callback/schedule.py in fine_tune(self, epochs, base_lr, freeze_epochs, lr_mult, pct_start, div, **kwargs)

163 base_lr /= 2

164 self.unfreeze()

--> 165 self.fit_one_cycle(epochs, slice(base_lr/lr_mult, base_lr), pct_start=pct_start, div=div, **kwargs)

166

167 # Cell

~/Documentos/github/fastcore/fastcore/utils.py in _f(*args, **kwargs)

429 init_args.update(log)

430 setattr(inst, 'init_args', init_args)

--> 431 return inst if to_return else f(*args, **kwargs)

432 return _f

433

~/Documentos/github/fastai2/fastai2/callback/schedule.py in fit_one_cycle(self, n_epoch, lr_max, div, div_final, pct_start, wd, moms, cbs, reset_opt)

112 scheds = {'lr': combined_cos(pct_start, lr_max/div, lr_max, lr_max/div_final),

113 'mom': combined_cos(pct_start, *(self.moms if moms is None else moms))}

--> 114 self.fit(n_epoch, cbs=ParamScheduler(scheds)+L(cbs), reset_opt=reset_opt, wd=wd)

115

116 # Cell

~/Documentos/github/fastcore/fastcore/utils.py in _f(*args, **kwargs)

429 init_args.update(log)

430 setattr(inst, 'init_args', init_args)

--> 431 return inst if to_return else f(*args, **kwargs)

432 return _f

433

~/Documentos/github/fastai2/fastai2/learner.py in fit(self, n_epoch, lr, wd, cbs, reset_opt)

201 try:

202 self.epoch=epoch; self('begin_epoch')

--> 203 self._do_epoch_train()

204 self._do_epoch_validate()

205 except CancelEpochException: self('after_cancel_epoch')

~/Documentos/github/fastai2/fastai2/learner.py in _do_epoch_train(self)

173 try:

174 self.dl = self.dls.train; self('begin_train')

--> 175 self.all_batches()

176 except CancelTrainException: self('after_cancel_train')

177 finally: self('after_train')

~/Documentos/github/fastai2/fastai2/learner.py in all_batches(self)

151 def all_batches(self):

152 self.n_iter = len(self.dl)

--> 153 for o in enumerate(self.dl): self.one_batch(*o)

154

155 def one_batch(self, i, b):

~/Documentos/github/fastai2/fastai2/learner.py in one_batch(self, i, b)

157 try:

158 self._split(b); self('begin_batch')

--> 159 self.pred = self.model(*self.xb); self('after_pred')

160 if len(self.yb) == 0: return

161 self.loss = self.loss_func(self.pred, *self.yb); self('after_loss')

~/miniconda3/envs/fastai2/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

548 result = self._slow_forward(*input, **kwargs)

549 else:

--> 550 result = self.forward(*input, **kwargs)

551 for hook in self._forward_hooks.values():

552 hook_result = hook(self, input, result)

~/miniconda3/envs/fastai2/lib/python3.7/site-packages/torch/nn/modules/container.py in forward(self, input)

98 def forward(self, input):

99 for module in self:

--> 100 input = module(input)

101 return input

102

~/miniconda3/envs/fastai2/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

548 result = self._slow_forward(*input, **kwargs)

549 else:

--> 550 result = self.forward(*input, **kwargs)

551 for hook in self._forward_hooks.values():

552 hook_result = hook(self, input, result)

~/Documentos/github/fastai2/fastai2/text/models/core.py in forward(self, input)

79 #Note: this expects that sequence really begins on a round multiple of bptt

80 real_bs = (input[:,i] != self.pad_idx).long().sum()

---> 81 o = self.module(input[:real_bs,i: min(i+self.bptt, sl)])

82 if self.max_len is None or sl-i <= self.max_len:

83 outs.append(o)

~/miniconda3/envs/fastai2/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

548 result = self._slow_forward(*input, **kwargs)

549 else:

--> 550 result = self.forward(*input, **kwargs)

551 for hook in self._forward_hooks.values():

552 hook_result = hook(self, input, result)

~/Documentos/github/fastai2/fastai2/text/models/awdlstm.py in forward(self, inp, from_embeds)

105 new_hidden = []

106 for l, (rnn,hid_dp) in enumerate(zip(self.rnns, self.hidden_dps)):

--> 107 output, new_h = rnn(output, self.hidden[l])

108 new_hidden.append(new_h)

109 if l != self.n_layers - 1: output = hid_dp(output)

~/miniconda3/envs/fastai2/lib/python3.7/site-packages/torch/nn/modules/module.py in __call__(self, *input, **kwargs)

548 result = self._slow_forward(*input, **kwargs)

549 else:

--> 550 result = self.forward(*input, **kwargs)

551 for hook in self._forward_hooks.values():

552 hook_result = hook(self, input, result)

~/Documentos/github/fastai2/fastai2/text/models/awdlstm.py in forward(self, *args)

52 #To avoid the warning that comes because the weights aren't flattened.

53 warnings.simplefilter("ignore")

---> 54 return self.module.forward(*args)

55

56 def reset(self):

~/miniconda3/envs/fastai2/lib/python3.7/site-packages/torch/nn/modules/rnn.py in forward(self, input, hx)

568 if batch_sizes is None:

569 result = _VF.lstm(input, hx, self._flat_weights, self.bias, self.num_layers,

--> 570 self.dropout, self.training, self.bidirectional, self.batch_first)

571 else:

572 result = _VF.lstm(input, batch_sizes, hx, self._flat_weights, self.bias,

RuntimeError: CUDA out of memory. Tried to allocate 102.00 MiB (GPU 0; 7.79 GiB total capacity; 5.68 GiB already allocated; 105.19 MiB free; 5.82 GiB reserved in total by PyTorch)

CPU: 15/20/2590 MB | GPU: 5895/110/7767 MB | Time 0:20:43.136 | (Consumed/Peaked/Used Total)
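To make the numbers in that error easier to compare, here is a rough sketch that parses the message and converts everything to MiB. `parse_oom` is a hypothetical helper written for this post, not part of PyTorch or fastai, and it assumes the message keeps the exact wording shown above. The difference between "reserved" and "allocated" is memory that PyTorch's caching allocator is holding without it being used by tensors. Note that the reported free memory is slightly larger than the request, so one possible reading (an assumption, not something the trace proves) is that the free memory was not available as a single contiguous block.

```python
import re

# The error text copied verbatim from the traceback above.
OOM_MESSAGE = (
    "CUDA out of memory. Tried to allocate 102.00 MiB (GPU 0; 7.79 GiB total capacity; "
    "5.68 GiB already allocated; 105.19 MiB free; 5.82 GiB reserved in total by PyTorch)"
)

# Conversion factors to MiB for the units that appear in the message.
_UNIT_TO_MIB = {"KiB": 1 / 1024, "MiB": 1.0, "GiB": 1024.0}


def parse_oom(message):
    """Hypothetical helper: return the sizes quoted in the message, in MiB."""
    patterns = {
        "requested": r"Tried to allocate ([\d.]+) (KiB|MiB|GiB)",
        "total": r"([\d.]+) (KiB|MiB|GiB) total capacity",
        "allocated": r"([\d.]+) (KiB|MiB|GiB) already allocated",
        "free": r"([\d.]+) (KiB|MiB|GiB) free",
        "reserved": r"([\d.]+) (KiB|MiB|GiB) reserved",
    }
    sizes = {}
    for name, pattern in patterns.items():
        match = re.search(pattern, message)
        if match is None:
            raise ValueError(f"could not find {name!r} in message")
        value, unit = match.groups()
        sizes[name] = float(value) * _UNIT_TO_MIB[unit]
    return sizes


sizes = parse_oom(OOM_MESSAGE)
# Memory held by the caching allocator but not currently backing any tensor.
cached_unused = sizes["reserved"] - sizes["allocated"]

print({name: round(value, 2) for name, value in sizes.items()})
print("reserved but unallocated (MiB):", round(cached_unused, 2))
```

With almost all of the card already allocated at the point of failure, the usual things to try first are a smaller batch size or a shorter sequence length.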
