I am currently trying to build my own dataset for the same purpose by downloading images from Google, so I would be happy to take your images into account as well.

I find this project interesting because a buddy of mine told me about his cat, which brought in mice, other rodents, bunnies, and once a pheasant, and ate it. On the kitchen table.

So locking out this kitty would be beneficial, and if he turns out to be okay with the cat bringing in whatever it likes, I would at least have built something fun.

@bencoman

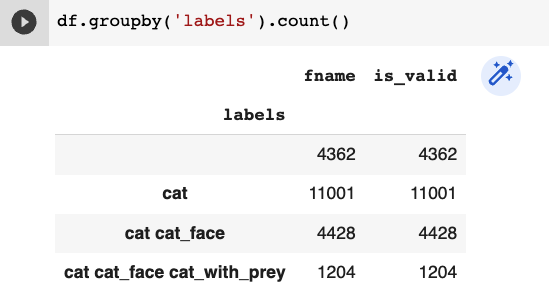

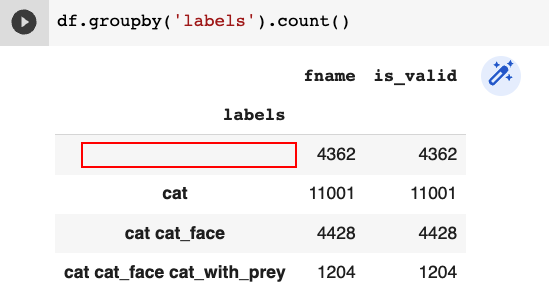

Yes, it does: the category with the empty string will be a fourth category that, in this case, contains images with no cat (or, in general, things other than cats, for example raccoons, dogs, velociraptors, …).
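To make the empty-string category concrete, here is a minimal plain-Python sketch (no fastai) of how a vocab could be collected from space-separated label strings. It assumes the labels are split with `str.split(' ')`, as a `label_delim=' '` style setup would do; the `build_vocab` helper and the example labels are hypothetical. The key detail is that `''.split(' ')` returns `['']`, so the empty label turns into a category of its own, whereas `''.split()` (no argument) would return an empty list.

```python
def build_vocab(label_strings, delim=" "):
    """Collect the sorted set of tags from delimiter-separated label strings."""
    tags = set()
    for label in label_strings:
        # "".split(" ") gives [""], so an empty label adds "" as a tag
        tags.update(label.split(delim))
    return sorted(tags)


labels = ["cat", "cat cat_face", "cat cat_face cat_with_prey", ""]
print(build_vocab(labels))  # ['', 'cat', 'cat_face', 'cat_with_prey']
```

Because the empty string sorts first, it ends up at index 0 of the vocab.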

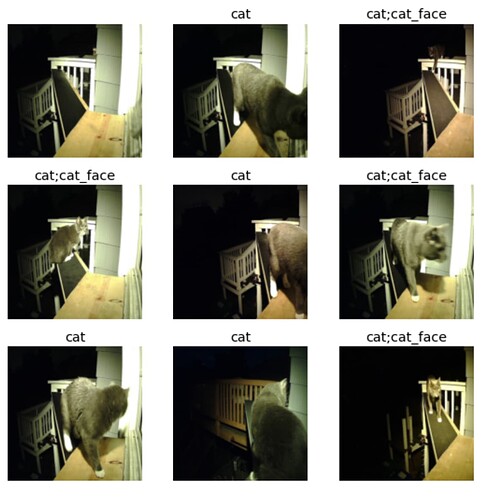

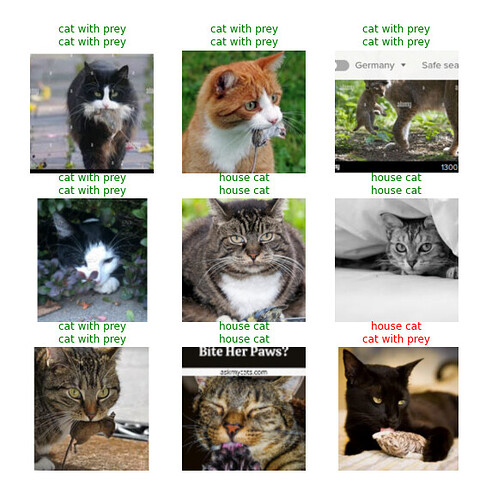

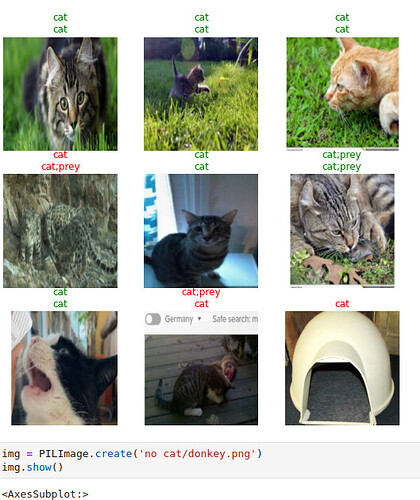

The vocab is just “what can be seen in the image”. To be more explicit, there are different versions of “what can be seen”: there are, for example, label files that contain not only the label of an object but also its “exact” position as a bounding box. With these, an object detection model can be trained to find more than one object in an image. If twelve cats suddenly appeared in one of @BenHamm's images, all looking intently at the camera, the multi-label approach would still register it as “cat cat_face”, whereas the bounding-box approach would (or should) yield 12 bounding boxes labelled “cat cat_face”.
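A small sketch of that difference, assuming a hypothetical detection-style annotation with one bounding box and one label string per cat (the coordinates are made up): collapsing the per-box labels into an image-level set of tags gives the same “cat cat_face” whether there is one cat or twelve, while the detection annotation keeps all twelve boxes.

```python
# Hypothetical detection-style annotation: one (bbox, label) pair per object,
# with bbox = (x_min, y_min, x_max, y_max) in pixels (made-up values).
twelve_cats = [((10 + 40 * i, 20, 45 + 40 * i, 60), "cat cat_face") for i in range(12)]
one_cat = [((100, 50, 220, 200), "cat cat_face")]


def to_multilabel(detections):
    """Collapse per-object labels into one image-level, space-separated tag string."""
    tags = set()
    for _bbox, label in detections:
        tags.update(label.split(" "))
    return " ".join(sorted(tags))


print(len(twelve_cats))  # 12 boxes for detection
print(to_multilabel(twelve_cats), "|", to_multilabel(one_cat))  # cat cat_face | cat cat_face
```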

I have to admit that I am not too well versed in this approach, by the way!

Now, regarding the last few paragraphs:

I am not quite sure I get what you mean, but I’ll try!

As far as I understand, you want to use only three classes, i.e., create a dataloader containing only the labelled images. Then, during training (?), you also want to use the images without cats, i.e. the class without any labels (the ‘other’ or ‘none’ class).
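If I understand the idea correctly, the training targets could look like this: a fixed vocab containing only the three real tags, with the no-cat images encoded as an all-zero vector rather than as a class of their own. This is a plain-Python sketch; `encode` and the tag list are hypothetical, and splitting with `str.split()` (no argument) is what keeps the empty label from turning into a tag.

```python
VOCAB = ["cat", "cat_face", "cat_with_prey"]  # the three real tags, no "" entry


def encode(label, vocab=VOCAB):
    """Turn a space-separated label string into a multi-hot target vector."""
    present = set(label.split())  # "".split() == [], so a no-cat image has no tags
    return [1 if tag in present else 0 for tag in vocab]


for label in ["cat", "cat cat_face", "cat cat_face cat_with_prey", ""]:
    print(repr(label), encode(label))
# 'cat' [1, 0, 0]
# 'cat cat_face' [1, 1, 0]
# 'cat cat_face cat_with_prey' [1, 1, 1]
# '' [0, 0, 0]
```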

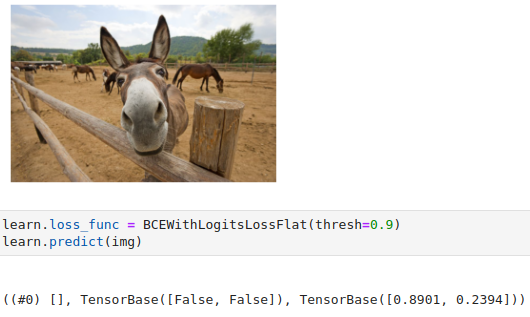

This should yield a model with only three classes, in @BenHamm's case ‘cat’, ‘cat cat_face’, and ‘cat cat_face cat_with_prey’. The “faulty” images would then be categorized as [0, 0, 0], since they should not resemble any of the three classes at all.
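At prediction time, the same idea could be sketched like this: threshold the per-tag probabilities and, if nothing passes, treat the image as ‘none’ (i.e. [0, 0, 0]). The probabilities and the 0.5 threshold below are made-up values for illustration, and `decode` is a hypothetical helper, not a fastai function.

```python
VOCAB = ["cat", "cat_face", "cat_with_prey"]


def decode(probs, vocab=VOCAB, thresh=0.5):
    """Map per-tag probabilities to a 0/1 vector and a readable label."""
    hits = [1 if p > thresh else 0 for p in probs]
    tags = [tag for tag, hit in zip(vocab, hits) if hit]
    label = " ".join(tags) if tags else "none"  # all zeros -> the 'none' case
    return hits, label


print(decode([0.97, 0.91, 0.08]))  # ([1, 1, 0], 'cat cat_face')
print(decode([0.12, 0.05, 0.02]))  # ([0, 0, 0], 'none')
```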

Did I understand you correctly?

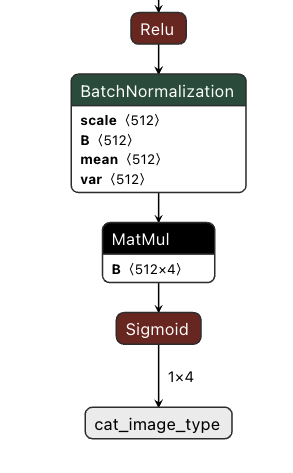

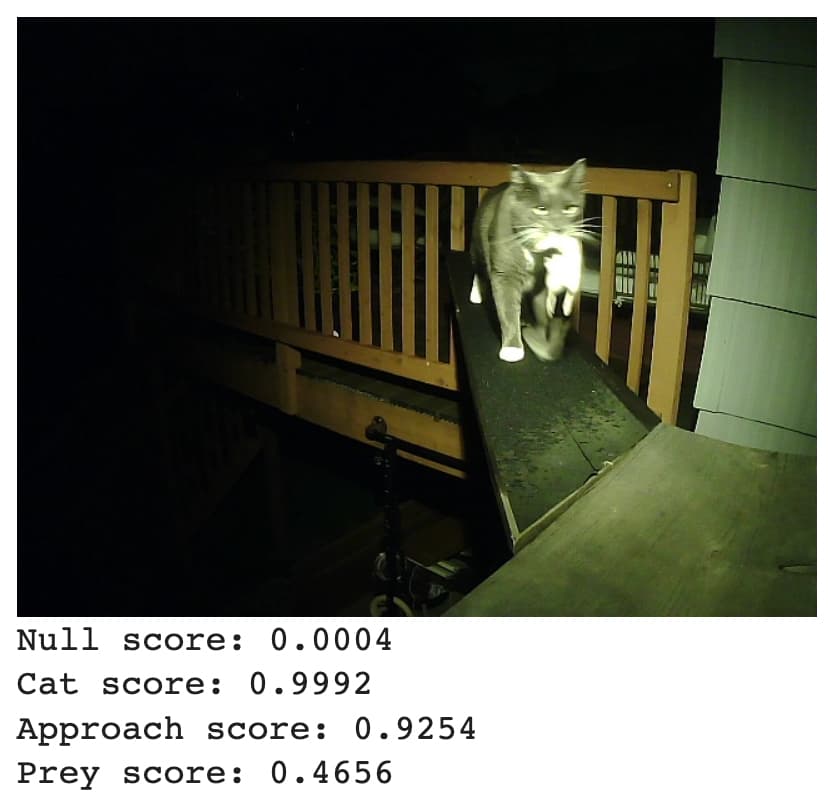

The last part, about MultiCategory, is essentially what @BenHamm already uses, as you can see in his first post. The difference is that @BenHamm removed the output layer and grabs the activations of the layer before it. But if everything works well and he were using the last layer, he should get [0, 1, 1, 0] for ‘cat approaching the cat door but having nothing in its fangs’, i.e. ‘’=0, ‘cat’=1, ‘cat_face’=1, ‘cat_with_prey’=0.

The MultiCategoryBlock should allow you to categorize “multiple objects” in an image, e.g. a cat and maybe a dog. So if you have, for example, the categories cat, dog, giraffe, whale, and rhino, and the layer before the result layer outputs [0, 1.34, 0.53, 0.1, 0.55], this might result in [0, 1, 0, 0, 1], which would indicate no cat, (at least one) dog, no giraffe, no whale, and (at least one) rhino!
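Here is a sketch of that last step, assuming the listed numbers are raw scores (logits) that go through a sigmoid and a per-category threshold, which is how multi-label outputs are usually decoded. Unlike a softmax, the sigmoid treats each category independently, which is what allows several categories (or none) to be present at once. As far as I know, fastai's `BCEWithLogitsLossFlat` uses `thresh=0.5` by default; with that threshold every positive score here counts as present, so the exact 0/1 pattern depends on the threshold. The 0.63 value is just one that reproduces [0, 1, 0, 0, 1] for these particular numbers.

```python
import math

CATEGORIES = ["cat", "dog", "giraffe", "whale", "rhino"]


def sigmoid(x):
    return 1 / (1 + math.exp(-x))


def multi_hot(scores, thresh):
    """Sigmoid each raw score independently and keep the ones above `thresh`."""
    return [1 if sigmoid(s) > thresh else 0 for s in scores]


scores = [0, 1.34, 0.53, 0.1, 0.55]
print(multi_hot(scores, thresh=0.5))   # [0, 1, 1, 1, 1]
print(multi_hot(scores, thresh=0.63))  # [0, 1, 0, 0, 1]

hits = multi_hot(scores, thresh=0.63)
print([c for c, h in zip(CATEGORIES, hits) if h])  # ['dog', 'rhino']
```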