I do not know if the reasoning below is correct - I am not trying to answer my own question. Would really love if someone could shed some additional light on this and shoot holes into the parts that are not right or provide me with confirmation. I initially set out to write this having only the question but as I started to type and think on this I came up with this reasoning that gives me comfort I somewhat understand what is going on though at the same time I fully expect I could be completely wrong…

In the notebook, it reads:

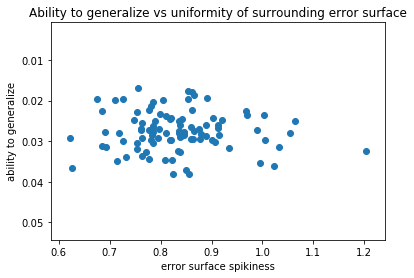

However, we may find ourselves in a part of the weight space that isn’t very resilient - that is, small changes to the weights may result in big changes to the loss. We want to encourage our model to find parts of the weight space that are both accurate and stable.

Why would we care about the resilency of where we are in the weight space - isn’t low loss the only thing that we care about? Low loss == being close to global minimum.

But in very high dimensional space, local minima are not a concern - any good point with low loss is of comparable quality to global minimum and also it is very unlikely to encounter a local minimum as there (nearly) always exists a way out. Though getting out can be slow (the whole sloshing around the bottom of a ravine idea instead of moving downhill).

Thus I make the assumption that cyclical learning rates are not about getting us out of areas with low loss even if they are not a global minimum (we are not searching for it - we will be happy with any good minimum we find), but for getting us out of areas that would be otherwise hard to navigate? We hope our increased learning rate will get us out of that area (multidimensional saddle point its called?) so that we can try a new area sooner thus shortening the training?

The presuposition is that ‘spiky area’, area where small steps in either direction can cause big changes to the loss in either direction, are not very good. A part of the weight space where loss is as low as we can reasonably expect (even though it doesn’t have to be a global minimum) will not be like a well, it will be more like a shallow, flat volcano crater?

With adaptive learning rates we were learning how to navigate the ravines better, but with annealing we just say - let me jump around, if this area is good jumping will not hurt too badly, but if it is bad, by increasing the temperature (or in this case just increasing step size) I can hopefully more quickly get to exploring other areas and via getting out of this not so great area quicker I speed up the training?

Thank you very much!!!

Thank you very much!!! )

)

- of batch normalization and adaptive learning rates (both will be covered very soon in this course I think) continues to amaze me.

- of batch normalization and adaptive learning rates (both will be covered very soon in this course I think) continues to amaze me.