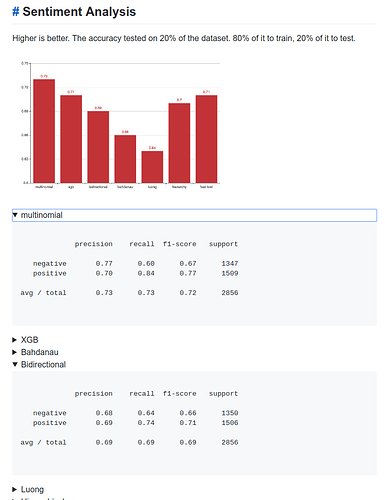

Results:

Language models:

Data set & benchmarks:

I’ve updated the first post of the Language Model Zoo to link to this website. You may want to include the information about Malay that you had there in the first post of this thread.

These are the links posted by @cedric.

@piotr.czapla Thanks for keeping things organized.

@hafidz I’ve been super busy these days and only got a chance to reply to you today. So, what has changed since the first release? Earlier this week, I updated my GitHub repo for this project:

I added some updates under the "Text Classification" section in the README. Basically, for the downstream task (sentiment analysis), I managed to find a curated Malay corpus from a few academic papers published by local Malaysian universities. Please take a look. I need help with this work.

@cedric awesome repo!

Have you managed to push it a bit further? We managed to beat SOTA on Thai, Polish, German, Indonesian (published yesterday), and possibly Japanese. Malay would fit perfectly!

It would also be interesting to see the accuracy if we do Indonesian text classification using a Malay language model, or vice versa, since Indonesian and Malay share the same root and many words are the same.

Hi Piotr,

Thanks! I hope you don’t mind my belated response.

No. My hands are tied. Regardless, I plan to finish what I started. My plan is to set aside an hour a day to work on this project over the next week and post my updates here.

Grats! Nice work.

I hope so.

Thanks.

It seems there isn’t much standardization here in terms of a fixed corpus (like the IMDB dataset) that local researchers evaluate against.

In such cases, how does one normally benchmark the “state of the art”? @piotr.czapla @cedric

I found the Malay Opinion Corpus (MOC) / Malay Reviews Corpus (MRC) from several academic papers and here’s what they have to say:

The corpus contains 2000 movie reviews collected from different web pages and blogs in Malay; 1000 of them are considered positive reviews, and the other 1000 are considered negative.

Some samples from this dataset:

Sentiment: positive

okla citer ni dua bintang daripada lima bintang,yusry memang improve..but then citer mcm drama sgtla... anyway suka ngan ending dia...lawak! :lol::lol::lol::lol:

crite nie ke, The Amityville Horrormemang beh crite ning... wase nok gi tengok pulok skali gi sound effect dia baguh, jalan crite baguh 9/10 :thmbup: :star: :star: :star: :star: :star:

Manual (by-hand) English translation:

The story is OK, 2 out of 5 stars. Yusry has really improved... but then the story is too much like a drama... anyway, I like the ending and his humour :lol::lol::lol::lol:

This movie, The Amityville Horror, the story is really the best... Let's go watch this again. The sound effects are good, the storyline is good 9/10 :thmbup: :star: :star: :star: :star: :star:

Upon taking a closer look at this dataset, here are my findings:

- Malay and English mixed within a sentence
- Use of short forms (e.g. mcm → macam, citer → cerita, gi → lagi)
- Colloquial/slang language
- Inconsistent spelling (the data is noisy)
- …and many more

I don’t know how the local researchers there manage to work with this dataset.

I don’t think this dataset is suitable for the task. It turns out my earlier assumptions were wrong. With that, I think your observations are spot on. So now I don’t have a baseline that I can use for the benchmark.


What do you think? How should we handle this challenge? Please help!

I believe there might be a few dictionaries we can use to work around the short-form problem (or we could build our own). We could also try leveraging this repo (https://github.com/DevconX/Malaya) to help with the data cleaning.
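A dictionary lookup like the one suggested above could look roughly like this. It's a minimal sketch in plain Python: `SHORT_FORMS` and `expand_short_forms` are hypothetical names, and the lexicon only holds the three short forms spotted in the MOC/MRC samples; a real one would come from an existing lexicon or one we build ourselves. A context-free lookup can also mis-expand ambiguous short forms: in the second sample, "gi" means "lagi" in "skali gi" but "pergi" (go) in "nok gi tengok".

```python
import re

# Hypothetical short-form lexicon: only the examples found in the samples.
# A real lexicon (existing or self-built) would be much larger.
SHORT_FORMS = {
    "mcm": "macam",
    "citer": "cerita",
    "gi": "lagi",  # ambiguous: can also stand for "pergi"
}

# Match runs of word characters; everything else (spaces, punctuation,
# emoticons) is left untouched by re.sub.
_WORD_RE = re.compile(r"(\w+)")


def expand_short_forms(text: str, lexicon: dict[str, str] = SHORT_FORMS) -> str:
    """Replace whole-word short forms with their full Malay spelling.

    Lookup is case-insensitive; expanded words come out in lower case,
    words not in the lexicon are returned unchanged.
    """
    def _sub(match: re.Match) -> str:
        word = match.group(1)
        return lexicon.get(word.lower(), word)

    return _WORD_RE.sub(_sub, text)


if __name__ == "__main__":
    print(expand_short_forms("citer mcm drama sgtla"))  # prints: cerita macam drama sgtla
```

Slang that isn't in the lexicon (like "sgtla" above) passes through untouched, so coverage depends entirely on how complete the dictionary is.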

I’m currently still going through some of the local papers in search of a good benchmark for us to use.

In terms of building a language model, Dewan Bahasa & Pustaka (Malaysia’s government body that coordinates the use of the Malay language and literature) also shares a good range of corpora via its corpus platform (http://lamanweb.dbp.gov.my/index.php/pages/view/88?mid=63). However, they’re not currently downloadable. I’m still contemplating whether I should scrape it. Alternatively, we could try scraping Malaysian news portals.

Yeah, those researchers used a few lexicons.

This looks useful.

OK. Hope to hear some good news. In the back of my mind, if we still can’t find a good benchmark in the local papers, then it’s up to us to set the baseline. I’m not so sure that’s a good idea.
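If it does come to setting the baseline ourselves, one hypothetical option is a simple bag-of-words classifier that any later model (ULMFiT included) has to beat. Below is a minimal sketch of multinomial Naive Bayes with add-one smoothing in plain Python, assuming labelled reviews are already loaded as parallel lists of texts and labels. `NaiveBayesBaseline`, `tokenize` and `accuracy` are illustrative names, not code from any repo mentioned in this thread.

```python
import math
from collections import Counter, defaultdict


def tokenize(text: str) -> list[str]:
    # Naive lower-case whitespace tokenizer (hypothetical); a real baseline
    # should reuse whatever cleaning the ULMFiT pipeline applies.
    return text.lower().split()


class NaiveBayesBaseline:
    """Multinomial Naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts: list[str], labels: list[str]) -> "NaiveBayesBaseline":
        self.class_counts = Counter(labels)          # documents per class
        self.word_counts = defaultdict(Counter)      # word counts per class
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        self.total_words = {c: sum(cnt.values()) for c, cnt in self.word_counts.items()}
        return self

    def predict(self, text: str) -> str:
        n_docs = sum(self.class_counts.values())
        v = len(self.vocab)
        best_label, best_score = None, -math.inf
        for label, n in self.class_counts.items():
            score = math.log(n / n_docs)  # log prior
            for word in tokenize(text):
                if word not in self.vocab:
                    continue  # skip words never seen in training
                count = self.word_counts[label][word]
                score += math.log((count + 1) / (self.total_words[label] + v))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


def accuracy(model: NaiveBayesBaseline, texts: list[str], labels: list[str]) -> float:
    correct = sum(model.predict(t) == y for t, y in zip(texts, labels))
    return correct / len(labels)
```

Reporting its accuracy on a held-out split gives a concrete number to compare the fine-tuned classifier against.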

Yes, I created an account, logged in to the DBP website and noticed that as well.

Scraping is my last option. Time is not on my side.

I found this benchmark in DevconX’s Malaya repo:

Direct link: Home · mesolitica/malaya Wiki · GitHub

I also found the notebook for sentiment analysis using a bidirectional RNN

and the dataset that they’ve used:

https://github.com/DevconX/Malaya/blob/master/dataset/sentiment-data-v2.csv

Can we use this dataset for the ULMFiT text classification task and as a benchmark?

Thanks.

I think it’s fine to set your own baseline if there isn’t enough prior research. What would be important, though, is to publish your dataset afterwards; at least that is my plan for Polish sentiment analysis.
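When publishing a dataset as a benchmark, freezing the train/test split helps others report comparable numbers. A minimal sketch, assuming the data is a list of `(text, label)` pairs; `stratified_split`, the 20% test fraction and the seed are illustrative choices, not anything agreed on in this thread. Splitting per label keeps a balanced corpus like MOC (1000 positive, 1000 negative reviews) balanced in both splits.

```python
import random
from collections import defaultdict


def stratified_split(examples, test_frac=0.2, seed=42):
    """Split (text, label) pairs so each label keeps the same share in train and test.

    A fixed seed means the same split comes out on every run, so it can be
    published alongside the data (hypothetical helper, not from any library).
    """
    by_label = defaultdict(list)
    for text, label in examples:
        by_label[label].append((text, label))

    rng = random.Random(seed)  # local RNG: doesn't touch global random state
    train, test = [], []
    for label in sorted(by_label):  # sorted so iteration order is deterministic
        items = by_label[label][:]
        rng.shuffle(items)
        n_test = round(len(items) * test_frac)
        test.extend(items[:n_test])
        train.extend(items[n_test:])
    return train, test
```

Writing the two resulting lists out as separate files (rather than shipping only the code) removes any ambiguity about which reviews belong to the test set.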

But it seems you have found a pretty good dataset with some baseline too! I can’t wait to see the results!

Hi Piotr,

Thank you for your guidance. LM + text classification all done! Please check out the results.