EDIT: I tried using “Using TextClassificationInterpretation throws a cudnn Runtime error” as the title, and the forum told me “Title seems unclear, is it a complete sentence?” What?

I was quite excited by the new TextClassificationInterpretation, as I have been thinking about something like it for a while, but I cannot get it to work.

I have the following installation on a Paperspace VM:

=== Software ===

python : 3.6.7

fastai : 1.0.46

fastprogress : 0.1.19

torch : 1.0.0

nvidia driver : 410.73

torch cuda : 9.0.176 / is available

torch cudnn : 7401 / is enabled

=== Hardware ===

nvidia gpus : 1

torch devices : 1

- gpu0 : 24449MB | Quadro P6000

=== Environment ===

platform : Linux-4.4.0-128-generic-x86_64-with-debian-stretch-sid

distro : #154-Ubuntu SMP Fri May 25 14:15:18 UTC 2018

conda env : fastai

python : /home/paperspace/anaconda3/envs/fastai/bin/python

sys.path : /home/paperspace/anaconda3/envs/fastai/lib/python36.zip

/home/paperspace/anaconda3/envs/fastai/lib/python3.6

/home/paperspace/anaconda3/envs/fastai/lib/python3.6/lib-dynload

/home/paperspace/anaconda3/envs/fastai/lib/python3.6/site-packages

/home/paperspace/anaconda3/envs/fastai/lib/python3.6/site-packages/IPython/extensions

/home/paperspace/.ipython

I have been working on a text classification project at work, and so far everything has gone quite well, with minor hiccups here and there. I don’t think the details are important, but if I run

bs=256

data_clas = TextClasDataBunch.load(path, 'saved_classifier_data', bs=bs)

learn = text_classifier_learner(data_clas, AWD_LSTM, drop_mult=0.7)

learn.load('my_trained_classifier');

ci = TextClassificationInterpretation.from_learner(learn)

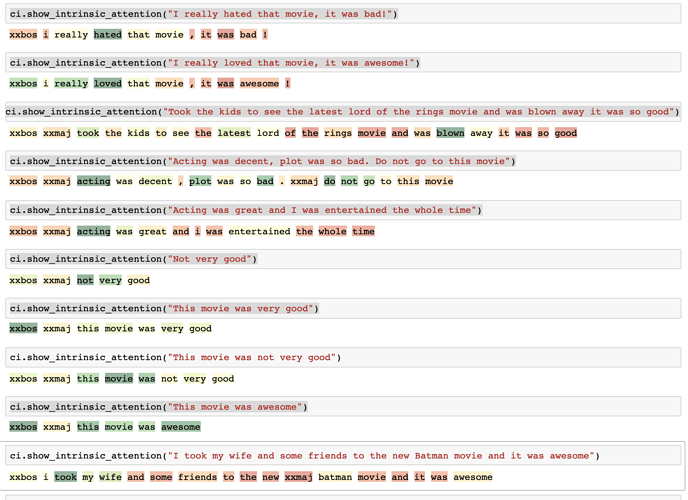

ci.show_intrinsic_attention("Please classify this sentence")

I get the following error:

---------------------------------------------------------------------------

RuntimeError Traceback (most recent call last)

<ipython-input-10-93405a760cc1> in <module>

----> 1 ci.show_intrinsic_attention("I want this to be classified please")

~/anaconda3/envs/fastai/lib/python3.6/site-packages/fastai/text/models/awd_lstm.py in show_intrinsic_attention(self, text, class_id, **kwargs)

256

257 def show_intrinsic_attention(self, text:str, class_id:int=None, **kwargs)->None:

--> 258 text, attn = self.intrinsic_attention(text, class_id)

259 show_piece_attn(text.text.split(), to_np(attn), **kwargs)

~/anaconda3/envs/fastai/lib/python3.6/site-packages/fastai/text/models/awd_lstm.py in intrinsic_attention(self, text, class_id)

245 cl = self.model[1](self.model[0].module(emb, from_embeddings=True))[0].softmax(dim=-1)

246 if class_id is None: class_id = cl.argmax()

--> 247 cl[0][class_id].backward()

248 attn = emb.grad.squeeze().abs().sum(dim=-1)

249 attn /= attn.max()

~/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/tensor.py in backward(self, gradient, retain_graph, create_graph)

100 products. Defaults to ``False``.

101 """

--> 102 torch.autograd.backward(self, gradient, retain_graph, create_graph)

103

104 def register_hook(self, hook):

~/anaconda3/envs/fastai/lib/python3.6/site-packages/torch/autograd/__init__.py in backward(tensors, grad_tensors, retain_graph, create_graph, grad_variables)

88 Variable._execution_engine.run_backward(

89 tensors, grad_tensors, retain_graph, create_graph,

---> 90 allow_unreachable=True) # allow_unreachable flag

91

92

RuntimeError: cudnn RNN backward can only be called in training mode

As far as I can tell, this problem should have gone away with PyTorch 1, but I am at a loss and don’t understand the code well enough to guess what I am doing wrong.
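For anyone trying to reproduce this outside fastai: the message suggests that cuDNN refuses to run the backward pass of an RNN while the model is in eval mode. Below is a minimal sketch of the same kind of gradient computation as in the traceback, on a toy LSTM in plain PyTorch. `ToyClassifier` and `embedding_attention` are hypothetical names made up for illustration, not fastai code, and the workaround shown (turning cuDNN off for the call via `torch.backends.cudnn.flags`) is an assumption about what sidesteps the check, not a confirmed fix for `TextClassificationInterpretation`.

```python
import torch
import torch.nn as nn


class ToyClassifier(nn.Module):
    """Hypothetical stand-in for the real classifier: LSTM encoder + linear head."""

    def __init__(self, emb_dim=8, hidden=16, n_classes=3):
        super().__init__()
        self.rnn = nn.LSTM(emb_dim, hidden, batch_first=True)
        self.head = nn.Linear(hidden, n_classes)

    def forward(self, emb):
        out, _ = self.rnn(emb)        # (batch, seq_len, hidden)
        return self.head(out[:, -1])  # classify from the last time step


def embedding_attention(model, emb, class_id=None):
    """Per-token saliency: gradient of one class probability w.r.t. the embeddings."""
    device = next(model.parameters()).device
    emb = emb.detach().clone().to(device).requires_grad_(True)
    model.eval()  # inference mode, as one would have after loading a trained model
    # On a GPU with cuDNN enabled, backward through an RNN in eval mode raises
    # "cudnn RNN backward can only be called in training mode".
    # Assumption: disabling cuDNN for this call falls back to the native kernels.
    with torch.backends.cudnn.flags(enabled=False):
        probs = model(emb).softmax(dim=-1)
        if class_id is None:
            class_id = probs[0].argmax()
        probs[0][class_id].backward()
    attn = emb.grad.squeeze(0).abs().sum(dim=-1)  # one score per token
    return attn / attn.max()                      # normalise so the top token is 1


torch.manual_seed(0)
device = "cuda" if torch.cuda.is_available() else "cpu"
model = ToyClassifier().to(device)
tokens = torch.randn(1, 5, 8)  # one "sentence" of 5 already-embedded tokens
attn = embedding_attention(model, tokens)
print(attn)
```

On a CPU-only machine the context manager is a no-op and the sketch just computes the saliency scores; the error itself should only show up on a GPU with cuDNN enabled and the `with` line removed.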