Further to @loss4Wang’s good answer, you might want to try…

pred_galaxy,pred_ndx,probs = learn.predict(PILImage.create('galaxy cluster.jpg'))

print(f"This is a: {pred_galaxy}.")

print(f"with a probability of: {probs[pred_ndx]:.4f}")

hi everyone!

I used Animals-10 | Kaggle data set to train a model for class 1 homework.

I created a huggingface space to showcase it here: Animal Classifier - a Hugging Face Space by Ersin

thank you so much to everyone who is contributing to fastai!

Ersin

Thank you

Based off lesson 1 & 2, here’s a sushi type classifier.

huggingface app

kaggle notebook

I just considered 4 types only here: maki, temaki, nigiri, gunkan. Here’s a guide pic:

Finished the first lecture and made a Fighter jet classifier for the Russian Sukhoi Su-35 and the Lockheed Martin F-35. I used these two jets because they look pretty similar to me except for a few details. My mind is absolutely blown by how this model can tell the difference with pretty high accuracy.

Is there any way to know HOW this model is differentiating between these two fighter jets? Can we get a peek into the workings of the model to know which patterns in the image it is observing and using to differentiate between the two? It’s kind of crazy that it can do it so well.

When you get to the lesson that covers Hugging Face, one rough way is to edit the part of the image that is processed.
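One way to read that suggestion is occlusion sensitivity: blank out one patch of the image at a time and see how much the predicted probability drops. Here is a minimal pure-Python sketch of the idea, assuming the image is a 2D list of pixel values. `occlusion_map`, `score_fn` and `toy_score` are hypothetical names, with `score_fn` standing in for something like the probability returned by `learn.predict`; none of them come from this thread.

```python
def occlusion_map(image, score_fn, patch=2, fill=0):
    """Return a grid of score drops, one entry per occluded patch.

    image:    2D list of pixel values (rows of equal length).
    score_fn: hypothetical callable mapping an image to a probability.
    """
    base = score_fn(image)
    height, width = len(image), len(image[0])
    drops = []
    for top in range(0, height, patch):
        row = []
        for left in range(0, width, patch):
            occluded = [r[:] for r in image]  # copy so the original stays intact
            for y in range(top, min(top + patch, height)):
                for x in range(left, min(left + patch, width)):
                    occluded[y][x] = fill
            # A large drop means the model relied on this patch.
            row.append(base - score_fn(occluded))
        drops.append(row)
    return drops


# Toy stand-in "model": it only looks at the top-left 2x2 corner.
def toy_score(img):
    return sum(img[y][x] for y in range(2) for x in range(2)) / 4.0


toy_image = [[1, 1, 1, 1] for _ in range(4)]
heatmap = occlusion_map(toy_image, toy_score, patch=2)
print(heatmap)
```

With a real classifier, the patches with the largest drops are the regions the model leans on most, for example the tail or the air intakes of a jet.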

Check out this Space I built with diffusers and a pretrained latent diffusion model: https://huggingface.co/spaces/mipra/chitrakar

It uses the Hindi language to generate images.

Hi professor and everyone! I would like to share my work on classifying a specific kind of mushroom, the Lactarius. It is my first attempt as a fastai practitioner and I would love some feedback in order to improve. The link is Mushrooms - a Hugging Face Space by Sotiris

Hello, in this post I will explain my learning process and my experience of training my own model for the first time. The intention was to create a model that can tell two electric guitars apart: a Fender Telecaster and a Gibson Les Paul.

The first thing I did was prepare the ground. I installed the fastai library and the duckduckgo_search package, which is used to find images on DuckDuckGo.

!pip install -Uqq fastai duckduckgo_search

Next I defined the function that will let me find up to 30 images for each of the two search terms:

from duckduckgo_search import ddg_images

from fastcore.all import *

def search_images(term, max_images=30):
    # Return a list of image URLs for the search term.
    print(f"Searching for '{term}'")
    return L(ddg_images(term, max_results=max_images)).itemgot('image')

Let’s search for a picture of an electric guitar and see what kind of result we get. We’ll start by getting the URLs from a search:

#NB: `search_images` depends on duckduckgo.com, which doesn't always return correct responses.

#If you get a JSON error, just try running it again (it may take a couple of tries).

urls = search_images('fender-telecaster', max_images=1)

urls[0]

Once downloaded, let’s take a look at it:

from fastdownload import download_url

dest = 'fender-tele.jpg'

download_url(urls[0], dest, show_progress=False)

from fastai.vision.all import *

im = Image.open(dest)

im.to_thumb(256,256)

Awesome… It’s a nice Fender Telecaster Relic!!!

It seems the desired object has been downloaded successfully: a Fender Telecaster Relic guitar (Relic refers to an aged-wood finish). Next we will do the same with the second brand of guitar.

download_url(search_images('gibson-les paul', max_images=1)[0], 'gibson-l.jpg', show_progress=False)

Image.open('gibson-l.jpg').to_thumb(256,256)

out: Searching for 'gibson-les paul'

Our searches have come up with the right results. So let’s take a few examples of each of the “Fender” and “Gibson” photos, and save each group of photos in a different folder (I’m also trying to take a range of lighting conditions here).

In this case I got two incorrect pictures, which the download code automatically unlinks (deletes).

To train a model, we will need DataLoaders, an object containing a training set (the images used to create a model) and a validation set (the images used to check the accuracy of a model, not used during training). In fastai we can easily create that using a DataBlock, and see sample images from it:

dls.show_batch()

Now we are ready to train our model. The fastest widely used computer vision model is resnet18. It can be trained in a few minutes, even on a CPU. (On a GPU, it usually takes less than 10 seconds…) fastai comes with a useful fine_tune() method that automatically uses best practices for fine-tuning a pre-trained model, so we will use it.

Train Results:

The difference from the training in the first lesson (Is it a bird?) is clear: my model performs worse. My model's error rate ends at 0.060606, starting from an initial 0.545455, whereas the bird model reaches 0.000000. The same could be said for the other metrics. My guess is that the pre-trained model did not transfer as well to my data.
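As a rough sanity check on those numbers: an error rate is the number of misclassified validation images divided by the size of the validation set, so the reported decimals can be turned back into fractions. The sketch below uses only the two values quoted in the post. The cap of 100 on the denominator is an assumption, on the grounds that a validation set built from a few dozen downloads per class is small.

```python
from fractions import Fraction

# Error rates quoted in the post (first and last epoch of the guitar model).
reported = {"initial": 0.545455, "final": 0.060606}

recovered = {}
for name, rate in reported.items():
    # Closest fraction with a small denominator (assumed cap: 100).
    frac = Fraction(rate).limit_denominator(100)
    recovered[name] = frac
    print(f"{name}: {rate} is approximately {frac.numerator}/{frac.denominator}")
```

Both results are consistent with a validation set of 33 images (6/11 = 18/33), that is 18 errors before fine-tuning and 2 after, though any multiple of 33 would fit as well. With so few validation images, each mistake moves the error rate by about three percentage points, so the gap to the bird model's 0.000000 may amount to only a couple of images.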

Let’s see what our model thinks about the guitars we downloaded at the beginning. We start with the Telecaster:

is_tele,_,probs = learn.predict(PILImage.create('fender-tele.jpg'))

print(f"This is a: {is_tele}.")

print(f"Probability it's a tele: {probs[0]:.4f}")

out: This is a: fender-telecaster. Probability it's a tele: 0.9805

The probability is very close to 1, so the model is almost certain, and correct, that this is a Fender Telecaster.

Let’s see what happens with a photo of a Gibson guitar.

is_tele,_,probs = learn.predict(PILImage.create('gibson-l.jpg'))

print(f"This is a: {is_tele}.")

print(f"Probability it's a tele: {probs[0]:.4f}")

out: This is a: fender-telecaster. Probability it's a tele: 0.7737

It returns a Telecaster probability of about 77%. Obviously the model is not working very well here: since the guitar is not a Fender Telecaster, the probability should be close to 0%.
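One thing worth ruling out when reading these outputs is the hard-coded `probs[0]`: it is the probability of whichever class comes first in the learner's vocabulary, which is the Telecaster only if that class sorts first. Here is a minimal sketch of labelling each probability by class name, using plain Python lists as stand-ins. In fastai the class list would come from `learn.dls.vocab`; the class order below is assumed, and the second probability is an assumption derived as 1 - 0.7737 for a two-class model.

```python
# Stand-ins for learn.dls.vocab and the probs returned by learn.predict.
vocab = ["fender-telecaster", "gibson-les paul"]  # assumed class order
probs = [0.7737, 0.2263]                          # 0.2263 = 1 - 0.7737 (assumed)

# Pair every class name with its probability instead of indexing by position.
by_class = dict(zip(vocab, probs))
predicted = max(by_class, key=by_class.get)

for label, p in by_class.items():
    print(f"{label}: {p:.4f}")
print(f"Predicted: {predicted}")
```

Under these assumptions the label and the index agree, so the 0.7737 really is a Telecaster probability and the model is simply wrong on this image, rather than the printout being misread.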

Finally, I leave the link to access the colab notebook where all the training code is located.

If anyone can spot why the model fails on a picture of the second brand, comments would be appreciated.

We are still learning!

See my colab notebook Overview example article

See my fastpages Overview example article

Built a leafy greens classifier: It classifies between sorrel leaves, fenugreek and amaranth

The intention is to help myself classify and name the right leafy greens, as it was very confusing.

I used fastai to sort DICOM spine X-rays by body part and orientation. I needed to adapt the PILDicom class in order to apply the DICOM metadata values for Slope/Intercept and Window Center/Level. Using only 250 sorted images, resnet34, and 10 epochs on my MacBook Pro, the model yielded 100% accuracy.
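For readers unfamiliar with those DICOM values, the sketch below shows the usual idea in simplified form: stored pixel values are mapped to real-world units with the rescale slope and intercept, then a window (a center and a width) selects the intensity range to display. This is not the adapted PILDicom class from this post. The function name, the sample numbers and the simplified linear window formula are all assumptions for illustration.

```python
def apply_rescale_and_window(pixels, slope, intercept, center, width):
    """Map raw stored values to 0-255 display values (width must be > 0).

    Step 1: value = raw * slope + intercept   (rescale slope/intercept)
    Step 2: clip to [center - width/2, center + width/2], scale to 0-255.
    """
    low = center - width / 2
    high = center + width / 2
    out = []
    for raw in pixels:
        value = raw * slope + intercept
        clipped = min(max(value, low), high)
        out.append(round((clipped - low) / (high - low) * 255))
    return out


# Hypothetical values for illustration, not taken from any real X-ray.
display = apply_rescale_and_window(
    [0, 1000, 1400, 2000, 4000],
    slope=1.0, intercept=-1000.0, center=500.0, width=1000.0,
)
print(display)
```

Values below the window come out black, values above it come out white, and everything in between is spread across the grey levels. That is why applying the stored window can matter before images are fed to a model.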

Testing on 300 images showed 3 thoracic spine X-rays that mistakenly ended up in the prediction dataset and one frontal LSpine that was only labeled as ‘frontal’ but had no body part designation. All other images were correct on visual validation.

The data came from VinDR-SpineXR. Project notebook and labeled data csv are available here: GitHub - anoukstein/Sort-CSpine-LSpine-Xrays-with-Fastai: Sort CSpine & LSpine Xrays with Fastai using data from VinDr-SpineXR

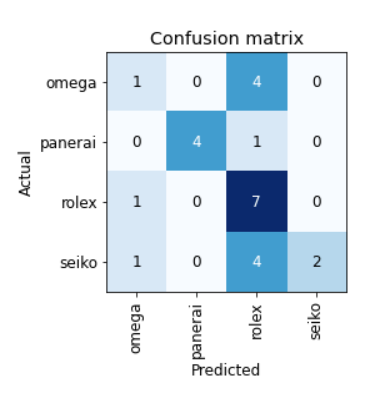

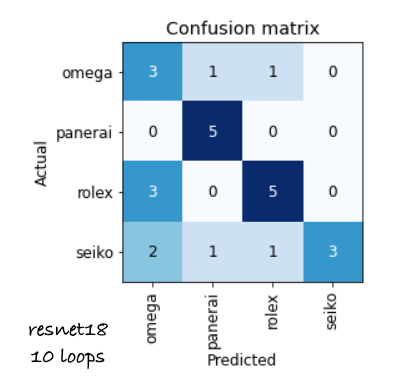

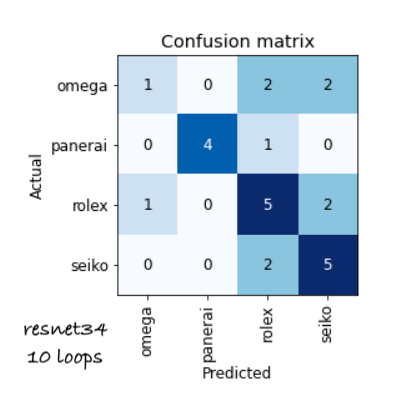

I used Fastai to distinguish amongst 4 types of watches: Rolex, Panerai, Seiko, and Omega. With my model, quite often Omega and Seiko watches were misclassified as a Rolex. Panerai watches were always correctly classified; this seems reasonable since a Panerai has quite a distinctive shape compared to the other three. Rolex watches too were always correctly classified except once (misclassified as a Seiko). I’m a watch guy so I quite enjoyed this experiment!

These results were with resnet18 and 4 training epochs.

With 10 epochs (still resnet18), accuracy went up considerably.

And finally with resnet34 and 10 training epochs, not much difference in accuracy.

I have updated the SSD notebook from the 2018 course to make it work with the latest fastai version. SSD Implementation using fastai · GitHub

I will try to create a complete blog post for this later using nbdev.

Hope it helps

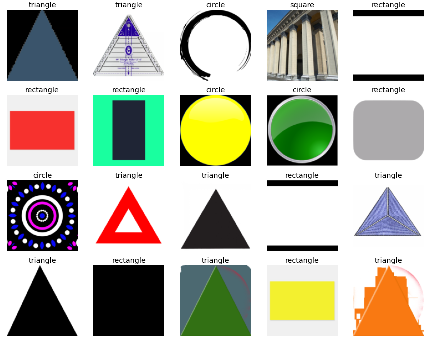

My first model (and webapp) ever: Shapes - a Hugging Face Space by mvda

It detects shapes (squares, rectangles, triangles, circles). The accuracy is not bad, considering the duckduckgo training data:

Trained on about 100 images of each type, after cleaning out roughly 10 bad samples. It struggles with squares. Using resnet18 transfer learning (4 epochs), as in Chapter 2 of the amazing course. I did not use augmentation, as I thought it may distort the shapes too much.

Hey hey, didn’t go too far and built a bird species classifier that works surprisingly well.

Found a dataset on kaggle and tried data resizing and augmentation.

Check it out here - Fn - a Hugging Face Space by vladisov

Is it a wildfire or a forest? My first AI model. I was able to do this in a couple of minutes, which is absolutely fascinating. Is it a wildfire? | Kaggle

If anyone else is interested in climate action projects, please feel free to contact me.

I’m currently building a model that can detect whether a person is smiling, using the tools I learned about in the first 2 weeks (and a little bit more).

Feel free to look at my Kaggle notebook:

Smile or not Smile notebook

Hi there! I started tracking my mouse movements and created a crude dataset on my state of mind based on my mouse scrolls - Normal/Browsing, Rest(watching Netflix or listening to Spotify), Stressed(Unable to solve some problem, can’t find what I was looking for etc).

Based on this data, I created a small classifier.

Here is the relevant data - Mouse Movement | Kaggle

I will keep on updating this dataset and see if the results improve.

Best,

Prashant