I cannot reproduce your results, and nobody on Kaggle reports results this good either. I believe there could be a mistake in how the data was downloaded or loaded. From the confusion matrix you can see that the validation set has more than 1000 samples, but the original data has 624. If you run data.valid_ds, data.train_ds in your notebook you should see something like this:

(DatasetTfm(ImageClassificationDataset of len 624), DatasetTfm(ImageClassificationDataset of len 5232))
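
A quick way to run the same check from the confusion matrix itself, as a minimal sketch: the entries of a confusion matrix add up to the number of validation samples, so the total can be compared with the expected size of the validation set. The matrix below is invented for illustration and is not taken from the notebook; n_samples is a hypothetical helper.

```python
# Hypothetical 2x2 confusion matrix (rows: actual class, columns: predicted class).
# The counts are made up for illustration only.
confusion = [
    [200, 34],
    [15, 375],
]

def n_samples(matrix):
    """Total number of predictions counted in a confusion matrix."""
    return sum(sum(row) for row in matrix)

expected_valid_size = 624  # validation set size quoted in the post above
total = n_samples(confusion)
print(total, total == expected_valid_size)
```

If the total is well above the expected size, the train/valid split was probably built differently from the original one.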

Hi Stefano,

thanks! It’s very clear and very useful.

I noticed that you didn’t fine-tune the resnet50 model by calling learn.unfreeze(). Could you please explain why? Did you try but it ‘broke’ the resnet50 weights?

Also, I was wondering: when you don’t call learn.unfreeze(), what does it mean when you specify max_lr=slice(1e-3,1e-2) in learn.fit_one_cycle()? Does it mean that a learning rate of 1e-3 is assigned to the penultimate FC layer (the one with 512 output activations) and 1e-2 is assigned to the very last FC layer (with 10 output activations)?
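
For what it's worth, here is a small sketch of how I understand the slice to be interpreted. This is an assumption worth checking against the source of Learner.lr_range in fastai v1: the rates are spread geometrically over the learner's layer groups, not over individual layers, and a resnet built with create_cnn is assumed here to have three groups (two halves of the body plus the head). spread_lrs is a hypothetical helper written for this post, not a fastai function.

```python
def spread_lrs(start, stop, n_groups):
    """Geometrically spaced learning rates from start to stop, one per layer group."""
    if n_groups == 1:
        return [stop]
    ratio = (stop / start) ** (1 / (n_groups - 1))
    return [start * ratio ** i for i in range(n_groups)]

# Three layer groups is an assumption about a resnet created with create_cnn.
lrs = spread_lrs(1e-3, 1e-2, 3)
print(lrs)
```

Under that reading, both FC layers of the head sit in the last group and would share the 1e-2 rate, while the smaller rates would matter mainly once the body is unfrozen.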

Hi, @radikubwa and @itsmuriuki made a mosquito species classifier. We haven’t tuned it much yet, but we are getting an accuracy of ~60. It struggles because the organisms belong to the same genus, for instance the gambiae and funestus species. We are still working on improving it. Check it out here: https://gist.github.com/itsmuriuki/c67c76b46945e3b4dc8d9756867eb51d.

google-image-download really helped us out for this one.

I’ve started working on the Quick Draw competition dataset; here is a notebook with my attempt to train a simple classifier based on resnet34.

Here is a notebook with an analysis of the trained model. Or rather, the first steps of one: I get out-of-memory errors (RAM) when trying to use the model interpreter class. The training process is implemented as a standalone script in the same folder.

Nothing fancy, I would say. The main point of interest is that I generate images on the fly from strokes instead of saving them to disk. The main reason is to save time: I am training the model on a small subset of the data (340,000 images, 1000 per category), and a huge pile of image files would take up a lot of disk space.

Here is a fragment of the dataset class I’ve created:

    @fastai_dataset(F.cross_entropy)
    class QuickDraw(Dataset):

        img_size = (256, 256)

        def __init__(self, root: Path, train: bool=True, take_subset: bool=True,
                     subset_size: FloatOrInt=1000, bg_color='white',
                     stroke_color='black', lw=2, use_cache: bool=True):

            subfolder = root/('train' if train else 'valid')
            cache_file = subfolder.parent / 'cache' / f'{subfolder.name}.feather'

            if use_cache and cache_file.exists():
                log.info('Reading cached data from %s', cache_file)
                # workaround to deal with pd.read_feather nthreads error
                cats_df = feather.read_dataframe(cache_file)
            else:
                log.info('Parsing CSV files from %s...', subfolder)
                subset_size = subset_size if take_subset else None
                n_jobs = 1 if DEBUG else None
                cats_df = read_parallel(subfolder.glob('*.csv'), subset_size, n_jobs)
                if train:
                    cats_df = cats_df.sample(frac=1)
                cats_df.reset_index(drop=True, inplace=True)
                log.info('Done! Parsed files saved into cache file')
                cache_file.parent.mkdir(parents=True, exist_ok=True)
                cats_df.to_feather(cache_file)

            targets = cats_df.word.values
            classes = np.unique(targets)
            class2idx = {v: k for k, v in enumerate(classes)}
            labels = np.array([class2idx[c] for c in targets])

            self.root = root
            self.train = train
            self.bg_color = bg_color
            self.stroke_color = stroke_color
            self.lw = lw
            self.data = cats_df.points.values
            self.classes = classes
            self.class2idx = class2idx
            self.labels = labels
            self._cached_images = {}

        def __len__(self):
            return len(self.data)

        def __getitem__(self, item):
            points, target = self.data[item], self.labels[item]
            image = self.to_pil_image(points)
            return image, target

        def to_pil_image(self, points):
            canvas = PILImage.new('RGB', self.img_size, color=self.bg_color)
            draw = PILDraw.Draw(canvas)
            for segment in points.split('|'):
                chunks = [int(x) for x in segment.split(',')]
                while len(chunks) >= 4:
                    line, chunks = chunks[:4], chunks[2:]
                    draw.line(tuple(line), fill=self.stroke_color, width=self.lw)
            image = Image(to_tensor(canvas))
            return image

I’ve tried to make the dataset class compliant with fastai, but I am not sure it works as expected. I guess some learn methods could fail on my dataset class.
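
To make the stroke handling in to_pil_image easier to follow, here is a standalone sketch of just the parsing step, without PIL. It assumes the points string has the form 'x1,y1,x2,y2,...|x1,y1,...' with strokes separated by '|', which is inferred from the split calls in the class; stroke_to_segments is a hypothetical helper name.

```python
def stroke_to_segments(points):
    """Split 'x1,y1,x2,y2,...|x1,y1,...' into (x1, y1, x2, y2) line segments."""
    segments = []
    for stroke in points.split('|'):
        coords = [int(x) for x in stroke.split(',')]
        # Slide a window of two points along the stroke, advancing one point
        # at a time, so consecutive segments share an endpoint.
        while len(coords) >= 4:
            segments.append(tuple(coords[:4]))
            coords = coords[2:]
    return segments

print(stroke_to_segments('0,0,10,10,20,0|5,5,6,6'))
```

Each tuple is what gets passed to draw.line in the class, so a stroke with n points produces n - 1 connected segments.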

The work is still in progress. Next, I am going to take a bigger subset of the data, and probably convert the strokes into image files. Do you think that reading files from an SSD would be faster than generating b/w 256x256 images dynamically?

Also, I am trying to play with the iMaterialist Furniture dataset and getting a lot of image download failures. I am using this script to download a single image. It uses concurrent workers, a VPN proxy, and the requests library, but still fails often. I guess it is not a big deal to skip some files from the training dataset, but I would like to have all the samples from the test set.
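
One pattern that might help is to retry each download a few times and collect the URLs that still fail, so that only those need another pass. The sketch below is not the script mentioned above; fetch_with_retries and download_all are hypothetical helpers, and flaky_fetch is a stand-in for a real HTTP call such as requests.get(url).content, so the example runs without network access.

```python
from concurrent.futures import ThreadPoolExecutor

def fetch_with_retries(url, fetch, retries=3):
    """Call fetch(url) up to `retries` times; return its result or None."""
    for _ in range(retries):
        try:
            return fetch(url)
        except Exception:
            continue  # try again until the attempts are used up
    return None

def download_all(urls, fetch, retries=3, workers=8):
    """Download urls concurrently; return (results dict, list of failed urls)."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        contents = list(pool.map(lambda u: fetch_with_retries(u, fetch, retries), urls))
    results = {u: c for u, c in zip(urls, contents) if c is not None}
    failed = [u for u, c in zip(urls, contents) if c is None]
    return results, failed

# Stand-in for a real HTTP call: every URL fails on its first attempt,
# and one URL fails permanently.
attempts = {}
def flaky_fetch(url):
    attempts[url] = attempts.get(url, 0) + 1
    if url.endswith('broken.jpg') or attempts[url] < 2:
        raise IOError('download failed')
    return b'image-bytes'

urls = ['http://example.com/a.jpg', 'http://example.com/broken.jpg']
results, failed = download_all(urls, flaky_fetch)
print(sorted(results), failed)
```

The failed list can be fed back into download_all later, which is useful for the test set where every sample matters.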

For aviation enthusiasts, I’ve created a project that classifies aircraft into civilian, military (manned) and UAV (unmanned) categories, using 2500 images for each category. I was interested in seeing how accurate it could end up being.

I’ve written the following short Medium post describing some of the details. The accompanying notebook can be found at this gist.

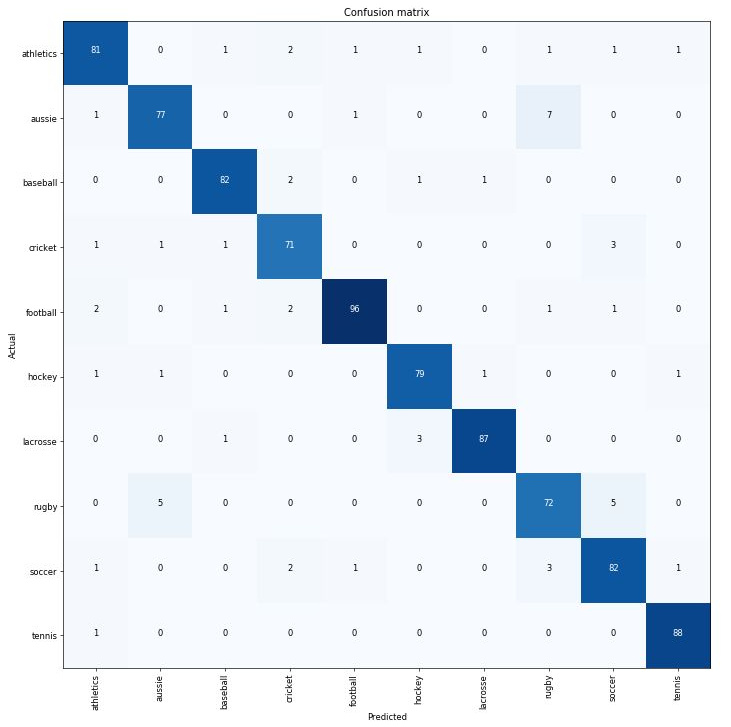

I got to 93% with my sports action classifier. Most of the errors are around Rugby, Australian Rules Football and Soccer - not helped by my dataset having a few Gaelic football images too. The code to see the image names was super helpful for finding the obviously mislabelled images:

interp.data.valid_ds.x[interp.top_losses(25)[1]]

and I’m sure some are still wrong - probably need to identify the team shorts to differentiate some of the games!

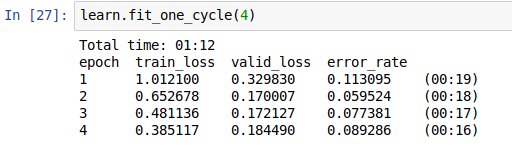

Just used the Lesson 1 flow - with resnet50, 6 cycles and 2 fine tuning cycles.

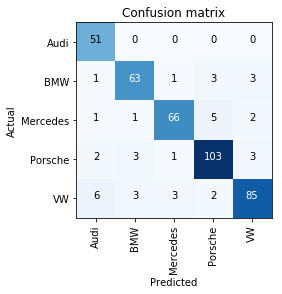

And here is the confusion matrix

I started a blog and wrote my first entry:

- Discussed how I used fastai to create a classifier for 5 blue jay species.

- Gave a friendly explanation of topics like: image augmentations, transfer learning, one-cycle policy.

- Most importantly, I talked about how I almost got intimidated out of beginning to study deep learning.

- And how I realized first-hand that deep learning’s usefulness and impact are commensurate with how many “real folks” understand it’s a tool they can apply.

I would appreciate it if you could take a moment to read it and let me know if you have any feedback, suggestions or questions.

Thanks so much,

-James

(Details if interested: my notebook is here; ResNet50 backbone with around 400 images used for training, curated manually; after 20 epochs of training, 0.05 validation error rate)

That’s really impressive. BTW you may want to add your twitter handle to your medium profile so when it’s shared you get mentioned automatically. Here’s my tweet:

Thanks Jeremy for reading, and really touched that you shared it on Twitter! Also good advice – I just went ahead and added my twitter handle to my medium profile.

Distinguishing Lionel Messi from his almost identical lookalike using the fastai library, with only 60 training images and 92.5% accuracy.

I scraped Leo Messi’s official Instagram page and Reza Parastesh’s official Instagram page.

From each of them I extracted 50 photos, adding 30 to the train folder and 20 to the valid folder.
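
As a minimal sketch of that 30/20 split (the post does not say how the photos were actually assigned, so the random shuffle here is an assumption), something like the following would do it per person. The file names and split_train_valid are hypothetical.

```python
import random

def split_train_valid(items, n_train, seed=42):
    """Shuffle a copy of items and split it into train and valid lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    return shuffled[:n_train], shuffled[n_train:]

# Hypothetical file names standing in for the 50 photos of one person.
photos = [f'messi_{i:02d}.jpg' for i in range(50)]
train, valid = split_train_valid(photos, n_train=30)
print(len(train), len(valid))
```

Fixing the seed keeps the split reproducible, which matters with so few images because a different split can noticeably change the error rate.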

The data is available at this URL.

Here is the Medium post I wrote about it.

Here is the gist:

I’ve tried to fine-tune, but error_rate didn’t improve. In my experience, a completely unfrozen model tends to be “unstable” and hard to train if you have a “small” training set (probably due to the large number of parameters to learn, even for a resnet architecture).

I think this is the reason why in lesson 1 the model is unfrozen only after training it with a fairly large LR.

I think that when the images you’re classifying are “similar” to the ones the model was pretrained on (ImageNet in this case), you need to unfreeze only the last layers, because the first ones (at least 50%) are already good enough.

If you’re going to classify completely different images (e.g. skin lesions or aerial photos), it’s probably better to unfreeze almost all layers early on.

On that note, I’ve updated the example to the new “create_cnn” syntax and trained again from scratch using an approach similar to lesson 1, but unfreezing ONLY the last 50% of the layers. In the end the error rate increased a bit (22 errors instead of 18 in the previous version), but on submission Kaggle gave me the same score…

The differences are probably due to randomness in the train/valid split…

I think so as far as I understand…

I’m not sure that applying transformations would help here. Spectrograms are not photographs of objects that can usually appear in different orientations…

@gianferrarif Thanks! Do you know how I can turn off transformations?

How did you rank?

Take a look at something like

tfms = get_transforms(flip_vert=False, do_flip=False, max_zoom=1.0)

Look at the signature of get_transforms and switch everything off, or try passing None.

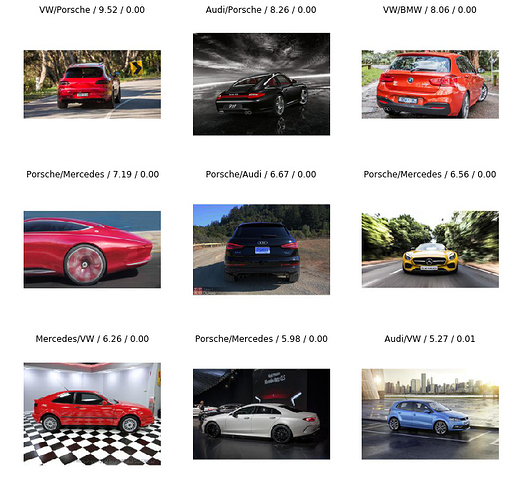

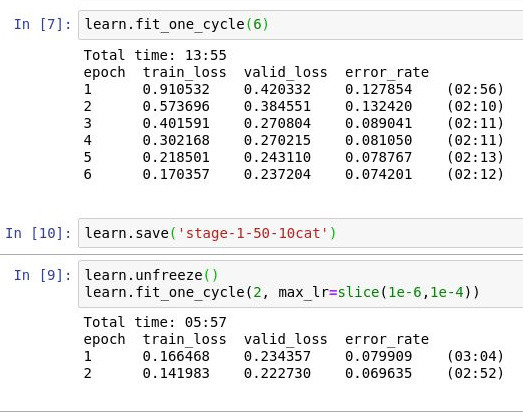

90% accuracy in classifying German cars by brand (Audi, BMW, Mercedes, Porsche and VW).

2600 pics total, 405 validation set.

Both times (original version with no unfreeze and unfreeze 50%):

Rank: 238/1440

Score: Private 0.35969, Public 0.48200, always using TTA for predictions.

There’s a lot of room for improvement (ie: boosting) and for “tricks” (like using test images), but for now I want to stick to “lesson 1” arguments to understand how far a “default” model can go.

Hi,

Good question, I was trying to play with it too.

It looks like the most minimal workable set of transforms you have to apply is:

tf = get_transforms()[1][0]

data = ImageDataBunch.from_folder(path, ds_tfms=[tf,tf], size=224)

Cheers

Michal

I just tried classifying Tesla cars using the Resnet34 model. I was curious how the Resnet34 model would perform given how similar the Model3 and ModelS cars are. I was quite surprised at the results :).

I ignored all the download errors related to content size and so on, which goes to show how robust the model is. I tried to increase the fit_cycle to 8 but started running into CUDA OOM errors. I am using GCP. Anyway, it was a great experience. One mistake I noticed is that I failed to change the learning rate interval. I tried to correct this but keep hitting CUDA OOM errors. I will keep trying and share the results.

I reused the download_images.ipynb notebook but cleaned it up a bit. My results: