Well, it’s better, but you are comparing with a 2009 paper, from before the spread of CNNs, which gave a general boost to classification performance. To get an idea of the state of the art, I would look for more recent papers that cite this one. For example, https://arxiv.org/pdf/1801.06867.pdf reaches 86%, but you would have to read the details to see whether it is fully comparable with the original paper.

An improved version, but still not good enough.

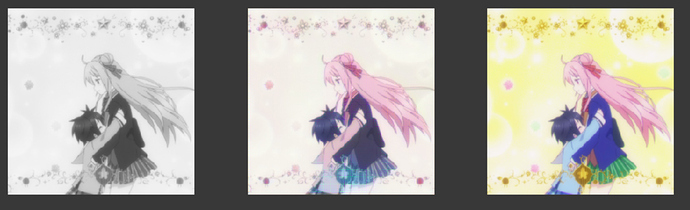

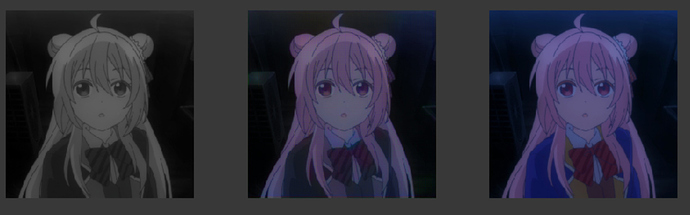

It recolors black-and-white images.

Columns, left to right: black-and-white input, output color, correct color.

Below is the result before the improvement, so you can see that it solved the fuzzy-eyes problem. (Or perhaps that could also be fixed by training for more epochs; I am not sure. If so, please tell me, because I can only train for very few epochs on Colab.)

In addition, if you look below, you can see that before the improvement it could not recolor the bow-knots and hair accessories. Or maybe I shouldn’t call it an improvement; it is more like a trick from a dumb guy.

Before I tried to improve it, without a large dataset, it could not color hair accessories, bow-knots, and more. Now it can color bow-knots. It seems that a larger dataset is not strictly needed to color smaller items, and I can select which objects get colored. However, it is still unable to recognize many objects other than people.

I think I will pause this project for the moment because I won’t have time for it.

Hi everyone,

Just wanted to share a blog post about an app my team members and I worked on at a hackathon this past weekend. Our project uses fastai and your webcam feed to detect whether or not you are getting distracted while studying.

Thanks for the feedback! I’ll definitely look at the paper you linked.

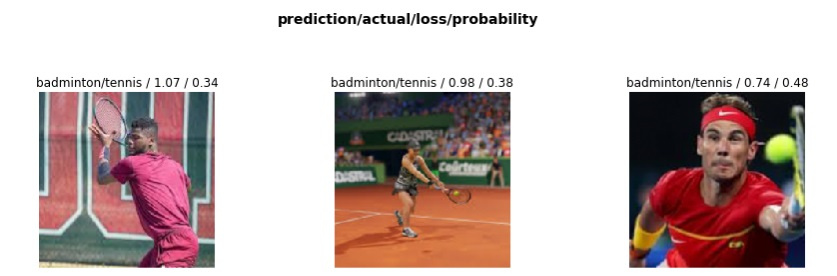

Badminton or Tennis Image Classification with 50 images

Hi Friends!

I made an image dataset by downloading 50 images each of tennis and badminton games in action from Google, and then created an image classification model following chapter 1. The accuracy of my classification model is 85%.

The images it did not predict correctly are shown below:

I think accuracy could be improved by adding more examples to both the tennis and badminton classes.

The whole notebook can be viewed here https://github.com/raja4net/badminton_tennis/blob/master/badminton_tennis.ipynb

Suggestions and feedback are welcome!

Following the lesson 2 notebook, I created a classifier that distinguishes passenger aircraft from fighter jets and deployed it to an Azure Container Instance.

http://tarun-ml.eastus.azurecontainer.io:5000/

Dataset: Google Images

Link to notebook: https://github.com/tarun98601/machine-learning/blob/master/fastai-v3-course/src/notebooks/Copy_of_lesson2_download.ipynb

I trained a model to recognize fields, forests, and urban areas in Google Maps images (you can try it out yourself): blog.predicted.ai

I also got a little carried away and wrote a new tool for creating segmentation datasets: image.predicted.ai

For the IMDB classifier from Lesson 3 (also covered in Lesson 4), I tried an interesting twist. There is an absurdist Twitter account, @dril.

might passive aggressively post "Oh! The website is Bad today" if i dont get some damn likes over here. just constantly treated like a leper

— wint (@dril) January 29, 2020

There is also a parody account that posts tweets generated by a GPT-2 model trained on dril’s tweets.

worry not. we have invented a new class of intellectual whose sole job will be to shit while wearing sweatpants

— wint but AI (@dril_gpt2) February 18, 2020

I have made a classifier to tell their tweets apart: dril-vs-dril_gpt2 | Kaggle

I was only able to get it to 80% accuracy, and fine-tuning does not improve the score. Still, this is pretty good, since a human wouldn’t be able to do it this well.

Any tips to improve further?

I am loving this fastai course by Jeremy! I have made my own drone classifier using fastai and deployed it on Render: Drone Classifier. Please take a look at it.

My drone classifier takes in a drone image, classifies it as one of the following, and outputs the class:

1) Single-rotor drone

2) Multirotor drone

3) Fixed-wing drone

Hi Ungast, hope you’re having a beautiful day!

I had a look at your app and really like the way you have designed it with a little privacy in mind. It makes a refreshing change from the majority of apps, which in reality are nothing but data-gathering apps!

Cheers mrfabulous1

Taking inspiration from the Part 1 course, I have tried to explain the high-level structure of a CNN in a beginner-friendly way, without delving into all the minute technical details. The blog post can be found here

Hi sapal6, hope all is well!

A wonderfully clear and concise explanation.

I wish all writing was as clear and concise.

Cheers mrfabulous1

Thanks a lot. I hope I was able to convey the message properly.

I have created a plant disease detection model using fastai. Just by tuning the learning rate, I achieved an accuracy of 99.1%, while the state of the art is 97%. I did not even use a deeper network like ResNet-50; I just used ResNet-34.

Are you using the PlantVillage dataset? Every paper I have seen reports 99+% accuracy.

Nope, it’s the dataset from Kaggle. Its highest reported accuracy is 97.4%.

By the way, do you know how I can get access to the PlantVillage dataset? I found out it is no longer public. I emailed them about the dataset but have had no reply yet. It would be helpful if you could point me to it.

You can get it from Kaggle https://www.kaggle.com/emmarex/plantdisease

Is it the complete dataset? I can only find pepper, potato, and tomato.

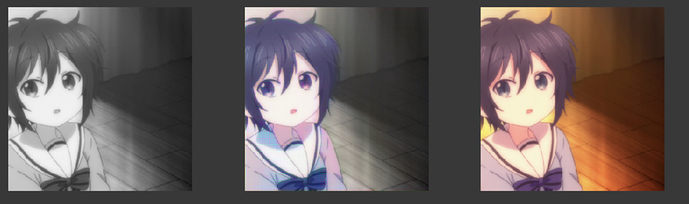

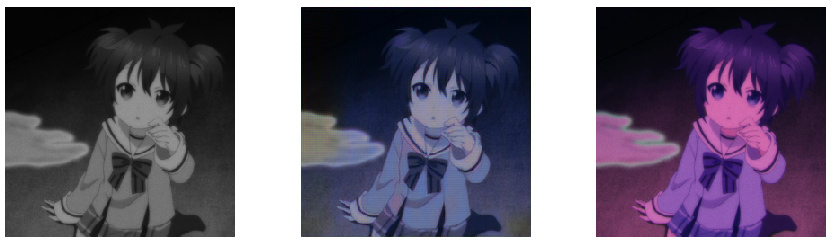

I deleted this post earlier, but I decided to post it again because it is a pretty interesting project.

It is a recoloring model, but it focuses on recoloring the skin in the style and color we want. The dataset has only 200 images, so it sometimes changes the hair color as well, except for hair such as black hair. In fact, the model was trained to focus only on skin.

Please note that these images are from the validation set, so they count as unseen images.

I will post more images processed by this model.

After

Before