I’m 2 weeks into the course and I took part in this online hackathon and with the help of the fastai library I quickly got into the top 2%. Find my article about the same here.

I’m rather humbled by some of the clever uses folk here have managed to build with CNNs right out of the gate. Me? I built a Japanese noodles classifier.

Granted, it’s probably not going to change the world, but it might help you decide on lunch? (within a very limited domain).

Hi everyone,

I am taking the course online and finished lesson 1 the last week. I decided to give it a try in a dataset that collaborators and I compiled of insect eggs to study their evolution, recently published as a preprint and currently in press:

Church SH, Donoughe S, de Medeiros BAS, Extavour CG. 2018 . A database of egg size and shape from more than 6,700 insect species. bioRxiv: 471953. doi:10.1101/471953.

Eggs are probably one of the less studied life stages in insects and it is hard even for insect experts to tell to which kind of bug most eggs belong. We built a visualization of egg shapes and images based on information in the literature (available at https://shchurch.github.io/dataviz/index.html) and now I used the images available there to build a classifier of insect orders. Orders are very large taxonomic groups, so this is something like telling apart a beetle from a moth, or a dragonfly from a cricket, based on the eggs. The images are highly heterogeneous, with some being color photographs, others line drawings and some microscopy. I did not filter the data and really did not expect much, but turns out the classifier could reach almost 80% accuracy just by adapting lesson 1 code for dog and cat breeds. Really exciting, looking forward to learn more.

The notebook can be found here: https://github.com/brunoasm/fastai_course/blob/master/lesson_1/lesson1-homework.ipynb

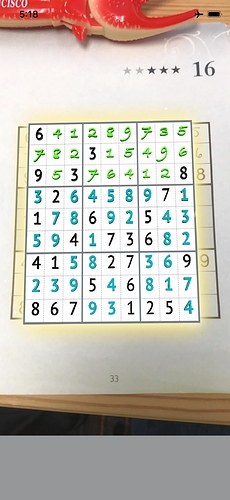

I’ve been working this week on using fast.ai to enhance my app, Magic Sudoku, which scans and solves Sudoku puzzles and displays that solution over the top of the real one in AR.

When I made the app in 2017 (my writeup is on Medium here) I didn’t really understand how the ML part worked – I just bumbled through adapting a Keras MNIST tutorial to train my on my own dataset of scanned puzzles.

Now that I’ve been watching the fast.ai videos, I feel like I have a much better understanding of what’s going on and am starting to customize my model to do new things.

One of the things I’ve wanted to do for a while now is expand my model from 10 classes to 20 to allow it to read (and differentiate between) handwritten and computer digits. This will let me expand the capabilities of the app beyond just solving empty puzzles to letting people scan their completed puzzles to check their work and scan their in-progress puzzles to see if they are on the right track and get hints without having to look at the full solution in the back of the book (spoilers!).

So far the hardest part has been getting my new model to play nicely with Apple’s CoreML and Vision libraries. I finally got that working this weekend though and am finally seeing the results! Here’s a puzzle I scanned with my newly-improved fast.ai-trained model.

Next up on my to-do list is:

- Writing the actual app features that use the new data from the model

- Speeding up inference (right now it’s doing 1 square at a time)

- Better data augmentation (right now it’s not doing any)

- Trying other model architectures (currently using resnet34 starting from pre-trained weights but thinking that may be overkill for such small images)

- And possibly collecting more real-world data depending on how it does with some random guinea pigs’ handwriting (may end up using MNIST data in my model as well but the format is slightly different).

I’m excited to keep working on more projects with fast.ai. I’ve got about a hundred ideas… but I already know the next 2 projects I’m going to be working on this spring!

This is pretty cool!

i note you’re recognising the characters and then using a recursive algorithm to solve the puzzle before projecting the solution via AR. I’m mentally trying to work out whether it’d actually be possible to solve sudoku puzzles with a NN via multi-label classification alone, given enough layers and a gargantuan amount of training data (a crazy idea, but part of me wonders if it’s actually possible).

Hey guys!

I just completed fast.ai lesson 1 and decided to make a car identifying DL model. I used a Kaggle Kernel to train a model from a dataset comprised of google images of 6 different cars (accord, civic, altima, corolla, models, and charger).

Here is my code: https://www.kaggle.com/ashuaib/car-classification-w-fastai

I’m getting about 80% accuracy–is that fine? If not, is it my data? the model? overfitting?

Would appreciate it if someone could check it out and give feedback.

Thanks!

This is really cool! esp. the AR.

Do you mind sharing what you did to take pytorch model to apple .mlmodel? This will be very helpful for others who want to try their app on iOS.

I mostly followed @jalola’s writeup here:

I spent a lot of time experimenting with the steps to make sure they all still applied to PyTorch and fast.ai v1 since the article was written a while ago.

Hangups I had were

-

coremltoolsdoes not work with Python 3.7, I had to re-install everything with 3.6 - I found some stuff indicating that the

AdaptiveMaxPoolstuff had been integrated intoonnx-coremlby now and by re-building some things from source I was able to get the model converted without it complaining about these layers. This turned out to not work in the end (CoreML spat out a cryptic error which appears to mean that it was unable to put the inference ops on the GPU) and I had to start over again. As far as I can tell you do still need to add theMyAdaptive***Pool2dclasses as the writeup describes. - I spent a good deal of time trying to use the CoreML

preprocessing_argsto get my real-world data to match the format the model was trained on. Mostly my problems were due to inexperience (I didn’t realize that I had to call.normalize(imagenet_stats)on my data prior to training so I spent a lot of time trying to match the actual data to something that the model wasn’t matched to at all). In the end, I re-trained my data with normalization and used @jalola’sImageScalelayer at the beginning of the network, then I added the ImageNet RGB bias values in that layer as well. - CoreML initially didn’t recognize my model as accepting an Image so I spent a good deal of time trying to get my data formatted as an

MLMultiArray--> this is very poorly documented and I never did end up getting it to work properly. The solution was to addimage_namesandmode="classifier"like the writeup told me to do (I had skipped that step because I didn’t think it’d be important to get things working… I thought I’d come back and clean things up after I got something working… turns out it was an important step and is actually called out in the writeup; I just glossed over that part the first time). - I had trouble with the

dummy_inputshape. The writeup has a sneaky.unsqueeze(0)slipped into theImageScalefunction. So when I got rid of that to experiment withpreprocessing_argsI had to re-shape thedummy_input. - In v1, the

LogSoftmaxlayer is not actually included in the network so you don’t need to remove the last layer of the Resnet CNN before adding yourSoftmaxlayer.

So the above can basically be summed up as “if you follow the writeup it will go pretty smoothly”. But on the bright side, wrestling with it for a couple of days gave me a lot of understanding about what’s actually going on.

Thanks a lot for your post on this. Does coremltools still work at python 2.7?

I believe so but fastai v1 requires 3.6 or higher.

Ok, I have a discussion with someone here and we think it may be good to have a more update to date tutorial on how put people project on mobile device. Not sure if you are interested. I am not sure when I can come around to do this. I have experience only with Keras model.

Also, take a look at:

and chime in your thinking, since you are mobile app dev.

@kechan - I think that must be the wrong link; doesn’t look related.

The parameter changed recently to ds_tfms - so use ds_tfms=get_transforms() should work.

I used fastai to create a classifier for white blood cell images from a Kaggle dataset. I was able to get about 87% accuracy, which is not bad! However, I am struggling to improve those results so if someone has suggestions please let me know!

Sorry, I switched topic in th middle. I m talking about Gaussian blur as a great data aug when you try to do inference on mobile image input.

Ah, unfortunately I’m not doing any data augmentation yet. I have plenty of blurry data from my actual captures so it probably won’t be something I try in the near term.

Hello,

I created a skin lesion classifier from the ISIC Skin Lesion Challenge data set. I can’t say it’s state of the art but I did get 94% accuracy on a 2,000 image holdout set. I’m really proud of it and how easy it was to get such great results so quickly. I’ll probably come back to this in time to try and improve upon it but I wanted to release it and see if anyone wanted to check it out and give any kind of feedback!

The notebook can be found here: https://github.com/DKilkenny/ISIC-2018-Skin-Lesion-Classification

I have classified the oxford based dataset this time for flower and got less accuracy, I believe my images were blur and data was not prepared correctly. Any thoughts and feedback is recommend.

had a look at your notebook. you could probably get better results by :

- experimenting with the arguments to get-transform (why are you setting filip off ?)

- let the training run for more epochs

After lesson 4, I was really keen to try building my own NLP / tabular / collaborative model.

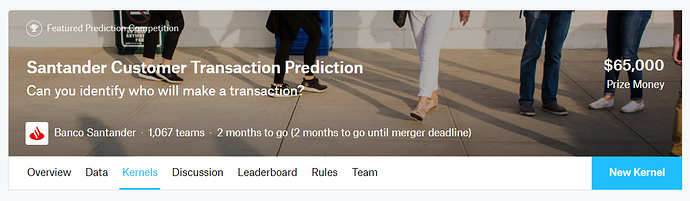

As luck would have it, I got an email from Kaggle today announcing a new competition with tabular data. The competition requires you to use tabular data to predict whether Santander bank customers will buy products in the future. This sounded quite similar to the tabular example from the lecture, so it seemed like a good problem to try out.

Technical details:

- I built a tabular model, which had ~91.6% accuracy on my validation set (training with 4 epochs at a max learning rate of 5e-3)

- One challenge I ran into was that Kaggle scores this competition with an AUC-ROC metric (AUC = area Under Curve, ROC = Receiver Operating Characteristics). I tried to add this metric myself, and did a bit of Googling to try to find usable code, but wasn’t able to get it to work

- I submitted my model to Kaggle with a score of 0.862, which got me to position 678/1067 on the leaderboard. I might come back to this model in the future after I learn some more optimization techniques (e.g. I don’t know exactly what the ‘layers’ input does when you create a TabularLearner, so I just put in the same [200,100] value from the lecture)

- Code for the model is available at GitHub