Based on the crap to no crap GAN of lesson 7, I tried the same approach to add colors to a crap black & white image. First I downloaded high quality images from EyeEm using this approach.

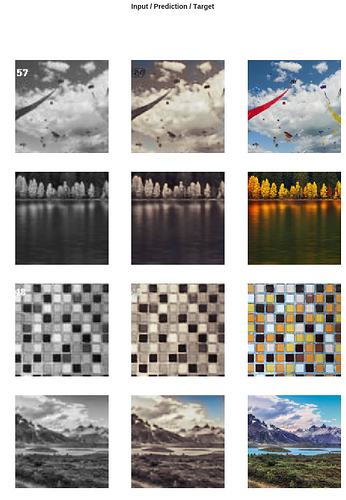

Training the Generator with simple MSE loss function, I got not exciting results (the only thing it learned is sky has to be BLUE  ):

):

Then after adding the Discriminator I and training in a ping-pong fashion, the Generator got better:

Now trying on some test images