I used the CelebA dataset from Kaggle to train a regression model.

`list_landmarks_align_celeba.csv` in the dataset contains the landmarks for each image and their coordinates. There are five landmarks: left eye, right eye, nose, left mouth corner, and right mouth corner.

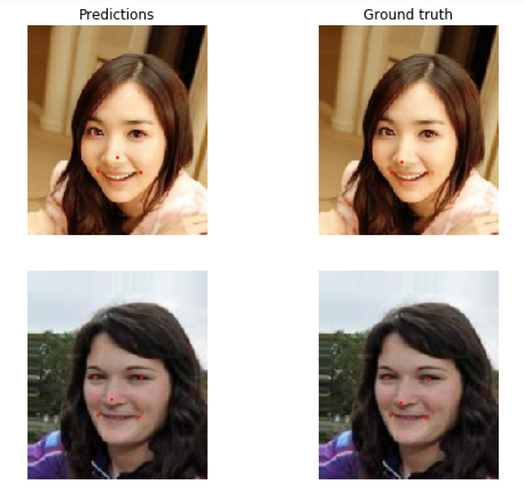
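
To make the landmark layout concrete, here is a minimal sketch of reading the CSV into five (x, y) pairs per image. It assumes the file has an `image_id` column plus `<name>_x` / `<name>_y` columns for `lefteye`, `righteye`, `nose`, `leftmouth`, and `rightmouth`. Those column names are my assumption, so check them against the header of your copy. The helper `read_landmarks` is a made-up name, and the sample row uses invented coordinates rather than real data.

```python
import csv
import io

# Assumed column prefixes in list_landmarks_align_celeba.csv (verify against the real header).
LANDMARKS = ["lefteye", "righteye", "nose", "leftmouth", "rightmouth"]


def read_landmarks(csv_file):
    """Map each image id to a list of five (x, y) tuples, in LANDMARKS order."""
    points = {}
    for row in csv.DictReader(csv_file):
        points[row["image_id"]] = [
            (int(row[f"{name}_x"]), int(row[f"{name}_y"])) for name in LANDMARKS
        ]
    return points


# Tiny in-memory stand-in for the real file; the coordinates are made up.
sample = io.StringIO(
    "image_id,lefteye_x,lefteye_y,righteye_x,righteye_y,nose_x,nose_y,"
    "leftmouth_x,leftmouth_y,rightmouth_x,rightmouth_y\n"
    "example.jpg,10,20,30,20,20,30,12,40,28,40\n"
)
landmarks = read_landmarks(sample)
print(landmarks["example.jpg"])
```

With the real file you would pass `open("list_landmarks_align_celeba.csv", newline="")` instead of the sample. Flattening the five pairs gives the ten numbers a regression model has to predict for each image.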

This notebook attempts to build a learner that predicts those five points. I used a 50,000-image subset of the roughly 200,000 images in this dataset and got good results: