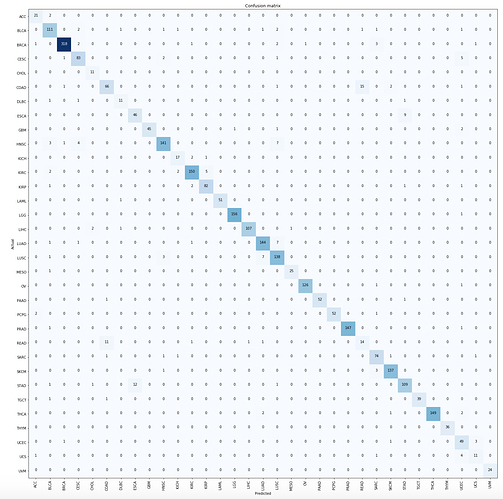

Hi all, I used another problem from the cancer genomics domain: cancer type classification using gene expression data. This is my subject matter, hence almost the same topic for all of my work.

This time, I peeked a bit at the structured data documentation and did not convert the data into images (although I am sure we can represent this data as such). Overall accuracy is 93.9%, a tiny bit better than the recent paper that addressed this problem.

Thanks!