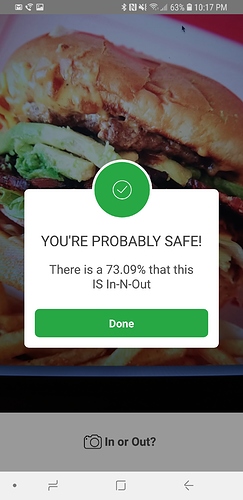

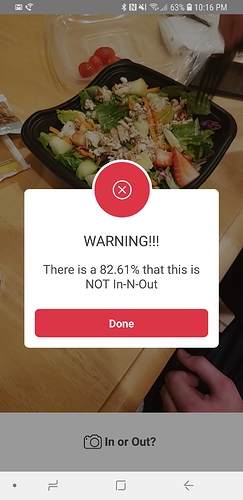

For us folks in Southern California, eating any burger other than an In-N-Out burger is almost considered blasphemy. So what would you say if I told you there is an app on the market that can tell you whether the burger you have been offered is an In-N-Out burger or not?

Wonder no more!

The first public beta of the “In Or Out?” application is now available for Android (download the APK here).

How it works:

- Use your phone to take a picture of your burger, and the application will tell you whether it’s an In-N-Out, and therefore safe for consumption, or whether you should run for the hills.

Some highlights:

- Built using the latest build of fast.ai v1, following the lesson 1 notebook as a template

- Single-image predictions served via a Dockerized Flask API hosted at ZEIT.co (H/T @simonw), using the code described here to run a single uploaded image through my trained model

- Android application developed using React Native
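
To give a feel for the API piece, here is a minimal sketch of a Flask endpoint that accepts one uploaded image and returns a prediction as JSON. It is not the code running behind the app: the `/predict` route, the `file` form field, the response shape, and the `classify_burger` stub are all assumptions made for illustration, and the stub returns a fixed answer where the real service would call the trained model.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)


def classify_burger(image_bytes):
    """Hypothetical placeholder for the trained model.

    A real service would decode the bytes into an image and run it
    through the classifier; this stub always returns the same answer.
    """
    return {"label": "in-n-out", "confidence": 0.5}


@app.route("/predict", methods=["POST"])
def predict():
    # Assumed contract: a multipart form upload with the image in "file".
    upload = request.files.get("file")
    if upload is None:
        return jsonify({"error": "no file uploaded"}), 400

    image_bytes = upload.read()
    if not image_bytes:
        return jsonify({"error": "empty file"}), 400

    return jsonify(classify_burger(image_bytes))
```

Saved as `app.py`, this could be started locally with `flask run` and exercised by posting an image as multipart form data to `/predict`.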

Note: Sometimes the API call times out because it takes ZEIT a while to wake up my API if it hasn’t been used recently. But keep trying, and I promise you that eventually it will work.
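
If you are calling the API from your own script rather than the app, the "keep trying" advice can be automated. The sketch below is one hedged way to do it: retry on timeout with an increasing pause between attempts. The function name `call_with_retries`, the default of five attempts, and the doubling delay are my assumptions for illustration, not something the app itself does.

```python
import time


def call_with_retries(request_fn, attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call request_fn until it succeeds or the attempts run out.

    request_fn is any zero-argument callable that raises TimeoutError
    when the API has not woken up yet. The pause doubles after each
    failed attempt (base_delay, 2 * base_delay, 4 * base_delay, ...).
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")

    last_error = None
    for attempt in range(attempts):
        try:
            return request_fn()
        except TimeoutError as err:
            last_error = err
            # Only pause if another attempt is still to come.
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)
    raise last_error
```

The `sleep` parameter exists only so the pauses can be swapped out, for example in a test; in normal use you would pass just the function that performs the request.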

If you give it a try, I’d love to know how it worked (or failed).