For lesson 3’s homework I had a go at segmentation using the data from the current iMaterialist (Fashion) 2019 at FGVC6 competition on Kaggle.

I thought I’d have a go at using a Kaggle kernel - here, with more detail, for anyone interested.

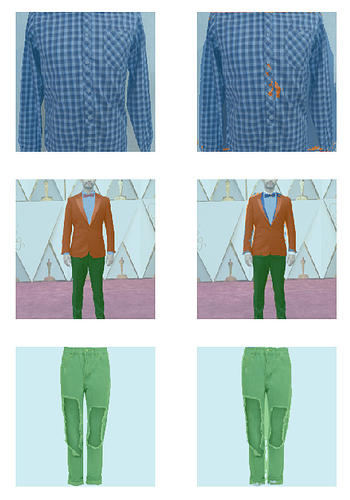

Time, compute and skill limitations meant I had to simplify the task: I used a smaller set of categories, smaller images and just 10% of the training set. I still got what I thought were pretty good results - 88% accuracy (excluding background pixels), and in many cases very reasonable-looking predictions (actual on left, predicted on right).
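For anyone wondering what "accuracy excluding background pixels" means in practice, here is a rough sketch of that kind of metric in plain NumPy. It is not the exact code from my kernel: the function name, the `BACKGROUND = 0` class index and the array shapes are all assumptions for illustration.

```python
import numpy as np

BACKGROUND = 0  # assumed class index for background pixels (hypothetical)

def accuracy_excluding_background(logits, target, background=BACKGROUND):
    """Pixel accuracy over non-background pixels only.

    logits: float array of shape (N, C, H, W) with per-class scores
    target: int array of shape (N, H, W) with true class ids
    """
    pred = logits.argmax(axis=1)      # predicted class id per pixel
    mask = target != background       # keep only labelled (non-background) pixels
    if not mask.any():
        return float("nan")           # nothing to score
    return float((pred[mask] == target[mask]).mean())

# Toy example: one 2x2 image with 3 classes.
target = np.array([[[0, 1],
                    [2, 2]]])
logits = np.zeros((1, 3, 2, 2))
logits[0, 0, 0, 0] = 1.0  # predicts 0 where target is background (ignored)
logits[0, 1, 0, 1] = 1.0  # predicts 1 where target is 1 (correct)
logits[0, 2, 1, 0] = 1.0  # predicts 2 where target is 2 (correct)
logits[0, 1, 1, 1] = 1.0  # predicts 1 where target is 2 (wrong)

acc = accuracy_excluding_background(logits, target)
print(acc)  # 2 of the 3 non-background pixels are correct
```

Masking out the background matters because most pixels in these images are background, so plain pixel accuracy would look good even for a model that predicts background everywhere.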

Really enjoying the practical side of the course, so I’m trying to keep up by doing a personal project each week.