All -

I wanted to share with you all an art project I have been working on: https://www.9gans.com/

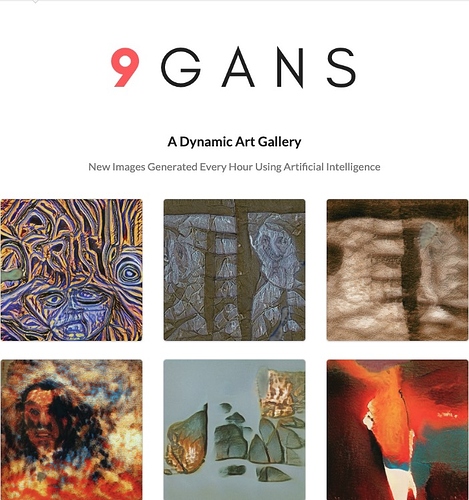

9 GANS is an AI-generated art gallery that refreshes every hour with a completely new and unique collection of 9 images, hence the (bad) name. Let me know what you think.