I procrastinated on posting this project, but it is a fun one: identifying different pieces of cardiac anatomy inside real human hearts. I wrote a longer post about it on Medium. (https://medium.com/@erikgaas/teaching-a-neural-network-cardiac-anatomy-f14f91a4c4bf)

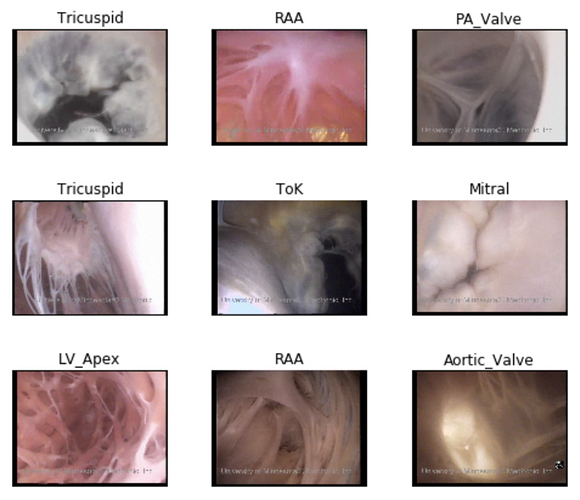

Above are different shots from a camera inside a heart perfused with clear liquid. If you want to see videos of these regions in action, visit my lab’s webpage on cardiac anatomy. (http://www.vhlab.umn.edu/atlas/) I scraped the site’s videos and converted them to still frames.
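
For anyone wanting to try the video-to-frames step, here is a minimal sketch, not the exact script used for this project. It assumes the videos are already downloaded locally and that OpenCV (`opencv-python`) is installed; the function names, the one-frame-per-second sampling rate, and the output file naming are all hypothetical choices for illustration.

```python
from pathlib import Path
from typing import List


def frame_indices(total_frames: int, fps: float, every_sec: float = 1.0) -> List[int]:
    """Indices of the frames to keep when sampling one frame every `every_sec` seconds."""
    step = max(1, round(fps * every_sec))  # never step by less than one frame
    return list(range(0, total_frames, step))


def extract_frames(video_path: str, out_dir: str, every_sec: float = 1.0) -> int:
    """Save sampled frames as JPEGs in `out_dir` and return how many were written."""
    import cv2  # imported lazily so frame_indices works without OpenCV installed

    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    cap = cv2.VideoCapture(video_path)
    fps = cap.get(cv2.CAP_PROP_FPS) or 30.0  # assumed fallback if no fps is reported
    total = int(cap.get(cv2.CAP_PROP_FRAME_COUNT))
    written = 0
    for idx in frame_indices(total, fps, every_sec):
        cap.set(cv2.CAP_PROP_POS_FRAMES, idx)  # seek to the frame we want
        ok, frame = cap.read()
        if not ok:
            break
        cv2.imwrite(str(out / f"{Path(video_path).stem}_{idx:06d}.jpg"), frame)
        written += 1
    cap.release()
    return written
```

Sampling sparsely rather than keeping every frame avoids filling the dataset with near-duplicate images from consecutive frames.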

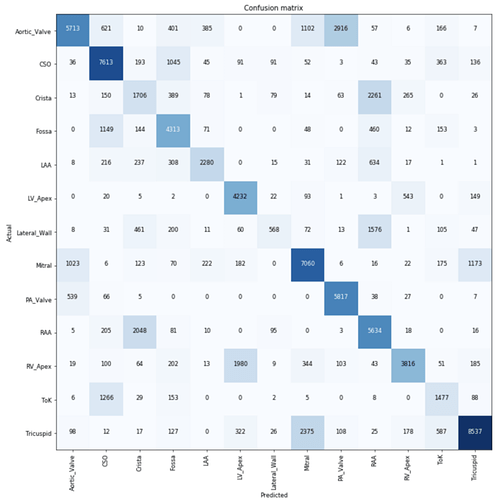

ResNet34 does really well on the problem and reveals some fun things about our dataset, whether it is regions that happen to look similar or regions that are simply present multiple times in the same image.
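
One way to surface which regions get mixed up is to count the misclassified (actual, predicted) pairs on a validation set. The sketch below does this in plain Python. The labels are made up for illustration and are not results from this model. fastai's `ClassificationInterpretation` has a `most_confused` method that gives a similar ranking directly from a trained learner.

```python
from collections import Counter
from typing import List, Tuple


def most_confused(
    actual: List[str], predicted: List[str], min_count: int = 1
) -> List[Tuple[str, str, int]]:
    """Return (actual, predicted, count) for misclassified pairs, most frequent first."""
    pairs = Counter((a, p) for a, p in zip(actual, predicted) if a != p)
    # Sort by descending count, then alphabetically so ties come out in a stable order.
    ranked = sorted(pairs.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(a, p, n) for (a, p), n in ranked if n >= min_count]


# Hypothetical labels, for illustration only.
actual = ["mitral valve", "mitral valve", "tricuspid valve", "aortic valve", "mitral valve"]
predicted = ["tricuspid valve", "tricuspid valve", "tricuspid valve", "aortic valve", "aortic valve"]

for a, p, n in most_confused(actual, predicted):
    print(f"{a} predicted as {p}: {n}")
```

Pairs with high counts are a good place to look for regions that genuinely resemble each other, or for frames where more than one structure is visible.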

Fun stuff! I did this in Keras back in the day. Fastai makes it so much easier and more satisfying.