I am trying to implement a one-cycle scheduler for use at work (where installing fastai isn’t an option) and I just wanted to run by my understanding of the schedule (to clarify that it is correct) as well as ask a few questions I was hoping anyone could help me understand. Thanks in advance!

My understanding: the schedule is separated into two phases, in the first we increase the learning rate linearly from low_lr to max_lr, with max_lr being specified and low_lr being max_lr divided by div_factor. Meanwhile we linearly decrease the momentum from the high to the low value (both specified). In the second phase we decrease the LR from max to low_lr / 10**4 and increase the momentum from low to high value; this along a cosine annealing schedule. The N given in learn.fit_one_cycle(N) specifies the number of epochs the cycle lasts. The pct_start factor determines what fraction of the cycle is spent in phase 1.

My questions:

- When we say ‘momentum’ applied to Adam optimizer, do we mean beta_1 as defined in https://pytorch.org/docs/master/_modules/torch/optim/adam.html#Adam , right?

- I notice the default optimizer to Learner is AdamW (the new weight decay scheduling I’ve been hearing about), but haven’t seen an implementation of it in torch 1.0, also couldnt find anything in the fastai documentation or source code when I searched; can anyone point me in the right direction ?

EDIT: Have found the answer to question 2 in this blog post : http://www.fast.ai/2018/07/02/adam-weight-decay/

Happily the implementation and explanation is clear and concise, recommend the read if you are curious about this.

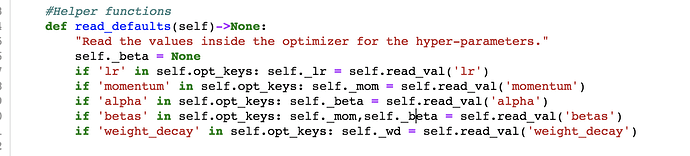

EDIT#2 I have found the answer to question 1 in the source code; in short the answer is yes. The following snipper can be found in the fastai.callback. If ‘betas’ are in the original optimizers param_dict, the first is assigned to self._mom and the second to self._beta. I will leave this post up in case anyone finds it useful!

Very much appreciate any help, thanks!