Hi,

I’m playing with the transforms.py since some time and it is hard to bend it to implement some use cases and it has some glitches that I think could be avoided. Mostly it is hard to get multiple objects augmented at once and it is very hard to undo the transformations for Test Time Augmentation if your labels were transformed.(I’ve mention few use cases at the end of the post)

I would like to suggest an improvement to the current API to allow for a custom function that applies a set of transformations to the output of Dataset.

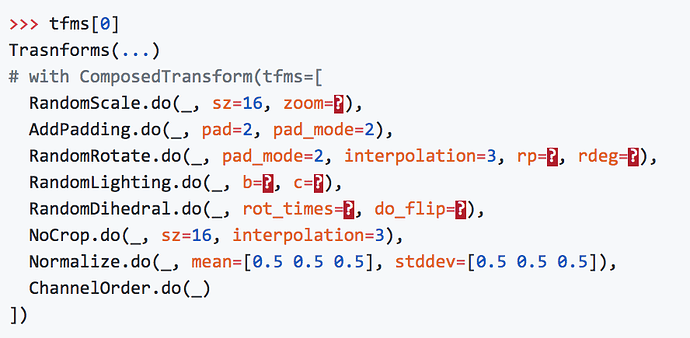

Such function could look as follows:

def mask_n_coord_apply(t, x, labels):

bbs,m = labels

return t(x, TfmType.PIXEL, is_y=False), t(bbs, TfmType.COORD, is_y=True), t(m, TfmType.MASK, is_y=True)

The default function that implements current behavior would look as follow:

def default_apply_transform(self, t, x, y):

if y is None: return t(x, TfmType.PIXEL, is_y=False)

return t(x, TfmType.PIXEL, is_y=False), t(y, self.tfm_y, is_y=True)

The ‘t’ passed to this function would be applying a set of transformation in a deterministic way. ie if such set contains RandomCrop, the cropping box would be fixed during the execution of the apply function.

I’ve made a proposal that reuses current “set_state” to simulate such behavior, and I think I see how we could rewrite the current transformations to get a simpler code and to be able to implement an undo transformation that is needed in TTA.

I’ve presented it as a PR with a working example and with a proposed future API to write transforms.

Jeremy would like to have the conversation take place in the forum so if you are interested in commenting/participating then use this conversation instead of the PR.

Use cases & Glitches:

A

Imagine you want to train yolo / retinanet, and you are given a mask. To avoid issues with bounding box being deformed after rotation the best is to augment the Mask and the Image and then generate a bounding box.

In current API one needs two Datasets to get this working.

Here is how this can be done in proposed solution:

def mask_to_coord_apply(t, im, mask):

im = t(im, TfmType.PIXEL, is_y=False),

mask = t(mask, TfmType.PIXEL, is_y=False),

bb = generate_bb_out_of_multicolor_mask(mask)

return im, (mask, bb)

B

Imagine you want to implement a variation of Unet that predicts Masks and Borders (for easier cutting). It is almost impossible to have Mask and Border transformed together along with the Image. The only way to do this is to write another Dataset and generate a border after all the transformations.

Here is how this can be done in proposed solution:

def mask_n_border_apply(t, im, labels):

mask,borders = labels

im = t(im, TfmType.PIXEL, is_y=False),

mask = t(mask, TfmType.PIXEL, is_y=False),

borders = t(borders, TfmType.PIXEL, is_y=False),

return im, (mask, borders)

C

Imagine you want to predict: masks, bounding boxes and key points (joints, limbs etc), you have your images annotated but I don’t see how you can randomly augment your images in this scenario. Maybe if the key points are set as special pixels on a mask, but then they can be lost in the process.

Here is how this can be done in proposed solution:

def mask_n_border_apply(t, im, labels):

m,bbs,kps = labels

im = t(im, TfmType.PIXEL, is_y=False),

m = t(m, TfmType.PIXEL, is_y=False),

bbs = t(bbs, TfmType.COORD, is_y=False),

kps = t(bbs, TfmType.COORD, is_y=False),

return im, (m,bbs,kps)

D

transform_on_side etc, work only with categorization tasks. For regression, we need to define tfm_y in multiple places.

If we pass TfmType as a parameter to t we won’t need any tfm_y that causes issues.

E

Test TIme Augmentation works wonders but it is very hard to implement for regressive models, with the existing API especially if we use any random transformations.

)

)