I’m interested in using a CNN to predict a scalar value with MSE loss. (Example: a cat’s age from a photo of the cat.)

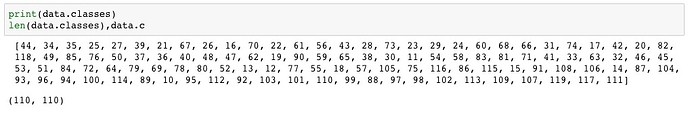

I was hoping to hook into the pre-existing tools, and it seems like modifying the _from_csv or _from_folder methods might be the right way to go. However, the data loaders seem to be strongly label-oriented. Is a scalar regression setup as simple as writing a custom data loader that leaves the scalar value as-is (without converting it into a class label) and then using MSE loss? Or are there other pieces that assume labels?
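
To make the idea concrete, here is a rough, framework-free sketch of what I have in mind. It is not the library's actual loader. The CSV layout, the column names `fname` and `age`, and the helpers `load_regression_targets` and `mse_loss` are all made up for illustration, and the predictions are hard-coded stand-ins for what a single-output CNN head would produce.

```python
import csv
import io

# Hypothetical CSV contents: image file name and the cat's age in years.
CSV_TEXT = """fname,age
cat_001.jpg,2.5
cat_002.jpg,11.0
cat_003.jpg,0.75
"""


def load_regression_targets(csv_file):
    """Return (file names, float targets) without mapping targets to class indices."""
    reader = csv.DictReader(csv_file)
    fnames, targets = [], []
    for row in reader:
        fnames.append(row["fname"])
        targets.append(float(row["age"]))  # keep the scalar as-is, no label encoding
    return fnames, targets


def mse_loss(preds, targets):
    """Mean squared error over paired predictions and targets."""
    assert len(preds) == len(targets)
    return sum((p - t) ** 2 for p, t in zip(preds, targets)) / len(targets)


fnames, targets = load_regression_targets(io.StringIO(CSV_TEXT))
preds = [3.0, 10.0, 1.0]  # stand-in for the CNN's single-output predictions
loss = mse_loss(preds, targets)
print(fnames, targets, loss)
```

In a real setup I would expect the float targets to be batched into a tensor and passed to the framework's own MSE loss, with the network's final layer producing one output instead of one per class.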