Please post your questions about the lesson here. This is a wiki post. Please add any resources or tips that are likely to be helpful to other students.

<<< Wiki: Lesson 8 | Wiki: Lesson 10 >>>

Lesson resources

- Lesson video

- Pandas data processing pipeline, with thanks to @binga

- Lesson notes from @hiromi

- Lesson notes from @timlee

Papers

- Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks

- You Only Look Once: Unified, Real-Time Object Detection

- SSD: Single Shot MultiBox Detector

- Focal Loss for Dense Object Detection

- Speed/accuracy trade-offs for modern convolutional object detectors

- YOLOv3 plus PyTorch implementation

- Understanding the Effective Receptive Field in Deep Convolutional Neural Networks

You should understand these by now

- Pathlib

- JSON

- Dictionary comprehensions - Tutorials

- defaultdict

- How to jump around fastai source - Visual Studio Code / Sourcegraph / PyCharm

- matplotlib object-oriented API - Python Plotting with Matplotlib (Guide)

- lambda functions

- bounding box co-ordinates

- custom head and bounding box regression

- everything in Part 1
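The Lesson 8 tools listed above (pathlib, JSON, dictionary comprehensions, defaultdict, lambda functions, bounding box coordinates) all come together when reading the Pascal VOC annotations. Below is a minimal sketch, assuming a COCO-style JSON layout with images, categories and annotations keys, bbox stored as [x, y, width, height], and an ignore flag. The sample file contents and the helpers xywh_to_tlbr and load_annotations are hypothetical illustrations, not the notebook's code.

```python
import json
import tempfile
from collections import defaultdict
from pathlib import Path

# Hypothetical miniature annotation file (assumed COCO-style layout).
# bbox is [x, y, width, height] in pixels.
SAMPLE = {
    "images": [{"id": 12, "file_name": "000012.jpg"}],
    "categories": [{"id": 7, "name": "car"}, {"id": 15, "name": "person"}],
    "annotations": [
        {"image_id": 12, "bbox": [155, 96, 196, 174], "category_id": 7, "ignore": 0},
        {"image_id": 12, "bbox": [10, 20, 30, 40], "category_id": 15, "ignore": 0},
        {"image_id": 12, "bbox": [0, 0, 5, 5], "category_id": 15, "ignore": 1},
    ],
}

def xywh_to_tlbr(bb):
    """Hypothetical helper: [x, y, w, h] -> [top, left, bottom, right], inclusive pixels."""
    x, y, w, h = bb
    return [y, x, y + h - 1, x + w - 1]

def load_annotations(path):
    data = json.loads(path.read_text())                             # JSON -> dicts/lists
    cats = {c["id"]: c["name"] for c in data["categories"]}         # dict comprehension
    fnames = {im["id"]: im["file_name"] for im in data["images"]}
    trn_anno = defaultdict(list)                                    # missing keys start as []
    for o in data["annotations"]:
        if not o["ignore"]:
            trn_anno[o["image_id"]].append((xywh_to_tlbr(o["bbox"]), o["category_id"]))
    return cats, fnames, trn_anno

with tempfile.TemporaryDirectory() as d:
    path = Path(d) / "pascal_train2007.json"                        # "/" joins path parts
    path.write_text(json.dumps(SAMPLE))
    cats, fnames, trn_anno = load_annotations(path)

# A lambda as the sort key: pick the annotation with the largest box area.
largest = max(trn_anno[12], key=lambda o: (o[0][2] - o[0][0]) * (o[0][3] - o[0][1]))
print(cats[largest[1]], largest[0])
```

Storing boxes as top/left/bottom/right makes later steps, such as cropping and area comparisons, simple index arithmetic.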

Timeline

- (0:00:01) Object detection Approach

- (0:00:55) What you should know by now

- (0:01:40) What you should know from Part 1 of the course - model input & model output

- (0:03:00) Working through pascal notebook

- (0:03:20) Data Augmentations

- (0:03:35) Image classifier with continuous=True explained

- (0:04:40) Creating data augmentations

- (0:05:55) Showing bounding boxes on pictures with augmentations

- (0:06:25) Why we need to transform the bounding box

- (0:07:15) tfm_y parameter

- (0:09:45) Running summary model

- (0:10:40) Putting together the 2 pieces done last time

- (0:10:45) Things needed to train a neural network

- (0:12:00) Creating data by concatenating

- (0:13:40) Using the new datasets created

- (0:14:00) Creating the architecture

- (0:15:50) Creating Loss function

- (0:18:00) BatchNorm before or after ReLU

- (0:19:25) Dropout after BatchNorm

- (0:21:50) Detection accuracy

- (0:22:50) L1 loss is better when doing bounding box regression and classification at the same time

- (0:25:30) Multi-label classification

- (0:26:25) Pandas defaultdict alternative

- (0:27:10) Reusing smaller models as pre-trained weights for larger models

- (0:29:15) Architecture for going from a largest-object detector to a 16-object detector

- (0:33:48) YOLO, SSD

- (0:35:05) 2x2 Grid

- (0:37:31) Receptive fields

- (0:41:20) Back to architecture

- (0:41:40) SSD Head code

- (0:42:40) Research code copy-paste problem

- (0:43:00) fastai style guide

- (0:44:42) Reusing code. Back to SSD code

- (0:45:15) OutConv - 2 conv layers for 2 tasks that we are doing

- (0:47:20) Flattening the outputs of the convolutions

- (0:47:52) What the loss function needs, explained

- (0:48:36) Difficulty in the matching problem

- (0:49:25) Break and problem statement for matching problem

- (0:50:00) Goal for matching problem with visuals

- (0:51:50) Running the architecture code line by line on the validation set

- (0:55:00) anchor boxes, prior boxes, default boxes

- (0:55:23) Matching problem

- (0:55:43) Jaccard index, Jaccard overlap, or IoU (intersection over union)

- (0:57:35) anchors, overlaps

- (1:00:00) Map to ground truth function

- (1:01:50) Seeing the classes each anchor box should be predicting

- (1:03:15) Seeing the bounding boxes

- (1:04:16) Interpret the activations

- (1:05:36) Binary cross entropy loss

- (1:09:55) SSD loss function

- (1:13:52) Create more anchor boxes

- (1:14:10) Anchor boxes vs bounding boxes

- (1:14:45) Create more anchor boxes

- (1:15:25) Why we are not multiplying the categorical loss by a constant

- (1:17:20) code for generating more anchor boxes

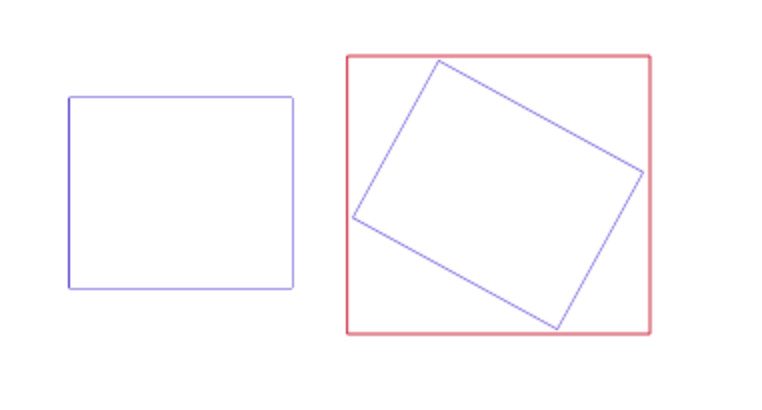

- (1:17:59) Diagram - how object detection maps to neural net approach

- (1:19:50) Rachel - The challenge is making the architecture

- (1:20:15) Jeremy - There are only 2 architectures

- (1:20:35) Rachel - The challenge is with anchor boxes

- (1:20:48) Jeremy - It is entirely in the loss function of SSD

- (1:21:08) Forget the architecture, focus on the loss function

- (1:22:16) Matching problem

- (1:23:14) We are using SSD not YOLO so matching problem is easier

- (1:23:49) The easier way would have been to teach YOLO first, then go to SSD

- (1:24:25) The loss function needs to give each output a consistent task

- (1:24:45) Question - is it a coincidence that the 4 by 4 grid has the same number of cells as the 16 object detectors?

- (1:25:16) Part 2 is going to assume you are comfortable with Part 1

- (1:26:41) Explaining multiple anchor boxes as the next step from last lesson

- (1:27:46) Code for detection loss function

- (1:28:32) This class is by far going to be the most conceptually challenging

- (1:29:40) For every grid cell, boxes of different sizes, orientations, and zooms

- (1:30:15) Convolutional layer does not need that many filters

- (1:30:56) Need to know k = number of zooms × number of aspect ratios

- (1:31:13) Architecture - Number of stride 2 convolutions

- (1:31:43) We grab a set of outputs from the convolutions

- (1:32:20) Concatenate all outputs

- (1:32:53) Criterion

- (1:33:01) Pictures after training - big objects are OK, small ones are not

- (1:33:55) History of Object detection

- (1:34:05) MultiBox method paper - matching problem introduced

- (1:34:30) Trying to figure out how to make this better

- (1:34:41) R-CNN - 2-stage network combining computer vision and deep learning

- (1:36:09) YOLO and SSD - same performance with 1 stage

- (1:37:08) Focal loss (RetinaNet) - figured out why the mess of boxes was happening

- (1:38:48) Question - with a 4 by 4 grid of receptive fields and 1 anchor box each, why do we need more anchor boxes?

- (1:40:38) Focal Loss for Dense Object Detection

- (1:41:00) Picture of probability of ground truth vs loss

- (1:41:45) Importance of the picture - why the mess was happening

- (1:44:05) Not blue but blue or purple loss

- (1:45:01) Discussing the fantastic paper

- (1:46:15) Cross entropy

- (1:48:09) Dealing with class imbalance

- (1:49:18) A great paper to read to see how papers should be written

- (1:49:45) Focal Loss function code

- (1:51:00) Paper tables for variable values

- (1:52:00) Last step - figure out how to pull out the interesting parts

- (1:52:48) NMS (non-maximum suppression) - copied code

- (1:53:50) Lesson 14 Feature pyramids

- (1:54:15) From a 2-part, complicated approach to a single deep learning network

- (1:55:42) SSD paper model description

- (2:01:30) Reading back through citations
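The anchor box part of the timeline (from 1:13:52, and k at 1:30:56) boils down to placing k = zooms × aspect ratios boxes on every cell of several grids. Here is a minimal pure-Python sketch with anchors as (center y, center x, height, width) in fractions of the image. The grid sizes 4, 2, 1 and the zoom and aspect-ratio values are illustrative assumptions, and make_anchors is a hypothetical helper, not the notebook's code.

```python
from itertools import product

def make_anchors(grid_sizes, zooms, ratios):
    """Hypothetical helper. Returns (k, anchors); each anchor is (cy, cx, h, w)
    in fractions of the image, listed grid by grid, cell by cell."""
    # One (height, width) scale per zoom/aspect-ratio pair: k = len(zooms) * len(ratios).
    scales = [(z * rh, z * rw) for z in zooms for (rh, rw) in ratios]
    k = len(scales)
    anchors = []
    for g in grid_sizes:
        cell = 1.0 / g                                   # side of one grid cell
        for row, col in product(range(g), range(g)):
            cy, cx = (row + 0.5) * cell, (col + 0.5) * cell
            for sh, sw in scales:
                anchors.append((cy, cx, sh * cell, sw * cell))
    return k, anchors

k, anchors = make_anchors(
    grid_sizes=[4, 2, 1],
    zooms=[0.7, 1.0, 1.3],
    ratios=[(1.0, 1.0), (1.0, 0.5), (0.5, 1.0)],
)
print(k, len(anchors))
```

With these values there are (16 + 4 + 1) × 9 anchors in total. An output convolution predicting k anchors per cell therefore needs 4 × k channels for the box offsets, plus k channels per class score.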

Other resources

Blog posts

- Understanding SSD MultiBox — Real-Time Object Detection In Deep Learning

- Deep Learning for Object Detection: A Comprehensive Review

- The effective receptive field on CNNs

- The Modern History of Object Recognition — Infographic

- SSD Multibox In Plain English

- RetinaNet: how Focal Loss fixes Single-Shot Detection
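The focal loss discussed in the lesson (from 1:40:38) rescales binary cross entropy by (1 - p_t)^gamma, so easy, well-classified examples stop dominating the total. Below is a minimal scalar sketch of the formula FL(p_t) = -alpha_t (1 - p_t)^gamma log(p_t) from the Focal Loss paper. The defaults gamma = 2 and alpha = 0.25 are the values the paper reports working best. This is an illustration, not the lesson's FocalLoss class, and the eps clamp is an added assumption to avoid log(0).

```python
import math
from typing import Optional

def _p_t(p: float, y: int, eps: float = 1e-7) -> float:
    """Probability assigned to the true class, clamped away from 0 and 1."""
    p_t = p if y == 1 else 1.0 - p
    return min(max(p_t, eps), 1.0 - eps)

def binary_cross_entropy(p: float, y: int) -> float:
    return -math.log(_p_t(p, y))

def focal_loss(p: float, y: int, gamma: float = 2.0, alpha: Optional[float] = 0.25) -> float:
    """FL(p_t) = -alpha_t * (1 - p_t)**gamma * log(p_t) for one binary prediction.

    p is the predicted probability of the positive class, y is the 0/1 target.
    alpha=None disables the class-balancing weight (alpha_t = 1).
    """
    p_t = _p_t(p, y)
    alpha_t = 1.0 if alpha is None else (alpha if y == 1 else 1.0 - alpha)
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)

# Compare plain BCE with focal loss (no alpha weighting) for a positive target.
for p in (0.1, 0.5, 0.9):
    print(p, round(binary_cross_entropy(p, 1), 4), round(focal_loss(p, 1, alpha=None), 4))
```

With gamma = 2, a confident correct prediction (p = 0.9) has its loss multiplied by (1 - 0.9)^2 = 0.01. A badly wrong one (p = 0.1) keeps 81% of its loss. Setting gamma = 0 and alpha=None recovers ordinary binary cross entropy.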

Videos

Stanford CS231N

- CS231N S2016 L8 — Localization and Detection

- CS231N W2017 L11 — Detection and Segmentation

Coursera Andrew Ng videos:

- Object Detection

- Bounding Box Predictions

- Intersection Over Union

- Non-Max Suppression (NMS)

- Anchor Boxes

- YOLO Algorithm
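Intersection over union (the Jaccard index) and non-maximum suppression, both covered in the videos above and in the lesson, fit in a few lines. Below is a minimal greedy sketch, assuming boxes are (x1, y1, x2, y2) tuples with x2 > x1 and y2 > y1. The iou_threshold of 0.5 is an illustrative default, not a value taken from the lesson.

```python
def iou(a, b):
    """Jaccard index of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)   # 0 if the boxes don't overlap
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: visit boxes from highest to lowest score and keep a box only if
    it overlaps every already-kept box by at most iou_threshold. Returns indices."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep

boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
print(nms(boxes, scores))  # → [0, 2]: box 1 overlaps box 0 with IoU 81/119 and is suppressed
```

This greedy loop is quadratic in the number of boxes. That is fine for a few hundred anchors, but library implementations vectorize the IoU computation.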

Other videos

- YOLO CVPR 2016 talk – covers the idea of using grid cells and treating detection as a regression problem in more detail.

- YOLOv2 talk – there is some good information in this talk, although some drawn explanations are omitted from the video. What I found interesting was the bit on learning anchor boxes from the dataset. There’s also the crossover with NLP at the end.

- Focal Loss ICCV17 talk

Other Useful Information

- Understanding Anchors - by @hiromi

- Receptive Field Calculator

- A guide to receptive field arithmetic for CNNs

- Conv Arithmetic Tutorial
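The receptive field arithmetic in the guide above reduces to two recurrences per conv layer with kernel k and stride s. The receptive field grows as r_out = r_in + (k - 1) · j_in, and the jump (the distance between adjacent outputs, in input units) grows as j_out = j_in · s. Output size follows n_out = floor((n_in + 2p - k) / s) + 1. Below is a minimal sketch; the helper names and the 7×7 → 4×4 → 2×2 → 1×1 example of stride-2, 3×3, padding-1 convolutions are assumptions modelled on the SSD head discussed in the lesson. It computes the theoretical receptive field. The effective receptive field paper listed under Papers argues that the region that actually influences an output is smaller.

```python
def conv_out_size(n, k, s, p):
    """Spatial size after a conv with kernel k, stride s, padding p."""
    return (n + 2 * p - k) // s + 1

def receptive_fields(layers, n_in):
    """layers: list of (kernel, stride, padding). Returns (size, jump, rf) after
    each layer, with jump and rf measured in units of the first layer's input."""
    n, j, r = n_in, 1, 1
    out = []
    for k, s, p in layers:
        n = conv_out_size(n, k, s, p)
        r = r + (k - 1) * j    # each extra kernel tap adds one current jump
        j = j * s              # stride spreads adjacent outputs further apart
        out.append((n, j, r))
    return out

# Three stride-2 3x3 convs on a 7x7 feature map (assumed SSD-head-like setup).
for size, jump, rf in receptive_fields([(3, 2, 1)] * 3, 7):
    print(f"{size}x{size} grid, jump {jump}, receptive field {rf} cells")
```

Here the receptive field is counted in cells of the 7×7 input map, and padding lets it exceed the map's own width.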

Frequently sought pieces of information in this thread