I am using an Azure Data Science Virtual Machine which has a P100 GPU.

This is what I am doing:

- Loading DenseNet201 with `precompute=True`
- `bs = 64` (I also tried 400, 300, 200 and 100); GPU memory usage is about 4 GB of the 16 GB available
- `sz = 399`
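For scale, here is a rough estimate of how large a single input batch is as a float32 array at `sz = 399`. It's only a back-of-the-envelope sketch: it assumes 3-channel images and 4 bytes per value, and it ignores data-loader copies, pinned-memory buffers and augmentation intermediates, so real usage will be higher.

```python
# Rough size of one image batch held as a float32 array.
# Assumptions: 3-channel images resized to sz x sz, 4 bytes per value.
# Loader copies, pinned buffers and augmentation intermediates are NOT counted.

BYTES_PER_FLOAT32 = 4


def batch_bytes(bs: int, sz: int, channels: int = 3) -> int:
    """Bytes needed to hold a (bs, channels, sz, sz) float32 array."""
    return bs * channels * sz * sz * BYTES_PER_FLOAT32


def to_mib(n_bytes: int) -> float:
    """Convert bytes to mebibytes."""
    return n_bytes / 2**20


if __name__ == "__main__":
    # The batch sizes I tried, all at sz=399
    for bs in (64, 100, 200, 300, 400):
        print(f"bs={bs:>3}, sz=399: {to_mib(batch_bytes(bs, 399)):8.1f} MiB")
```

By this estimate one batch is roughly 117 MiB at `bs = 64` and roughly 729 MiB at `bs = 400`, before any extra copies.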

I am getting a `MemoryError`, even though I am using only about 4 GB of the 16 GB of GPU memory available to me. Can anyone help me with this?

Stack Trace:

MemoryError Traceback (most recent call last)

in ()

----> 1 learn = ConvLearner.pretrained(arch, data, precompute=True, ps=0.5, xtra_fc=[10000, 10000])

~/fastai/courses/dl1/fastai/conv_learner.py in pretrained(cls, f, data, ps, xtra_fc, xtra_cut, precompute, **kwargs)

96 def pretrained(cls, f, data, ps=None, xtra_fc=None, xtra_cut=0, precompute=False, **kwargs):

97 models = ConvnetBuilder(f, data.c, data.is_multi, data.is_reg, ps=ps, xtra_fc=xtra_fc, xtra_cut=xtra_cut)

—> 98 return cls(data, models, precompute, **kwargs)

99

100 @property

~/fastai/courses/dl1/fastai/conv_learner.py in init(self, data, models, precompute, **kwargs)

89 elif self.metrics is None:

90 self.metrics = [accuracy_thresh(0.5)] if self.data.is_multi else [accuracy]

—> 91 if precompute: self.save_fc1()

92 self.freeze()

93 self.precompute = precompute

~/fastai/courses/dl1/fastai/conv_learner.py in save_fc1(self)

141 m=self.models.top_model

142 if len(self.activations[0])!=len(self.data.trn_ds):

–> 143 predict_to_bcolz(m, self.data.fix_dl, act)

144 if len(self.activations[1])!=len(self.data.val_ds):

145 predict_to_bcolz(m, self.data.val_dl, val_act)

~/fastai/courses/dl1/fastai/model.py in predict_to_bcolz(m, gen, arr, workers)

11 lock=threading.Lock()

12 m.eval()

—> 13 for x,*_ in tqdm(gen):

14 y = to_np(m(VV(x)).data)

15 with lock:

~/.conda/envs/fastai/lib/python3.6/site-packages/tqdm/_tqdm.py in iter(self)

953 “”", fp_write=getattr(self.fp, ‘write’, sys.stderr.write))

954

–> 955 for obj in iterable:

956 yield obj

957 # Update and possibly print the progressbar.

~/fastai/courses/dl1/fastai/dataset.py in next(self)

306 if self.i>=len(self.dl): raise StopIteration

307 self.i+=1

–> 308 return next(self.it)

309

310 @property

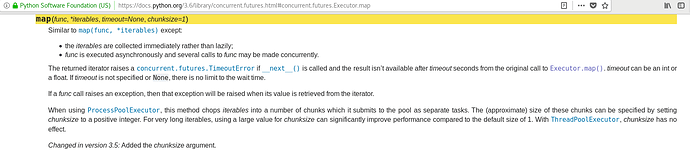

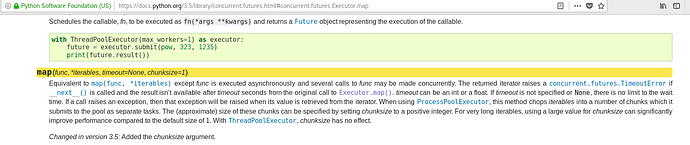

~/fastai/courses/dl1/fastai/dataloader.py in iter(self)

74 with ThreadPoolExecutor(max_workers=self.num_workers) as e:

75 for batch in e.map(self.get_batch, iter(self.batch_sampler)):

—> 76 yield get_tensor(batch, self.pin_memory)

77

~/fastai/courses/dl1/fastai/dataloader.py in get_tensor(batch, pin)

35 return {k: get_tensor(sample, pin) for k, sample in batch.items()}

36 elif isinstance(batch, collections.Sequence):

—> 37 return [get_tensor(sample, pin) for sample in batch]

38 raise TypeError("batch must contain numbers, dicts or lists; found {}"

39 .format(type(batch)))

~/fastai/courses/dl1/fastai/dataloader.py in (.0)

35 return {k: get_tensor(sample, pin) for k, sample in batch.items()}

36 elif isinstance(batch, collections.Sequence):

—> 37 return [get_tensor(sample, pin) for sample in batch]

38 raise TypeError("batch must contain numbers, dicts or lists; found {}"

39 .format(type(batch)))

~/fastai/courses/dl1/fastai/dataloader.py in get_tensor(batch, pin)

29 def get_tensor(batch, pin):

30 if isinstance(batch, (np.ndarray, np.generic)):

—> 31 batch = T(batch).contiguous()

32 return batch.pin_memory() if pin else batch

33 elif isinstance(batch, string_classes): return batch

~/fastai/courses/dl1/fastai/core.py in T(a)

11 if torch.is_tensor(a): res = a

12 else:

—> 13 a = np.array(np.ascontiguousarray(a))

14 if a.dtype in (np.int8, np.int16, np.int32, np.int64):

15 res = torch.LongTensor(a.astype(np.int64))

MemoryError:
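Two things in the trace may matter. The error is raised by NumPy (`np.ascontiguousarray` in `core.py`) while the batch is still a CPU array, and `MemoryError` is Python's exception for a failed host allocation (a CUDA out-of-memory error in PyTorch surfaces as a `RuntimeError` instead), so the 4 GB GPU figure may not be the relevant number. Also, `dataloader.py` iterates over `e.map(self.get_batch, iter(self.batch_sampler))`, and `concurrent.futures.Executor.map` collects its input iterable immediately rather than lazily. The stdlib-only sketch below checks that behaviour; `load_batch` and `sampler` are hypothetical stand-ins, not fastai code. I haven't confirmed that this is what exhausts RAM here, but it means every batch is submitted up front and finished batches can sit in memory until they are consumed.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def load_batch(i):
    """Hypothetical stand-in for DataLoader.get_batch: pretend to decode a batch."""
    time.sleep(0.001)
    return i


def batches_pulled_before_first_read(n_batches=20, workers=4):
    """Count how many indices Executor.map took from the sampler
    before the caller read a single result."""
    pulled = []

    def sampler():
        # Hypothetical stand-in for iter(self.batch_sampler)
        for i in range(n_batches):
            pulled.append(i)
            yield i

    with ThreadPoolExecutor(max_workers=workers) as e:
        results = e.map(load_batch, sampler())
        count = len(pulled)  # measured before consuming any result
        list(results)  # drain the results so the executor shuts down cleanly
    return count


if __name__ == "__main__":
    print(batches_pulled_before_first_read())  # 20: every batch was submitted up front
```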

Thanks in advance!