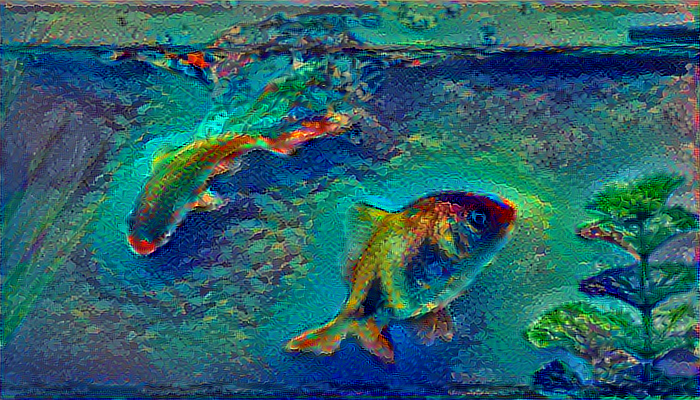

On second thought, blurring it a bit may give a better output.

This is what I got for blurring the original image using a Gaussian Filter (size=2).

It has no much obvious difference other than the region between the fishes showing a broken pattern, which I kind of like.

Yeah I think that pattern is much more interesting.

With all these things to tune, it makes me think that there’s room to create a more interactive web app that let’s the user try out lots of knobs and dials to see what looks good. The trick would be so somehow give a very rapid approximate answer so that the user can try lots of ideas quickly…

Can I also point out a questionable statement in the neural-style notebook:

The key difference is our choice of loss function.

There really is no difference in the loss function. It still is MSE.

I think what would be possibly more clear would be , ‘The key difference is transforming our raw convolutional output using a Gram matrix before we use MSE’.

Just an idea. Thanks.

EDIT! After some further thought, saying that “The” key difference implies that there is only one key difference between the prior content of the notebook and what is to follow. It seems that there are specifically 2 key differences – the introduction of the Gram matrix technique and the method of summing 3 sets of activations (i.e. each of the targs).

That’s just one part of the loss function. The full loss function is the layers of computation to create the activations, the gram matrices, etc. Just because MSE is the bit that we’re handing off to keras’ fit function at the end of all that, doesn’t mean that’s the only thing that’s the loss function…

As I’ll show in the next class, the latter is neither necessary not sufficient for style transfer. The use of the gram matrix (or something similar) is the only necessary piece for making the loss function do style transfer.

After significant experimentation I found much more interesting results when I changed the ratio of style loss to content loss by a few orders of magnitude and allowed the style starting point to slowly converge to the content image.

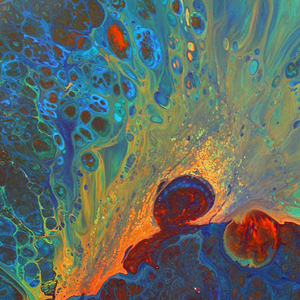

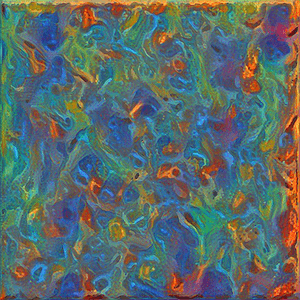

As an example the style layer below (my own artwork):

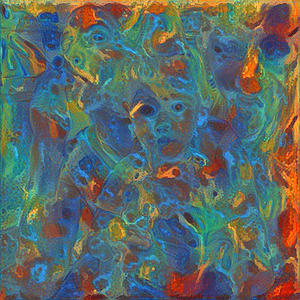

produces results that I would say match the palette but not the style of the image:

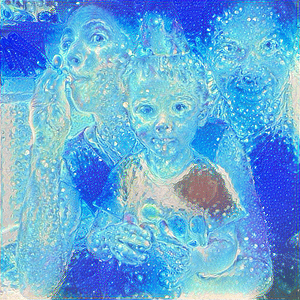

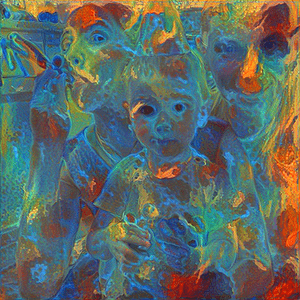

But when I modified the relative weighting of the content to be /2000 rather than /50 the results are (at least to my eye) much more indicative of the original style.

To get the final image I had to train for ~75 epochs, as opposed to the 10 for the first image, and the first few images didn’t really look anything like the content image, but by image 3 or 4 the faces began to emerge.

Here’s my loss function for anyone interested if they want to try it out for themselves:

loss = sum(style_loss(l1[0], l2[0]) for l1,l2 in zip(style_layers, style_targs))

loss += metrics.mse(block4_conv2, content_targ)/1000.

loss += metrics.mse(block3_conv3, second_content_targ)/2000.

I did find that it wasn’t necessary for a number of style images, particularly those that were made up mainly of repeating patterns but for the most styles I consistently got better results with a loss function that focused on style and had a content loss factor that was several orders of magnitude lower than our starting point.

I’ve read another paper that suggests that this ratio could be a parameter of the network, although I’m not sure I understand how that would be possible as my understanding of this space is that we want a fixed loss function and that would change it.

Given that the output depends dramatically on that ratio it would be nice if there were some way of automatically calculating the ratio though.

Here’s another example of the same image for fun:

It’s worth noting that I tried this with much more sparse line drawing style images and the results were terrible, so it could just be that the level of texture and detail dictate the ratio and that could be a reasonable way of calculating it automatically.

Could you explain this bit more?

Sure, i’ll give it a shot… It’s easiest understood when looking at images.

With a content loss to style loss ratio of 1:10 the image generated by the first epoch is:

which already contains a lot of the details and by epoch 10 we have an image that looks like:

If we set the ratio of content to style to 1:100 we have an initial image that looks like our first style image:

and within a few epochs (3 in this case) we begin to see the content details:

and if we continue training for 25 epochs we still end up with an image that converges closer to the content, although it’s a little more interesting and looks like:

If we set our content to style ratio to 1:1000 we have an initial set of images that look nothing like our content image, for example this is epoch 5’s output:

but as we continue training we begin to see the content image begin to appear. Here is epoch 10:

And here is epoch 25:

Training longer (75 epochs) produced the image I posted above.

I found it really interesting how much that ratio impacted the final results.

OK I see what you’re saying I think: set the style/content ratio high, and then train for a larger number of iterations.

Thanks for the explanation.

Exactly. For highly textured and complex images (or at least for my own art) that seems to produce images that are much closer to what I’d call the ‘style’ of the original.

I think what’s happening is that the slope of the content loss results in an image that is a local minima much earlier and that if you want to avoid this and find a more stylistic image you have to decrease the ratio in order to force the search to happen in the style gradients first. At least that’s my intuition.

Conversely if the style image is really simple it seems like a low content to style ratio works better.

I’m still interested in how to do this automatically. I read a paper that talked about the ratio as an input to the net, but i’m not sure that I like that solution both because the loss function becomes a changing one and because the minimum error doesn’t necessarily correspond to the image that’s going to be the right blend of style and content. I think the rule of thumb of low ratios for simple style and high ratios for complex style is a reasonable starting point and could definitely be automated, but there’s probably a more elegant solution.

That would be a very interesting avenue of inquiry… Tricky to know how to proceed, since I’m not even sure how to measure success; it seems like a purely qualitative assessment.

I’m thinking it might be possible to add the difference between the complexity measure of the generated image and the style image to the loss function.

http://vintage.winklerbros.net/Publications/qomex2013si.pdf contains a pretty straightforward complexity measure; essentially the mean pixel value after applying x and y sobel filters to the images.

If I have any time after finishing the lesson 9 homework I might give it a try.

I’m trying to use your code to do a luminance transfer, but I was wondering how you loaded the “_lum.jpg” images into the model. The VGG model expects the input shape to have the three RGB channels, and the lum images have a different shape.

I tried a couple of things, including resaving the image as JPG hoping that would add back the channels, but that didn’t seem to work either.

print("ORIGINAL IMAGE")

img = imread('pictures/400_wedding.jpg')

print(img.shape)

print("CONVERSION TO LUV")

lum = rgb2luv(img)[...,0]

print(lum.shape)

print("SAVE AND LOAD")

imsave('pictures/test.jpg', lum, format='jpeg')

img = imread('pictures/test.jpg')

print(img.shape)

ORIGINAL IMAGE

(400, 400, 3)

CONVERSION TO LUV

(400, 400)

SAVE AND LOAD

(400, 400)

Any pointers on how to get this to work?

Thanks!

Jon

Couple things you can do (posted from phone and not proof read so caveat emptor):

skimage.color.gray2rgb - will work with normal VGG. There are similar functions in PIL and opencv if you’re using those.

np.repeat(np.expand_dims(img, -1), 3, axis=-1) will work too.

Or if you’re going to be working with a lot of grayscale images, you can modify the VGG model. If you sum the weights (+/- biases) along the channel axis of the first conv layer you’ll get the weights for grayscale VGG.

w, = first_conv_layer.get_weights().sum(axis=-1)

Why are we dividing the style image size by 3.5 and in the simpson style image we are dividing it by 2.7? @jeremy

From what I remember, the original Starry Night image was pretty big. Jeremy divided by some number (3.5) that brought it down in size to something closer to the content image. I’m guessing the Simpson style image was also pretty big, but not quite as big, and so it was only divided by 2.7. A heuristic is probably to get the style image close to the resolution of your content image (although, it might be a fun experiment to use style images with very different resolutions than the content image).

Hi @resdntalien, I think the random image that we start with has to be of same size as content image.Because content loss requires both of them to be same shape.But in case of style loss, the random image need not have the same size as style image as long as they are considered from the same layer.This is because we are using gram matrix which will have dimensions of num_filtersxnum_filters

Hi Jeremy, I’m going through the MOOC right now and I’m getting a similar issue but when I remove the /100 it still doesn’t work. My code is here: https://github.com/zaoyang/fast_ai_course/tree/master/part2_orig

https://github.com/zaoyang/fast_ai_course/blob/master/part2_orig/neural-style.ipynb

The only thing I have different is the requirements with Keras and I did some minor tweaks to make it work with the latest Keras. Any idea what’s wrong?

In requirements.txt, it has my environment. I’m tried this locally on Mac and on the AWS server with Ubuntu.

Conda environment:

PIP environment:

Both get this yellow image issues. Is this an environment issue or code issue?

@jeremy The other issues I’m running into is the following. This is happening on both Ubuntu and Mac as well. I didn’t change anything. So I’m not sure if this is an issue with changing from Theano to TF or another issues. Online it says that it’s bad initalizations of shapes but not sure what the issue could be.

indent preformatted text by 4 spaces

---------------------------------------------------------------------------

InvalidArgumentError Traceback (most recent call last)

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/client/session.py in _do_call(self, fn, *args)

1038 try:

-> 1039 return fn(*args)

1040 except errors.OpError as e:

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/client/session.py in _run_fn(session, feed_dict, fetch_list, target_list, options, run_metadata)

1020 feed_dict, fetch_list, target_list,

-> 1021 status, run_metadata)

1022

~/anaconda3/envs/ml_py3/lib/python3.6/contextlib.py in __exit__(self, type, value, traceback)

88 try:

---> 89 next(self.gen)

90 except StopIteration:

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/framework/errors_impl.py in raise_exception_on_not_ok_status()

465 compat.as_text(pywrap_tensorflow.TF_Message(status)),

--> 466 pywrap_tensorflow.TF_GetCode(status))

467 finally:

InvalidArgumentError: Incompatible shapes: [64] vs. [128]

[[Node: gradients_1/add_1_grad/BroadcastGradientArgs = BroadcastGradientArgs[T=DT_INT32, _class=["loc:@add_1"], _device="/job:localhost/replica:0/task:0/cpu:0"](gradients_1/add_1_grad/Shape, gradients_1/add_1_grad/Shape_1)]]

During handling of the above exception, another exception occurred:

InvalidArgumentError Traceback (most recent call last)

<ipython-input-71-d733ab4b440a> in <module>()

----> 1 x = solve_image(evaluator, iterations, x)

<ipython-input-27-99c5991ad031> in solve_image(eval_obj, niter, x)

2 for i in range(niter):

3 x, min_val, info = fmin_l_bfgs_b(eval_obj.loss, x.flatten(),

----> 4 fprime=eval_obj.grads, maxfun=20)

5 x = np.clip(x, -127,127)

6 print('Current loss value:', min_val)

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/scipy/optimize/lbfgsb.py in fmin_l_bfgs_b(func, x0, fprime, args, approx_grad, bounds, m, factr, pgtol, epsilon, iprint, maxfun, maxiter, disp, callback, maxls)

191

192 res = _minimize_lbfgsb(fun, x0, args=args, jac=jac, bounds=bounds,

--> 193 **opts)

194 d = {'grad': res['jac'],

195 'task': res['message'],

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/scipy/optimize/lbfgsb.py in _minimize_lbfgsb(fun, x0, args, jac, bounds, disp, maxcor, ftol, gtol, eps, maxfun, maxiter, iprint, callback, maxls, **unknown_options)

326 # until the completion of the current minimization iteration.

327 # Overwrite f and g:

--> 328 f, g = func_and_grad(x)

329 elif task_str.startswith(b'NEW_X'):

330 # new iteration

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/scipy/optimize/lbfgsb.py in func_and_grad(x)

276 else:

277 def func_and_grad(x):

--> 278 f = fun(x, *args)

279 g = jac(x, *args)

280 return f, g

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/scipy/optimize/optimize.py in function_wrapper(*wrapper_args)

290 def function_wrapper(*wrapper_args):

291 ncalls[0] += 1

--> 292 return function(*(wrapper_args + args))

293

294 return ncalls, function_wrapper

<ipython-input-25-95afa49bd58f> in loss(self, x)

3

4 def loss(self, x):

----> 5 loss_, self.grad_values = self.f([x.reshape(self.shp)])

6 return loss_.astype(np.float64)

7

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/keras/backend/tensorflow_backend.py in __call__(self, inputs)

2227 session = get_session()

2228 updated = session.run(self.outputs + [self.updates_op],

-> 2229 feed_dict=feed_dict)

2230 return updated[:len(self.outputs)]

2231

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/client/session.py in run(self, fetches, feed_dict, options, run_metadata)

776 try:

777 result = self._run(None, fetches, feed_dict, options_ptr,

--> 778 run_metadata_ptr)

779 if run_metadata:

780 proto_data = tf_session.TF_GetBuffer(run_metadata_ptr)

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/client/session.py in _run(self, handle, fetches, feed_dict, options, run_metadata)

980 if final_fetches or final_targets:

981 results = self._do_run(handle, final_targets, final_fetches,

--> 982 feed_dict_string, options, run_metadata)

983 else:

984 results = []

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/client/session.py in _do_run(self, handle, target_list, fetch_list, feed_dict, options, run_metadata)

1030 if handle is None:

1031 return self._do_call(_run_fn, self._session, feed_dict, fetch_list,

-> 1032 target_list, options, run_metadata)

1033 else:

1034 return self._do_call(_prun_fn, self._session, handle, feed_dict,

~/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/client/session.py in _do_call(self, fn, *args)

1050 except KeyError:

1051 pass

-> 1052 raise type(e)(node_def, op, message)

1053

1054 def _extend_graph(self):

InvalidArgumentError: Incompatible shapes: [64] vs. [128]

[[Node: gradients_1/add_1_grad/BroadcastGradientArgs = BroadcastGradientArgs[T=DT_INT32, _class=["loc:@add_1"], _device="/job:localhost/replica:0/task:0/cpu:0"](gradients_1/add_1_grad/Shape, gradients_1/add_1_grad/Shape_1)]]

Caused by op 'gradients_1/add_1_grad/BroadcastGradientArgs', defined at:

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/runpy.py", line 85, in _run_code

exec(code, run_globals)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/ipykernel_launcher.py", line 16, in <module>

app.launch_new_instance()

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/traitlets/config/application.py", line 658, in launch_instance

app.start()

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/ipykernel/kernelapp.py", line 477, in start

ioloop.IOLoop.instance().start()

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/zmq/eventloop/ioloop.py", line 177, in start

super(ZMQIOLoop, self).start()

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tornado/ioloop.py", line 888, in start

handler_func(fd_obj, events)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tornado/stack_context.py", line 277, in null_wrapper

return fn(*args, **kwargs)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/zmq/eventloop/zmqstream.py", line 440, in _handle_events

self._handle_recv()

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/zmq/eventloop/zmqstream.py", line 472, in _handle_recv

self._run_callback(callback, msg)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/zmq/eventloop/zmqstream.py", line 414, in _run_callback

callback(*args, **kwargs)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tornado/stack_context.py", line 277, in null_wrapper

return fn(*args, **kwargs)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/ipykernel/kernelbase.py", line 283, in dispatcher

return self.dispatch_shell(stream, msg)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/ipykernel/kernelbase.py", line 235, in dispatch_shell

handler(stream, idents, msg)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/ipykernel/kernelbase.py", line 399, in execute_request

user_expressions, allow_stdin)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/ipykernel/ipkernel.py", line 196, in do_execute

res = shell.run_cell(code, store_history=store_history, silent=silent)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/ipykernel/zmqshell.py", line 533, in run_cell

return super(ZMQInteractiveShell, self).run_cell(*args, **kwargs)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/IPython/core/interactiveshell.py", line 2698, in run_cell

interactivity=interactivity, compiler=compiler, result=result)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/IPython/core/interactiveshell.py", line 2802, in run_ast_nodes

if self.run_code(code, result):

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/IPython/core/interactiveshell.py", line 2862, in run_code

exec(code_obj, self.user_global_ns, self.user_ns)

File "<ipython-input-67-de37f8a93ea6>", line 2, in <module>

grads = K.gradients(loss, model.input)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/keras/backend/tensorflow_backend.py", line 2264, in gradients

return tf.gradients(loss, variables, colocate_gradients_with_ops=True)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/ops/gradients_impl.py", line 560, in gradients

grad_scope, op, func_call, lambda: grad_fn(op, *out_grads))

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/ops/gradients_impl.py", line 368, in _MaybeCompile

return grad_fn() # Exit early

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/ops/gradients_impl.py", line 560, in <lambda>

grad_scope, op, func_call, lambda: grad_fn(op, *out_grads))

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/ops/math_grad.py", line 598, in _AddGrad

rx, ry = gen_array_ops._broadcast_gradient_args(sx, sy)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/ops/gen_array_ops.py", line 411, in _broadcast_gradient_args

name=name)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/framework/op_def_library.py", line 768, in apply_op

op_def=op_def)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/framework/ops.py", line 2336, in create_op

original_op=self._default_original_op, op_def=op_def)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/framework/ops.py", line 1228, in __init__

self._traceback = _extract_stack()

...which was originally created as op 'add_1', defined at:

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/runpy.py", line 193, in _run_module_as_main

"__main__", mod_spec)

[elided 18 identical lines from previous traceback]

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/IPython/core/interactiveshell.py", line 2862, in run_code

exec(code_obj, self.user_global_ns, self.user_ns)

File "<ipython-input-67-de37f8a93ea6>", line 1, in <module>

loss = sum(style_loss(l1[0], l2[0]) for l1,l2 in zip(layers, targs))

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/ops/math_ops.py", line 821, in binary_op_wrapper

return func(x, y, name=name)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/ops/gen_math_ops.py", line 73, in add

result = _op_def_lib.apply_op("Add", x=x, y=y, name=name)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/framework/op_def_library.py", line 768, in apply_op

op_def=op_def)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/framework/ops.py", line 2336, in create_op

original_op=self._default_original_op, op_def=op_def)

File "/Users/zaoyang/anaconda3/envs/ml_py3/lib/python3.6/site-packages/tensorflow/python/framework/ops.py", line 1228, in __init__

self._traceback = _extract_stack()

InvalidArgumentError (see above for traceback): Incompatible shapes: [64] vs. [128]

[[Node: gradients_1/add_1_grad/BroadcastGradientArgs = BroadcastGradientArgs[T=DT_INT32, _class=["loc:@add_1"], _device="/job:localhost/replica:0/task:0/cpu:0"](gradients_1/add_1_grad/Shape, gradients_1/add_1_grad/Shape_1)]]