This is a wiki post - feel free to edit to add links from the lesson or other useful info.

Lesson resources

- Lesson Videos


Is it a bad idea to use the median in your append_stats as opposed to the mean? Or to use both?

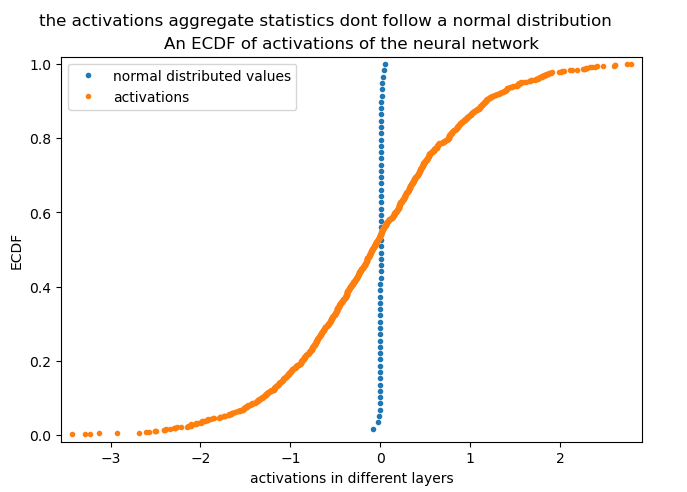

Could an Empirical Cumulative Distribution Function (ECDF) help with the visualization since we are aiming for the plots to have a normal distribution for the activations in the different layers?
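A minimal sketch of computing an ECDF over a layer's activations, assuming the activations are already available as a PyTorch tensor (for example, captured by a forward hook). The helper name `ecdf` is hypothetical, not part of miniai; for plotting, something like matplotlib's `plt.step(xs, ys, where='post')` would draw the staircase.

```python
import torch

def ecdf(acts: torch.Tensor):
    """Hypothetical helper: empirical CDF over every element of `acts`.

    Returns sorted values `xs` and `ys` with ys[i] = (i + 1) / n, so a step
    plot of (xs, ys) traces the fraction of elements <= x.
    """
    xs, _ = torch.sort(acts.detach().flatten().float())
    n = xs.numel()
    ys = torch.arange(1, n + 1, dtype=torch.float32) / n
    return xs, ys

# Example: post-ReLU activations; the ECDF jumps at 0 by the fraction of zeros
acts = torch.relu(torch.randn(4, 256))
xs, ys = ecdf(acts)
frac_zero = (acts == 0).float().mean().item()
print(f"{frac_zero:.0%} of activations are exactly zero")
```

Unlike a histogram, this needs no bin choice, and a large vertical jump at zero makes "dead" activations easy to spot.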

I am trying to create a new callback but before even starting I am stuck creating the baseline model from the HF dataset.

The code below throws an error, but I have no idea what I am doing wrong.

```python
from __future__ import annotations
import torch.nn.functional as F
import torchvision.transforms.functional as TF
from torcheval.metrics import MulticlassAccuracy
from datasets import load_dataset  # HF datasets
from fastai.vision.all import *

x,y = 'image','label'
name = "fashion_mnist"
dsd = load_dataset(name)
bs = 1024

def inplace(f):
    def _f(b):
        f(b)
        return b
    return _f

@inplace
def transformi(b): b[x] = [TF.to_tensor(o) for o in b[x]]

tds = dsd.with_transform(transformi)
dls = DataLoaders.from_dsets(tds['train'], tds['test'], bs=bs)
learner = vision_learner(
    dls=dls,
    arch=resnet18,
    pretrained=False,
    n_out = 10,
    loss_func=F.cross_entropy,
    metrics=[MulticlassAccuracy()]
)
learner.fit(1)
```

Please provide the full error and stack trace so we can help you.

I think for both of your questions it would be interesting to try and see how they go!

```
---------------------------------------------------------------------------
TypeError                                 Traceback (most recent call last)
Cell In [21], line 26
     17 dls = DataLoaders.from_dsets(tds['train'], tds['test'], bs=bs)
     18 learner = vision_learner(
     19     dls=dls,
     20     arch=resnet18,
   (...)
     24     metrics=[MulticlassAccuracy()]
     25 )
---> 26 learner.fit(1)

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:256, in Learner.fit(self, n_epoch, lr, wd, cbs, reset_opt, start_epoch)
    254 self.opt.set_hypers(lr=self.lr if lr is None else lr)
    255 self.n_epoch = n_epoch
--> 256 self._with_events(self._do_fit, 'fit', CancelFitException, self._end_cleanup)

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:193, in Learner._with_events(self, f, event_type, ex, final)
    192 def _with_events(self, f, event_type, ex, final=noop):
--> 193     try: self(f'before_{event_type}'); f()
    194     except ex: self(f'after_cancel_{event_type}')
    195     self(f'after_{event_type}'); final()

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:245, in Learner._do_fit(self)
    243 for epoch in range(self.n_epoch):
    244     self.epoch=epoch
--> 245     self._with_events(self._do_epoch, 'epoch', CancelEpochException)

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:193, in Learner._with_events(self, f, event_type, ex, final)
    192 def _with_events(self, f, event_type, ex, final=noop):
--> 193     try: self(f'before_{event_type}'); f()
    194     except ex: self(f'after_cancel_{event_type}')
    195     self(f'after_{event_type}'); final()

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:239, in Learner._do_epoch(self)
    238 def _do_epoch(self):
--> 239     self._do_epoch_train()
    240     self._do_epoch_validate()

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:231, in Learner._do_epoch_train(self)
    229 def _do_epoch_train(self):
    230     self.dl = self.dls.train
--> 231     self._with_events(self.all_batches, 'train', CancelTrainException)

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:193, in Learner._with_events(self, f, event_type, ex, final)
    192 def _with_events(self, f, event_type, ex, final=noop):
--> 193     try: self(f'before_{event_type}'); f()
    194     except ex: self(f'after_cancel_{event_type}')
    195     self(f'after_{event_type}'); final()

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:199, in Learner.all_batches(self)
    197 def all_batches(self):
    198     self.n_iter = len(self.dl)
--> 199     for o in enumerate(self.dl): self.one_batch(*o)

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:226, in Learner.one_batch(self, i, b)
    224 self.iter = i
    225 b = self._set_device(b)
--> 226 self._split(b)
    227 self._with_events(self._do_one_batch, 'batch', CancelBatchException)

File ~/.miniconda3/envs/py39/lib/python3.9/site-packages/fastai/learner.py:190, in Learner._split(self, b)
    188 def _split(self, b):
    189     i = getattr(self.dls, 'n_inp', 1 if len(b)==1 else len(b)-1)
--> 190     self.xb,self.yb = b[:i],b[i:]

TypeError: unhashable type: 'slice'
```

The problem is that you’re using the fastai Learner, not the miniai Learner. They’re not identical.

I would recommend not importing anything from fastai in this course. The goal is that we create that stuff ourselves!
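A minimal sketch of why the traceback ends in `TypeError: unhashable type: 'slice'`, assuming (as the `transformi` code suggests) that each HF dataset item is a dict. Default collation then yields a dict batch, and fastai's `Learner._split` slices the batch with `b[:i]`, which a dict does not support. The `collate_dict` helper below is hypothetical; it uses `operator.itemgetter` to turn the dict batch into an `(x, y)` tuple, the kind of batch a tuple-based learner expects.

```python
from operator import itemgetter

import torch
from torch.utils.data.dataloader import default_collate

# Two hypothetical items shaped like Fashion-MNIST rows after `transformi`
items = [{'image': torch.zeros(1, 28, 28), 'label': 3},
         {'image': torch.ones(1, 28, 28), 'label': 7}]

batch = default_collate(items)  # dict batch: {'image': (2,1,28,28) tensor, 'label': tensor([3, 7])}
try:
    batch[:1]  # roughly what `b[:i]` in Learner._split does
except (TypeError, KeyError) as e:
    # TypeError before Python 3.12; slices are hashable from 3.12, so a KeyError there instead
    print(type(e).__name__, e)

def collate_dict(keys):
    """Hypothetical helper: collate a list of dicts, then return the fields as a tuple."""
    get = itemgetter(*keys)
    def _f(b): return get(default_collate(b))
    return _f

xb, yb = collate_dict(['image', 'label'])(items)
print(xb.shape, yb)
```

A function like this can be passed as `collate_fn=` to a PyTorch `DataLoader`.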

I just pushed a callback using miniai.

It was explained at the beginning of 17…

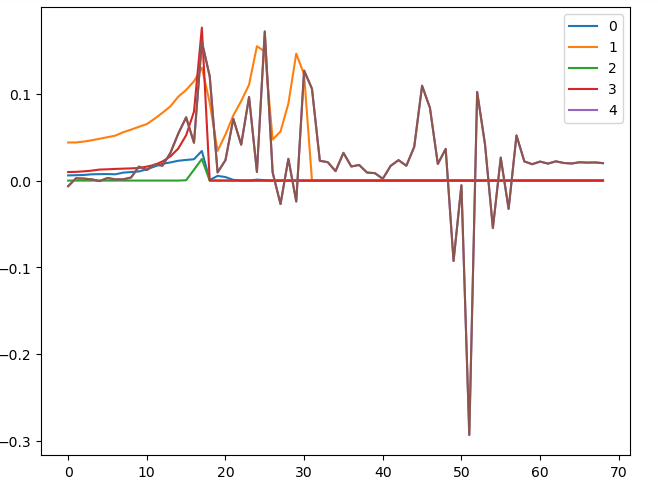

I am still toying with callbacks and hooks, and I was trying to customize the ProgressCB to plot any metric (not just loss). Why not plot the progress of activation stats, for example?

I was able to add other metrics to the plot, but I noticed that fastprogress.master_bar has the legend names ['train', 'valid'] hardcoded, which made me wonder whether I should change fastprogress and ProgressCB or use something like TensorBoard or WandB for this purpose.

They’re not hard-coded - that’s just a default. You can change them to anything you like! You can change NBMasterBar.names to change all objects created by the class, or change the names attr in an object to modify just that object.
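A pure-Python sketch of the class-attribute vs instance-attribute behaviour described in the reply. `StandInMasterBar` is a hypothetical stand-in, not fastprogress itself; with fastprogress, the equivalent would be assigning to `NBMasterBar.names` (affecting all bars) or to the `names` attribute of a single bar object.

```python
class StandInMasterBar:
    """Hypothetical stand-in: legend labels come from a class-level `names` default."""
    names = ['train', 'valid']  # class-level default shared by instances without their own `names`

    def legend(self, n_graphs):
        # Pad with empty labels if there are more graphs than names
        # (builds a new list, so the class default is never mutated)
        return list(self.names) + [''] * max(0, n_graphs - len(self.names))

a, b = StandInMasterBar(), StandInMasterBar()
b.names = ['train', 'valid', 'act mean']  # instance attribute shadows the default for `b` only
print(a.legend(3))  # ['train', 'valid', '']
print(b.legend(3))  # ['train', 'valid', 'act mean']

StandInMasterBar.names = ['loss', 'val loss']  # new default for every instance without its own `names`
print(a.legend(2))  # ['loss', 'val loss']
print(b.legend(3))  # ['train', 'valid', 'act mean']
```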

Alright. Just cleaning up the notebook with the experiments mentioned and will share what I have in the next 2 weeks.

I made some initial experiments using the median as the aggregate function. I used it because it only depends on a couple of (middle) data points. However, it’s not as immediately useful as the mean for seeing skew, so I’ll go through the following notebooks to see if I get more intuitive plots. I think the ECDF worked a bit better: I plotted two ECDFs on top of each other and immediately noticed that training was not stable, given the shape of the plot compared to the one with orange points. I think a somewhat better training loop should move towards the shape of the orange point cloud. The y axis shows the percentage of points; for this one, the majority are zeros.
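A minimal sketch of an `append_stats`-style forward hook that records the median alongside the mean and std. It assumes plain PyTorch `register_forward_hook` plus `functools.partial` rather than the miniai `Hooks` classes, and the dict-based `store` is a hypothetical choice. Note that `torch.median` returns the lower of the two middle values when the element count is even, rather than their average.

```python
from functools import partial

import torch
from torch import nn

def append_stats(store, mod, inp, outp):
    """Forward-hook body: record mean, median and std of a layer's output."""
    acts = outp.detach().float().cpu()
    store.setdefault('mean', []).append(acts.mean().item())
    store.setdefault('median', []).append(acts.median().item())  # lower middle value for even counts
    store.setdefault('std', []).append(acts.std().item())

model = nn.Sequential(nn.Linear(16, 32), nn.ReLU(), nn.Linear(32, 10))
store = {}
handle = model[1].register_forward_hook(partial(append_stats, store))  # hook the ReLU output
for _ in range(3):
    model(torch.randn(8, 16))
handle.remove()  # detach the hook when done
print({k: [round(v, 3) for v in vs] for k, vs in store.items()})
```

Keeping mean and median side by side is one way to see skew: after a ReLU, a median near zero with a clearly positive mean suggests many zeros plus a long right tail.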

notebook on github

I was practicing Context Managers and Callbacks.

For those interested in my progressive exploration, here is the Colab Notebook.

My question is the following.

Let's call it a level order, in which the highest level is fit, then epoch, and the lowest level is batch.

It concerns raising an exception in a before_{LEVEL} function, i.e. before_fit, before_epoch or before_batch.

When raising CancelFitException in a before_fit function, calling learn.fit() returns the following outputs:

- @contextmanager: RuntimeError: generator didn't yield
- class _CbCtxInner: CancelFitException:

As noted in the notebook 09_learner.ipynb, _CbCtxInner was created because contextlib.contextmanager has a surprising “feature” which doesn’t let us raise an exception before the yield.

Is the point to avoid RuntimeError: generator didn't yield and throw Cancel{LEVEL}Exception: instead? I’m not sure if raising lower-level exceptions in the “before” functions of higher levels makes sense, but I tried the following:

When raising CancelEpochException in a before_fit function, calling learn.fit() returns the following exception:

- @contextmanager and class _CbCtxInner: CancelEpochException:

There are no errors, and there are no differences between @contextmanager and class _CbCtxInner.

What class _CbCtxInner does is manage the exception differently when both the exception and the “before” function are at the same level. That means that @contextmanager returns RuntimeError: generator didn't yield and class _CbCtxInner returns Cancel{LEVEL}Exception:. Are those results what was intended with class _CbCtxInner? Thanks.
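A minimal stdlib sketch of the mechanism behind these results, assuming the generator-based manager catches `Cancel{LEVEL}Exception` in the same `try` block that wraps the "before" call and the `yield`. All names here are hypothetical stand-ins, not the notebook's code. If the "before" step raises an exception that the generator swallows, the generator finishes without ever reaching `yield`, and `contextlib.contextmanager` reports that as `RuntimeError: generator didn't yield`. A class-based manager behaves differently because an exception raised in `__enter__` simply propagates to the caller.

```python
from contextlib import contextmanager

class CancelFitException(Exception): pass
class CancelEpochException(Exception): pass

# Hypothetical "before_fit" callbacks that cancel at different levels
def before_fit_cancel_fit(): raise CancelFitException()
def before_fit_cancel_epoch(): raise CancelEpochException()

@contextmanager
def gen_ctx(before, exc):
    """Generator-based manager: runs `before`, then the `with` body at the yield."""
    try:
        before()
        yield
    except exc:
        pass  # if `before` raises `exc`, it is swallowed here and the generator ends without yielding

class ClassCtx:
    """Class-based manager: __enter__ runs `before`; __exit__ only sees body exceptions."""
    def __init__(self, before, exc): self.before, self.exc = before, exc
    def __enter__(self): self.before()  # an exception here propagates; __exit__ is not called
    def __exit__(self, typ, val, tb):
        # Returning True suppresses a matching exception raised inside the `with` body
        return typ is not None and issubclass(typ, self.exc)

def run(ctx):
    """Report what escapes the `with` statement."""
    try:
        with ctx:
            return 'body ran'
    except Exception as e:
        return f'{type(e).__name__}: {e}'

# Same level: CancelFitException raised before the fit body
print(run(gen_ctx(before_fit_cancel_fit, CancelFitException)))    # RuntimeError: generator didn't yield
print(run(ClassCtx(before_fit_cancel_fit, CancelFitException)))   # CancelFitException:
# Lower-level exception raised in before_fit: neither manager catches it
print(run(gen_ctx(before_fit_cancel_epoch, CancelFitException)))  # CancelEpochException:
print(run(ClassCtx(before_fit_cancel_epoch, CancelFitException))) # CancelEpochException:
```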

Yeah this stuff isn’t working at the moment. I think we’ll need to move away from context managers.

Hi Jeremy, should the new class with_cbs have a finally: o.callback(f'cleanup_{self.nm}') for LRFinderCB to plot the learning rate?

Oh yes will fix now! Well spotted
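A minimal sketch of a `with_cbs`-style decorator with the `finally` clause suggested above, so a `cleanup_fit` event still fires when an LR-finder-like callback cancels the fit. `TinyLearner`, `LRFinderLikeCB` and the `CANCEL` lookup are hypothetical stand-ins (the real miniai code may differ), and `cleanup_fit` only sets a flag where a real callback would plot.

```python
class CancelFitException(Exception): pass
class CancelEpochException(Exception): pass
class CancelBatchException(Exception): pass

CANCEL = {'fit': CancelFitException, 'epoch': CancelEpochException, 'batch': CancelBatchException}

class with_cbs:
    """Decorator sketch: wraps a learner method with before_/after_/cleanup_ events."""
    def __init__(self, nm): self.nm = nm
    def __call__(self, f):
        def _f(o, *args, **kwargs):
            try:
                o.callback(f'before_{self.nm}')
                f(o, *args, **kwargs)
                o.callback(f'after_{self.nm}')
            except CANCEL[self.nm]: pass               # a cancel at this level stops it quietly
            finally: o.callback(f'cleanup_{self.nm}')  # runs even when cancelled
        return _f

class TinyLearner:
    """Hypothetical minimal learner: just enough to exercise with_cbs."""
    def __init__(self, cbs): self.cbs = cbs
    def callback(self, nm):
        for cb in self.cbs:
            method = getattr(cb, nm, None)
            if method is not None: method(self)

    @with_cbs('batch')
    def one_batch(self): self.n_batches += 1

    @with_cbs('fit')
    def fit(self, n_batches):
        self.n_batches = 0
        for _ in range(n_batches): self.one_batch()

class LRFinderLikeCB:
    """Hypothetical callback: cancels the fit after 3 batches, 'plots' in cleanup_fit."""
    def __init__(self): self.plotted = False
    def after_batch(self, learn):
        if learn.n_batches >= 3: raise CancelFitException()
    def cleanup_fit(self, learn): self.plotted = True  # stand-in for plotting lrs vs losses

cb = LRFinderLikeCB()
learn = TinyLearner([cb])
learn.fit(10)
print(learn.n_batches, cb.plotted)  # 3 True
```

The `CancelFitException` raised in `after_batch` passes through the batch-level wrapper (which only catches `CancelBatchException`), skips `after_fit`, and is swallowed at the fit level, while `finally` still triggers `cleanup_fit`.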

Hi there, great lecture! I wanted to ask about LR finder results. Let’s say there is a pre-trained deep network and we figured out that a particular value of LR works best, i.e., the value X. Now, we want to train/fine-tune the net using a one-cycle scheduler. Should we set OneCycle(max_lr=X) in this case? I wonder whether the discovered LR works as expected in this case, or whether we should somehow incorporate the schedule into the LR finder to know for sure.

In other words, how well do LR-finding techniques work with various LR scheduling algorithms? The finder gives the best “point estimate” of the LR to start with, and I wonder if we can somehow find the “best trajectory”, or schedule, for a specific problem.
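One way to see what `OneCycle(max_lr=X)` implies is PyTorch's built-in `torch.optim.lr_scheduler.OneCycleLR` (used here instead of a miniai scheduler). There, `max_lr` is only the peak of the schedule: with the defaults `div_factor=25`, `pct_start=0.3` and `final_div_factor=1e4`, the LR starts at `X/25`, warms up to `X` over roughly the first 30% of steps, then anneals down to `X/25/1e4`. The value of `X` below is a hypothetical LR-finder result; this only illustrates the schedule shape and does not settle which `X` works best.

```python
import torch
from torch.optim.lr_scheduler import OneCycleLR

X = 1e-2          # hypothetical LR picked from an LR finder
total_steps = 100

model = torch.nn.Linear(10, 2)
opt = torch.optim.SGD(model.parameters(), lr=X)
# defaults: div_factor=25, pct_start=0.3, final_div_factor=1e4
sched = OneCycleLR(opt, max_lr=X, total_steps=total_steps)

lrs = []
for _ in range(total_steps):
    lrs.append(opt.param_groups[0]['lr'])
    opt.step()     # no gradients here, so SGD leaves the weights unchanged
    sched.step()

print(f"start={lrs[0]:.1e}  peak={max(lrs):.1e} at step {lrs.index(max(lrs))}  end={lrs[-1]:.1e}")
```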