In continuation of my efforts to dig deeper and understand how the fastai library works, I embarked upon the task of finding out how the learner that we create in Lesson 1 operates. As a part of the same I have created this post and along with it a jupyter notebook that explains the code line by line as well as a presentation (converted into pdf) on the same.

Let’s go ahead with the learning about the learner. So we have the code

learn = create_cnn(data,models.resnet34,metrics=error_rate)

and then all of a sudden magic happens. We are able to fit the same to our dataset and then predict classes for our cat and dog breeds using a pretrained resnet34 model. Let’s dive deeper into the code to understand how this happens.

The inputs to the create_cnn function are as follows:

• data (DataBunch)

• arch (Model)

• cut (layers to be removed)

• pretrained(True or False)

• lin_ftrs(Parameters to add additional layers)

• ps(dropout = 0.5)

• custom_head(new layer to be added to fit the number of classes based on dataset)

• split_on(where to split the model to initialize the weights)

• classification(default = True)

• **kwargs(Additional Arguments)

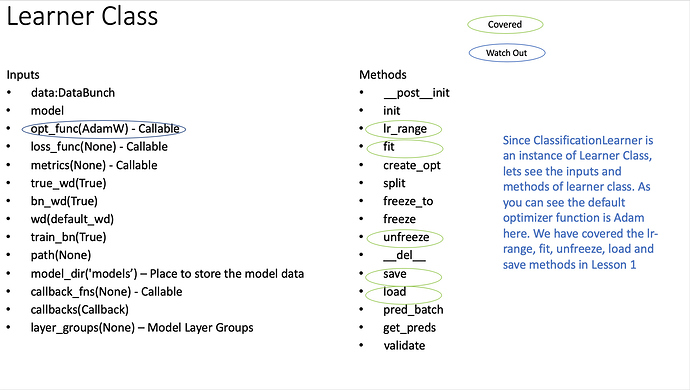

The function creates a classification learner as an intermediate step which is in itself an instance of learner class. The inputs and methods of a learner class are given below as we are using some of them in Lesson1.

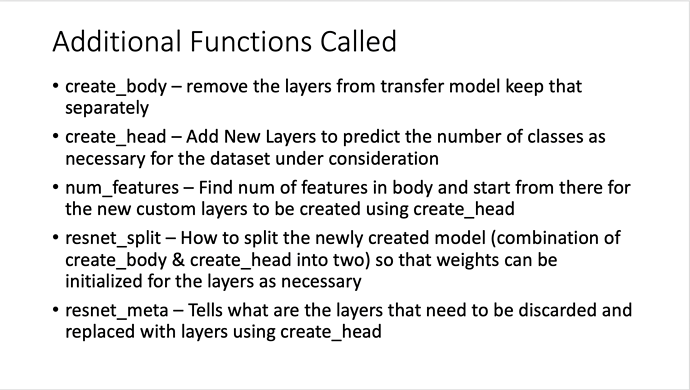

Let us now deep dive into the various things that happen when you run the code for create_cnn function. This function in turn calls various other functions that are detailed below:

The code for create_cnn is as follows:

def create_cnn(data:DataBunch, arch:Callable, cut:Union[int,Callable]=None, pretrained:bool=True,

lin_ftrs:Optional[Collection[int]]=None, ps:Floats=0.5,

custom_head:Optional[nn.Module]=None, split_on:Optional[SplitFuncOrIdxList]=None,

classification:bool=True, **kwargs:Any)->ClassificationLearner:

"Build convnet style learners."

assert classification, 'Regression CNN not implemented yet, bug us on the forums if you want this!'

meta = cnn_config(arch)

body = create_body(arch(pretrained), ifnone(cut,meta['cut']))

nf = num_features_model(body) * 2

head = custom_head or create_head(nf, data.c, lin_ftrs, ps)

model = nn.Sequential(body, head)

learn = ClassificationLearner(data, model, **kwargs)

learn.split(ifnone(split_on,meta['split']))

if pretrained: learn.freeze()

apply_init(model[1], nn.init.kaiming_normal_)

return learn

Line 1: meta = cnn_config(arch)

This code tries to get the meta data for the architecture (in our example for Lesson 1 it is Resnet34) and wants to get the ‘cut’ value.

def cnn_config(arch):

torch.backends.cudnn.benchmark = True

return model_meta.get(arch, _default_meta)

In the fast.ai library, the number of layers that needs to be cut from transfer learning models like resnet34 is defined in model_meta.

model_meta = {models.resnet18 :{_resnet_meta},

models.resnet34: {_resnet_meta},models.resnet50 :{_resnet_meta},

models.resnet101:{_resnet_meta},models.resnet152:{**_resnet_meta}}

If the data is not available in model_meta then it is taken from default_meta.

_default_meta = {‘cut’:-1, ‘split’:_default_split}

As you can see, based on the model meta has the details defined for resnet34 in a value called resnet_meta. The resnet meta says that the last two layers needs to be cut.

models.resnet34: {**_resnet_meta}

_resnet_meta = {‘cut’:-2, ‘split’:_resnet_split }

Line 2: body = create_body(arch(pretrained), ifnone(cut,meta[‘cut’]))

This line of code takes the resnet34 architecture, removes the last two layers and stores the remaining ones in a model called body. Refer the jupyter notebook and presentation for a more intuitive understanding code wise.

Line 3: nf = num_features_model(body) * 2

This takes the maximum number of features in the body model and multiplies that by 2. As you will in the code, the max features in the body model is 512 and therefore the nf value becomes 1024 since 512 is to be multiplied by 2. Refer the jupyter notebook and presentation for a more intuitive understanding code wise.

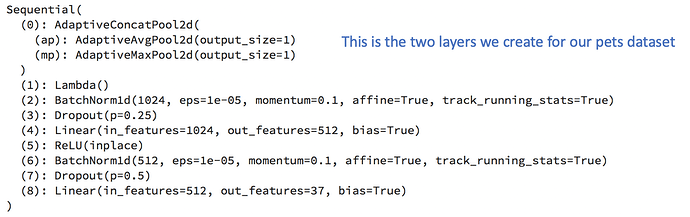

Line 4: head = custom_head or create_head(nf, data.c, lin_ftrs, ps)

This creates the last two layers that we removed from the resnet34 model. But now the ouput will be equal to the number of classes for out Lesson 1 Dataset which happens to be 37. So the two layers that we will create are

-

- AdaptiveConcatPool2D ()

-

- Flatten()

As you can see in the code above, the output from the final layer is 37 features which is equal to the number of classes for our Lesson 1 Dataset. The code applies

-

- Input (1024), Output (512), Dropout (0.25), Activation (ReLU) to Layer 1

-

- Input (512), Output (37), Dropout (0.5), Activation (None) to Layer 2

Line 5: model = nn.Sequential(body, head)

Here we make a combined model of the resnet34 architecture (minus its original last two layers) and the custom two layers we have created as per our Lesson1 Dataset.

Line 6-10: Learner Creation

learn = ClassificationLearner(data, model, **kwargs)

learn.split(ifnone(split_on,meta[‘split’]))

if pretrained: learn.freeze()

apply_init(model[1], nn.init.kaiming_normal_)

return learn

Here we take the model that we created in Line 5, add the pets dataset(data) and create a classification learner. We then create identifiable splits on the model using resnet split. The identifiable splits in this are model[0][6] and model[1]. The model is also freezed so that we don’t change the weights except for the last two layers which have been added. Since we need to initialize the weights for the last two layers using Kaiming He initializers.

From then we use this learner to fit the dataset and make predictions which is what we learn in Lesson 1. Hopefully this post helps to understand what goes on behind the scenes for us to do what we do.

Link to Jupyter Notebook:

Link to Presentaion PDF: