Hello to everyone in the fast.ai community,

I would like to share a Kaggle competition that I am co-hosting. The competition is called “Understanding Clouds from Satellite Images” and has just launched with a total of $10,000 prize money. It is an image segmentation challenge but with a twist.

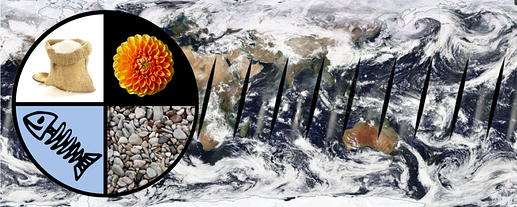

The task of the competition is to detect four different cloud patterns (Sugar, Flower, Fish and Gravel) from satellite images. (If you want to find out why we care about this sort of thing, check out the arXiv paper or the blog post below.) The first difference to typical segmentation tasks like Carvana is that the classes are subjective. Further, they do not have clear boundaries. The way the ground truth labels were created is through a crowd-sourcing activity (try it out here) where around 70 scientists sat down for a day and labeled 30,000 images. Since there is no definite truth, the challenge in this competition is to build a model that agrees with the human average.

The reason I am sharing this here is that, for me personally, this Kaggle competition is the latest step on a machine learning journey that started with fast.ai about two years ago. I was a PhD student in meteorology at the time, a little fed up with my research, and curious to learn more about this “machine learning” everyone was talking about. So after doing Andrew Ng’s original ML course on Coursera, I discovered the first version of fast.ai. I started it and immediately became hooked. I don’t think I ever learned as much in such a short amount of time as I did going through the fast.ai lectures.

In the two years since, I’ve worked on various projects that try to improve weather and climate predictions using ML, this cloud classification project among them. I love the work that I do and I would like to take this opportunity to thank @rachel and @jeremy for making this possible.

If you want to find out more about the competition and data, check out the blog I wrote and the arXiv paper.

I hope that many of you will participate in the Kaggle competition. In fact, for the arXiv paper I used the fastai library to create the segmentation model and it was pretty easy, so there are no excuses