Hello @j.laute, when I tried to reproduce your method, I found that the dimensions don’t quite match, so I am still confused.

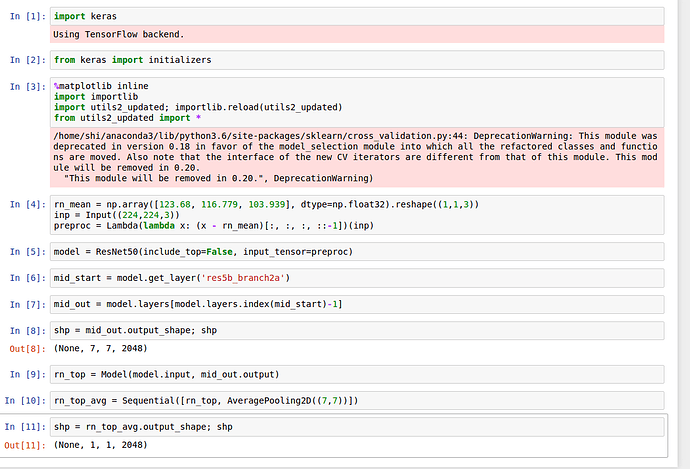

This is what I have done so far (in Keras 2 with the TensorFlow backend, though I guess that does not matter):

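```python
import numpy as np
from keras.applications.resnet50 import ResNet50
from keras.layers import AveragePooling2D, Flatten, Input, Lambda
from keras.models import Model

# ImageNet channel means (RGB), shaped to broadcast over (height, width, channels)
rn_mean = np.array([123.68, 116.779, 103.939], dtype=np.float32).reshape((1,1,3))

inp_resnet = Input((224,224,3))
# Subtract the mean, then reverse the channel axis (RGB -> BGR)
preproc = Lambda(lambda x: (x - rn_mean)[:, :, :, ::-1])(inp_resnet)
resnet_model = ResNet50(include_top=False, input_tensor=preproc)

# Take the output of the layer just before 'res5b_branch2a'
res5b_branch2a = resnet_model.get_layer('res5b_branch2a')
last_conv_layer = resnet_model.layers[resnet_model.layers.index(res5b_branch2a)-1].output

# Average-pool that feature map and flatten it to one feature vector per image
resnet_model_conv = Model(inp_resnet, Flatten()(AveragePooling2D((7,7))(last_conv_layer)))
```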

I think this is the same as what @jeremy mentioned in the video.

When I checked the summary of resnet_model_conv, it reported an output shape of (None, 2048), so I expected that passing 200 images through resnet_model_conv would give an output of shape (200, 2048). But the actual output had a shape of (800, 2048)…
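A factor of exactly four is what a 2x2 spatial grid would produce if it were folded into the row axis during flattening. The NumPy sketch below only illustrates that arithmetic. It is an assumption about where the extra rows might come from, not a confirmed diagnosis, and the array shapes are hypothetical stand-ins for the pooled feature maps.

```python
import numpy as np

n_images, channels = 200, 2048

# Expected case: pooling leaves a 1x1 spatial grid per image.
pooled_1x1 = np.zeros((n_images, 1, 1, channels), dtype=np.float32)

# Hypothetical case: pooling leaves a 2x2 grid per image
# (for example, if the images were larger than 224x224).
pooled_2x2 = np.zeros((n_images, 2, 2, channels), dtype=np.float32)

# Flattening to a fixed width of 2048 pushes any extra
# spatial positions into the row axis.
flat_1x1 = pooled_1x1.reshape(-1, channels)
flat_2x2 = pooled_2x2.reshape(-1, channels)

print(flat_1x1.shape)  # (200, 2048)
print(flat_2x2.shape)  # (800, 2048)
```

If this is what is happening, printing the shape of the image batch and of the pooled tensor before the Flatten layer should show whether the spatial size is larger than 1x1.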

So later on, when I feed this output into the fully_connected model, I have 800 rows of input but only 200 labels (one per image), so the fc model does not work…
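A quick sanity check before fitting can make this kind of mismatch fail early with a readable message. This is a minimal sketch: `check_rows` is a hypothetical helper, not part of Keras, and it assumes the features and labels are NumPy arrays with samples along the first axis.

```python
import numpy as np

def check_rows(features, labels):
    """Raise early if features and labels disagree on the number of samples."""
    n_feat, n_lab = features.shape[0], labels.shape[0]
    if n_feat != n_lab:
        raise ValueError(
            f"{n_feat} feature rows but {n_lab} labels (ratio {n_feat / n_lab:g})"
        )
    return n_feat

# Matching row counts pass and return the sample count.
n = check_rows(np.zeros((200, 2048)), np.zeros((200, 2)))

# Mismatched row counts raise a ValueError that includes the ratio.
try:
    check_rows(np.zeros((800, 2048)), np.zeros((200, 2)))
    mismatch_message = None
except ValueError as err:
    mismatch_message = str(err)
```

The ratio in the message (4 in this case) is a useful clue, since an integer ratio suggests a leftover spatial dimension rather than duplicated images.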

I am using only 200 images since I am just testing the method. I am really confused about which part is wrong. If you could give me a hint, I would really appreciate it!

Best,

Shi