Hello friends, I’ve had troubles explaining my thoughts yesterday on twitter and I think that the a longer format of a post might be useful for me to express better! Plus, maybe I can spark an interesting discussion and we (or selfishly, I ![]() ) can learn something from it!

) can learn something from it!

For context here is the twitter post from @radek (thanks for starting the discussion!) with replies from @jeremy in the comments!

I’m essentially having trouble sharing the idea that the convolutions described are wasteful …

First point : link reference in the video

Jeremy shows how for a 3x3 patch in the starting image (so 9 pixels, the image has only 1 channel), using 3x3 kernel and 8 filters you end up with 8 activations.

True, of course; the 9x8 matrix he shows is the “equivalent” matrix (wrt the 8 3x3 conv kernels) acting on the flattened 3x3 patch, that produce these 8 activations.

Jeremy says we’re just re-ordering / shuffling them, without doing any useful computation. I don’t think I can see it this way…

For fully connected layers I have the a similar intuition, you’re computing linear combinations (sorry, I should say affine transforms!) of your inputs and there you’d love to reduce the dimension, to find a lower dimensional space that contains the same info as the starting point but it’s easier to deal with.

But here we’re actually using convolutions, so the same 72 numbers are shifted around the all image, sharing information between the patches and producing correlated activation! You don’t want to go TOO SHALLOW or you’re not going to catch anything at all (because of all the correlations!). Think in the ‘limit’ if you had just one kernel that detect a particular type of edge, say this '' … you’d be losing all the info about ‘/’ and ‘|’ and ‘-’ etc etc …

Moreover there’s this idea of the universal function approximations even for fully connected layers! In the limit for an infinite amount of filters, even a single fully connected layer should be able to approximate any function! So more is generally better!

We know now that deeper is surely more efficient than wider (as we introduce more non-linearities etc… etc…) but I don’t think we can dismiss the fact that wider still helps even if a bit counter-intuitive!

Am I missing something in what Jeremy is explaining here?

Second point video (rephrased a bit later here )

Switching to Imagenet models (which have 3 channels → 27 weights in the each kernel), Jeremy sais that going from 27 to 32 is “wasting information” because we’re diluting the information we had at the start.

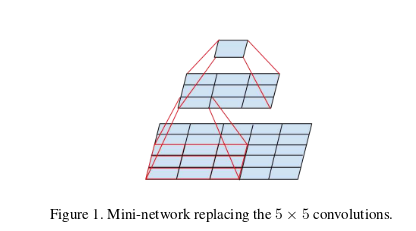

He proposes then to use a 5x5 kernel at the beginning of the net, which makes the transformation from 75 input (5x5x3) to 32 activations.

In contrast ResNet-C in the Bag of Tricks paper, borrowing from the Inception architecture, actually argues in favor of multiple small kernels at the stem.

Quoting from Szegedy et al. 2016

[…] we can use the computational and memory savings (due to the factorization the convolutions) to increase the filter-bank sizes of our network while maintaining our ability to train each model replica on a single computer

emphasis mine. So in their idea a higher number of filters would actually be better, which sort of bounce back to the “universal approximator” idea I brought out before!

The general idea in the lesson is nonetheless that we shouldn’t be using a lot of filters at the start (In He et al 2018 they actually go back to increasing the number filters more gradually, using 32 for the very first 2 convolutions and then going to 64), which I agree with, but for different reasons that has more to do with efficiency than anything else!

I think you want to gradually increase the portion of the image you’ve looked at and the number of filters/features ‘together’, because the 3x3 kernels look at a very small part of the image and (in that space, i.e. the original image) there’s only so much you can “catch” with a 3x3 kernel (edges, small corners, … ) and it’s better in my opinion to use more computations at higher layers where the receptive fields capture representations of bigger patches of the original image!

This has more to do with the “semantics” of the image classification (or representation rather) rather then the actual computations done. It has to do with the fact that we’re talking about the actual input image and not just a 1 x width x height tensor of activations of some kind! (*)

To make what I mean clearer as in what I find unsettling about the lesson, in principle you could “shift Jeremy’s argument up a few layers” and take any 3x3 patch of activations from below in the network. By his arguments it seems that when using a 3x3 convolution we ought to use max 8 filters regardless of where we are in the network, because we’d end up with a similar amount of activations as we have inputs; think of a non-bottleneck layer in ResNet, where you go for example from a X channels “image” made of activations from below to another X channel output … with padding the 2 activations will actually have the same dimensions, but I don’t think we’re doing nothing there, nor I think that a possible intermediate ReLU is doing all the work!

But again I’m not sure Jeremy is implying that at all, I’m just extrapolating here! Maybe afterall my thinking is not so different from Jeremy’s, just expressed in a different way ![]()

I find it intriguing though that the idea of using small 3x3 kernels at the beginning of the net stemmed from a computational point of view (same receptive field but lower num of parameters) but has since been shown to work well in practice. I find myself having to rethink this every time every time I do image classification because I struggle intuitively with the idea that a 3x3 kernel could actually capture anything meaningful from an image ( I mean 9 pixels!! ), especially for more structured “images” like spectrograms or piano rolls ( I like to deal with sound and music, you should definitely talk about the fastai audio at some point! ![]() ) … but they always work best! I wonder where is the magical intuition for that!

) … but they always work best! I wonder where is the magical intuition for that!

Aaaanyway, this became much longer that I thought! I’m glad I didn’t decide to make it into a twitter thread!

What are your thoughts?

Anybody can help me clarify / discuss / elaborate the points I made above? ![]()

Sidenote: Thinking back I don’t know what I was referring to when I said “compression” in my tweet; maybe to the fact that using the same 72 weights across the image you get as output correlated activations that are sharing some structure, so “compressible” rather than “compressed”. It might just have been the wrong word (Friday night) altogether! Sorry!

(*) I find really fascinating in NNs works like DeconvNets and derivations exactly because they give us clues of this “semantics” for the intermediate layers! ![]()