I am facing the same issue with the FastAI v2 library. Similar to the question previously asked about 2 years back https://forums.fast.ai/t/learn-fit-one-cycle-makes-me-run-out-of-memory-on-cpu-while-i-train-on-gpu/45428

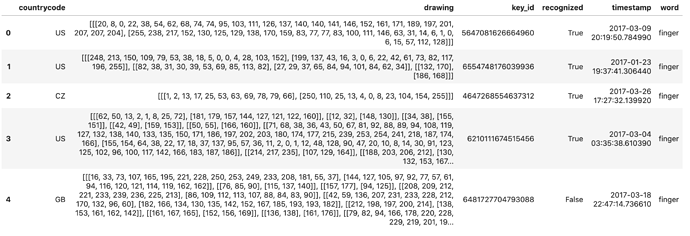

I am trying to work on Kaggle’s QuickDraw dataset. The data comes in the form of a CSV file like below:

The “drawing” column represents the drawing data points from which we can construct an image while the “word” column represents the label. Now of course, I can first create and save the images into folder and then train a Fastai model in the general imagenet way, or I could generate the images on the fly.

The drawback of the first method is, It will take forever to save the images to disk, not to mention the storage space. The better way is to generate it the images on the fly.

So I wrote the following Datablock to be able to do it.

# Function to generate image from strokes

def draw_to_img(strokes, im_size = 256):

fig, ax = plt.subplots()

for x, y in strokes:

ax.plot(x, -np.array(y), lw = 10)

ax.axis('off')

fig.canvas.draw()

A = np.array(fig.canvas.renderer._renderer) # converting them into array

plt.close('all')

plt.clf()

A = (cv2.resize(A, (im_size, im_size)) / 255.) # image resizing to uniform format

return A[:, :, :3]

class ImageDrawing(PILBase):

@classmethod

def create(cls, fns):

strokes = json.loads(fns)

img = draw_to_img(strokes, im_size=256)

img = PILImage.create((img * 255).astype(np.uint8))

return img

def show(self, ctx=None, **kwargs):

t1 = self

if not isinstance(t1, Tensor): return ctx

return show_image(t1, ctx=ctx, **kwargs)

def ImageDrawingBlock():

return TransformBlock(type_tfms=ImageDrawing.create, batch_tfms=IntToFloatTensor)

And here is the full datablock:

QuickDraw = DataBlock(

blocks=(ImageDrawingBlock, CategoryBlock),

get_x=lambda x:x[1],

get_y=lambda x:x[5].replace(' ','_'),

splitter=RandomSplitter(valid_pct=0.005),

item_tfms=Resize(128),

batch_tfms=aug_transforms()

)

data = QuickDraw.dataloaders(df, bs=16, path=path)

With this, I am able to effectively create the images on the fly and train the model. But the problem is, mid training using learn.fit_one_cycle(), The Ram memory is being slowly used up to complelely and the training just halts without any error or warning.  Its exactly as the previous question’s author has described.

Its exactly as the previous question’s author has described.

I tried the solution which seemingly worked for the previous question’s author, but it doesnt work for me. Im using the V2 Library and by this point I have spent quite some time trying to fix this, would really appreciate any help!

Thanks in advance.