Edit:

Paperspace maintains a Dockerfile for fastai on GitHub (Paperspace/fastai-docker), described as a complete Fast.AI course container for Paperspace and Gradient. If you have nvidia-docker installed, you can get started immediately with:

sudo docker run --runtime=nvidia -d -p 8888:8888 paperspace/fastai:cuda9_pytorch0.3.0

Any code you edit in the container, however, lives in the container's file system and will disappear if you delete the container without copying it out first. If you'd like to keep your code and data on your main file system, you can follow my setup. You can also modify Paperspace's Dockerfile to support this setup.
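Here is a minimal sketch of keeping work on the host. Docker's `-v` flag bind-mounts a host directory into the container, so files written there outlive the container. It assumes the image keeps its notebooks under `/notebooks` (check the image's working directory first), and `~/fastai-work`, `fastai_up`, and the `DOCKER` override are hypothetical names chosen for illustration.

```shell
# Hypothetical helper: start the Paperspace fastai image with a host directory
# bind-mounted into the container, so notebooks survive deleting the container.
# Assumptions: /notebooks is where the image keeps notebooks; ~/fastai-work is
# just an example host path.
fastai_up() {
  local host_dir="${1:-$HOME/fastai-work}"
  mkdir -p "$host_dir"
  # DOCKER defaults to "sudo docker"; override it (e.g. DOCKER=docker)
  # if your user is in the docker group.
  ${DOCKER:-sudo docker} run --runtime=nvidia -d -p 8888:8888 \
    -v "$host_dir:/notebooks" \
    paperspace/fastai:cuda9_pytorch0.3.0
}
```

For a container that already holds work you want to keep, `docker cp CONTAINER:/path/in/container ./local-dir` copies files out before you remove it.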

(End edit)

For those familiar with Docker, here’s a Dockerfile for the fastai library and the Dockerfile’s README for setup details.

Jeremy’s warning

I haven't experienced any noticeable issues, but I haven't investigated Docker's interaction with CUDA in any detail. I'm using NVIDIA's CUDA 9.0 image as a base image, which might be helping. @jeremy Could you elaborate on these issues? Maybe I'm suffering performance losses from Docker without realizing it.

The beginning of the README

2018-01-12: The Docker image works with the lesson1 notebook. It’s untested for other notebooks.

Why Docker

To keep dependencies from slowing you or anyone else down.

- If you make a mistake while sorting out a mess of dependencies, you can just delete the Docker container and start from a fresh one, instead of trying to undo the damage on your operating system.

- Once you sort out a mess of dependencies, you’ll never have to do it again. Even if you install a new operating system or move to a new computer, you can quickly recover your environment by downloading the corresponding Docker image or Dockerfile.

- Also, no one else will have to go through that mess, because you can send them the Docker image or Dockerfile.
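To make "recovering" and "sending" concrete: `docker build` recreates an image from a Dockerfile, while `docker save` and `docker load` move an already-built image around as a tar archive. This is a sketch; the `fastai` tag, the `fastai.tar` file name, and the helper names are placeholders, not part of the README.

```shell
# Hypothetical helpers; the "fastai" tag and tarball path are placeholders.
# DOCKER defaults to "sudo docker"; override it if you don't need sudo.

# Rebuild the image from a directory containing the Dockerfile.
rebuild_env() {
  ${DOCKER:-sudo docker} build -t fastai "${1:-.}"
}

# Export the built image to a tarball you can copy to another machine.
export_env() {
  ${DOCKER:-sudo docker} save -o "${1:-fastai.tar}" fastai
}

# Import a tarball produced by export_env.
import_env() {
  ${DOCKER:-sudo docker} load -i "${1:-fastai.tar}"
}
```

Shipping the Dockerfile is lighter but means rebuilding; shipping the saved image skips the build at the cost of a large file. Pushing to a registry with `docker push` is another option.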

For more information, check out this Docker tutorial for data science.

Assumptions

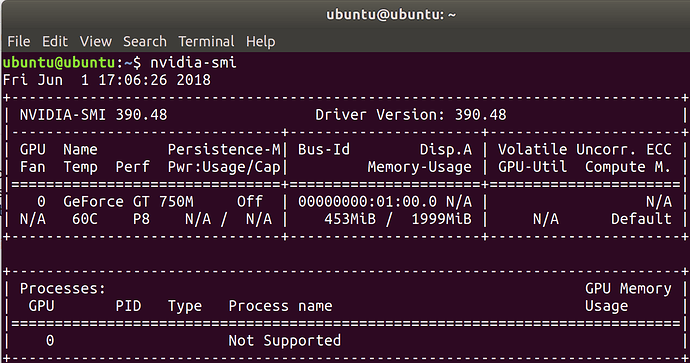

- You have a machine with an NVIDIA GPU in it.
  - If you don't, check out Paperspace or consider building your own deep learning workstation.
- Your machine is running Ubuntu.
  - nvidia-docker only works on Linux, unfortunately.
- You've installed an NVIDIA driver.
- You've installed Docker.
- You've installed nvidia-docker.
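A rough way to check the assumptions above before pulling any images. This sketch only confirms the OS and that the named commands are on your PATH; it does not prove the driver or the NVIDIA runtime actually work. `check_prereqs` is a hypothetical name.

```shell
# Hypothetical prerequisite check: Linux host plus each named command on PATH.
# Returns 0 if everything is found, 1 otherwise; problems go to stderr.
check_prereqs() {
  local missing=0
  if [ "$(uname -s)" != "Linux" ]; then
    echo "not Linux: nvidia-docker needs a Linux host" >&2
    missing=1
  fi
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `check_prereqs nvidia-smi docker nvidia-docker` (the last name assumes the `nvidia-docker` wrapper script is installed). Running `nvidia-smi` inside a CUDA container started with `--runtime=nvidia` is a stronger end-to-end check.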

How to use the Docker image

If you’ve followed the setup:

- Enter `fastai` into a terminal.
- Enter `j8` into the container's terminal that pops up as a result.

A Jupyter server will now be running in a fastai environment with all of fastai's dependencies.
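The README's actual definitions of `fastai` and `j8` aren't reproduced in this post, so the following is only a hypothetical sketch of what such shortcuts could look like. `fastai` opens an interactive shell in a GPU container with port 8888 published and a host directory mounted. `j8` starts Jupyter on port 8888, bound to all interfaces so the published port can reach it. The image tag `fastai`, the `FASTAI_DIR` default, and the `/notebooks` mount point are assumptions.

```shell
# Hypothetical host-side shortcut (not the README's actual definition):
# interactive container with port 8888 published and a host directory mounted.
fastai() {
  ${DOCKER:-sudo docker} run --runtime=nvidia -it -p 8888:8888 \
    -v "${FASTAI_DIR:-$HOME/fastai-work}:/notebooks" \
    fastai bash
}

# Hypothetical in-container shortcut: bind to 0.0.0.0 so the published port
# reaches the server; --allow-root because containers often run as root.
j8() {
  jupyter notebook --ip=0.0.0.0 --port=8888 --no-browser --allow-root
}
```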

For those who are unfamiliar with Docker but would like to learn it, @hamelsmu wrote a great Docker tutorial.

I'm running a 1080 Ti with an i7-5930K and 32 GB of RAM. I'm also using nvidia-docker, but with a prebuilt PyTorch image from NVIDIA. The 3 epochs (directly above the cyclic LR schedule image in the notebook) take 2:33 minutes. It would be interesting to see whether NVIDIA has added some "secret optimizing sauce" or whether it performs the same as the CUDA images from Docker Hub!
