If you use our Learner.distributed it’ll disable distributed validation automatically, FYI.

FYI, tests/test_callbacks_csv_logger.py fails very often on CI - inconsistent behavior:

https://dev.azure.com/fastdotai/fastai/_build/results?buildId=2625

New stuff is being worked on - your input is sought out:

I started working on GPU memory utils. You can see the initial implementation here: https://github.com/fastai/fastai/blob/master/fastai/utils/mem.py and the test suite is here: https://github.com/fastai/fastai/blob/master/tests/test_utils_mem.py. At the moment all the docs are in the code; I will write proper docs once the API is stable.

Currently, the main need behind this API is to be able to measure GPU RAM in tests to detect memory leaks (see the last test in the test module linked above). But, of course, many other uses are possible.

We no longer need nvidia-smi, and use the much faster NVML API instead.

This is all new, so feedback is welcome. The idea is to make the api easy to use w/ and w/o gpu, so less code is needed on the user side and as few try/except as possible.
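
To make the "w/ and w/o gpu" idea concrete, here is a minimal sketch of what such a helper could look like. It is not the code in fastai/utils/mem.py: the names GPUMem and gpu_mem_snapshot are made up for illustration, and it assumes the pynvml package is the NVML binding in use. The point is only that the caller never needs a try/except: without a GPU it gets zeros back.

```python
from collections import namedtuple

try:
    import pynvml  # assumption: the NVML Python bindings are installed
except ImportError:
    pynvml = None

# Hypothetical container; all values are in MB.
GPUMem = namedtuple("GPUMem", ["total", "free", "used"])


def gpu_mem_snapshot(device_index=0):
    """Return GPU memory in MB, or all zeros when NVML or a GPU is unavailable."""
    if pynvml is None:
        return GPUMem(0, 0, 0)
    try:
        pynvml.nvmlInit()
    except Exception:
        # No driver / no NVIDIA GPU: behave like a machine without a GPU.
        return GPUMem(0, 0, 0)
    try:
        handle = pynvml.nvmlDeviceGetHandleByIndex(device_index)
        info = pynvml.nvmlDeviceGetMemoryInfo(handle)  # fields are in bytes
        return GPUMem(*(int(b) >> 20 for b in (info.total, info.free, info.used)))
    except Exception:
        return GPUMem(0, 0, 0)
    finally:
        pynvml.nvmlShutdown()


print(gpu_mem_snapshot())
```

The same call works on a CPU-only CI box and on a GPU box, which is what keeps the test code short.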

Thank you.

edit: Added a test utils module: https://github.com/fastai/fastai/blob/master/tests/utils/mem.py

and documented them here: https://docs.fast.ai/dev/test.html#testing-memory-leaks

first leakage test that actually measures GPU RAM leaks: https://github.com/fastai/fastai/blob/master/tests/test_vision_train.py#L87

I’m working on a notebook that will demonstrate where fastai needs to embed gc.collect() calls to minimize GPU RAM fragmentation and allow for running a tighter ship memory-wise.

For example, currently fastai causes fragmentation and temporarily wasteful memory usage with learn.load. It must not allocate new GPU RAM until it has freed the memory used by the already loaded model. So learn.load needs to clear the old model first, call gc.collect(), and only then load the new one, thus avoiding fragmentation and a temporary memory overhead that the GPU might not be able to accommodate. The notebook will show that problem visually: currently learn.load consumes twice the model’s memory size until gc.collect() eventually runs, which could be too late for a user to be able to continue using the GPU. I added a test demonstrating the problem: https://github.com/fastai/fastai/blob/master/tests/test_vision_train.py#L87
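
The ordering I have in mind (drop the old model, collect, only then allocate) can be sketched without any GPU. Everything below is a stand-in: FakeModel, Holder and reload_model are hypothetical names, and the self-reference in FakeModel just imitates the circular references that keep an old model alive until the garbage collector runs.

```python
import gc
import weakref


class FakeModel:
    """Stand-in for a large model; holds a reference cycle on purpose."""
    live = weakref.WeakSet()  # tracks which instances are still in memory

    def __init__(self):
        self.me = self  # cycle: reference counting alone cannot free this
        FakeModel.live.add(self)


class Holder:
    """Stand-in for a learner that owns one model."""
    def __init__(self):
        self.model = FakeModel()


def reload_model(holder, factory):
    """Free the old model before creating the new one; return how many
    old models were still alive at the moment of the new allocation."""
    holder.model = None  # 1. drop the old model
    gc.collect()         # 2. break cycles now instead of "some time later"
    # With a real GPU model, torch.cuda.empty_cache() could also go here.
    still_alive = len(FakeModel.live)
    holder.model = factory()  # 3. only now allocate the new model
    return still_alive


h = Holder()
print(reload_model(h, FakeModel))
```

If steps 1 and 2 are skipped, the old and the new model coexist for a while, which is the 2x overhead described above.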

Where would be a good place to have such a notebook, so that we could have an ongoing way to visually diagnose things? For identified temp leaks/fragmentation I intend to turn these into hard tests, of course (that’s why I need all that fastai.utils.mem API).

Side note: currently fastai has lots of issues with circular references, which lead to temporary memory leaks. Python 3.4+ untangles circular references via gc.collect(), including objects with a problematic __del__, which in the past led to leaked memory that couldn’t be reclaimed. But in the case of fastai we can’t wait for gc.collect() to run at some point in the future; we must call it explicitly at strategic points. Of course, untangling circular references would be an even better approach, but I’m not sure that it’ll happen. Until then we need a practical solution. I don’t think the recent attempt at a weakref implementation made any difference: gc.collect() still reports clearing circular references.
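
To illustrate the difference between a strong back-reference and a weakref one, here is a small self-contained sketch. Node, make_strong, make_weak and survives_del are hypothetical names, and the behaviour shown relies on CPython's reference counting.

```python
import gc
import weakref


class Node:
    def __init__(self):
        self.parent = None
        self.child = None


def make_strong():
    """Parent and child point at each other: a reference cycle."""
    p, c = Node(), Node()
    p.child, c.parent = c, p
    return p


def make_weak():
    """Child only holds a weak reference to its parent: no cycle."""
    p, c = Node(), Node()
    p.child, c.parent = c, weakref.ref(p)
    return p


def survives_del(factory):
    """True if the object is still in memory right after `del`,
    i.e. it can only be reclaimed by a later gc.collect()."""
    was_enabled = gc.isenabled()
    gc.disable()  # make sure the collector does not run behind our back
    try:
        obj = factory()
        probe = weakref.ref(obj)
        del obj
        return probe() is not None
    finally:
        if was_enabled:
            gc.enable()


print(survives_del(make_strong), survives_del(make_weak))
gc.collect()  # clean up the cycle left behind by make_strong
```

The strong version lingers until a collection happens; the weak version is freed the moment the last reference goes away, which is the behaviour we would want for anything holding GPU memory.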

RAM fragmentation is a big problem, since you can have a ton of free memory, but not be able to use it.

So if I’m reading this correctly, testing for gpu mem leaks should be one of the top priorities for the test suite? (Improving/Expanding Tests).

I’d like to help. On the tests front, I’ll play around and ping you on the dev project thread. If you have a specific scenario or part of work in mind that would be helpful in the next few days, let me know, I’ll focus on that.

I wouldn’t say it’s the top priority, since the current fastai code base doesn’t have too many issues with that. It’s just not utilizing all the available memory at times, because it doesn’t manage it tightly: (1) due to cyclic references and (2) due to fragmentation, caused by GPU memory allocations made before freeing the no longer needed memory in some situations. Ideally, the code should be free of cyclic references, so that when any object is removed it is instantly reclaimed and, if a GPU is involved, its memory freed. But that’s not the case.

Thank you for the offer, @xnutsive. My plan is to add a few tests for the core functionality (create-learner-train-save-load sequence and its parts), and develop useful utils to make it easy to write them quickly. And then we can start expanding it to other parts. I know a few people are actively working on trying to get the ‘text’ classes to utilize less memory. e.g. LanguageModelLoader.

Have a look at https://github.com/fastai/fastai/blob/master/tests/test_vision_train.py#L87 (test_model_load_mem_leak) for a basic model. It’s now trivial to write leak tests: you just measure used memory before and after, though you need to understand how to measure the real used memory.
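
The before/after pattern can be sketched like this. It is not the code of test_model_load_mem_leak: used_bytes and leaked_bytes are hypothetical helpers, and tracemalloc (host memory) stands in for a GPU reading so the sketch runs anywhere. "Measuring the real used memory" here means collecting garbage before each reading and doing a warm-up run so one-time allocations are not counted as a leak.

```python
import gc
import tracemalloc


def used_bytes():
    """Currently traced memory, after forcing a garbage collection."""
    gc.collect()
    return tracemalloc.get_traced_memory()[0]


def leaked_bytes(action, repeats=3):
    """Run `action` repeatedly and return how much memory stayed allocated."""
    tracemalloc.start()
    try:
        action()  # warm-up: one-time allocations should not count as a leak
        before = used_bytes()
        for _ in range(repeats):
            action()
        return used_bytes() - before
    finally:
        tracemalloc.stop()


store = []
leaky = lambda: store.append(bytearray(1_000_000))  # keeps every buffer alive
clean = lambda: bytearray(1_000_000)                # buffer is dropped at once

print(leaked_bytes(leaky), leaked_bytes(clean))
```

A test then boils down to asserting that the returned number stays under some tolerance.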

I think the dev_nb folder in fastai_docs is probably the best place to share development notebooks. Thanks for investigating this!

Perfect. Thank you, @sgugger.

@stas do you know if we can instruct the GPU to stop keeping a mem cache so that we can see the timeline for mem allocations?

When I restart the PC and/or jupyter notebook, I can see a surge in GPU memory when starting training of a language model. I think it is when backprop starts. This would be easier to pin down without the cache hiding the amount of used memory.

do you know if we can instruct the GPU to stop keeping a mem cache so that we can see the timeline for mem allocations?

I don’t know, perhaps there is a way to compile pytorch w/ caching disabled? Ask at http://discuss.pytorch.org/ and report back your findings?

Until then, try to run torch.cuda.empty_cache() at strategic points.
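
Something along these lines, as a rough sketch: release_cached_gpu_memory is a hypothetical helper name, and the import guard is only there so the snippet also runs on a machine without pytorch or without a GPU.

```python
import gc

try:
    import torch
except ImportError:  # keep the sketch usable without pytorch installed
    torch = None


def release_cached_gpu_memory():
    """Collect garbage, return pytorch's cached blocks to the driver,
    and report the bytes still held by live tensors (0 without a GPU)."""
    gc.collect()  # free unreachable tensors first, or their blocks stay in use
    if torch is not None and torch.cuda.is_available():
        torch.cuda.empty_cache()
        return torch.cuda.memory_allocated()
    return 0


print(release_cached_gpu_memory())
```

Calling it before and after the step you are investigating makes the numbers reported by external tools much closer to what is really in use.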

And you might find this cell-by-cell gpu memory logger that I have just released useful: GitHub - stas00/ipygpulogger: GPU Logger for jupyter/ipython (memory usage, execution time) - it’s totally new so I’m still tweaking the interface (and feedback is welcome!). But the main reason I mentioned it to you is that it runs empty_cache() automatically for you before and after each cell is run to measure the gpu memory usage correctly. (and gc.collect() but that can be turned off)

I have also just discovered this pytorch CUDA memory profiler, which perhaps can be useful to you. A CUDA memory profiler for pytorch · GitHub

I have just started a new thread GPU Optimizations Central - let’s have that discussion over there and use that thread for compiling all the knowledge we collectively discover.

When I restart the PC and/or jupyter notebook, I can see a surge in GPU memory when starting training of a language model. I think it is when backprop starts.

It could be this too: GitHub - stas00/ipygpulogger: GPU Logger for jupyter/ipython (memory usage, execution time) - if it’s the first 0.5GB then it certainly is the case.

thx

I have installed it and removed a ton of statements to measure used memory from my notebook/.py files.

Good idea to preload pytorch:

New: we can now directly export the Learner which avoids having to redefine the model at inference time (it’s saved with the data). You can check the inference tutorial for all the details, but you basically say learn.export() when you are ready, then learn = load_learner(path) when you want to load your inference learner.

Breaking change: the adult_sample dataset has been updated, you need to manually destroy the one you have to trigger a download.

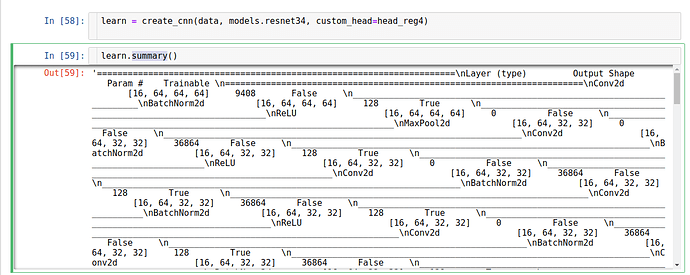

@jeremy changed it not to print() the output but to return the data. And ipython/jupyter shows the repr of a returned string, so escape characters such as \n are not interpreted; you have to run the data through print().

In [1]: y = "\n".join(["a","b"])

In [2]: y

Out[2]: 'a\nb'

In [3]: print(y)

a

b

So basically you now have to do this:

print(learn.summary())

The function could probably be smart and detect if it’s in an ipython shell or not and print() instead of returning data then, but perhaps it’d be an inconsistent behavior.

Currently, fastai has an inconsistent mix of some functions returning data, others printing.

My guess is that the change was done to better support the use of fastai outside of jupyter environment, where “unsolicited” printing is not a correct function behavior.

Of course, the other solution is to have a set of ipython wrappers that do the printing, e.g.:

def summary_p(self): print(self.summary())

and then you use the wrapper:

learn.summary_p()

or something like that.
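
Spelled out as a runnable sketch, with a stand-in class instead of a real Learner (FakeLearner and in_ipython are hypothetical; the get_ipython check is the usual idiom for detecting an ipython/jupyter session, not something fastai provides):

```python
class FakeLearner:
    """Stand-in for a Learner whose summary() returns a multi-line string."""

    def summary(self):
        return "\n".join(["Layer   Params", "conv1   1792"])

    def summary_p(self):
        # Wrapper for interactive use: print instead of returning the data.
        print(self.summary())


def in_ipython():
    """Best-effort check for an ipython/jupyter session."""
    try:
        get_ipython  # this name only exists inside ipython
    except NameError:
        return False
    return True


learn = FakeLearner()
print(repr(learn.summary()))  # what a notebook cell shows for a returned string
learn.summary_p()             # what the user actually wants to see
```

Keeping summary() as a pure function and putting the printing in a separate wrapper avoids the inconsistent "sometimes prints, sometimes returns" behaviour.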

Is SWA already implemented in fast.ai v1? I found nothing in the docs.

Not yet. @wdhorton said he was working on it, I believe.

Ok thank you. @wdhorton I am available if you need help.

Just finished:

- LanguageModelLoader (used behind the scenes by TextLMDataBunch) has now been replaced by LanguageModelPreLoader, which isn’t a DataLoader but an intermediate between the dataset and a pytorch DataLoader. It’s a Dataset and a Callback at the same time, and is responsible for reading a portion of the stream created by all the texts concatenated.
- Which means we can now have pre-loaders that are Callbacks. The only events we can call are on_epoch_begin or on_epoch_end, since the multiprocessing in the pytorch DataLoader (with num_workers>=1) makes a copy of the underlying dataset that is only synchronized at the end of the iteration.
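
For readers who want a mental model of "a Dataset and a Callback at the same time", here is a toy sketch in plain Python. It is not the fastai implementation: StreamPreLoader is a hypothetical name, it uses lists instead of arrays, and the per-epoch reshuffle is only an assumption about what the epoch hook would be used for.

```python
import random


class StreamPreLoader:
    """Toy dataset over one concatenated token stream, with an epoch hook."""

    def __init__(self, texts, bptt, seed=0):
        self.texts, self.bptt = texts, bptt
        self.rng = random.Random(seed)
        self.on_epoch_begin()

    # Callback side: invoked once per epoch, before any item is read.
    def on_epoch_begin(self):
        order = list(range(len(self.texts)))
        self.rng.shuffle(order)
        # Concatenate all texts, in the new order, into one stream.
        self.stream = [tok for i in order for tok in self.texts[i]]

    # Dataset side: item k is a window of bptt tokens and its shifted target.
    def __len__(self):
        return (len(self.stream) - 1) // self.bptt

    def __getitem__(self, k):
        if not 0 <= k < len(self):
            raise IndexError(k)
        start = k * self.bptt
        x = self.stream[start:start + self.bptt]
        y = self.stream[start + 1:start + self.bptt + 1]
        return x, y


ds = StreamPreLoader([[1, 2, 3], [4, 5], [6, 7, 8, 9]], bptt=4)
print(len(ds), ds[0])
```

Because the object exposes __len__ and __getitem__, a regular pytorch DataLoader could wrap it, while the training loop calls on_epoch_begin on the same object.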

this is really nice and memory usage is down. THX

Here is a small suggestion for def __getitem__(self, k:int): inserting the line below just before the comment “#Returning the right portion” will allow users to provide the token ids in a format that matches the vocab, e.g. np.uint16 for a vocab of size 64k.

if concat.dtype != np.int64: concat = concat.astype(np.int64)

Will add.

Also note I removed the varying bptt because it doesn’t add anything now that we shuffle the texts at each batch (tested on wikitext-2).